EVGA GeForce GTX 680 Classified Review: Pushing GTX 680 To Its Peak

by Ryan Smith on July 20, 2012 12:00 PM EST

Crysis, Metro, DiRT 3, Shogun 2, & Batman

Since the GTX 680 Classified doesn’t bring anything new to the table architecturally, we’ll keep our commentary on its stock performance brief. At stock it’s much like any other overclocked GTX 680 (factory or otherwise), with the only real room for differentiation being the greater amount of RAM and the higher power target. In practice the greater amount of RAM doesn’t make much of a difference in our single-GPU tests, as that much RAM is far more beneficial for the ultra-high resolutions of multi-monitor gaming, at which point you’re going to need a second card to provide the necessary horsepower.

The higher default power target on the other hand is quite interesting. The GTX 680 Classified will hit its top boost bin almost all of the time thanks to the generous power target, something the reference GTX 680 can have trouble with even at stock. So although reference cards can be overclocked to this level, it doesn’t necessarily mean they’ll match the GTX 680 Classified’s boost clocks in that state.

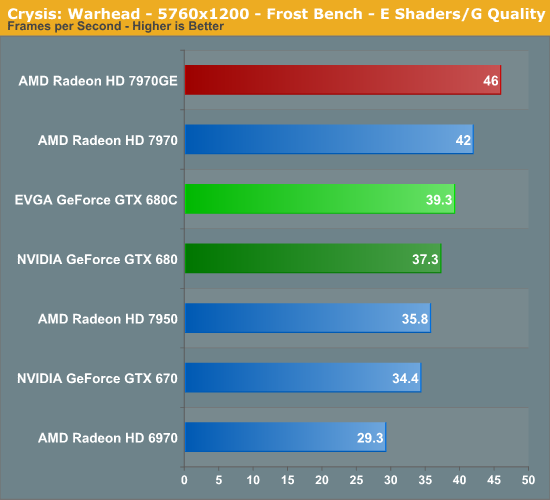

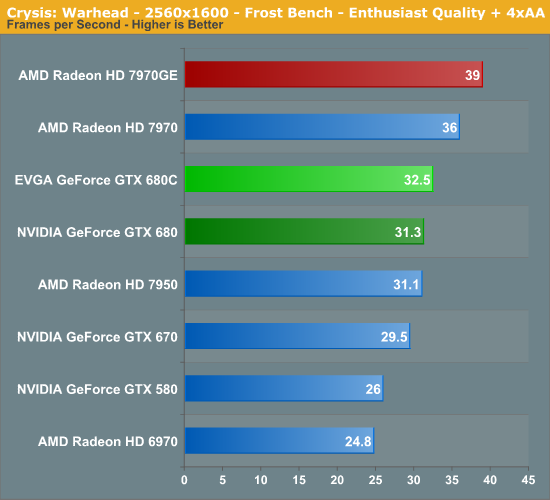

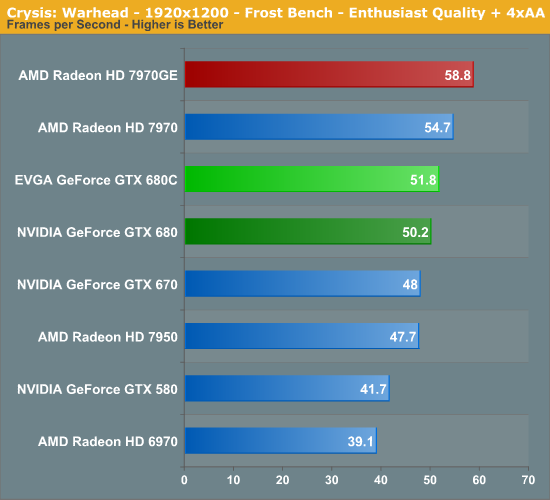

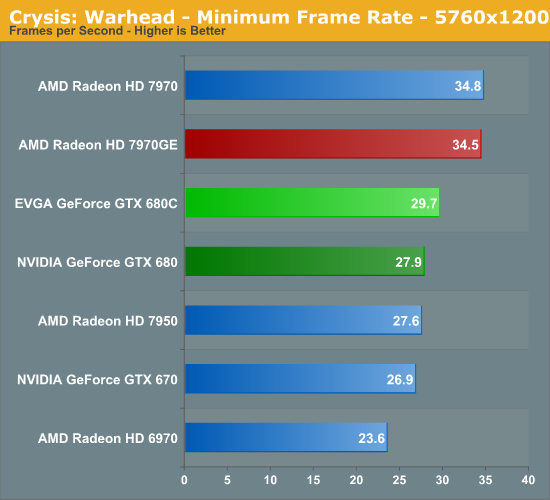

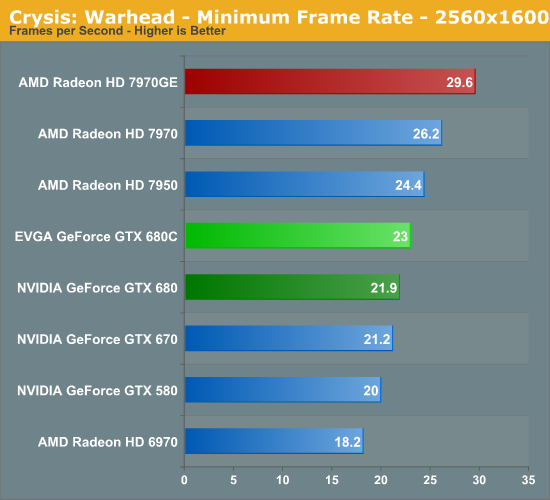

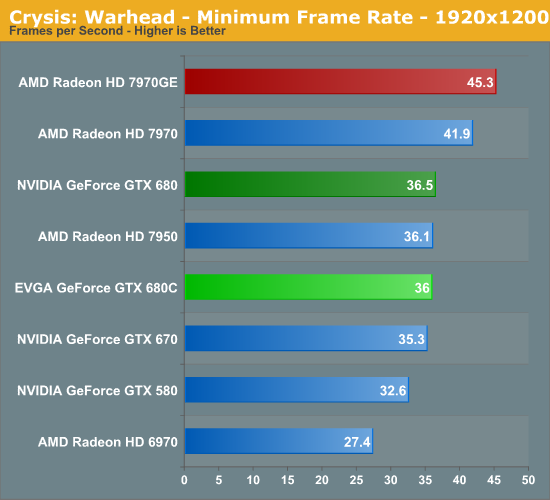

Starting off as always in Crysis, there’s actually not much to see. Since the reference GTX 680 is already memory bandwidth limited here and since the GTX 680 Classified doesn’t have a memory overclock, the factory core overclock does very little for its performance here.

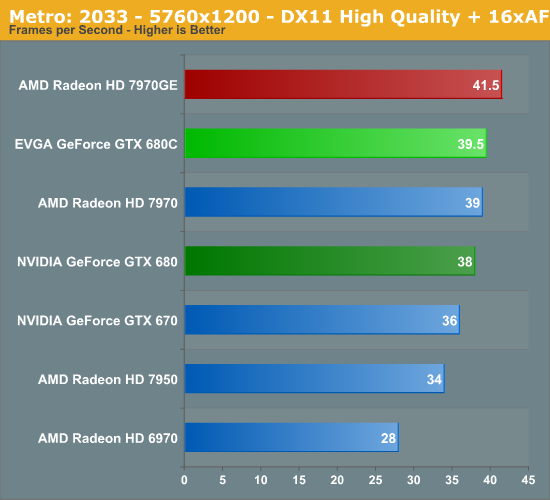

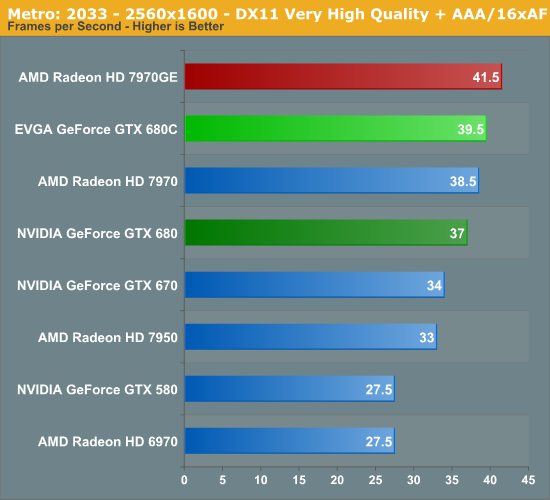

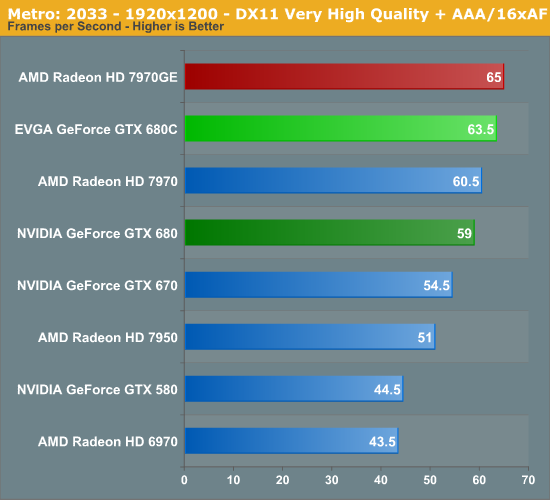

Metro isn’t a title that we’ve previously considered to be memory bandwidth limited, but given that it is as GPU-crushing as Crysis (and that the 7970GE does so well here), maybe we should take that into consideration. The GTX 680C picks up 7% here at 2560, which is decent, but it’s less than what the factory overclock can provide when the GPU can fully stretch its legs.

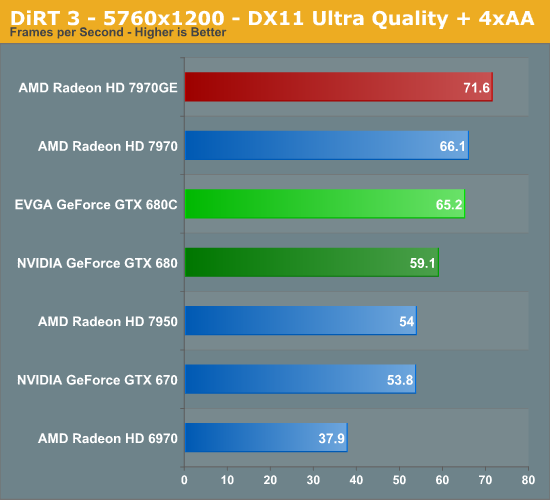

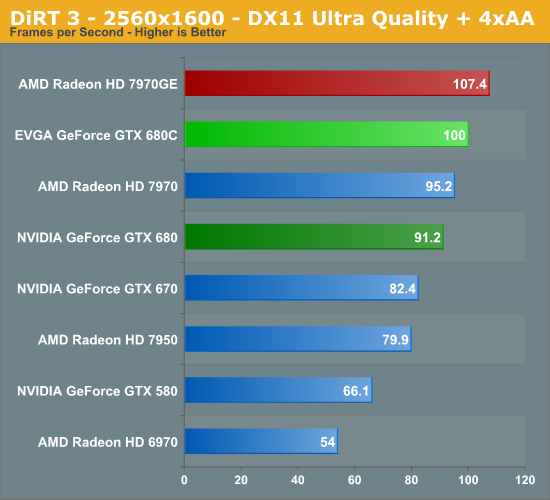

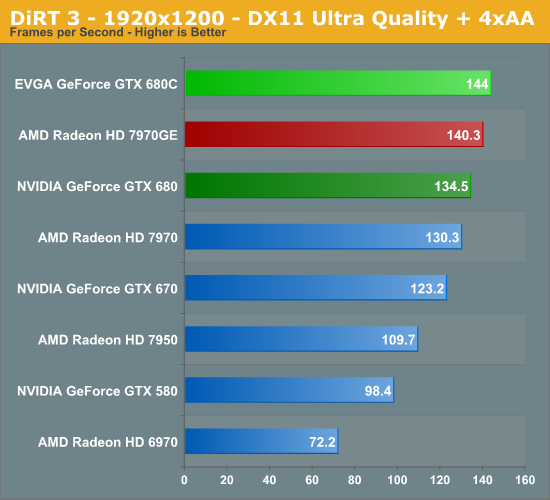

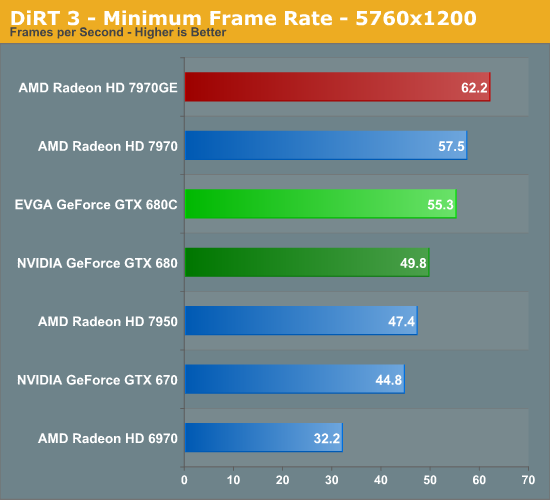

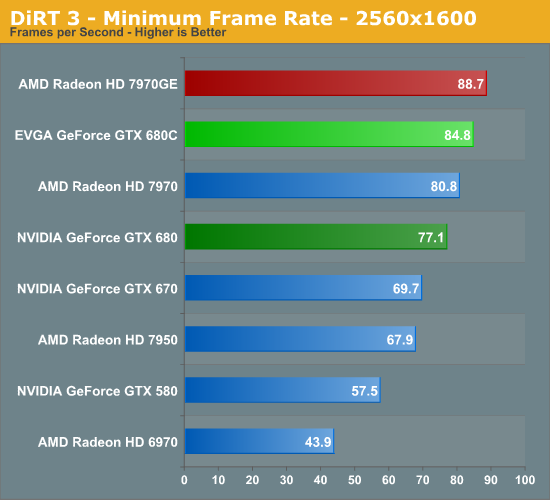

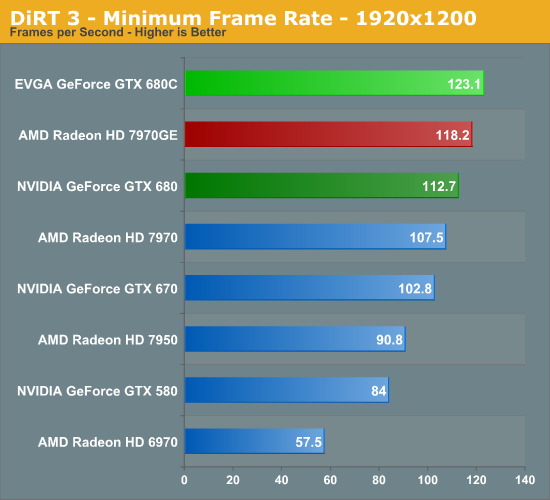

Now when the GTX 680 Classified can stretch its legs in a GPU-bound situation, we see the full impact of that factory overclock. With DiRT 3 it picks up the full 10% performance improvement the factory overclock is capable of providing.

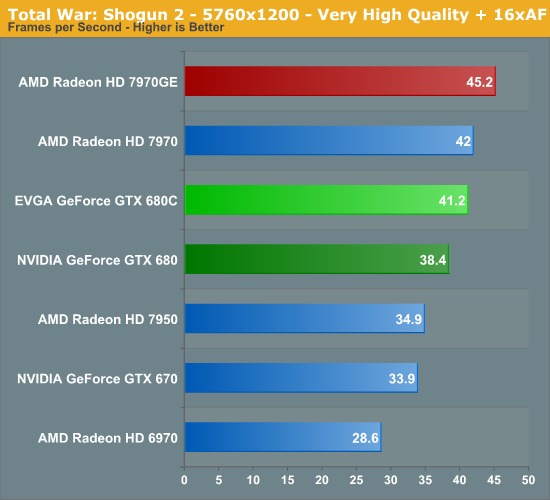

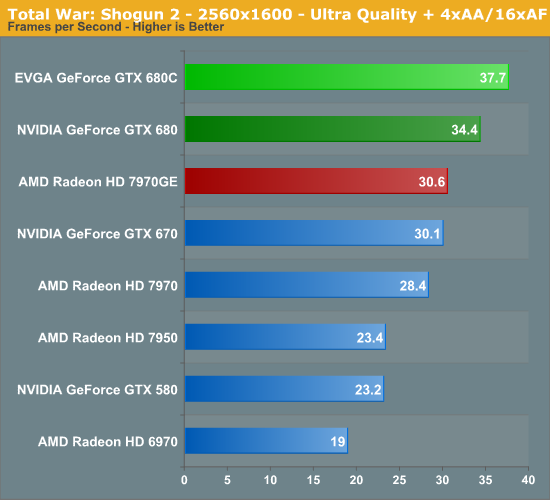

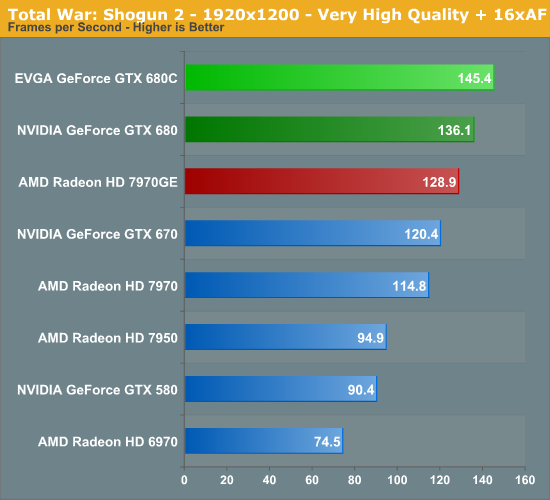

Things also look good with Shogun 2 at 2560, with another 10% gain. On the other hand 5760 only picks up 7%.

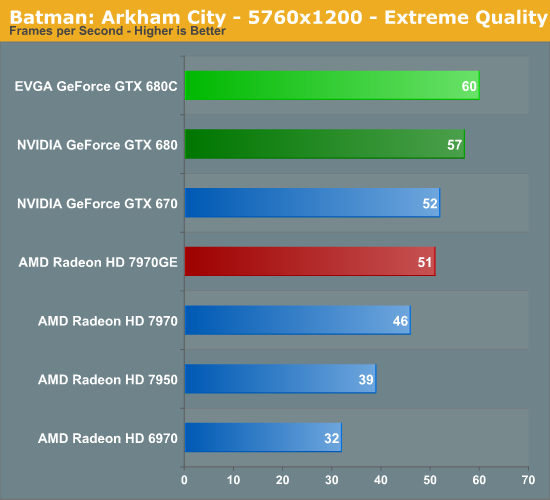

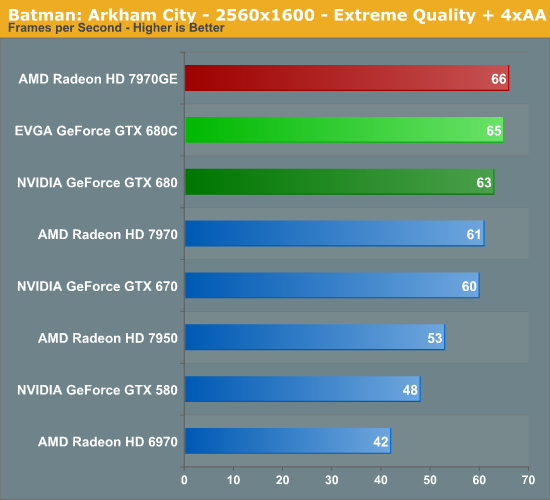

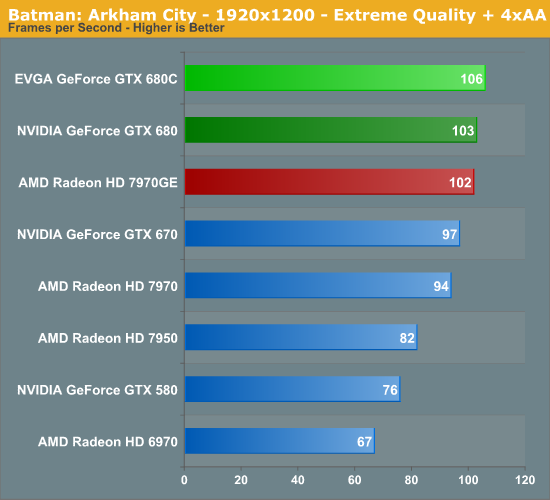

Batman on the other hand doesn’t do the GTX 680 Classified any favors, which is a bit odd. A gain of 3-5% just isn’t what you expect here, since there’s no real evidence that the game is CPU or memory bandwidth bottlenecked.
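To put the per-game gains quoted above side by side, here is a minimal Python sketch that expresses each one as a fraction of the roughly 10% the factory overclock can deliver. The percentages are the ones quoted in the text; the function names and the 0.9 cutoff used to call a result "GPU-bound" are illustrative assumptions, not part of the review's methodology. Crysis is left out because no figure is quoted for it.

```python
# Gains over a reference GTX 680, in percent, as quoted in the text above.
# Batman is quoted as a 3-5% range, so both ends are listed.
FACTORY_OC_GAIN = 10.0  # "the full 10%" the factory overclock can provide

quoted_gains = {
    "Metro @ 2560": 7.0,
    "DiRT 3": 10.0,
    "Shogun 2 @ 2560": 10.0,
    "Shogun 2 @ 5760": 7.0,
    "Batman (low end)": 3.0,
    "Batman (high end)": 5.0,
}


def scaling_efficiency(gain_pct, oc_pct=FACTORY_OC_GAIN):
    """Fraction of the overclock that shows up as extra frame rate."""
    return gain_pct / oc_pct


def classify(gain_pct, oc_pct=FACTORY_OC_GAIN, cutoff=0.9):
    """Label a result; the cutoff is an arbitrary illustrative threshold."""
    if scaling_efficiency(gain_pct, oc_pct) >= cutoff:
        return "GPU-bound"
    return "limited elsewhere"


for title, gain in quoted_gains.items():
    print(f"{title:20s} {gain:4.1f}%  "
          f"{scaling_efficiency(gain):4.0%}  {classify(gain)}")
```

Read this way, DiRT 3 and Shogun 2 at 2560 convert the whole overclock into frame rate, while Metro, Shogun 2 at 5760, and Batman leave part of it unused. The numbers alone do not say which other limit is responsible.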

75 Comments

HisDivineOrder - Tuesday, July 24, 2012 - link

To be fair, AMD started the gouging with the 7970 series, its moderate boost over the 580 series, and its modest mark-up over that line.

When nVidia saw what AMD had launched, they must have laughed and rubbed their hands together with glee. Because their mainstream part was beating it and it cost them a LOT less to make. So they COULD have passed those savings onto the customer and launched at nominal pricing, pressuring AMD with extremely low prices that AMD could probably not afford to match...

...or they could join with the gouging. They joined with the gouging. They knocked the price down by $50 and AMD's pricing (besides the 78xx series) has been in a freefall ever since.

CeriseCogburn - Tuesday, July 24, 2012 - link

You people are using way too much tin foil, it's already impinged bloodflow to the brain from its weight crimping that toothpick neck... at least the amd housefire heatrays won't further cook the egg under said foil hat.

Since nVidia just barely has recently gotten a few 680's and 670's in stock on the shelves, how pray tell, would they produce a gigantic 7 billion transistor chip that it appears no forge, even the one in Asgard, could possibly currently have produced on time for any launch, up to and perhaps past even today ?

See that's what I find so interesting. Forget reality, the Charlie D semi accurate brain fart smell is a fine delicacy for so many, that they will never stop inhaling.

Wow.

I'll ask again - at what price exactly was the 680 "midrange" chip supposed to land at ? Recall the GTX580 was still $499+ when amd launched - let's just say since nVidia was holding back according to the 20lbs of tinfoil you guys have lofted, they could have released GTX680 midrange when their GTX580 was still $499+ - right when AMD launched... so what price exactly was GTX680 supposed to be, and where would that put the rest of the lineups on down the price alley ?

Has one of you wanderers EVER contemplated that ? Where are you going to put the card lineups with GTX680 at the $250-$299 midrange in January ? Heck ... even right now, you absolute geniuses ?

natsume - Sunday, July 22, 2012 - link

For that price, I prefer rather the Sapphire HD 7970 Toxic 6GB @ 1200Mhz

CeriseCogburn - Tuesday, July 24, 2012 - link

Currently unavailable it appears.

And amd fan boys have told us 7970 overclocks so well too (1300mhz they claim) so who cares.

Toxic starts at 1100, and no amd fan boy would admit the run of the mill 7970 can't do that out of the box, as it's all we've heard now since January.

It's nice seeing 6GB on a card though that cannot use even 3GB and maintain a playable frame rate at any resolution or settings, including 100+ Skyrim mods at once attempts.

CeriseCogburn - Tuesday, July 24, 2012 - link

Sad how it loses so often to a reference GTX680 in 1920 and at triple monitor resolutions.

http://www.overclockersclub.com/reviews/sapphire__...

Sabresiberian - Sunday, July 22, 2012 - link

One good reason not to have it is the fact that software overclocking can sometimes be rather wonky. I can see Nvidia erring on the cautious side to protect their customers from untidy programs.

EVGA is a company I want to love, but they are, in my opinion, one that "almost" goes the extra mile. This card is a good example, I think. Their customers expressed a desire for unlocked voltage and 4GB cards (or "more than 2GB"), and they made it for us.

But they leave the little things out. Where do you go to find out what those little letters mean on the EVBot display? I'll tell you where I went - to this article. I looked in the EVBot manual, looked up the manual online to see if it was updated - it wasn't; scoured the website and forums, and nowhere could I find a breakdown of what the list of voltage settings meant from EVGA!

I'm not regretting my purchase of this card; it is a very nice piece of hardware. It just doesn't have the 100% commitment to it a piece of hardware like this should.

But then, EVGA, in my opinion, does at least as well as anybody. MSI is an excellent company, but they released their Lightning that was supposed to be over-voltable without a way to do it. Asus makes some of the best stuff in the business - if their manufacturing doesn't bungle the job and leave film that needs to be removed between heatsinks and what they should be attached to.

Cards like this are necessarily problematic. To make them worth their money in a strict results sense, EVGA would have to guarantee they overclock to something like 1400MHz. If they bin to that strict of a standard, why don't they just factory overclock to 1400 to begin with?

And, what's going to be the cost of a chip guaranteed to overclock that high? I don't know; I don't know what EVGA's current standards are for a "binning for the Classified" pass, but my guess is it would drive the price up, so that cost value target will be missed again.

No, you can judge these cards strictly by value for yourself, that's quite a reasonable thing to do, but to be fair you must understand that some people are interested in getting value from something other than better frame rates in the games they are playing. For this card, that means the hours spent overclocking - not just the results, the results are almost beside the point, but the time spent itself. In the OC world that often means people will be disappointed in the final results, and it's too bad companies can't guarantee better success - but if they could, really what would be the point for the hard-core overclocker? They would be running a fixed race, and for people like that it would make the race not worth running.

These cards aren't meant for the general-population overclocker that wants a guaranteed more "bang for the buck" out of his purchase. Great OCing CPUs like Nehalem and Sandy Bridge bring a lot of people into the overclocking world that expect to get great results easily, that don't understand the game it is for those who are actually responsible for discovering those great overclocking items, and that kind of person talks down a card like this.

Bottom line - if you want a GTX 680 with a guaranteed value equivalent to a stock card, then don't buy this card! It's no more meant for you than a Mack truck is meant to be a family car. However, if you are a serious overclocker that likes to tinker and wants the best starting point, this may be exactly what you want.

;)

Oxford Guy - Sunday, July 22, 2012 - link

Nvidia wasn't happy with the partners' designs, eh? Oh please. We all remember the GTX 480. That was Nvidia's doing, including the reference card and cooler. Their partners, the ones who didn't use the awful reference design, did Nvidia a favor by putting three fans on it and such.

Then there's the lack of mention of Big Kepler on the first page of this review, even though it's very important for framing since this card is being presented as "monstrous". It's not so impressive when compared to Big Kepler.

And there's the lack of mention that the regular 680's cooler doesn't use a vapor chamber like the previous generation card (580). That's not the 680 being a "jack of all trades and a master of none". That's Nvidia making an inferior cooler in comparison with the previous generation.

CeriseCogburn - Tuesday, July 24, 2012 - link

I, for one, find the 3rd to the last paragraph of the 1st review page a sad joke.

Let's take this sentence for instance, and keep in mind the nVidia reference cooler does everything better than the amd reference:

" Even just replacing the cooler while maintaining the reference board – what we call a semi-custom card – can have a big impact on noise, temperatures, and can improve overclocking. "

One wonders why amd epic failure in comparison never gets that kind of treatment.

If nVidia doesn't find that sentence I mentioned a ridiculous insult, I'd be surprised, because just before that, they got treated to this one: " NVIDIA’s reference design is a jack of all trades but master of none "

I guess I wouldn't mind one bit if the statements were accompanied by flat out remarks that despite the attitude presented, amd's mock up is a freaking embarrassingly hot and loud disaster in every metric of comparison...

I do wonder where all these people store all their mind bending twisted hate for nVidia, I really do.

The 480 cooler was awesome because one could simply remove the gpu sink and still have a metal covered front of the pcb card and thus a better gpu HS would solve OC limits, which were already 10-15% faster than 5870 at stock and gaining more from OC than the 5870.

Speaking of that, we're supposed to still love the 5870, this site claimed the 5850 that lost to 480 and 470 was the best card to buy, and to this day our amd fans proclaim the 5870 a king, compare it to their new best bang 6870 and 6850 that were derided for lack of performance when they came out, and now 6870 CF is some wonderkin for the fan boys.

I'm pretty sick of it. nVidia spanked the 5000 series with their 400 series, then slammed the GTX460 down their throats to boot - the card all amd fans never mention now - pretending it never existed and still doesn't exist...

It's amazing to me. All the blabbing stupid praise about amd cards and either don't mention nVidia cards or just cut them down and attack, since amd always loses, that must be why.

Oxford Guy - Tuesday, July 24, 2012 - link

Nvidia cheaped out and didn't use a vapor chamber for the 680 as it did with the 580. AMD is irrelevant to that fact.

The GF100 has far worse performance per watt, according to techpowerup's calculations, than anything AMD released in 40nm. The 480 was very hot and very loud, regardless of whether AMD even existed in the market.

AMD may have a history of using loud inefficient cooling, but my beef with the article is that Nvidia developed a more efficient cooler (580's vapor chamber) and then didn't bother to use it for the 680, probably to save a little money.

CeriseCogburn - Wednesday, July 25, 2012 - link

The 680 is cooler and quieter and higher performing all at the same time than anything we've seen in a long time, hence "your beef" is a big pile of STUPID dung, and you should know it, but of course, idiocy never ends here with people like you.

Let me put it another way for the faux educated OWS corporate "profit watcher" jack***: " It DOESN'T NEED A VAPOR CHAMBER YOU M*R*N ! "

Hopefully that penetrates the inbred "Oxford" stupidity.

Thanks so much for being such a doof. I really appreciate it. I love encountering complete stupidity and utter idiocy all the time.