AMD Radeon HD 7970 GHz Edition Review: Battling For The Performance Crown

by Ryan Smith on June 22, 2012 12:01 AM EST

Posted in: GPUs, AMD, GCN, Radeon HD 7000

Crysis: Warhead

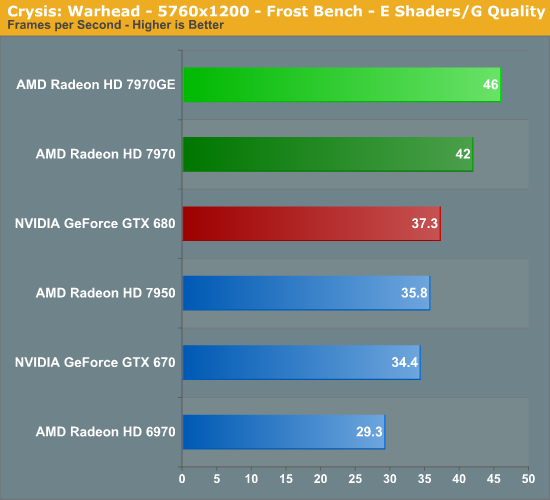

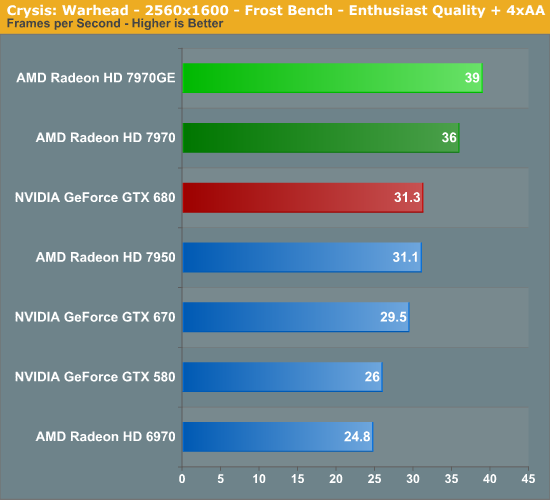

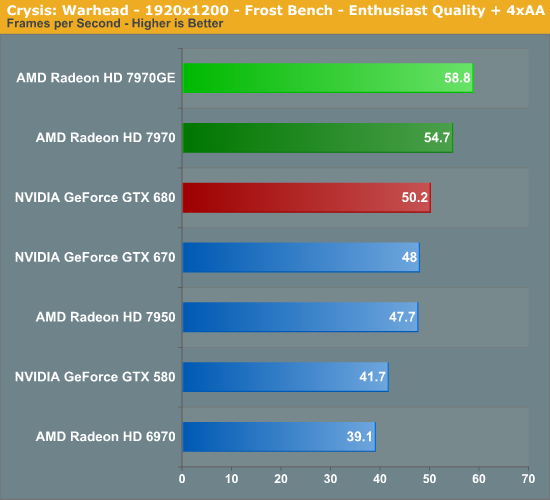

Kicking things off as always is Crysis: Warhead. It’s no longer the toughest game in our benchmark suite, but it’s still a technically complex game that has proven to be a very consistent benchmark. Thus even four years since the release of the original Crysis, “but can it run Crysis?” is still an important question, and the answer continues to be “no.” While we’re closer than ever, full Enthusiast settings at 60fps are still beyond the grasp of a single-GPU card.

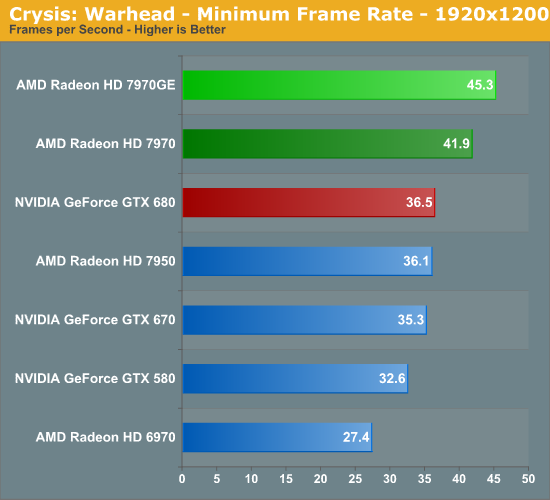

Since the launch of the GTX 680 it’s been clear that Crysis is a game that favors AMD’s products, and nowhere is this clearer than with the 7970GE. AMD was already handily beating the GTX 680 here, most likely due to the GTX 680’s more limited memory bandwidth, so the faster 7970GE widens that gap even further. The 7970GE is a full 25% faster than the GTX 680 here at 2560 and is extremely close to hitting 60fps at 1920, which given Crysis’s graphically demanding nature is quite incredible, and for all practical purposes puts the 7970GE in its own category. Obviously this is one of AMD’s best games, but it’s solid proof that the 7970GE can really trounce the GTX 680 in the right situation.

As for the 7970GE versus the 7970, this is a much more straightforward comparison. We aren’t seeing the full extent of the 7970GE’s clockspeed advantage over the 7970 here, but the 7970GE is still at the lower bounds of its theoretical performance advantage over its lower clocked sibling with a gain of 8% at 2560. The 7970GE is priced some 16% higher than the 7970 so the performance gains aren’t going to keep pace with the price increases, but this is nothing new for flagship cards.
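To put that trade-off in rough numbers, the short sketch below compares performance per dollar using only the two percentages quoted above (an 8% gain at 2560 for a 16% higher price). The function name `relative_value` is our own illustrative helper, and the result applies only to this one game and resolution.

```python
def relative_value(perf_gain: float, price_increase: float) -> float:
    """Performance per dollar of the pricier card relative to the cheaper one.

    Both arguments are fractions, e.g. 0.08 for an 8% gain.
    A result below 1.0 means the pricier card delivers less performance per dollar.
    """
    return (1.0 + perf_gain) / (1.0 + price_increase)


# Figures taken from the paragraph above: 8% faster at 2560, priced ~16% higher.
ratio = relative_value(perf_gain=0.08, price_increase=0.16)
print(f"{ratio:.3f}")  # prints 0.931
```

In other words, in Crysis at 2560 the 7970GE delivers roughly 7% less performance per dollar than the 7970, which is the usual premium for a flagship part.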

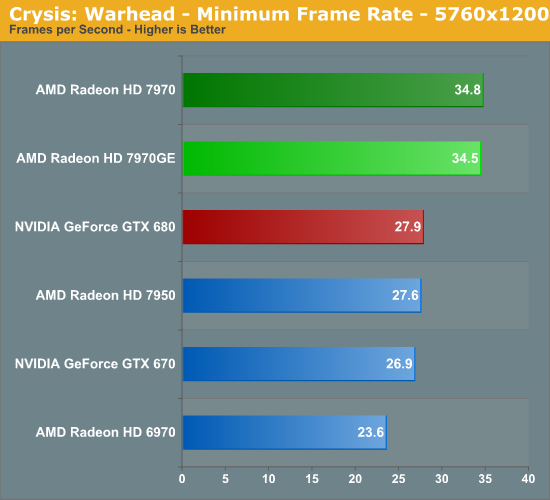

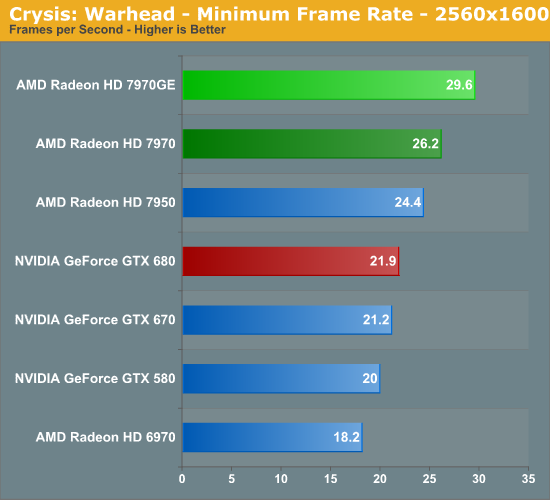

When it comes to minimum framerates the 7970GE further expands its lead. It’s now 35% faster than the GTX 680 (and just short of 30fps) at 2560, which neatly wraps up the 7970GE’s domination in Crysis. Even its performance lead versus the 7970 improves, with the 7970GE increasing its lead to 13%. A year ago NVIDIA and AMD were roughly tied with Crysis, but now AMD has clearly made it their game. So can it run Crysis? Yes, and a lot better than the GTX 680 can.

110 Comments

piroroadkill - Friday, June 22, 2012 - link

While the noise is bad - the manufacturers are going to spew out non-reference, quiet designs in moments, so I don't think it's an issue.

silverblue - Friday, June 22, 2012 - link

Toms added a custom cooler (Gelid Icy Vision-A) to theirs which reduced noise and heat noticeably (about 6 degrees C and 7-8 dB). Still, it would be cheaper to get the vanilla 7970, add the same cooling solution, and clock to the same levels; that way, you'd end up with a GHz Edition clocked card which is cooler and quieter for about the same price as the real thing, albeit lacking the new boost feature.

ZoZo - Friday, June 22, 2012 - link

Would it be possible to drop the 1920x1200 definition for test? 16/10 is dead, 1080p has been the standard for high definition on PC monitors for at least 4 years now, it's more than time to catch up with reality... Sorry for the rant, I'm probably nitpicking anyway...

Reikon - Friday, June 22, 2012 - link

Uh, no. 16:10 at 1920x1200 is still the standard for high quality IPS 24" monitors, which is a fairly typical choice for enthusiasts.

paraffin - Saturday, June 23, 2012 - link

I haven't been seeing many 16:10 monitors around these days, besides, since AT even tests iGPU performance at ANYTHING BUT 1080p your "enthusiast choice" argument is invalid. 16:10 is simply a l33t factor in a market dominated by 16:9. I'll take my cheap 27" 1080p TN's spaciousness and HD content nativeness over your pricy 24" 1200p IPS' "quality" any day.

CeriseCogburn - Saturday, June 23, 2012 - link

I went over this already with the amd fanboys.

For literally YEARS they have had harpy fits on five and ten dollar card pricing differences, declaring amd the price perf queen.

Then I pointed out nVidia wins in 1920x1080 by 17+% and only by 10+% in 1920x1200 - so all of a sudden they ALL had 1920x1200 monitors, they were not rare, and they have hundreds of extra dollars of cash to blow on it, and have done so, at no extra cost to themselves and everyone else (who also has those), who of course also chooses such monitors because they all love them the mostest...

Then I gave them egg counts, might as well call it 100 to 1 on availability if we are to keep to their own hyperactive price perf harpying, and the lowest available higher rez was $50 more, which COST NOTHING because it helps amd, of course....

I pointed out Anand pointed out in the then prior article it's an ~11% pixel difference, so they were told to calculate the frame rate difference... (that keeps amd up there in scores and winning a few they wouldn't otherwise).

Dude, MKultra, Svengali, Jim Wand, and mass media, could not, combined, do a better job brainwashing the amd fan boy.

Here's the link, since I know a thousand red-winged harpies are ready to descend en masse and caw loudly in protest...

http://translate.google.pl/translate?hl=pl&sl=...

1920x1080: " GeForce GTX680 is on average 17.61% more efficient than the Radeon 7970.

Here, the performance difference in favor of the GTX680 are even greater"

So they ALL have a 1920x1200, and they are easily available, the most common, cheap, and they look great, and most of them have like 2 or 3 of those, and it was no expense, or if it was, they are happy to pay it for the red harpy from hades card.

silverblue - Monday, June 25, 2012 - link

Your comparison article is more than a bit flawed. The PCLab results, in particular, have been massively updated since that article. Looks like they've edited the original article, which is a bit odd. Still, AMD goes from losing badly in a few cases to not losing so badly after all, as the results on this article go to show. They don't displace the 680 as the best gaming card of the moment, but it certainly narrows the gap (even if the GHz Edition didn't exist).

Also, without a clear idea of specs and settings, how can you just grab results for a given resolution from four or five different sites for each card, add them up and proclaim a winner? I could run a comparison between a 680 and 7970 in a given title with the former using FXAA and the latter using 8xMSAA, doesn't mean it's a good comparison. I could run Crysis 2 without any AA and AF at all at a given resolution on one card and then put every bell and whistle on for the other - without the playing field being even, it's simply invalid. Take each review at its own merits because at least then you can be sure of the test environment.

As for 1200p monitors... sure, they're more expensive, but it doesn't mean people don't have them. You're just bitter because you got the wrong end of the stick by saying nobody owned 1200p monitors then got slapped down by a bunch of 1200p monitor owners. Regardless, if you're upset that NVIDIA suddenly loses performance as you ramp up the vertical resolution, how is that AMD's fault? Did it also occur to you that people with money to blow on $500 graphics cards might actually own good monitors as well? I bet there are some people here with 680s who are rocking on 1200p monitors - are you going to rag (or shall I say "rage"?) on them, too?

If you play on a 1080p panel then that's your prerogative, but considering the power of the 670/680/7970, I'd consider that a waste.

FMinus - Friday, June 22, 2012 - link

Simply put; No!

1080p is the second worst thing that happened to the computer market in the recent years. The first worst thing being phasing out 4:3 monitors.

Tegeril - Friday, June 22, 2012 - link

Yeah seriously, keep your 16:9, bad color reproduction away from these benchmarks.

kyuu - Friday, June 22, 2012 - link

16:10 snobs are seriously getting out-of-touch when they start claiming that their aspect ratio gives better color reproduction. There are plenty of high-quality 1080p IPS monitors on the market -- I'm using one.

That being said, it's not really important whether it's benchmarked at x1080 or x1200. There is a negligible difference in the number of pixels being drawn (one of the reasons I roll my eyes at 16:10 snobs). If you're using a 1080p monitor, just add anywhere from 0.5 to 2 FPS to the average FPS results from x1200.

Disclaimer: I have nothing *against* 16:10. All other things being equal, I'd choose 16:10 over 16:9. However, with 16:9 monitors being so much cheaper, I can't justify paying a huge premium for a measly 120 lines of vertical resolution. If you're willing to pay for it, great, but kindly don't pretend that doing so somehow makes you superior.