Zotac GeForce GT 640 DDR3 Review: Glacial Gaming & Heavenly HTPC

by Ryan Smith & Ganesh T S on June 20, 2012 12:00 PM EST

Power, Temperature, & Noise

As always, we wrap up our look at a new video card with its physical performance attributes: power consumption, temperatures, and noise. NVIDIA is breaking new ground for desktop Kepler with their first sub-75W card, so it will be interesting to see just what the tradeoff is for such low power consumption.

Zotac GeForce GT 640 DDR3 Voltages

| GT 640 Idle | GT 640 Load |
|---|---|
| 0.95V | 1.00V |

NVIDIA doesn’t do a lot of voltage scaling with the GT 640. At idle it runs at 0.95V and makes only a short jump to 1.00V under full load. For a 28nm GPU, 0.95V at idle is a bit higher than what we’ve seen in the past, which may explain the official 15W idle TDP.
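To give a rough sense of how little a 50mV step matters on its own, here is a back-of-the-envelope sketch. It assumes the textbook CMOS approximation that dynamic power scales with C·V²·f and holds capacitance and clock constant. That is a simplification: real GPUs also drop clocks at idle, and leakage power is ignored entirely.

```python
def relative_dynamic_power(v_new: float, v_ref: float) -> float:
    """Ratio of dynamic power at v_new vs v_ref, assuming P ~ C * V^2 * f
    with capacitance C and clock f held constant (a simplification)."""
    return (v_new / v_ref) ** 2

idle_v = 0.95  # measured GT 640 idle voltage
load_v = 1.00  # measured GT 640 load voltage

ratio = relative_dynamic_power(load_v, idle_v)
print(f"Load/idle dynamic power at equal clocks: {ratio:.3f}")
```

Under those assumptions the voltage step alone accounts for only about 11% more dynamic power, so most of the gap between idle and load power would come from clock and activity changes rather than voltage.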

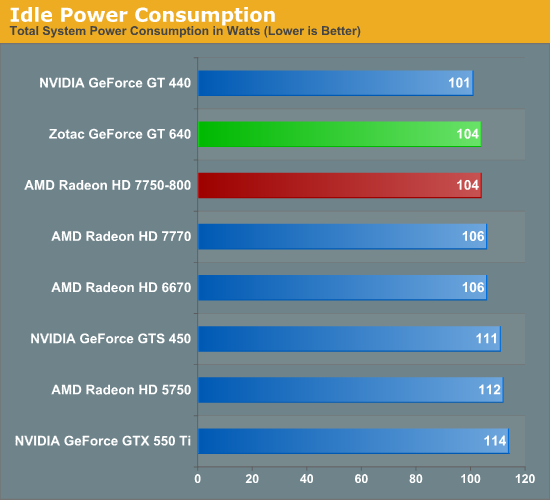

Earlier we theorized that the GT 640 would have worse idle power characteristics than the GT 440, and this appears to be the case. The difference at the wall is all of 3W, but it’s a solid indication that NVIDIA has at best not improved on their idle power consumption, if not made it a bit worse. The good news for them is that in spite of this slight rise, idle power consumption is still low enough to tie the 7750 and beat older cards like the 6670 and GTS 450.

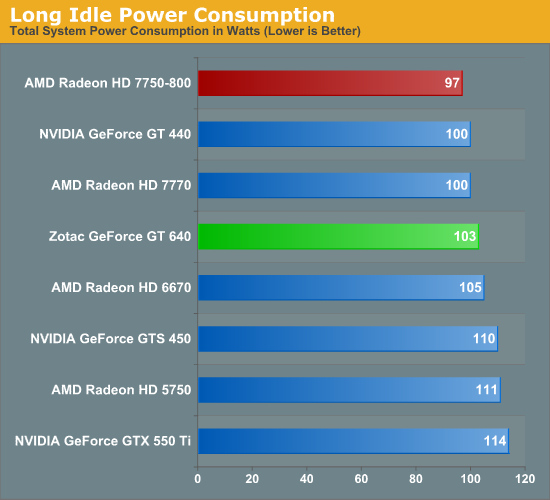

Long idle on the other hand sees the 7750 jump back into the lead, as NVIDIA doesn’t have anything resembling AMD’s ZeroCore power technology.

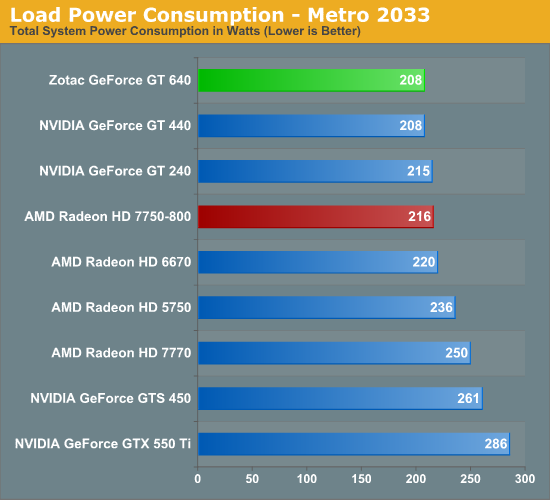

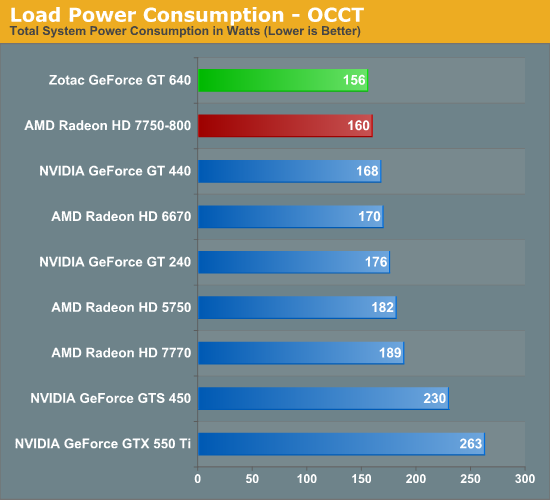

Of all of the sub-75W cards in our benchmark suite, the GT 640 ends up having the lowest load power consumption. Under both Metro and OCCT it’s equal to or better than the GT 440, GT 240, 6670, and 7750. AMD’s official PowerTune limit on the latter is 75W versus the GT 640’s 65W TDP, so this is not unexpected, but it’s always nice to have it confirmed in numbers. That said, for desktop usage I’m not sure 4-8W at the wall is all that big of a deal. So while NVIDIA’s power consumption is marginally lower than the 7750’s, they aren’t necessarily gaining anything tangible from it, particularly when you consider the loss in performance.
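To put a 4-8W difference in perspective, the sketch below converts a constant power gap into energy per year. The usage pattern (4 hours per day under load) is a hypothetical assumption for illustration, not a measured figure.

```python
def annual_energy_kwh(delta_watts: float, hours_per_day: float, days: int = 365) -> float:
    """Extra energy in kWh per year for a constant power difference."""
    return delta_watts * hours_per_day * days / 1000.0

# Hypothetical usage assumption: 4 hours of gaming load per day.
HOURS_PER_DAY = 4.0

for delta in (4.0, 8.0):  # the 4-8W at-the-wall range discussed in the text
    kwh = annual_energy_kwh(delta, HOURS_PER_DAY)
    print(f"{delta:.0f}W for {HOURS_PER_DAY:.0f}h/day -> {kwh:.2f} kWh/year")
```

Under that assumed usage, the gap works out to roughly 6-12 kWh per year.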

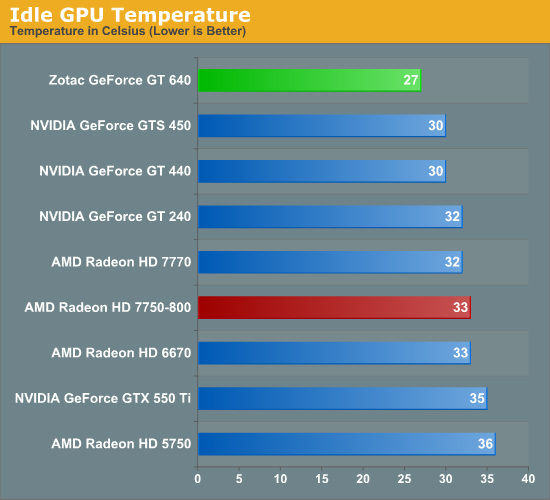

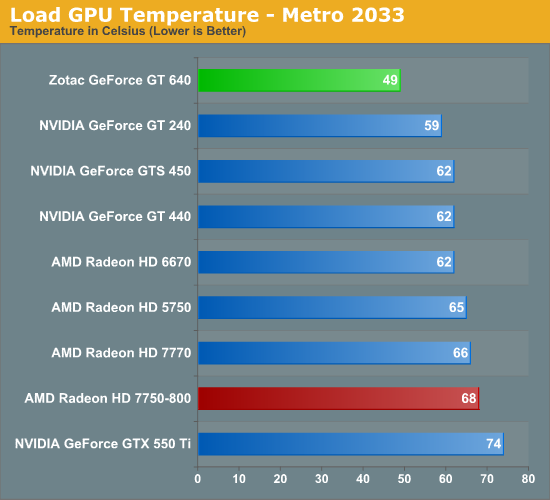

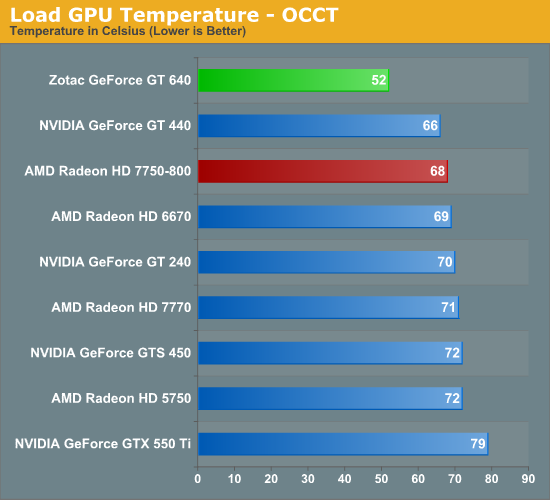

GPU temperatures look absolutely stunning here, and in fact they’re better than we would have expected. Zotac’s heatsink is not particularly large, and while these types of cards typically stay under 70C, we would not have expected a single-slot heatsink to perform this well. If these kinds of temperatures carry over into other designs, there’s a very good chance we’re going to see some nice passively cooled cards in the near future. In the meantime, buyers wary of high temperatures are going to find that Zotac’s GT 640 is an exceptional card.

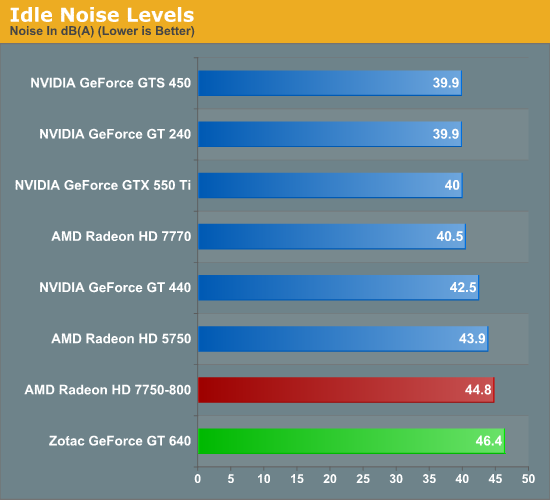

Last but not least we have noise. Zotac’s card may be exceptionally cool, but that single-slot cooler and small fan come back to bite them when it comes to noise. That little fan simply doesn’t idle well, making the Zotac GT 640 one of the loudest idling sub-75W cards we’ve seen in quite some time. Zotac fares much better under load, where the 7750’s equally tiny and tinny fan makes the 7750 the louder card by about 1dB, but really neither of these cards is particularly quiet for as little power as they consume. HTPC users who aren’t already looking at passively cooled cards are probably going to want to look elsewhere unless they absolutely need a single-slot card, as there’s a very clear tradeoff between size and noise here.
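For context on a gap of about 1dB: decibels are logarithmic, so a difference of d dB corresponds to a sound-power ratio of 10^(d/10). The sketch below is just that conversion; perceived loudness does not scale the same way, so treat it as physics rather than a listening verdict.

```python
def db_to_power_ratio(delta_db: float) -> float:
    """Convert a decibel difference into a sound-power ratio."""
    return 10 ** (delta_db / 10)

print(f"1 dB  -> {db_to_power_ratio(1.0):.3f}x sound power")
print(f"10 dB -> {db_to_power_ratio(10.0):.1f}x sound power")
```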

60 Comments


cjs150 - Thursday, June 21, 2012

"God forbid there be a technical reason for it...."

Intel and Nvidia have had several generations of chips to fix any technical issue and didn't (HD4000 is good enough, though). AMD has been pretty close to the correct frame rate for a while.

But it is not enough to have the capability to run at the correct frame rate if you make it too difficult to change the frame rate to the correct setting. That is not a hardware issue, just bad software design.

UltraTech79 - Wednesday, June 20, 2012

Anyone else really disappointed in 4K still being standardized around 24 fps? I thought 60 would be the minimum standard by now, with 120 on higher-end displays. 24 is crap. Anyone who has seen a movie recorded at 48+ FPS knows what I'm talking about. This is like putting shitty unleaded gas into a super high-tech racecar.

cjs150 - Thursday, June 21, 2012

You do know that Blu-ray is displayed at 23.976 FPS? That looks very good to me.

Please do not confuse screen refresh rates with frame rates. Screen refresh on most large TVs runs at between 60 and 120 Hz; anything below 60 tends to look crap. (If you want real crap, try running American TV on a European PAL system. I mean crap in a technical sense, not creatively!)

I must admit that having a frame rate of 23.976 rather than some round number such as 24 (or higher) FPS is rather daft, and some new films are coming out with much higher FPS. I have a horrible recollection that the reason for such an odd FPS is very historic, something to do with the length of 35mm film that would be needed per second. The problem is I cannot remember whether that was simply because 35mm film was expensive and it was the minimum to provide smooth movement, or whether it goes right back to the days when film had a tendency to catch alight, and it was the maximum speed you could put a film through a projector without friction causing the film to catch alight. No doubt there is an expert on this site who could explain precisely why we ended up with such a silly number as the standard.
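The odd figure is easier to see as arithmetic: 23.976 is shorthand for 24 × 1000/1001, the same 1000/1001 slowdown NTSC colour television applied to 30fps to get 29.97. The sketch below computes that rate exactly and, to illustrate why refresh-rate matching matters for HTPCs, how often a display running at exactly 24.000Hz would have to repeat a source frame to stay in sync.

```python
from fractions import Fraction

# 23.976 is shorthand for 24 * 1000/1001 (the NTSC-derived rate).
film_rate = Fraction(24) * Fraction(1000, 1001)
display_rate = Fraction(24)  # a display running at exactly 24.000Hz

# The display shows slightly more frames per second than the source supplies,
# so one source frame is repeated each time the surplus adds up to a whole frame.
surplus_per_second = display_rate - film_rate
seconds_per_repeat = 1 / surplus_per_second

print(f"Film rate: {float(film_rate):.6f} fps")
print(f"One repeated frame every {float(seconds_per_repeat):.1f} s")
```

A repeated frame roughly every 42 seconds is small, but it can be noticeable in slow pans, which is why HTPC users care about the display running at 23.976Hz rather than 24Hz.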

UltraTech79 - Friday, June 22, 2012

You are confusing things here. I clearly said 120 (fps) would need higher-end displays (120Hz). I was rounding 23.976 FPS up to 24, give me a break.

That it looks good /to you/ is wholly irrelevant. Do you realize how many people said "it looks very good to me" about SD when resisting the HD movement? Or how many will say it again about 1080p, thinking 4K is too much? It's a ridiculous mindset.

My point was that we are upping the resolution but leaving another very important aspect in the dust that we need to improve. Even audio is moving faster than frame rates in movies, and now that most places are switching to digital, the cost to go to the next step has dropped dramatically.

nathanddrews - Friday, June 22, 2012

It was NVIDIA's choice to only implement 4K @ 24Hz (23.xxx) due to limitations of HDMI. If NVIDIA had optimized around DisplayPort, you could then have 4K @ 60Hz.

For computer use, anything under 60Hz is unacceptable. For movies, 24Hz has been the standard for a century - all film is 24fps and most movies are still shot on film. In the next decade, there will be more and more films that will use 48, 60, even 120fps. Cameron was cock-blocked by the studio when he wanted to film Avatar at 60fps, but he may get his wish for the sequels. Jackson is currently filming The Hobbit at 48fps. Eventually all will be right with the world.

karasaj - Wednesday, June 20, 2012

If we wanted to use this to compare a 640M or 640M LE to the GT 640, is this doable? If it's built on the same GPU (both have 384 CUDA cores), can we just reduce the numbers by a rough percentage of the core clock speed to get rough numbers that the respective cards would put out? I.e. the 640M LE has a clock of 500MHz, the 640M is ~625MHz. Could we expect ~55% of this for the 640M LE and 67% for the 640M? Assume DDR3 on both, so memory type doesn't make a difference.

Ryan Smith - Wednesday, June 20, 2012

It would be fairly easy to test a desktop card at a mobile card's clocks (assuming memory type and functional unit count were equal), but you can't extrapolate performance like that because there's more to performance than clockspeeds. In practice performance shouldn't drop by that much, since we're already memory bandwidth bottlenecked with DDR3.

jstabb - Wednesday, June 20, 2012

Can you verify whether creating a custom resolution breaks 3D (frame-packed) Blu-ray playback?

With my GT430, once a custom resolution has been created for 23/24Hz, that custom resolution overrides the 3D frame-packed resolution created when 3D Vision is enabled. The driver appeared to have simple fall-through logic: if a custom resolution is defined for the selected resolution/refresh rate, it is always used; failing that, it will use a 3D resolution if one is defined; failing that, it will use the default 2D resolution.
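The fall-through behavior jstabb describes amounts to a fixed-precedence lookup keyed by the selected resolution and refresh rate. This is a hypothetical model of the observed behavior only, not NVIDIA driver code; the function name, table names, and timing labels are invented for illustration.

```python
from typing import Dict, Optional, Tuple

# A display mode keyed by (width, height, refresh rate in Hz).
Mode = Tuple[int, int, int]

def select_timing(mode: Mode,
                  custom: Dict[Mode, str],
                  stereo_3d: Dict[Mode, str],
                  default_2d: Dict[Mode, str]) -> Optional[str]:
    """Return the timing used for `mode`, following the observed precedence:
    custom first, then 3D frame-packed, then default 2D.
    Hypothetical model only; not actual driver code."""
    for table in (custom, stereo_3d, default_2d):
        if mode in table:
            return table[mode]
    return None

# Hypothetical entries for a 1080p, 24Hz mode.
mode = (1920, 1080, 24)
stereo = {mode: "3D frame-packed"}
default = {mode: "default 2D"}

# With no custom resolution defined, the 3D timing is used.
print(select_timing(mode, {}, stereo, default))  # 3D frame-packed

# Once a custom 24Hz resolution exists, it shadows the 3D timing entirely.
print(select_timing(mode, {mode: "custom 24Hz"}, stereo, default))  # custom 24Hz
```

Modelled this way, the problem is plain: a custom entry for the same mode always wins, so the 3D timing is never reached.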

This issue made the custom resolution feature useless to me with the GT430 and pushed me to an AMD solution for their better OOTB refresh rate matching. I'd like to consider this card if the issue has been resolved.

Thanks for the great review!

MrSpadge - Wednesday, June 20, 2012

It consumes about as much power as the HD7750-800, yet performs miserably in comparison. This is an amazing win for AMD, especially considering GTX680 vs. HD7970!

UltraTech79 - Wednesday, June 20, 2012

This performs about as well as an 8800GTS for twice the price, or offers half the performance of a GTX 460 for the same price. These should have been priced at 59.99.