NVIDIA GeForce GTX 690 Review: Ultra Expensive, Ultra Rare, Ultra Fast

by Ryan Smith on May 3, 2012 9:00 AM EST

Battlefield 3

Its popularity aside, Battlefield 3 may be the most interesting game in our benchmark suite for a single reason: it’s the first AAA DX10+ game. It’s been 5 years since the launch of the first DX10 GPUs, and 3 whole process node shrinks later we’re finally to the point where games are using DX10’s functionality as a baseline rather than an addition. Not surprisingly BF3 is one of the best looking games in our suite, but as with past Battlefield games that beauty comes with a high performance cost.

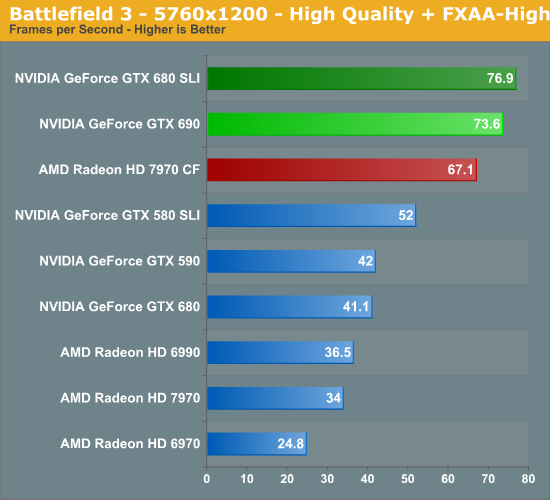

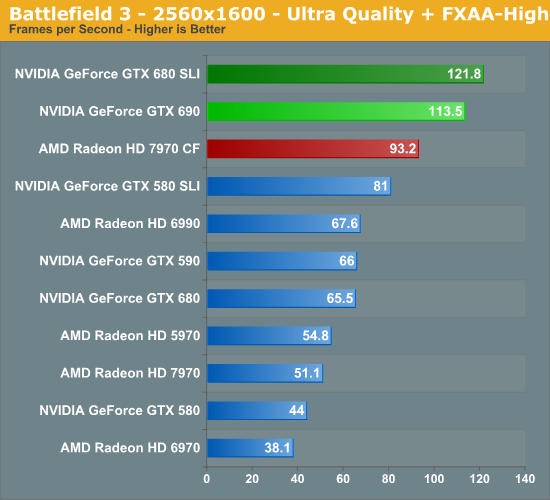

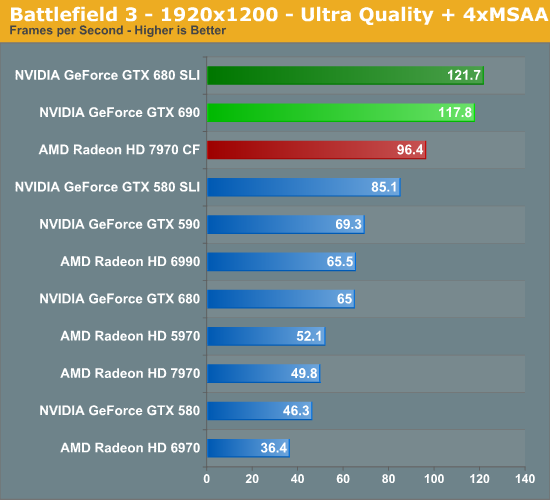

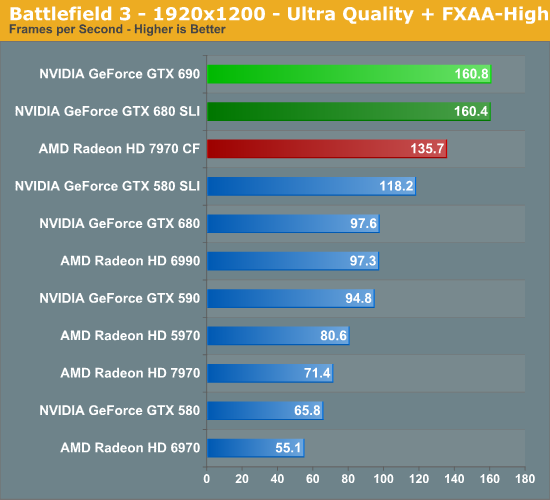

Battlefield 3 has been NVIDIA’s crown jewel: a widely played multiplayer game with a clear lead for NVIDIA hardware. And with multi-GPU thrown into the picture that doesn’t change, leading to the GTX 690 once again taking a very clear lead here over the 7970CF at all resolutions. With that said, we see something very interesting at 5760, with NVIDIA’s lead shrinking by quite a bit. What was a 21% lead at 2560 is only a 10% lead at 5760. So far we haven’t seen any strong evidence of NVIDIA being VRAM limited with only 2GB of VRAM, and while this isn’t strong evidence that the situation has changed, it does warrant consideration. If anything is going to be VRAM limited, after all, it’s BF3.

Meanwhile, compared to the GTX 680 SLI the GTX 690 is doing okay here. It’s only achieving 93% of the GTX 680 SLI’s performance at 2560, but for some reason it closes some of that gap at 5760, recovering to 96% of the performance of the dual video card setup.

200 Comments


InsaneScientist - Sunday, May 6, 2012

Or don't...

It's 2 days later, and you've been active in the comments up through today. Why'd you ignore this one, Cerise?

CeriseCogburn - Sunday, May 6, 2012

Because you idiots aren't worth the time and last review the same silverblue stalker demanded the links to prove my points and he got them, and then never replied.

It's clear what providing proof does for you people, look at the sudden 100% ownership of 1920x1200 monitors..

ROFL

If you want me to waste my time, show a single bit of truth telling on my point on the first page.

Let's see if you pass the test.

I'll wait for your reply - you've got a week or so.

KompuKare - Thursday, May 3, 2012

It is indeed sad. AMD comes up with really good hardware features like Eyefinity but then never polishes up the drivers properly. Looking at some of the CrossFire results is sad too: in Crysis and BF3 CF scaling is better than SLI (unsure, but I think the trifire and quadfire results for those games are even more in AMD's favour), but in Skyrim it seems that CF is totally broken.

Of course compared to Intel, AMD's drivers are near perfect, but with a bit more work they could be better than Nvidia's too rather than being mostly at 95% or so.

Tellingly, JHH did once say that Nvidia were a software company, which was a strange thing for a hardware manufacturer to say. But this also seems to mean that they have forgotten the most basic thing which all chip designers should know: how to design hardware which works. Yes, I'm talking about bumpgate.

See, despite all I said about AMD's drivers, I will never buy Nvidia hardware again after my personal experience of their poor QA. My 8800GT, my brother's 8800GT, this 8400M MXM I had, plus a number of laptops plus one nForce motherboard: they all had one thing in common, poorly made chips made by BigGreen, and they all died way before they were obsolete.

Oh, and as pointed out in the Anand VC&G forums earlier today:

"Well, Nvidia has the title of the worst driver bug in history at this point-

http://www.zdnet.com/blog/hardware/w...hics-card/7... "

killing cards with a driver is a record.

Filiprino - Thursday, May 3, 2012

Yep, that's true. They killed cards with a driver. They should implement hardware auto shutdown, like CPUs. As for the nForce, I had one motherboard, the best nForce they made: nForce 2 for AMD Athlon. The rest of mobo chipsets were bullshit, including nForce 680.

The QA I don't think is NVIDIA's fault but videocard manufacturers.

KompuKare - Thursday, May 3, 2012

No, 100% Nvidia's fault. Although maybe QA isn't the right word. I was referring to Nvidia using the wrong solder underfill for a few million chips (the exact number is unknown): they were mainly mobile parts and Nvidia had to put $250 million aside to settle a class action.

http://en.wikipedia.org/wiki/GeForce_8_Series#Prob...

Although that wiki article is rather lenient towards Nvidia, since that bit about fan speeds is a red herring: more accurately, it was Nvidia which spec'ed their chips to a certain temperature, and designs which run way below that will have put less stress on the solder, but to say it was poor OEM and AIB design which led to the problem is not correct. Anyway, the proper exposé was by Charlie D. in the Inquirer and later SemiAccurate.

CeriseCogburn - Friday, May 4, 2012

But in fact it was a bad heatsink design, thank HP, and view the thousands of heatsink repairs, including the "add a copper penny" method to reduce the giant gap between the HS and the NV chip.

Charlie was wrong, a liar, again, as usual.

KompuKare - Friday, May 4, 2012

Don't be silly. While HP's DV6000s were the most notorious failures, and that was due to HP's poorly designed heatsink / cooling, bumpgate also saw Dells, Apples and others:

http://www.electronista.com/articles/10/09/29/suit...

http://www.nvidiadefect.com/nvidia-settlement-t874...

The problem was real, continues to be real and also affects G92 desktop parts and certain nForce chipsets like the 7150.

Yes, the penny shim trick will fix it for a while, but if you actually were to read up on the forums of technicians who fix laptops, that plus reflows are only a temporary fix because the actual chips are flawed. Re-balling with new, better solder is a better solution, but not many offer those fixes since it involves 100s of tiny solder balls per chip.

Before blindly leaping to Nvidia's defence like a fanboy, please do some research!

CeriseCogburn - Saturday, May 5, 2012

Before blindly taking the big lie from years ago repeated above to attack nvidia for no reason at all other than all you have is years old misinformation, then wail on about it, while telling someone else some more lies about it, check your own immense bias and lack of knowledge, since I had to point out the truth for you to find, and you forgot DV9000, dv2000 and dell systems with poor HS design, let alone apple amd console video chip failings, and the fact that payment was made and restitution was delivered, which you also did not mention, because of your fanboy problems, obviously in amd's favor.

Ashkal - Thursday, May 3, 2012

In the price comparison in Final Words you are not referring to AMD products. I think AMD is better in price/performance ratio.

prophet001 - Thursday, May 3, 2012

I agree