The HTC One X for AT&T Review

by Brian Klug on May 1, 2012 6:00 PM EST - Posted in: Smartphones, Snapdragon, HTC, Qualcomm, MSM8960, Krait, Mobile, Tegra 3, HTC One, NVIDIA

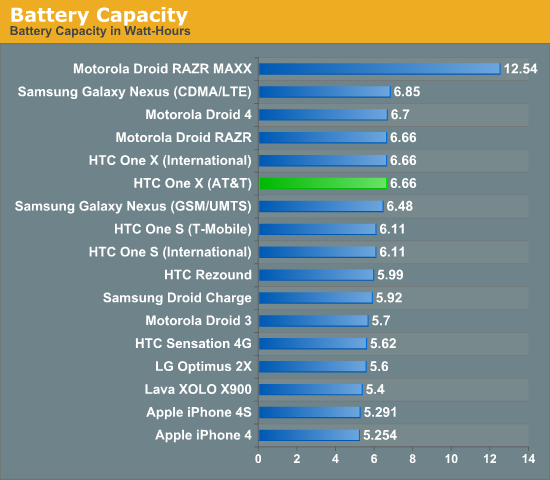

This is the first smartphone we’ve seen with a 28nm SoC, and thus battery life is the big question. Further, the handset includes all the onboard MSM8960 radio goodness, as we’ll mention in a bit. The problem with some HTC phones for the longest time was that they shipped with smaller than average batteries: while the competition continued up past 6 Whr, HTC would ship phones with 5 or so. That changes with the HTC One X, which includes a 6.66 Whr (1800 mAh, 3.7V) internal battery. I’m presenting the same battery capacity chart that we did in the Xolo X900 review for a frame of reference.
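For reference, the watt-hour figure follows directly from the rated charge and nominal voltage. The snippet below is a minimal sketch of that conversion; the function name `watt_hours` is purely illustrative and not part of any test tooling.

```python
def watt_hours(capacity_mah: float, nominal_voltage: float) -> float:
    """Convert rated charge (mAh) and nominal voltage (V) to energy in Whr."""
    # mAh -> Ah, then Ah * V = Wh
    return capacity_mah / 1000.0 * nominal_voltage


# The One X figures quoted above: 1800 mAh at 3.7 V.
one_x_whr = watt_hours(1800, 3.7)
print(f"{one_x_whr:.2f} Whr")
```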

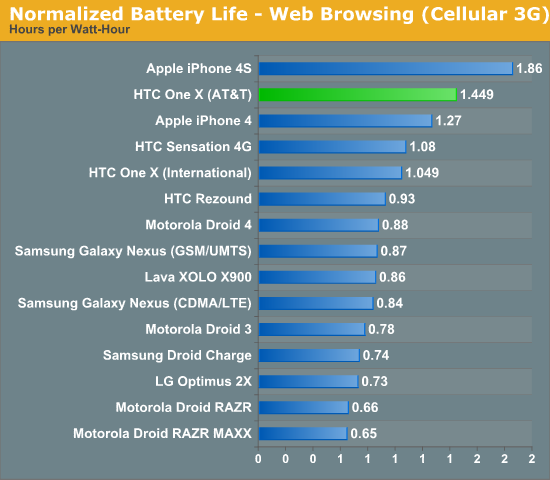

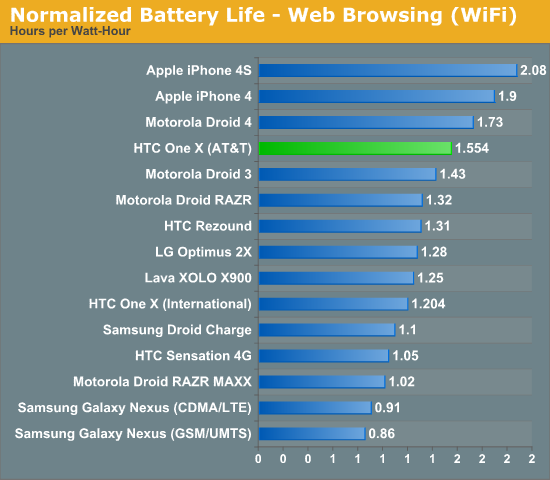

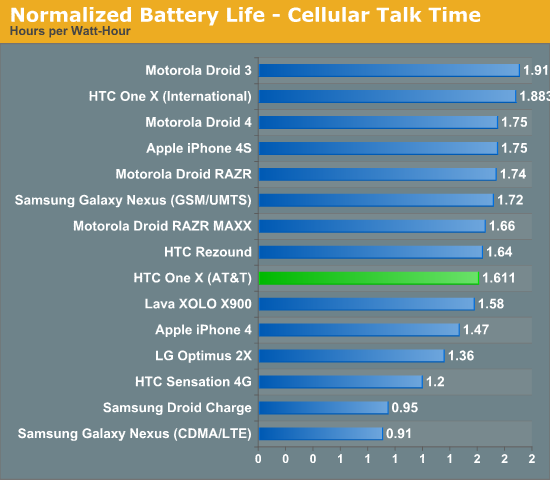

Like we did with the X900 review, we’re going to present the normalized battery performance (battery life divided by battery capacity) to give a better idea of how this compares with the competition.
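The normalization itself is simple division. Here is a rough sketch, assuming run time is recorded in hours and capacity in Whr; the function name and the two example phones are hypothetical placeholders, not measured results from our charts.

```python
def normalized_battery_life(runtime_hours: float, capacity_whr: float) -> float:
    """Return run time per unit of battery capacity (hours per Whr)."""
    if capacity_whr <= 0:
        raise ValueError("capacity_whr must be positive")
    return runtime_hours / capacity_whr


# Hypothetical (runtime_hours, capacity_whr) pairs, for illustration only.
phones = {
    "Phone A": (6.0, 6.0),   # smaller battery, shorter run time
    "Phone B": (8.0, 10.0),  # larger battery, longer run time
}

# Rank by efficiency rather than by raw run time.
ranked = sorted(
    phones,
    key=lambda name: normalized_battery_life(*phones[name]),
    reverse=True,
)
print(ranked)
```

In this made-up case Phone B lasts longer in absolute terms, but Phone A gets more run time out of each watt-hour, which is the distinction the normalized charts are meant to surface.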

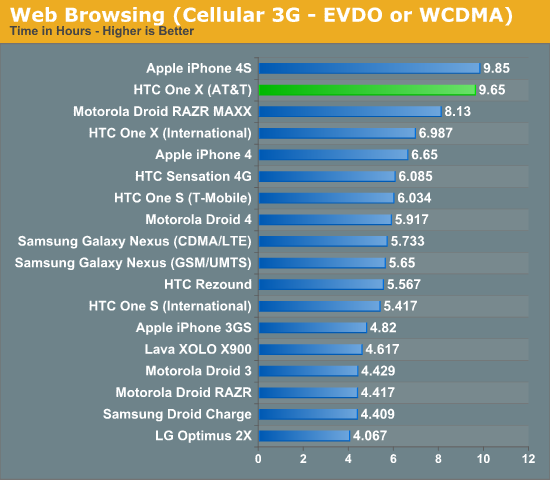

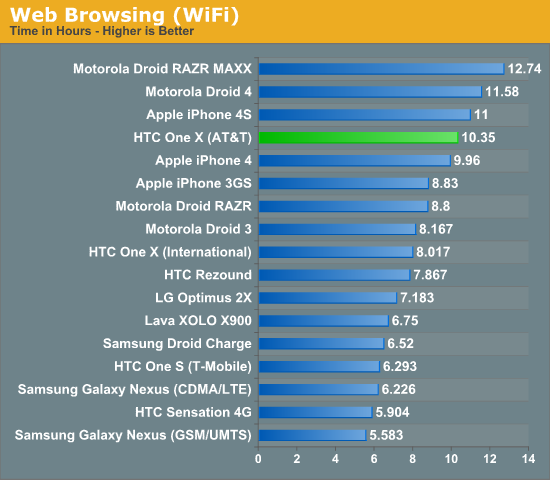

As a reminder, the browsing tests happen at 200 nits and consist of a few dozen pages loaded endlessly over WCDMA or WiFi (depending on the test) until the phone powers off. The WiFi hotspot tethering test consists of a single attached client streaming 128 kbps MP3 audio and loading four tabs of the page loading test through the handset over WCDMA with the display off.

HTC is off to an incredible start with our 3G web browsing tests. Even if you assume that Android and iOS are on even footing from a power efficiency standpoint, the HTC One X is easily able to equal Apple's best in terms of battery life. In reality my guess is that the 4S is at a bit of an unfair advantage in this test due to how aggressive iOS/mobile Safari can be about reducing power consumption, but either way the AT&T One X does amazingly well here. The advantage isn't just because of the larger battery either. If we look at normalized results, we see that the One X is simply a more efficient platform than any other Android smartphone we've tested:

The Tegra 3 based international One X doesn't do as well. NVIDIA tells us that this is because of differences in software. We'll be testing a newer build of the One X's software to see how much of an improvement there is in the coming days.

Moving on to WiFi battery life, the AT&T One X continues to do quite well, although the Droid 4 and RAZR MAXX are both able to deliver longer battery life in this case:

There are too many variables at play here (panel efficiency, WiFi stack, browser/software stack) to pinpoint why the One X loses its first place position, but it's still an extremely strong performer. Once again we see a noticeable difference between it and the international One X.

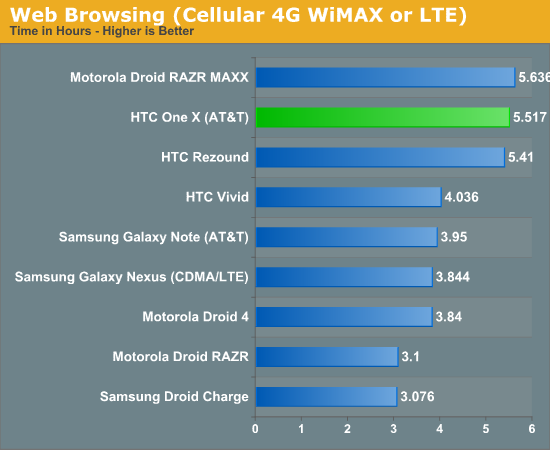

The big question is how well the AT&T One X does when we're using the MSM8960's LTE baseband. Pretty darn well, when you consider that it's bested only by the RAZR MAXX with its gargantuan battery. Probably the most notable comparison points here are the HTC Vivid and Galaxy Note on AT&T, both of which are based on the APQ8060 + MDM9200 combination.

As a reminder, the Verizon / CDMA2000 LTE devices here are at a bit of a disadvantage due to virtually all of those handsets camping CDMA2000 1x for voice and SMS. The AT&T LTE enabled devices use circuit switched fallback (CSFB) and essentially only camp one air interface at a time, falling back from LTE to WCDMA to exchange a call.

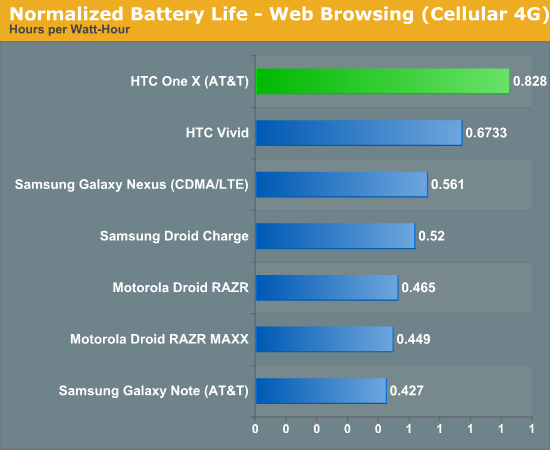

This particular graph doesn't tell the full story, however. In practice the AT&T One X seems to last a lot longer using LTE than any LTE Android phone we've tested in the past. It's nipping at the heels of the RAZR MAXX, so we need to look at normalized battery life to get an idea of just how efficient the new 28nm LTE-enabled SoC is:

Now that we have a 28nm baseband we've immediately realized some power gains. As time progresses, the rest of the RF chain will also get better. Already most of the LTE power amplifier vendors have newer generation parts with higher PAE (Power-Added Efficiency), and such improvements will hopefully continue and gradually bring LTE battery life closer to that of 3G WCDMA or EVDO.

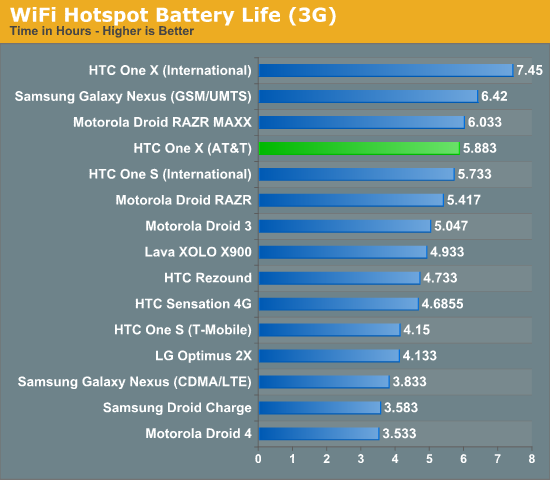

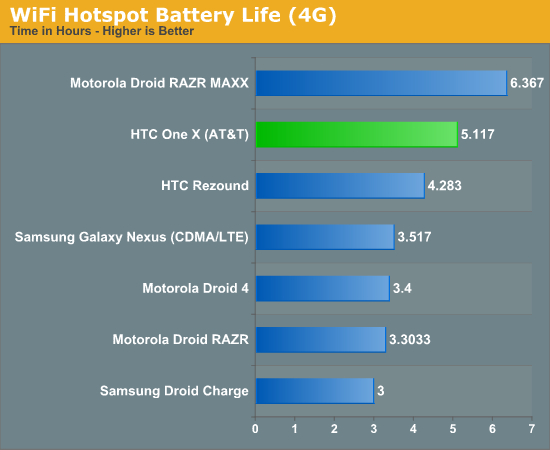

Using the AT&T One X as a WiFi hotspot is also going to deliver a pretty great experience:

As an LTE hotspot the RAZR MAXX's larger battery is able to deliver a longer run time, however the One X does very well given its battery capacity and size.

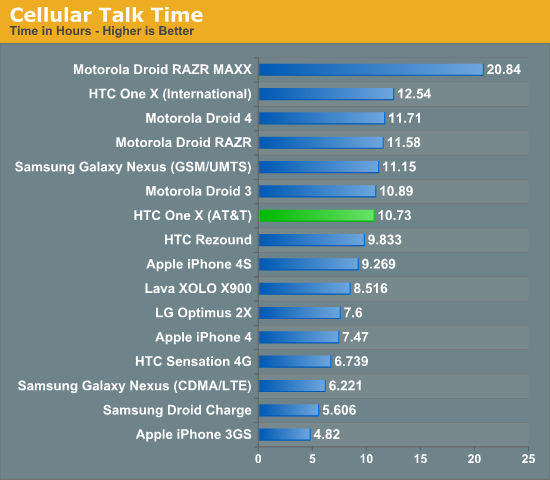

Finally, our cellular talk time charts put the AT&T One X in the upper half of our results. Overall the AT&T One X appears to do very well across the board, and it's especially strong in the 3G/LTE tests.

137 Comments


MrMilli - Tuesday, May 1, 2012 - link

"On the GPU side, there's likely an NVIDIA advantage there as well."

How do you get to this conclusion?

Qualcomm scores a little bit higher in Egypt as in the Pro test of GLBenchmark. I don't know why you would put any importance to the off-screen tests for these two devices since they both run the same resolution (which is even 720p) which takes me to my next points. Actual games will be v-synced and how does the Tegra suddenly become faster than the Adreno even though they both are still rendering at the same resolution as on-screen but just with v-sync off. I've always had a hard time accepting the off-screen results of GLBenchmark because there's no way to verify if a device is actually rendering correctly (or maybe even cheating). Can you imagine testing a new videocard in the same fashion?

metafor - Tuesday, May 1, 2012 - link

Results can vary with v-sync because Tegra could be bursting to higher fps values. The offscreen isn't a perfect test either but it gives you an idea of what would happen if a heavier game that didn't approach the 60fps limit would be like.

Of course, those games likely won't have the same workloads as GLBenchmark, so it really wouldn't matter all that much.

ChronoReverse - Tuesday, May 1, 2012 - link

The offscreen test is worthless really.

If at 720p, the same benchmark, except it puts an image on the screen, shows that the S4 GPU is faster than the Tegra3 GPU, then how useless is the offscreen test showing the opposite?

Furthermore, neither the S4 nor Tegra3 comes close to 59-60FPS, both tipping at around the 50FPS range.

It's pretty clear that by skipping the rendering, the offscreen test is extremely unrealistic.

metafor - Wednesday, May 2, 2012 - link

It doesn't need to come close. It just needs to burst higher than 60fps. Let's say that it would normally reach 80fps 10% of the time and remain 40fps the other 90%. Let's say S4 were to only peak to 70fps 10% of the time but remained at 45fps the other 90%. The S4's average would be higher with v-sync while Tegra's would be higher without v-sync.

The point of the benchmark isn't how well the phone renders the benchmark -- after all, nobody's going to play GLBenchmark :)

The point is to show relative rendering speed such that when heavier games that don't get anywhere close to 60fps are being played, you won't notice stutters.

Of course, as I mentioned, heavier games may have a different mix of shaders. As Basemark shows, Adreno is very very good at complex shaders due to its disproportional ALU strength.

Its compiler unfortunately is unable to translate this into simple shader performance.

ChronoReverse - Wednesday, May 2, 2012 - link

That's still wrong. If you spike a lot, then your experience is worse for 3D games. It's not like we don't know that minimum framerate is just as important.

As you mentioned stutters, a device that dips to 40FPS would be more stuttery than one that dips only to 45FPS.

metafor - Thursday, May 3, 2012 - link

I'm not disagreeing. I'm just saying that v-sync'ed results will vary even if it's not close to 60fps. Because some scenes will require very little rendering (say, a panning shot of the sky) and some scenes will require a lot of heavy rendering (say, multiple characters sword fighting, like in Egypt).

The average fps may be well below 60fps. But peak fps may be a lot higher. In such cases, the GPU that peaks higher (or more often) will seem worse than it is.

Now, an argument can be made that a GPU that also has very low minimum framerates is worse. But we don't know the distribution here.

Chloiber - Monday, May 7, 2012 - link

Well the benchmark doesn't measure your experience in 3D games but the fps.

snoozemode - Tuesday, May 1, 2012 - link

The ATRIX has a LCD pentile RGBW display, as well as the HTC one S, so LCD is definitely not a guarantee for RGB. Maybe you should correct the article with that.

snoozemode - Tuesday, May 1, 2012 - link

Sorry one s is obviously amoled.

ImSpartacus - Tuesday, May 1, 2012 - link

It's also the passable RGBG pentile, not the viled RGBW pentile.