NVIDIA GeForce GTX 680 Review: Retaking The Performance Crown

by Ryan Smith on March 22, 2012 9:00 AM EST

Compute: What You Leave Behind?

As always our final set of benchmarks is a look at compute performance. As we mentioned in our discussion on the Kepler architecture, GK104’s improvements seem to be compute neutral at best, and harmful to compute performance at worst. NVIDIA has made it clear that they are focusing first and foremost on gaming performance with GTX 680, and in the process are deemphasizing compute performance. Why? Let’s take a look.

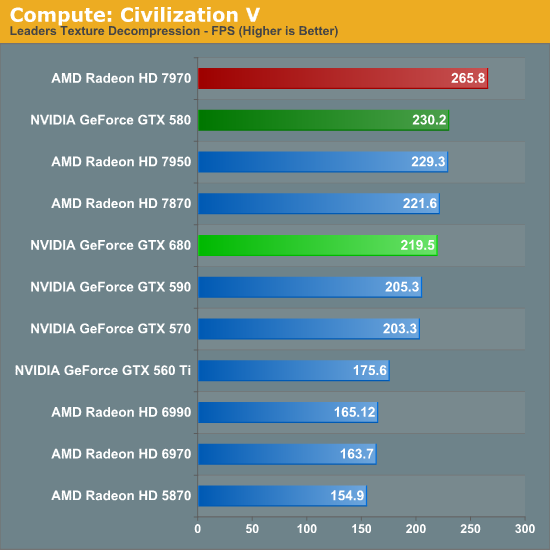

Our first compute benchmark comes from Civilization V, which uses DirectCompute to decompress textures on the fly. Civ V includes a sub-benchmark that exclusively tests the speed of their texture decompression algorithm by repeatedly decompressing the textures required for one of the game’s leader scenes. Note that this is a DX11 DirectCompute benchmark.

Remember when NVIDIA used to sweep AMD in Civ V Compute? Times have certainly changed. AMD’s shift to GCN has rocketed them to the top of our Civ V Compute benchmark. Meanwhile, what’s probably the most realistic DirectCompute benchmark we have has the GTX 680 losing to the GTX 580, never mind the 7970. It’s not by much, mind you, but in this case the GTX 680, for all of its functional units and its core clock advantage, doesn’t have the compute performance to stand toe-to-toe with the GTX 580.

At first glance our initial assumptions would appear to be right: Kepler’s scheduler changes have weakened its compute performance relative to Fermi.

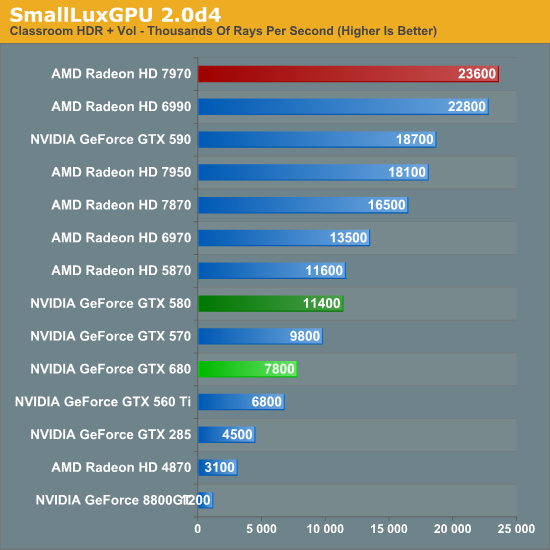

Our next benchmark is SmallLuxGPU, the GPU ray tracing branch of the open source LuxRender renderer. We’re now using a development build from the version 2.0 branch, and we’ve moved on to a more complex scene that hopefully will provide a greater challenge to our GPUs.

Civ V was bad; SmallLuxGPU is worse. At this point the GTX 680 can’t even compete with the GTX 570, let alone anything Radeon. In fact the GTX 680 has more in common with the GTX 560 Ti than it does anything else.

On that note, since we weren’t going to significantly change our benchmark suite for the GTX 680 launch, NVIDIA had a solid hunch that we were going to use SmallLuxGPU in our tests, and spoke specifically of it. Apparently NVIDIA has put absolutely no time into optimizing their now all-important Kepler compiler for SmallLuxGPU, choosing to focus on games instead. While that doesn’t make it clear how much of GTX 680’s performance is due to the compiler versus a general loss in compute performance, it does offer at least a slim hope that NVIDIA can improve their compute performance.

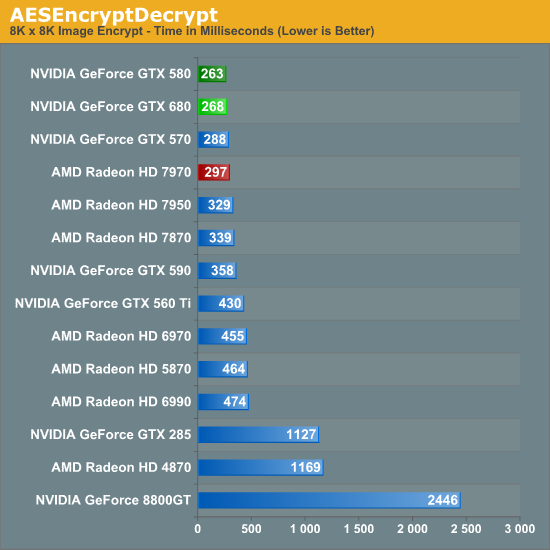

For our next benchmark we’re looking at AESEncryptDecrypt, an OpenCL routine that AES encrypts and decrypts an 8K x 8K pixel square image file. The result of this benchmark is the average time to encrypt the image over a number of iterations of the AES cipher.

Starting with our AES encryption benchmark NVIDIA begins a recovery. GTX 680 is still technically slower than GTX 580, but only marginally so. If nothing else it maintains NVIDIA’s general lead in this benchmark, and is the first sign that GTX 680’s compute performance isn’t all bad.

For our fourth compute benchmark we wanted to reach out and grab something for CUDA, given the popularity of NVIDIA’s proprietary API. Unfortunately we were largely met with failure, for reasons similar to those we ran into when the Radeon HD 7970 launched. Just as many OpenCL programs were hand optimized and didn’t know what to do with the Southern Islands architecture, many CUDA applications didn’t know what to do with GK104 and its Compute Capability 3.0 feature set.

To be clear, NVIDIA’s “core” CUDA functionality remains intact; PhysX, video transcoding, etc. all work. But 3rd party applications are a much bigger issue. Among the CUDA programs that failed were NVIDIA’s own Design Garage (a GTX 480 showcase package), AccelerEyes’ GBENCH MatLab benchmark, and the latest Folding@Home client. Since our goal here is to stick to consumer/prosumer applications in reflection of the fact that the GTX 680 is a consumer card, we did somewhat limit ourselves by ruling out a number of professional CUDA applications, but there’s no telling whether compatibility there would fare any better.

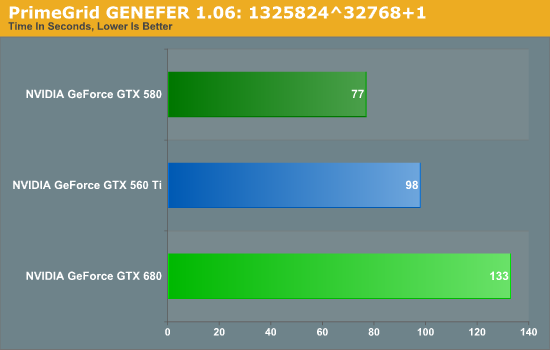

We ultimately started looking at Distributed Computing applications and settled on PrimeGrid, whose CUDA accelerated GENEFER client worked with GTX 680. Interestingly enough it primarily uses double precision math – whether this is a good thing or not though is up to the reader given the GTX 680’s anemic double precision performance.

Because it’s based around double precision math the GTX 680 does rather poorly here, but the surprising bit is that it did so to a larger degree than we’d expect. The GTX 680’s FP64 performance is 1/24th its FP32 performance, compared to 1/8th on GTX 580 and 1/12th on GTX 560 Ti. Still, our expectation would be that performance would at least hold constant relative to the GTX 560 Ti, given that the GTX 680 has more than double the compute performance to offset the larger FP64 gap.

Instead we found that the GTX 680 takes 35% longer, when on paper it should be 20% faster than the GTX 560 Ti (largely due to the difference in the core clock). This makes for yet another test where the GTX 680 can’t keep up with the GTX 500 series, be it due to the change in the scheduler, or perhaps the greater pressure on the still-64KB L1 cache. Regardless of the reason, it is becoming increasingly evident that NVIDIA has sacrificed compute performance to reach their efficiency targets for GK104, which is an interesting shift from a company that was so gung-ho about compute performance, and a slightly concerning sign that NVIDIA may have lost faith in the GPU Computing market for consumer applications.
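For readers who want to check the "on paper" figure, here is a minimal sketch of the arithmetic. The core counts and shader clocks below are reference-card specifications that we are assuming rather than quoting from this page, and the 2 FLOPs per core per clock figure assumes a fused multiply-add every cycle; only the FP64 ratios and the 35% result come from the text above.

```python
# Paper FP64 throughput comparison, GTX 680 vs GTX 560 Ti.
# ASSUMPTION: core counts and shader clocks are reference-card specs,
# not figures taken from this page.

def fp32_gflops(cores, shader_clock_mhz):
    """Peak FP32 rate, assuming one fused multiply-add (2 FLOPs) per core per clock."""
    return cores * shader_clock_mhz * 2 / 1000.0

def fp64_gflops(cores, shader_clock_mhz, fp64_ratio):
    """Peak FP64 rate as a fixed fraction of the FP32 rate."""
    return fp32_gflops(cores, shader_clock_mhz) * fp64_ratio

# FP64 ratios are the ones quoted in the article (1/24 and 1/12).
GTX_680 = dict(cores=1536, shader_clock_mhz=1006, fp64_ratio=1 / 24)
GTX_560_TI = dict(cores=384, shader_clock_mhz=1644, fp64_ratio=1 / 12)

gtx680_fp64 = fp64_gflops(**GTX_680)
gtx560ti_fp64 = fp64_gflops(**GTX_560_TI)

# How much faster the GTX 680 should be on paper (1.0 means a tie).
paper_advantage = gtx680_fp64 / gtx560ti_fp64

# The article measured the GTX 680 taking 35% longer, i.e. 1/1.35 of the throughput.
measured_relative = 1 / 1.35

# Fraction of the paper expectation that was actually delivered.
delivered_fraction = measured_relative / paper_advantage

if __name__ == "__main__":
    print(f"GTX 680 paper FP64:    {gtx680_fp64:.1f} GFLOPS")
    print(f"GTX 560 Ti paper FP64: {gtx560ti_fp64:.1f} GFLOPS")
    print(f"Paper advantage:       {paper_advantage:.2f}x")
    print(f"Delivered vs paper:    {delivered_fraction:.0%}")
```

Under these assumptions the paper advantage works out to a little over 1.2x, in line with the roughly 20% figure above, while the measured result lands at around 60% of that expectation.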

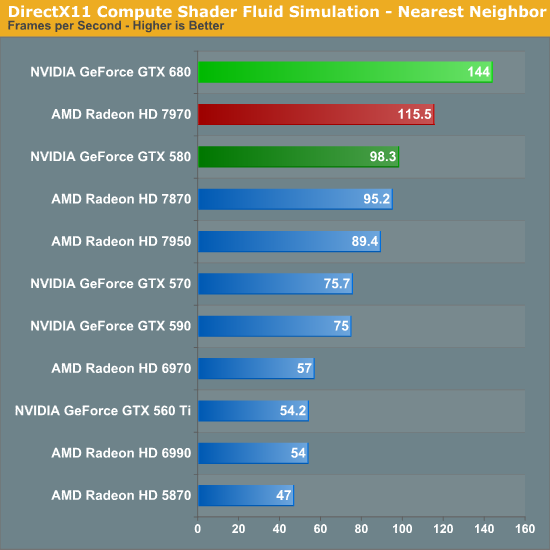

Finally, our last benchmark is once again looking at compute shader performance, this time through the Fluid simulation sample in the DirectX SDK. This program simulates the motion and interactions of a 16k particle fluid using a compute shader, with a choice of several different algorithms. In this case we’re using an O(n^2) nearest neighbor method that is optimized by using shared memory to cache data.
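To give a feel for what a brute-force nearest neighbor pass with shared memory caching looks like, here is a rough CPU-side sketch in Python. It is not the SDK sample's code: the function name, the 2D positions, the radius test and the tile size are all hypothetical choices for illustration. The outer loop over tiles stands in for a thread group loading a block of particles into shared memory once and then reusing it for every particle it is responsible for.

```python
# Illustrative sketch only; not the DirectX SDK sample.
# ASSUMPTION: count_neighbors_tiled, the 2D positions, and tile_size are
# hypothetical stand-ins for the compute shader's particle buffer and
# thread-group shared memory.

def count_neighbors_tiled(positions, radius, tile_size=64):
    """Count, for every particle, how many other particles lie within `radius`.

    Every particle is tested against every other particle, so the work is
    O(n^2). The particle list is walked one tile at a time, mirroring how a
    GPU thread group would stage a tile in shared memory and reuse it.
    """
    n = len(positions)
    radius_sq = radius * radius
    counts = [0] * n

    for tile_start in range(0, n, tile_size):
        # Stand-in for the cooperative load of one tile into shared memory.
        tile = positions[tile_start:tile_start + tile_size]

        # Each "thread" (one per particle) scans the cached tile.
        for i, (xi, yi) in enumerate(positions):
            for offset, (xj, yj) in enumerate(tile):
                if tile_start + offset == i:
                    continue  # skip the particle itself
                dx = xi - xj
                dy = yi - yj
                if dx * dx + dy * dy < radius_sq:
                    counts[i] += 1

    return counts


if __name__ == "__main__":
    sample = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (10.0, 10.0)]
    print(count_neighbors_tiled(sample, radius=1.5, tile_size=2))
```

The tile size does not change the result, only how often data would be re-fetched from slower memory on real hardware, which is why a method like this leans on the shared memory (and its cache sibling) more heavily than on raw arithmetic throughput.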

Redemption at last? In our final compute benchmark the GTX 680 finally shows that it can still succeed in some compute scenarios, taking a rather impressive lead over both the 7970 and the GTX 580. At this point it’s not particularly clear why the GTX 680 does so well here and only here, but the fact that this is a compute shader program as opposed to an OpenCL program may have something to do with it. NVIDIA needs solid compute shader performance for the games that use it; OpenCL and even CUDA performance however can take a backseat.

404 Comments

Sabresiberian - Thursday, March 22, 2012 - link

Do you work for AMD's marketing department, or are you just a fanboy with tunnel vision?

silverblue - Thursday, March 22, 2012 - link

Could be beenthere under a different name... ;)

CeriseCogburn - Thursday, March 22, 2012 - link

Youtube has settled that lie - all the "bumpgate" models have defectively designed heatsinks - end users are inserting a penny (old for copper content) above the gpu to solve the large gap while removing the laughable quarter inch thick spongepad.

It was all another lie that misplaced blame. Much like the ati chip that failed in xbox360 - never blamed on ati strangely.... (same thing bad HS design).

Arbie - Thursday, March 22, 2012 - link

IMHO the only game worth basing a purchase decision on is Crysis / Warhead. There, even the 7950 beats the GTX680, especially in the crucial area of minimum frame rate. The AMD cards also take significantly less power long-term (which is most important) and at load. They are noisier under load but not enough to matter while I'm playing.

So for me it's still AMD.

kallogan - Thursday, March 22, 2012 - link

Don't know if you can say that. Crysis is old now. No directx 11. But it's true the GTX 680 does not particularly shine in heavy games like Metro 2033 or Crysis warhead compared to other games that may be more Nvidia optimised like BF3.

CeriseCogburn - Tuesday, March 27, 2012 - link

Except in the most punishing benchmark Shogun 2: Total War, the GTX680 by Nvidia spanks the 7970 and wins at all 3 resolutions !*

*

Can we get a big fanboy applause for the 7970 not doing well at all in very punishing high quality games comparing to the GTX680 ?

Sabresiberian - Thursday, March 22, 2012 - link

The key phrase you use here is "where it matters to me". I wouldn't argue with that at all - your decision is clearly the right one for your gaming tastes.

That being said, you change your wording a bit, and it seems to me to imply (softening it "IMHO") that everyone should choose by your standards; that is also clearly wrong. The games I play are World of Warcraft, and Skyrim. WoW test results can be best compared to BF3, of those benches that were used in this article. I've never played Crysis past a demo - so choosing based on that benchmark would be shooting myself in the proverbial foot.

Clearly, the GTX 680 is the better choice for me.

I've always said, choose your hardware by application, not by overall results (unless, of course, overall results matches your application cross-section :) ), and the benches in this article are more data to back up that recommendation.

;)

3DoubleD - Thursday, March 22, 2012 - link

Please don't buy a GTX 680 for WoW...

It's even overkill for Skyrim, since you don't really need much more than 30 fps. You'd be fine using more economical variants.

CeriseCogburn - Thursday, March 22, 2012 - link

Wrong, but enjoy your XFX amd D double D.

The cards, all of them, are not good enough yet.

Always turning down settings and begging the vsync.

They all fail our current games and single monitor resolutions.

Iketh - Thursday, March 22, 2012 - link

for pvp you most certainly do need more than 30 FPS, try 60 at the least and 75 as ideal with a 120hz monitor... the more FPS I can get, the better I perform... your statement is true for raiding only