The Radeon HD 7970 Reprise: PCIe Bandwidth, Overclocking, & The State Of Anti-Aliasing

by Ryan Smith on January 27, 2012 4:30 PM EST

Posted in: GPUs, AMD, Radeon, Radeon HD 7000

Overclocking Revisited

While we’ve already looked at overclocking the Radeon HD 7970 in our review of XFX’s Radeon HD 7970 Black Edition Double Dissipation, that was with XFX’s custom-cooled card. We’ve had a number of requests for overclocking performance on our reference card, so we’ve gone ahead and done that.

In the meantime though a couple of interesting facts have come to light. While both our reference card and our XFX card ran at 1.175v, it turns out that this is not the only voltage the 7970 ships at. Retail buyers have reported receiving cards that run at 1.112v and 1.05v, and Alexey Nicolaychuk (aka Unwinder), the author of MSI Afterburner, has discovered that there’s a 4th voltage according to the fuses on Tahiti: 1.025v. That voltage has not been seen in any retail cards so far, and it’s most likely a bin that’s reserved for future products (e.g. the eventual 7990). Of the remaining 3 voltages, 1.175v appears to be the most common.

Radeon HD 7900 Series Voltages

| Ref 7970 Load | Ref 7970 Idle | XFX 7970 Black Edition DD |
| --- | --- | --- |
| 1.17v | 0.85v | 1.17v |

In any case, in overclocking our reference 7970 we’ve found that the results are very close to our XFX 7970. Whereas our XFX 7970 could hit 1125MHz without overvolting, our reference 7970 topped out at a flat 1100MHz. Meanwhile our memory speeds reached 6.3GHz before performance began to dip, which was the same point we reached on the XFX 7970 and not at all surprising since both boards use the same PCB.

Overall this represents a 175MHz (roughly 19%) core overclock and an 800MHz (15%) memory overclock over the stock clocks of our reference 7970. As we’ll see, since this is being done without overvolting, the power consumption hit (and all consequences thereof) is minimal to non-existent, making this a rather sizable free overclock.
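As a quick sanity check on those percentages, the arithmetic can be sketched in a few lines of Python. The stock clocks used here (925MHz core, 5.5GHz effective memory) are not quoted in this section; they are inferred by subtracting the stated overclocks from the final clocks, and the function and constant names are purely illustrative.

```python
def overclock_gain(stock_mhz, overclocked_mhz):
    """Return (absolute gain in MHz, relative gain in percent)."""
    delta = overclocked_mhz - stock_mhz
    return delta, 100.0 * delta / stock_mhz


# Assumption: stock clocks inferred from the article's own figures
# (1100MHz - 175MHz for the core, 6.3GHz - 800MHz for the memory).
STOCK_CORE_MHZ = 925
STOCK_MEM_MHZ = 5500

for label, stock, overclocked in [
    ("core, reference card", STOCK_CORE_MHZ, 1100),
    ("core, conservative", STOCK_CORE_MHZ, 1050),
    ("memory", STOCK_MEM_MHZ, 6300),
]:
    delta, pct = overclock_gain(stock, overclocked)
    print(f"{label}: +{delta}MHz ({pct:.1f}%)")
```

Under those assumed stock clocks, the reference card's core gain works out to just under 19% and the memory gain to about 14.5%.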

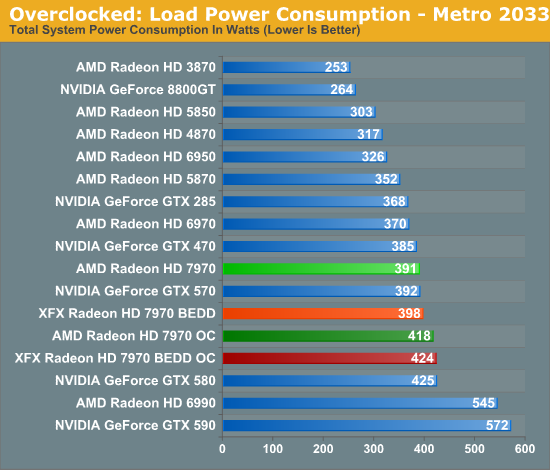

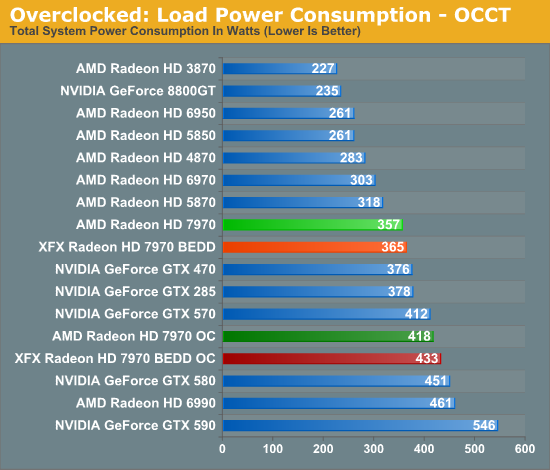

Taking a quick look at power/temp/noise, we see that the increase in load power is consistent with our earlier results with the XFX 7970. Under Metro the power increase is largely the CPU ramping up to feed the now-faster 7970, while under OCCT we’re seeing the consequence of increasing the PowerTune limit so that we don’t inadvertently throttle performance when gaming.

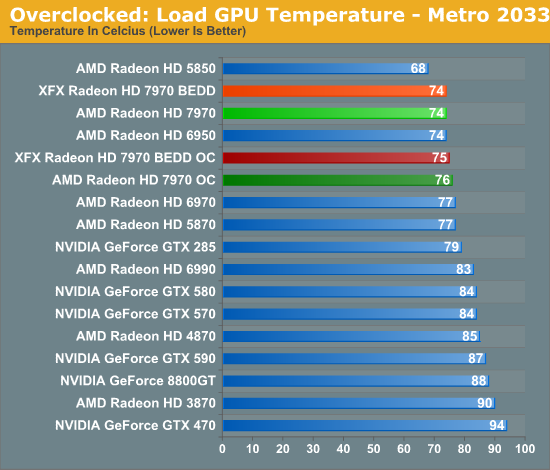

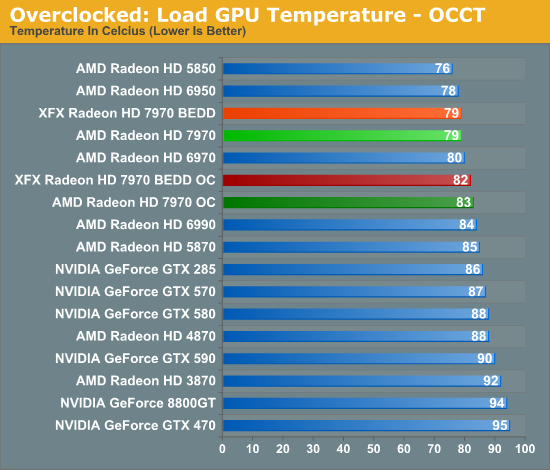

With almost no increase in GPU power consumption while gaming, temperatures barely move: gaming temperatures rise by only 2C, while under OCCT the difference is 4C.

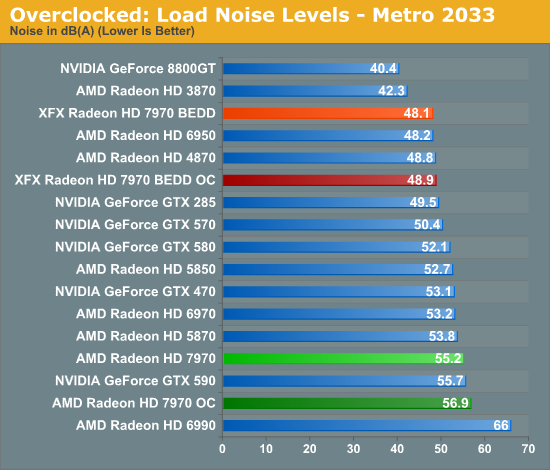

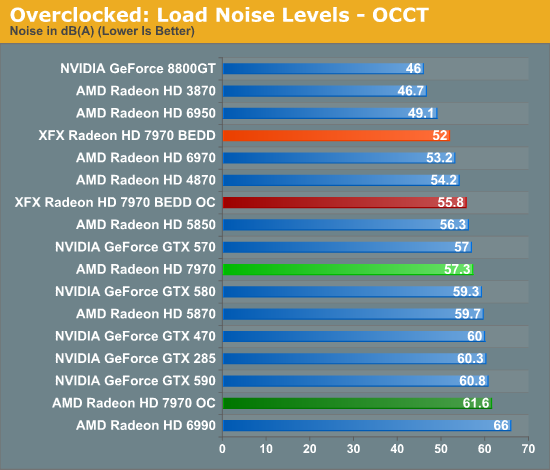

Finally, as with temperature, noise only ever so slightly creeps up. Unfortunately for most users, AMD’s already aggressive fan profile makes this relatively loud card just a bit louder yet; the temperatures are fantastic, but the noise less so. Things look even worse under OCCT, but again this is a pathological test with PowerTune increased to 300W.

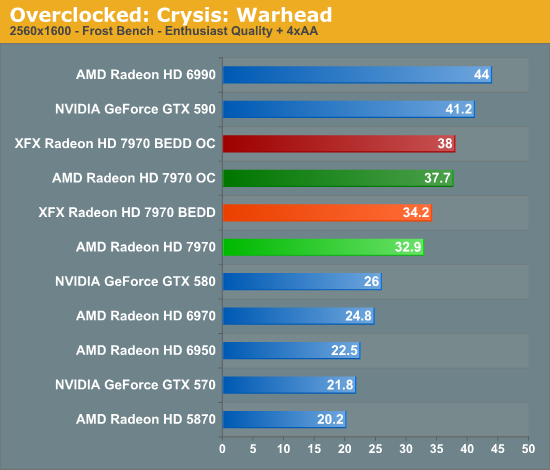

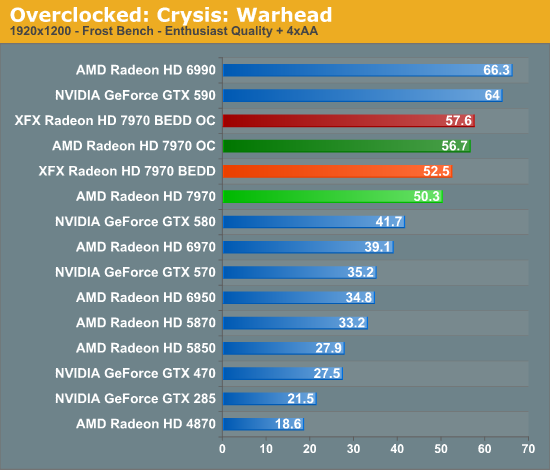

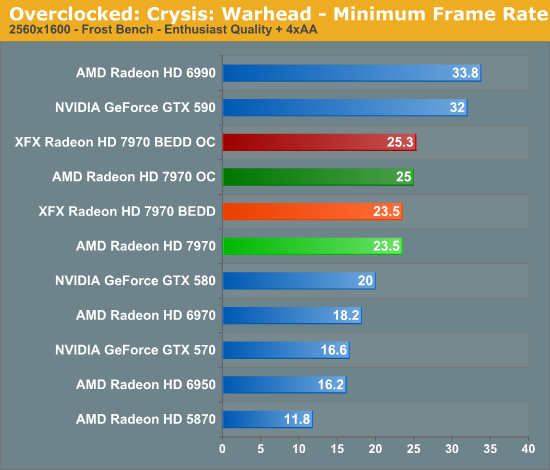

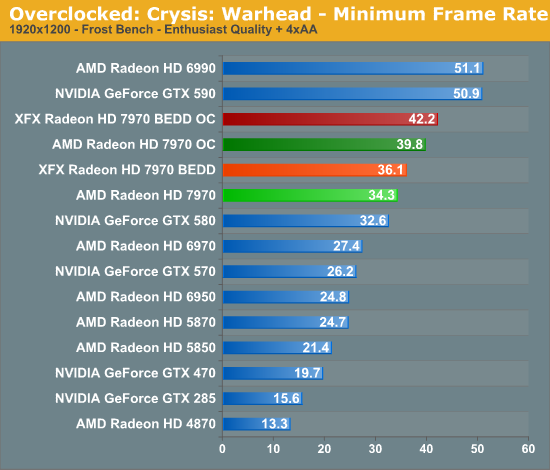

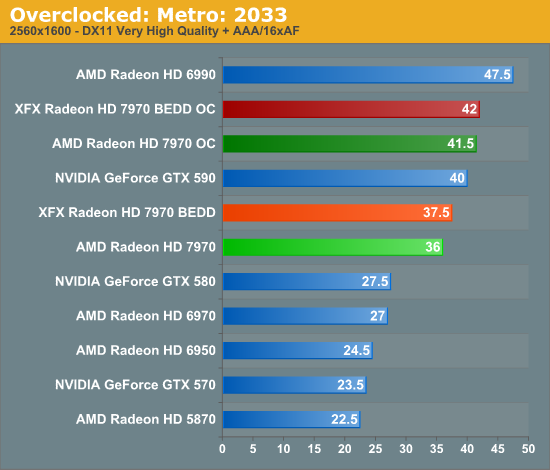

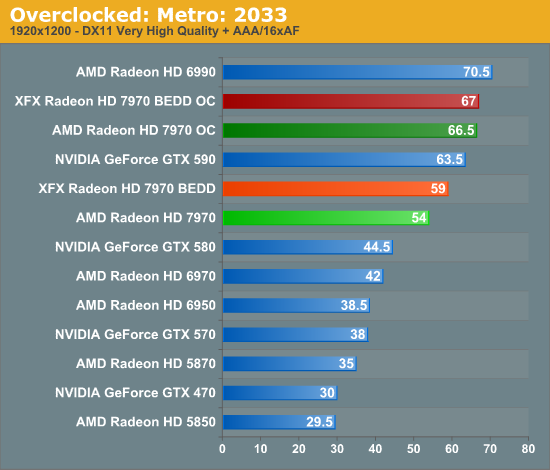

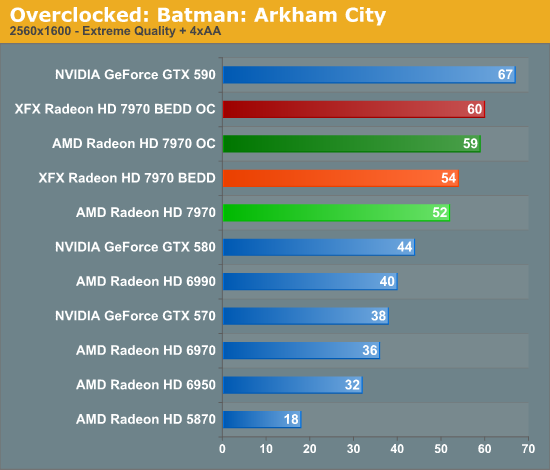

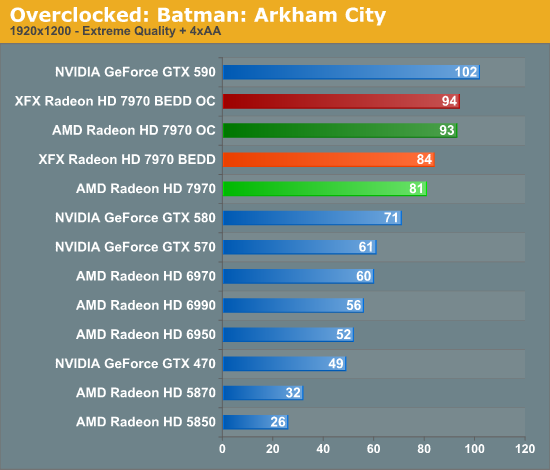

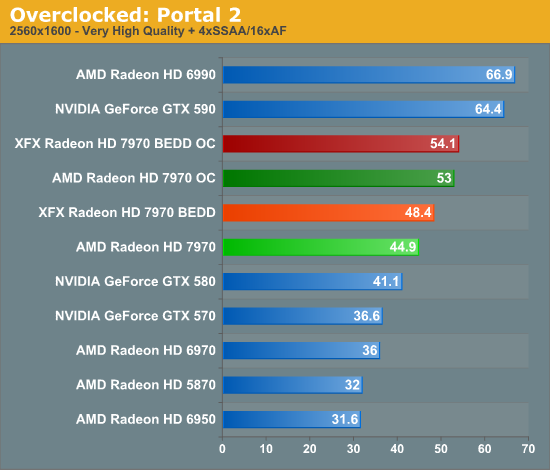

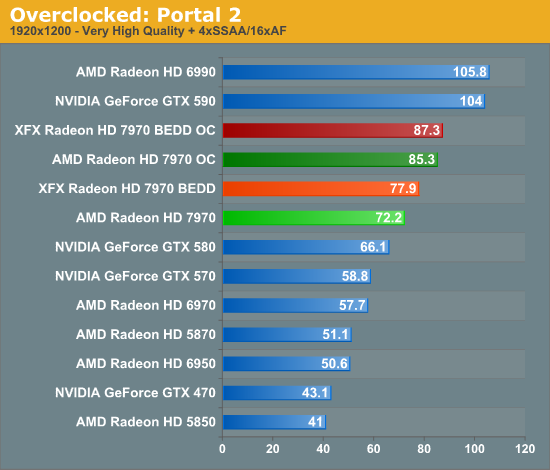

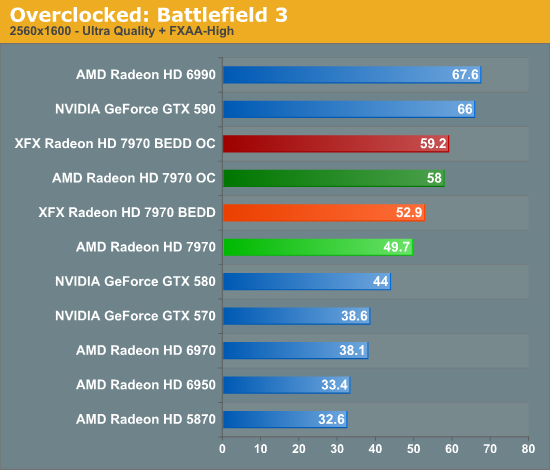

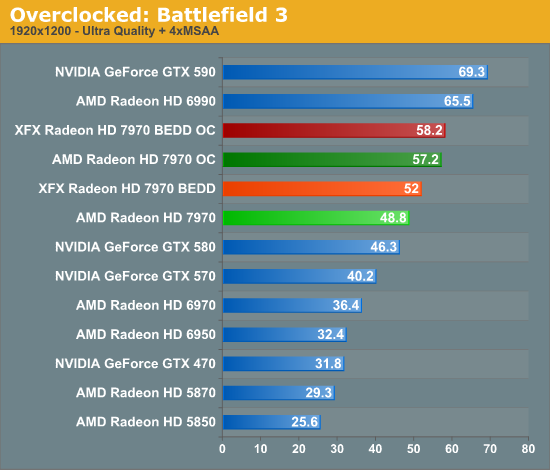

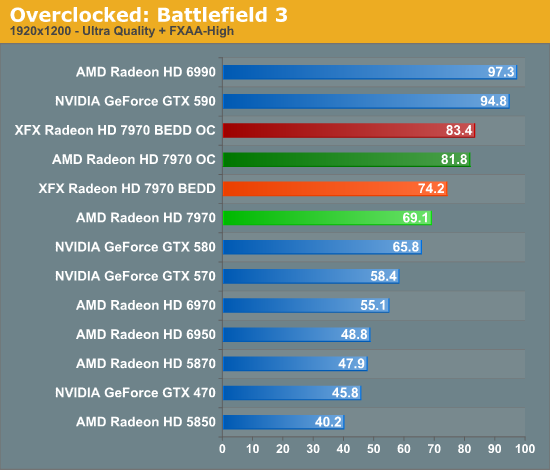

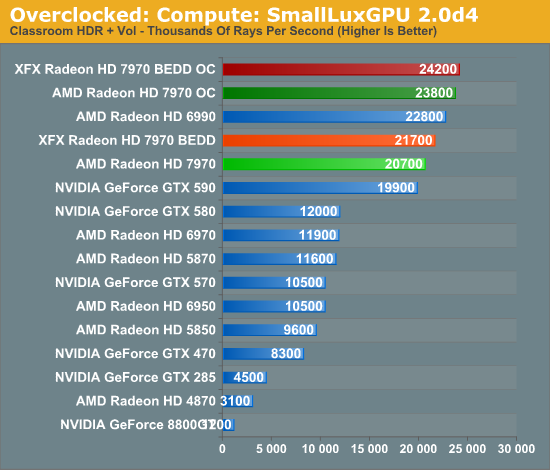

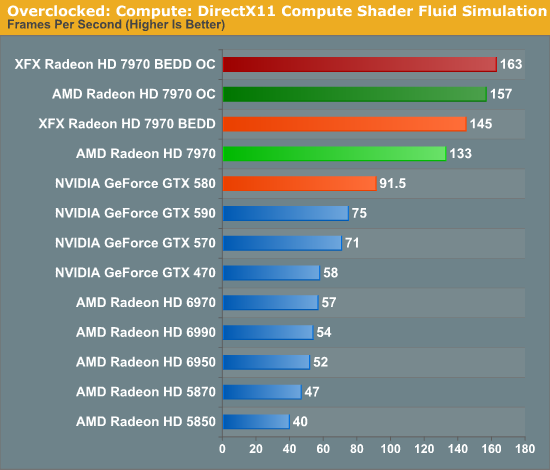

Meanwhile gaming performance is where you’d expect it to be for a card with this degree of an overclock. As most tests scale very well with the increase in the core clock, virtually all of our games are 15-20% faster than on a reference 7970. Compared to the XFX 7970, at 98% of the XFX 7970’s overclock, our overclocked reference 7970 is only a couple percent behind the XFX card and barely outside the margin of error of most tests.

All things considered, outside of warranty restrictions there seems to be very little reason not to overclock the 7970 on its default voltage. Even a conservative overclock of 1050MHz would add 13% to the core clocks (and as a result to performance in virtually all GPU-limited scenarios), which is a big enough leap in performance to justify spending the time setting up and testing the overclock. By not raising the core voltage there’s effectively no power/noise tradeoff, and this seems to be achievable by virtually every 7970, making this a freebie overclock the likes of which we’re more accustomed to seeing on high-end CPUs than on flagship GPUs.

47 Comments


evilspoons - Friday, January 27, 2012

MSI is working on that already :) http://www.anandtech.com/show/5352/msis-gus-ii-ext...

tipoo - Friday, January 27, 2012

Yeah, that looks sweet... Now for non-Mac laptops to get Thunderbolt. I think some Sonys already have it, but Ivy Bridge laptops for sure.

repoman27 - Saturday, January 28, 2012

TB controllers have a PCIe 2.0 x4 back end, but the protocol adapter can only pump at 10Gbps, so Thunderbolt devices essentially share the equivalent of 2.5 lanes of PCIe 2.0. I was hoping that PCIe 3.0 x1 performance would be tested as well, since that would show bottlenecking very similar to what could be expected from a Thunderbolt-connected GPU.

Torrijos - Sunday, January 29, 2012

I was wondering this too... Is there any word on Thunderbolt being adapted to newer versions of PCIe?

The first release uses PCIe 2; are we going to see (with Ivy Bridge) TB using PCIe 3, with more than an effective doubling of bandwidth (since PCIe 3 reduced the overhead)?

All of a sudden we would end up closer to external graphics in docking stations (or directly with large high-res displays) for ultra-light laptops.

DanNeely - Sunday, January 29, 2012

We'd see less than a doubling of bandwidth if TB2.0 just went from PCIe2.0 to 3.0 clocks, because TB already incorporates a high-efficiency encoding like 3.0 does. That's why a TB1.0 connection can carry 2.5x PCIe 2.0 lanes of data over a channel whose raw capacity is only 2 lanes wide.

tynopik - Friday, January 27, 2012

If your main conclusion is that x8 3.0 is plenty for crossfire, shouldn't you, you know, ACTUALLY TEST crossfire at x8 3.0?

bumble12 - Friday, January 27, 2012

First sentence of the second paragraph of the first page: "Next week we’ll be taking a look at CrossFire performance and the performance of AMD’s first driver update."

Guspaz - Friday, January 27, 2012

I don't really understand why dumb SSAA would be so hard to implement in a game-independent, API-independent, renderer-independent fashion. The driver can simply present a larger framebuffer to the game (say, 3840x2160 for a 1080p game) and as a final step before swapping the buffer, average the pixel values in 2x2 blocks, supersampling down to the target resolution.

I mean, this is how antialiasing used to work in the days before MSAA, and while there's a big performance penalty there, it has the virtue of working in any scenario, on any content or geometry.
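The approach described in the comment above boils down to a box filter: render at twice the width and height, then average each 2x2 block into one output pixel. A minimal sketch of that final averaging step, assuming a grayscale framebuffer stored as a list of rows; `downsample_2x2` is a hypothetical helper for illustration, not any driver's actual resolve path:

```python
def downsample_2x2(pixels):
    """Average each 2x2 block of a grayscale image given as a list of rows.

    The input is assumed to have been rendered at twice the target width
    and height, so both dimensions must be even.
    """
    height = len(pixels)
    width = len(pixels[0]) if height else 0
    if height % 2 or width % 2:
        raise ValueError("dimensions must be even for 2x2 supersampling")

    out = []
    for y in range(0, height, 2):
        row = []
        for x in range(0, width, 2):
            # Sum the four supersampled values that map to one output pixel.
            total = (pixels[y][x] + pixels[y][x + 1]
                     + pixels[y + 1][x] + pixels[y + 1][x + 1])
            row.append(total / 4)
        out.append(row)
    return out


# A hard black/white checker edge resolves to mid-grey; flat areas are unchanged.
print(downsample_2x2([[0, 255, 10, 10],
                      [255, 0, 10, 10]]))
```

The sketch covers only the resolve step. The rendering cost of producing four times as many pixels in the first place is where the performance penalty mentioned in the comment comes from.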

ItsDerekDude - Friday, January 27, 2012

Here it is! http://demo.ovh.com/download/37a53453c137425e584a1...

chizow - Friday, January 27, 2012

So PCIe 4GB/s (2.0 x8 or 3.0 x4) is where high-end cards start dropping off and showing noticeable differences in performance. That is definitely going to be the big advantage IVB brings to the mainstream as you'll be able to get 8GB/s in an x8/x8 config with PCIe 3.0 cards.

It'd be interesting if you could do a comparison at some point on the impact of VRAM and bandwidth and PCIe bus speeds. An ideal candidate would be a card that has 2xVRAM variants like a GTX 580 or 6970 that's still fast enough to make things interesting.

Also interesting discussion on the MSAA situation. That helps explain why enabling MSAA has caused VRAM amounts to balloon incredibly in recent games, like BF3, Skyrim, Crysis etc. That extra G-buffer with all that geometry data. Is this what Nvidia was doing in the past with their AA override compatibility bits? Telling their driver to store intermediate buffers for MSAA? Also, wasn't DX10.1/11 supposed to help with this with the ability to read back the multisample depth buffer?

In any case, I for one welcome FXAA. While it does have a blurring effect, the AA it provides with virtually no loss in performance is amazing. It allows me to run much lower levels of AA (4xMSAA + 4xTSAA max, or even 2x+2x) in conjunction with FXAA to achieve better overall AA at the expense of slight blurring. MSAA+TSAA+FXAA provides similar full-scene AA results as the much more performance expensive SGSSAA for me.
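The bandwidth figures traded in the comments above (4GB/s for PCIe 2.0 x8 or 3.0 x4, roughly 8GB/s for PCIe 3.0 x8, and Thunderbolt's 10Gbps being worth 2.5 PCIe 2.0 lanes) follow from each generation's transfer rate and line encoding. A rough sketch, assuming the commonly cited per-lane rates of 5GT/s with 8b/10b encoding for PCIe 2.0 and 8GT/s with 128b/130b encoding for PCIe 3.0, and ignoring packet and protocol overhead; the function names are illustrative only:

```python
# Per-lane transfer rate (GT/s) and line-code efficiency for each generation.
PCIE_GENERATIONS = {
    "2.0": (5.0, 8 / 10),     # 8b/10b encoding
    "3.0": (8.0, 128 / 130),  # 128b/130b encoding
}


def lane_gbps(gen):
    """Usable bandwidth of one lane, per direction, in Gbps."""
    rate_gt_s, efficiency = PCIE_GENERATIONS[gen]
    return rate_gt_s * efficiency


def link_gbytes_per_s(gen, lanes):
    """Usable bandwidth of a link, per direction, in GB/s."""
    return lane_gbps(gen) * lanes / 8


def equivalent_lanes(payload_gbps, gen):
    """How many lanes of the given generation a payload rate corresponds to."""
    return payload_gbps / lane_gbps(gen)


for gen, lanes in [("2.0", 8), ("3.0", 4), ("3.0", 8)]:
    print(f"PCIe {gen} x{lanes}: {link_gbytes_per_s(gen, lanes):.2f} GB/s")

print(f"10Gbps is about {equivalent_lanes(10, '2.0'):.1f} PCIe 2.0 lanes")
```

Under these assumptions PCIe 3.0 x4 lands slightly below the 4GB/s of PCIe 2.0 x8, which is why the two configurations are treated as equivalent.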