The Radeon HD 7970 Reprise: PCIe Bandwidth, Overclocking, & The State Of Anti-Aliasing

by Ryan Smith on January 27, 2012 4:30 PM EST

Improving the State of Anti-Aliasing: Leo Makes MSAA Happen, SSAA Takes It Up A Notch

As you may recall from our initial review of the 7970, I expressed my bewilderment that AMD had not implemented Adaptive Anti-Aliasing (AAA) and Super Sample Anti-Aliasing (SSAA) for DX10+ in the Radeon HD 7000 series. There has never been a long-term AA mode gap in recent history, and it was AMD who made DX9 SSAA popular again in the first place when they made it a front-and-center feature of the Radeon HD 5000 series. AMD’s response at the time was that they preferred to find a way to have games implement these AA modes natively, which is not an unreasonable position, but an unfortunate one given how hard it is just to get game developers to implement MSAA these days.

So it was with a great deal of surprise and glee that we found AMD had added an early version of AAA and SSAA support for DX10+ games in their first driver update, released last week. Given their earlier response this was unexpected, and in retrospect either AMD was already working on this at the time (and not ready to announce it), or they’ve managed to do a lot of work in a very short period of time.

As it stands, AMD’s DX10+ AAA/SSAA implementation is still a work in progress, and it will only work on games that natively support MSAA. Given the way the DX10+ rendering pipeline works, this is a practical compromise as it’s generally much easier to implement SSAA after the legwork has already been done to get MSAA working.

We haven’t had a lot of time to play with the new drivers, but in our testing AAA/SSAA do indeed work. A quick check with Crysis: Warhead finds that AMD’s DX10+ SSAA implementation is correctly resolving transparency aliasing and shader aliasing as it should be.

Crysis: Warhead SSAA: Transparent Texture Anti-Aliasing

Crysis: Warhead SSAA: Shader Anti-Aliasing

Of course, if DX10+ SSAA only works with games that already implement MSAA, what does this mean for future games? As we alluded to earlier, built-in MSAA support is not quite prevalent across modern games, and the DX10+ pipeline makes forcing it from the driver side a tricky endeavor at best. One of the biggest technical culprits (as opposed to quickly ported console games) is the increasing use of deferred rendering, which makes MSAA more difficult for developers to implement.

In short, in a traditional (forward) renderer, the rendering process is rather straightforward and geometry data is preserved until the frame is done rendering. And while this normally is all well and good, the one big pitfall of a forward renderer is that complex lighting is very expensive to run because you don’t know precisely which lights will hit which geometry, resulting in the lighting equivalent of overdraw where objects are rendered multiple times to handle all of the lights.

In deferred rendering however, the rendering process is modified, most fundamentally by breaking it down into several additional parts and using an additional intermediate buffer (the G-Buffer) to store the results. Ultimately through deferred rendering it’s possible to decouple lighting from geometry such that the lighting isn’t handled until after the geometry is thrown away, which reduces the amount of time spent calculating lighting as only the visible scene is lit.
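To put rough numbers on that difference, here is a back-of-the-envelope sketch in Python. The function names, the overdraw factor, and the light count are illustrative assumptions of ours, not figures from AMD or any particular engine, and the model ignores everything except the number of per-light shading evaluations.

```python
def forward_light_evals(width, height, overdraw, num_lights):
    """Naive forward renderer: every rasterized fragment is lit by every light."""
    fragments = int(width * height * overdraw)
    return fragments * num_lights


def deferred_light_evals(width, height, num_lights):
    """Basic deferred renderer: only the one visible surface per pixel is lit."""
    return width * height * num_lights


# Hypothetical scene: 1920x1080, average overdraw of 3, 32 lights.
forward = forward_light_evals(1920, 1080, 3, 32)
deferred = deferred_light_evals(1920, 1080, 32)
print(f"forward: {forward:,}  deferred: {deferred:,}  ratio: {forward / deferred:.1f}x")
```

In this simplified model the gap between the two is exactly the overdraw factor, which is why the savings grow with scene complexity.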

The downside to this however is that in its most basic implementation deferred rendering makes MSAA impossible (since the geometry has been thrown out), and it’s difficult to efficiently light complex materials. The MSAA problem in particular can be solved by modifying the algorithm to save the geometry data for later use (a deferred geometry pass), but the consequence is that MSAA implemented in such a manner is more expensive than usual both due to the amount of memory the saved geometry consumes and the extra work required to perform the extra sampling.
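The memory side of that cost can be estimated with simple arithmetic. The sketch below assumes the saved data takes the form of a G-buffer that stores one entry per MSAA sample, which is one common approach but not necessarily what any given engine does. The render target count and bytes per texel are hypothetical values chosen for illustration.

```python
def gbuffer_bytes(width, height, num_targets, bytes_per_texel, samples=1):
    """Approximate size of a G-buffer that stores `samples` entries per pixel."""
    return width * height * num_targets * bytes_per_texel * samples


MIB = 1024 * 1024

# Hypothetical layout: four render targets at 4 bytes per texel, 1920x1080.
single = gbuffer_bytes(1920, 1080, 4, 4)
msaa4 = gbuffer_bytes(1920, 1080, 4, 4, samples=4)
print(f"{single / MIB:.1f} MiB without MSAA, {msaa4 / MIB:.1f} MiB at 4x MSAA")
```

Under these assumptions the footprint scales linearly with the sample count, before counting the extra shading work needed to light the additional samples.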

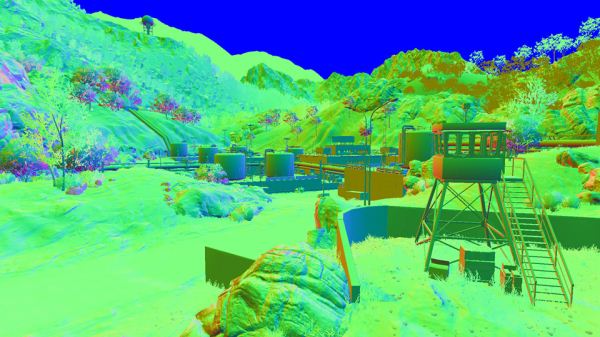

Battlefield 3 G-Buffer. Image courtesy of DICE

For this reason developers have quickly been adopting post-process AA methods, primarily NVIDIA’s Fast Approximate Anti-Aliasing (FXAA). Similar in execution to AMD’s driver-based Morphological Anti-Aliasing, FXAA works on the fully rendered image and attempts to look for aliasing and blur it out. The results generally aren’t as good as MSAA (and especially not SSAA), but it’s very quick to implement (it’s just a shader program) and has a very small performance hit. Compared to the difficulty of implementing MSAA on a deferred renderer, this is faster and cheaper, and it’s why MSAA support for DX10+ games is anything but universal.
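The general idea of a post-process filter can be shown with a toy example. To be clear, this is not the FXAA algorithm, which is considerably more sophisticated. It is a minimal sketch of "find high-contrast pixels in the finished image and blur them", written in plain Python, with the function names and the contrast threshold being our own assumptions.

```python
def luma(pixel):
    """Approximate brightness of an (r, g, b) pixel with components in 0..1."""
    r, g, b = pixel
    return 0.299 * r + 0.587 * g + 0.114 * b


def post_process_aa(image, threshold=0.1):
    """Blend high-contrast pixels with their 4 neighbours; leave flat areas alone.

    `image` is a list of rows of (r, g, b) tuples. Returns a new image.
    Border pixels are copied through unchanged to keep the sketch short.
    """
    height, width = len(image), len(image[0])
    out = [row[:] for row in image]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            neighbours = [image[y - 1][x], image[y + 1][x],
                          image[y][x - 1], image[y][x + 1]]
            samples = neighbours + [image[y][x]]
            lumas = [luma(p) for p in samples]
            if max(lumas) - min(lumas) < threshold:
                continue  # low local contrast: treat as not aliased
            # Average the centre pixel with its neighbours to soften the edge.
            out[y][x] = tuple(sum(p[c] for p in samples) / 5 for c in range(3))
    return out


# A hard vertical black/white edge gets softened; flat regions are untouched.
black, white = (0.0, 0.0, 0.0), (1.0, 1.0, 1.0)
edge = [[black, black, white, white] for _ in range(4)]
smoothed = post_process_aa(edge)
print(smoothed[1])
```

Because the filter only ever sees the final image, it needs no cooperation from the renderer, which is what makes this class of technique so easy to drop into a deferred engine.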

But what if there was a way to have a forward renderer with performance similar to that of a deferred renderer? That’s what AMD is proposing with one of their key tech demos for the 7000 series: Leo. Leo showcases AMD’s solution to the forward rendering lighting performance problem, which is to use a compute shader to implement light culling. The compute shader identifies ahead of time which tiles any specific light will hit, and using that information only the relevant lights are computed on any given tile. The overhead for lighting is still greater than pure deferred rendering (there’s still some unnecessary lighting going on), but as proposed by AMD, it should make complex lighting cheap enough that it can be done in a forward renderer.
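The culling step can be sketched on the CPU to show the data it produces. This is not AMD’s implementation: Leo does this in a compute shader, and a real version would also test lights against each tile’s depth range. Here lights are simplified to screen-space circles, and the `Light` class, the `cull_lights` function, and the 16-pixel tile size are all assumptions made for illustration.

```python
from dataclasses import dataclass

TILE = 16  # tile size in pixels (an assumed value)


@dataclass
class Light:
    x: float       # screen-space centre, in pixels
    y: float
    radius: float  # screen-space radius of influence, in pixels


def cull_lights(lights, width, height, tile=TILE):
    """Return {(tile_x, tile_y): [light indices]} for every tile on screen."""
    tiles_x = (width + tile - 1) // tile
    tiles_y = (height + tile - 1) // tile
    tile_lights = {(tx, ty): [] for ty in range(tiles_y) for tx in range(tiles_x)}
    for index, light in enumerate(lights):
        # Range of tiles covered by the light's bounding box, clamped to the screen.
        x0 = max(int((light.x - light.radius) // tile), 0)
        x1 = min(int((light.x + light.radius) // tile), tiles_x - 1)
        y0 = max(int((light.y - light.radius) // tile), 0)
        y1 = min(int((light.y + light.radius) // tile), tiles_y - 1)
        for ty in range(y0, y1 + 1):
            for tx in range(x0, x1 + 1):
                # Circle-vs-rectangle test: distance to the nearest point in the tile.
                nearest_x = min(max(light.x, tx * tile), (tx + 1) * tile)
                nearest_y = min(max(light.y, ty * tile), (ty + 1) * tile)
                dx, dy = light.x - nearest_x, light.y - nearest_y
                if dx * dx + dy * dy <= light.radius ** 2:
                    tile_lights[(tx, ty)].append(index)
    return tile_lights


# Two hypothetical lights on a 64x64 screen (a 4x4 grid of tiles).
lights = [Light(8, 8, 4), Light(32, 32, 20)]
tile_lights = cull_lights(lights, 64, 64)
print(tile_lights[(0, 0)], tile_lights[(1, 1)], tile_lights[(3, 3)])
```

When shading, each pixel would then loop only over the list for its own tile rather than over every light in the scene. Since the lists are built per tile rather than per pixel, some pixels still evaluate lights that never reach them, which is the residual overhead mentioned above.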

As AMD puts it, the advantages are twofold. The first advantage of course is that MSAA (and SSAA) compatibility is maintained, as this is still a forward renderer; the use of the compute shader doesn’t have any impact on the AA process. The second advantage relates to lighting itself: as we mentioned previously, deferred rendering doesn’t work well with complex materials. Forward rendering, on the other hand, handles complex materials well; it just wasn’t fast enough until now.

Leo in turn executes on both of these concepts. Anti-aliasing is of course well represented through the use of 4x MSAA, but so are complex materials. AMD’s theme for Leo is stop motion animation, so a number of different material types are directly lit, including fabric, plastic, cardboard, and skin. The total of these parts may not be the most jaw-dropping thing you’ve ever seen, but the fact that it’s being done in a forward renderer is amazingly impressive. And if this means we can have good lighting and excellent support for real anti-aliasing, we’re all for it.

Unfortunately it’s still not clear at this time when 7970 owners will be able to get their hands on the demo. The version released to the press is still a pre-final version (version number 0.9), so presumably AMD’s demo team is still hammering out the demo before releasing it to the public.

Update: AMD has posted the Leo demo, along with their Ptex demo, over at AMD Developer Central. It should work with any DX11 card, though a quick check has it failing on NVIDIA cards.

47 Comments

dac7nco - Friday, January 27, 2012 - link

I was wondering when we'd start seeing bandwidth restrictions from 2.0 x8; Looks like Ivy Bridge will be a better than anticipated upgrade for those Z68 boards with 3.0 slots.

Daimon

OblivionLord - Friday, January 27, 2012 - link

You'll see the limitation on the current 5970, 590, 6990, Mars2 when used on 8x and 16x 2.0. You won't see any limitation on 3.0 8x and 16x with current cards.

If I had to guess then I'd say that in 2 years the highend videocards at that time will be powerful enough to finally show a limitation on 16x 2.0 and 8x 3.0, but not 16x 3.0

dragonsqrrl - Saturday, January 28, 2012 - link

So you'll be limited by 16x 2.0, but not 8x 3.0? How does that work exactly?

Revdarian - Saturday, January 28, 2012 - link

I think, and might be mistaken, that he refers to using those dual gpu cards on multiple card solutions.

In that case, well yeah, 8x and 16x 2.0 would be halve the bandwidth of the same setup with 3.0 (this is for cuadruple gpu solutions, a niche market)

Revdarian - Saturday, January 28, 2012 - link

*half even... meh grammar nazi-ing my own post hehehe

Termie - Friday, January 27, 2012 - link

Just as a counterpoint, Techspot just did an article on overclocking, and found that several mid-range cards hit around 15-17% overclock (and I believe this is on stock voltage). Link: http://www.techspot.com/review/486-graphics-card-o...

You may want to note that what's unique about the 7970 is not that it can get up to an 18% overclock on stock volts, but that it is a top-end card that has 18% headroom. The 6970 had nothing close to that as Techspot found, for instance, so with the more expensive 7970, the headroom should be factored into the cost equation - the price premium for top-end cards rarely come with this bonus.

As an example, the HD5850, which was introduced as a high mid-range card, typically could reach a 17% overclock at stock volts (both of mine do), and the GTX460 was similar in this regard. That's why they were such value cards. But there's nothing entirely new about this kind of overclocking headroom at stock volts - it's not reserved only for CPUs, as you suggested.

Ryan Smith - Friday, January 27, 2012 - link

To clarify things, the point I was attempting to make was in reference to high end cards - the 580, 6970, 5870, and the like. Mid-range cards have traditionally overclocked better because there's plenty of thermal and power headroom to work with, which is consistent with Techspot's findings. In any case I've slightly edited the article to clarify this point.

darkswordsman17 - Friday, January 27, 2012 - link

I think people will be disappointed in the overclocking part of this article, namely that you didn't do any voltage adjustments. I think people were wanting to see where the sweet spot for voltage is (best overclock without going too high, how increased voltage affects heat and power), like you often do with CPUs.

On the flipside, I would have liked to see about undervolting. I saw someone mention that they had dropped voltage and were able to maintain clocks which cut the power consumption by a fair margin with no loss in performance.

Ryan Smith - Friday, January 27, 2012 - link

Considering that this is a reference card, I consider overclocking without voltage adjustment to be far more important. The 7970 is not an overengineered card like the 6990/5970 that was specifically built to be overvolted. It should be possible to give it some more voltage, but given the lack of design headroom in the power circuitry and the cooler, what you can achieve on stock voltage is much more important since it's all "free" performance.

Termie - Saturday, January 28, 2012 - link

Ryan - as usual, thanks so much for being responsive to feedback. And thanks for putting this article together - very informative. That PCIe scaling analysis will be referenced for years to come, in my opinion.

By the way, I agree that stock voltage overclocking is something worthy of being explored. It is a totally separate beast from overvolted overclocking, which not everyone has the skill or knowledge to do. The promise of higher performance and essentially no risk of hardware damage is truly a freebie, as you noted.