AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Posted in: GPUs, AMD, Radeon, ATI, Radeon HD 7000

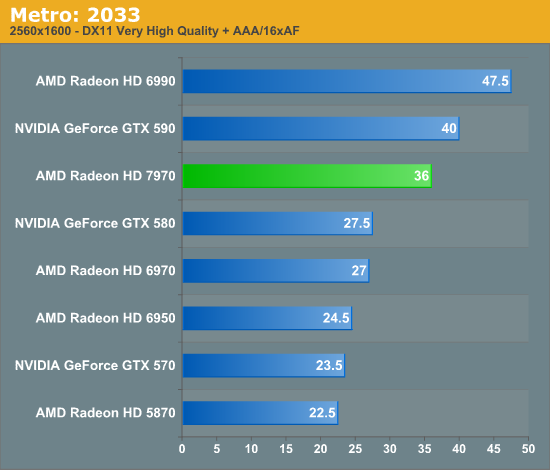

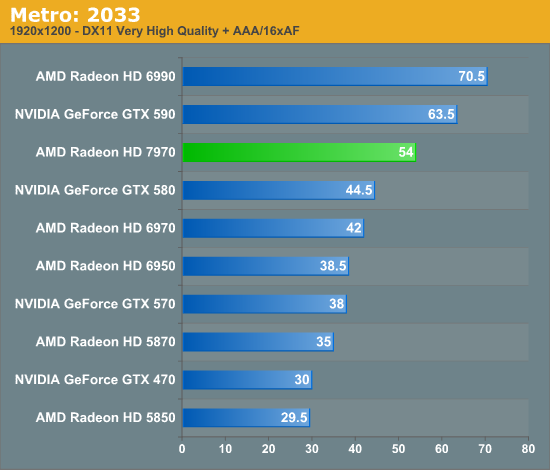

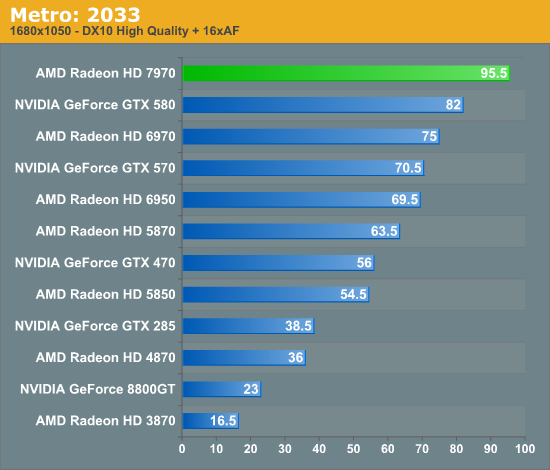

Metro: 2033

Paired with Crysis as our second behemoth FPS is Metro: 2033. Metro gives up Crysis’ lush tropics and frozen wastelands for an underground experience, but even underground it can be quite brutal on GPUs, which is why it’s also our new benchmark of choice for looking at power, temperature, and noise during a game. If its sequel, due this year, is anywhere near as GPU intensive, then a single GPU may not be enough to run the game with every quality feature turned up.

As often as we concern ourselves with Crysis and 60fps, with Metro it’s a struggle just to break 30fps. The 7970 becomes the first video card that can accomplish this at 2560, though it’s going to need quite a bit more performance to run Metro fluidly from start to end.

Compared to the GTX 580, the 7970’s lead in Metro is similar to what we saw in Crysis, but the scaling with resolution is even more pronounced. At 2560 it’s ahead by 30%, while at 1920 this drops to 21%, and finally at 1680 it’s only 16%. Meanwhile, compared to the 6970 the response is much flatter: 33% at 2560, 28% at 1920, and 27% at 1680. From this data it’s becoming increasingly evident that the value proposition of the 7970 is going to hinge not only on what you consider its competitors to be, but also on what resolutions you would use it at.
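To make the difference in scaling concrete, the short sketch below takes only the percentage leads quoted above and works out how far each lead shrinks between 2560 and 1680. The names `LEADS` and `lead_spread` are illustrative choices of ours, not part of any benchmark tool, and no frame rates are assumed beyond the percentages in the text.

```python
# Percentage leads of the 7970 in Metro: 2033, copied from the text above.
# Keys are horizontal resolutions; all names here are illustrative only.
LEADS = {
    "GTX 580": {2560: 30, 1920: 21, 1680: 16},
    "6970": {2560: 33, 1920: 28, 1680: 27},
}


def lead_spread(leads_by_resolution):
    """Return the drop in lead, in percentage points, from the highest
    resolution tested to the lowest."""
    highest = max(leads_by_resolution)
    lowest = min(leads_by_resolution)
    return leads_by_resolution[highest] - leads_by_resolution[lowest]


for card, leads in LEADS.items():
    print(f"vs {card}: lead shrinks by {lead_spread(leads)} percentage points")
```

Against the GTX 580 the lead falls by 14 percentage points across the three resolutions, while against the 6970 it falls by only 6, which is the "much flatter" response described above.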

While we’re on the subject of Metro, this is a good time to bring up the performance of the 5870, the forerunner of the DX11 generation. The performance of the 7970 may only be 20-30% higher than its immediate predecessors, but that’s not to say that performance hasn’t increased a great deal more over the full two-year period of the 40nm cycle. The 7970 leads the 5870 by 50-60% here and in a number of other games, and while this isn’t as great as some past leaps, it’s clear that there’s still plenty of growth in GPU performance occurring.

292 Comments

Scali - Saturday, December 24, 2011

I have never heard Jen-Hsun call the mock-up a working board. They DID however have working boards on which they demonstrated the tech-demos.

Stop trying to make something out of nothing.

Scali - Saturday, December 24, 2011

Actually, since Crysis 2 does not 'tessellate the crap' out of things (unless your definition of that is: "Doesn't run on underperforming tessellation hardware"), the 7970 is actually the fastest card in Crysis 2. Did you even bother to read some other reviews? Many of them tested Crysis 2, you know. Tomshardware for example.

If you try to make smart fanboy remarks, at least make sure they're smart first.

Scali - Saturday, December 24, 2011

But I know... being a fanboy must be really hard these days... One moment you have to spread nonsense about how Crysis 2's tessellation is totally over-the-top...

The next moment, AMD comes out with a card that has enough of a boost in performance that it comes out on top in Crysis 2 again... So you have to get all up to date with the latest nonsense again.

Now you know what the AMD PR department feels like... they went from "Tessellation good" to "Tessellation bad" as well, and have to move back again now...

That is, they would, if they weren't all fired by the new management.

formulav8 - Tuesday, February 21, 2012

Your worse than anything he said. Grow up

CeriseCogburn - Sunday, March 11, 2012

He's exactly correct. I quite understand for amd fanboys that's forbidden, one must tow the stupid crybaby line and never deviate to the truth.

crazzyeddie - Sunday, December 25, 2011

Page 4:" Traditionally the ROPs, L2 cache, and memory controllers have all been tightly integrated as ROP operations are extremely bandwidth intensive, making this a very design for AMD to use. "

Scali - Monday, December 26, 2011

Ofcourse it isn't. More polygons is better. Pixar subdivides everything on screen to sub-pixel level. That's where games are headed as well, that's progress.

Only fanboys like you cry about it.... even after AMD starts winning the benchmarks (which would prove that Crysis is not doing THAT much tessellation, both nVidia and new AMD hardware can deal with it adequately).

Wierdo - Monday, January 2, 2012

http://techreport.com/articles.x/21404

"Crytek's decision to deploy gratuitous amounts of tessellation in places where it doesn't make sense is frustrating, because they're essentially wasting GPU power—and they're doing so in a high-profile game that we'd hoped would be a killer showcase for the benefits of DirectX 11

...

But the strange inefficiencies create problems. Why are largely flat surfaces, such as that Jersey barrier, subdivided into so many thousands of polygons, with no apparent visual benefit? Why does tessellated water roil constantly beneath the dry streets of the city, invisible to all?

...

One potential answer is developer laziness or lack of time

...

so they can understand why Crysis 2 may not be the most reliable indicator of comparative GPU performance"

I'll take the word of professional reviewers.

CeriseCogburn - Sunday, March 11, 2012

Give them a month or two to adjust their amd epic fail whining blame shift. When it occurs to them that amd is actually delivering some dx11 performance for the 1st time, they'll shift to something else they whine about and blame on nvidia.

The big green MAN is always keeping them down.

Scali - Monday, December 26, 2011

Wrong, they showed plenty of demos at the introduction. Else the introduction would just be Jen-Hsun holding up the mock card, and nothing else... which was clearly not the case. They demo'ed Endless City, among other things. Which could not have run on anything other than real Fermi chips.

And yea, I'm really going to go to SemiAccurate to get reliable information!