AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST - Posted in GPUs, AMD, Radeon, ATI, Radeon HD 7000

A Quick Refresher, Cont

Having established what’s bad about VLIW as a compute architecture, let’s discuss what makes a good compute architecture. The most fundamental aspect of compute is that developers want stable and predictable performance, something that VLIW didn’t lend itself to because it was dependency limited. Architectures that can’t work around dependencies will see their performance vary due to those dependencies. Consequently, if you want an architecture with stable performance that’s going to be good for compute workloads then you want an architecture that isn’t impacted by dependencies.

Ultimately dependencies and ILP go hand-in-hand. If you can extract ILP from a workload, then your architecture is by definition bursty. An architecture that can’t extract ILP may not be able to achieve the same level of peak performance, but it will not burst and hence it will be more consistent. This is the guiding principle behind NVIDIA’s Fermi architecture; GF100/GF110 have no ability to extract ILP, and developers love it for that reason.

So with those design goals in mind, let’s talk GCN.

VLIW is a traditional and well proven design for parallel processing. But it is not the only traditional and well proven design for parallel processing. For GCN AMD will be replacing VLIW with what’s fundamentally a Single Instruction Multiple Data (SIMD) vector architecture (note: technically VLIW is a subset of SIMD, but for the purposes of this refresher we’re considering them to be different).

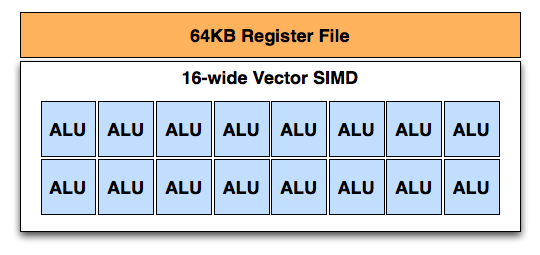

A Single GCN SIMD

At the most fundamental level AMD is still using simple ALUs, just like Cayman before it. In GCN these ALUs are organized into a single SIMD unit, the smallest unit of work for GCN. A SIMD is composed of 16 of these ALUs, along with a 64KB register file for the SIMD to keep data in.
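To put the 64KB figure in perspective, here is a quick back-of-the-envelope sketch. It assumes 32-bit registers and the 64-wide wavefronts carried over from Cayman; the function names and the idea of splitting the file evenly across resident wavefronts are illustrative assumptions, not something AMD has described in these terms.

```python
# Rough sizing of one GCN SIMD's register file.
REGISTER_FILE_BYTES = 64 * 1024  # 64KB per SIMD (from the article)
WAVEFRONT_SIZE = 64              # work-items per wavefront (from the article)
REGISTER_BYTES = 4               # ASSUMPTION: each register holds one 32-bit value


def vector_registers_per_simd(file_bytes=REGISTER_FILE_BYTES,
                              wavefront_size=WAVEFRONT_SIZE,
                              reg_bytes=REGISTER_BYTES):
    """How many wavefront-wide registers fit in the register file."""
    return file_bytes // (wavefront_size * reg_bytes)


def registers_per_wavefront(resident_wavefronts):
    """Hypothetical even split of the file across resident wavefronts."""
    return vector_registers_per_simd() // resident_wavefronts


if __name__ == "__main__":
    print(vector_registers_per_simd())   # wavefront-wide registers in 64KB
    print(registers_per_wavefront(4))    # share if 4 wavefronts are resident
```

Under those assumptions the file holds 256 wavefront-wide registers, which is the budget that has to be shared by however many wavefronts a SIMD keeps in flight.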

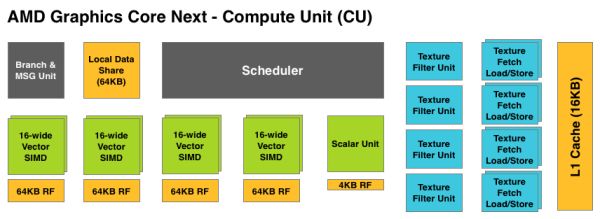

Above the individual SIMD we have a Compute Unit, the smallest fully independent functional unit. A CU is composed of 4 SIMD units, a hardware scheduler, a branch unit, L1 cache, a local data share, 4 texture units (each with 4 texture fetch load/store units), and a special scalar unit. The scalar unit is responsible for all of the arithmetic operations the simple ALUs can’t do or won’t do efficiently, such as conditional statements (if/then) and transcendental operations.

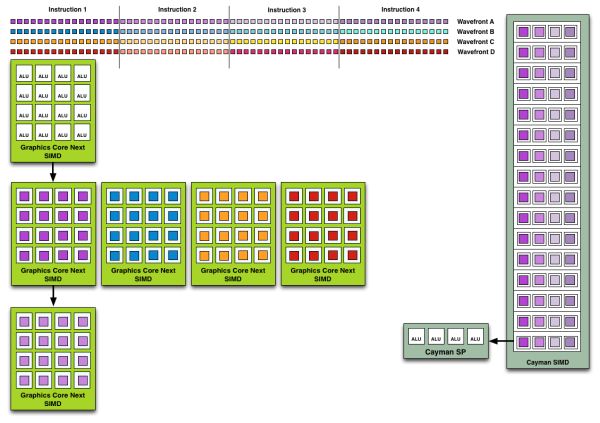

Because the smallest unit of work is the SIMD and a CU has 4 SIMDs, a CU works on 4 different wavefronts at once. As wavefronts are still 64 operations wide, each cycle a SIMD completes ¼ of the operations of its current wavefront, and after 4 cycles the current instruction for that wavefront is complete.
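The arithmetic here is simple enough to sketch in a few lines of Python. This is only a toy model of the numbers quoted above; the order in which a SIMD walks through a wavefront's work-items (quarter by quarter, in index order) is an assumption made for illustration.

```python
import math

WAVEFRONT_SIZE = 64  # operations per wavefront (from the article)
SIMD_WIDTH = 16      # ALUs per SIMD (from the article)
SIMDS_PER_CU = 4     # SIMDs per Compute Unit (from the article)


def cycles_per_instruction(wavefront_size=WAVEFRONT_SIZE, simd_width=SIMD_WIDTH):
    """Cycles one SIMD needs to push one instruction through a whole wavefront."""
    return math.ceil(wavefront_size / simd_width)


def lane_schedule(wavefront_size=WAVEFRONT_SIZE, simd_width=SIMD_WIDTH):
    """Work-item indices handled in each cycle (ASSUMED in-order quarters)."""
    return [list(range(start, min(start + simd_width, wavefront_size)))
            for start in range(0, wavefront_size, simd_width)]


def cu_ops_per_cycle(simds=SIMDS_PER_CU, simd_width=SIMD_WIDTH):
    """ALU operations a full CU retires per cycle when every SIMD is busy."""
    return simds * simd_width


if __name__ == "__main__":
    print(cycles_per_instruction())                    # cycles per wavefront instruction
    print([q[0] for q in lane_schedule()])             # first work-item of each quarter
    print(cu_ops_per_cycle())                          # operations per cycle per CU
```

With 4 SIMDs each spending 4 cycles per instruction, a fully loaded CU averages one wavefront-wide instruction per cycle, just spread across 4 different wavefronts.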

Cayman by comparison would attempt to execute multiple instructions from the same wavefront in parallel, rather than executing a single instruction from multiple wavefronts. This is where Cayman got bursty: if the instructions were in any way dependent, Cayman would have to let some of its ALUs go idle. GCN on the other hand does not face this issue. Because each SIMD handles a single instruction at a time from its own wavefront, the SIMDs are in no way attempting to take advantage of ILP, and their performance will be very consistent.

Wavefront Execution Example: SIMD vs. VLIW. Not To Scale - Wavefront Size 16
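The difference can be illustrated with a toy model. The sketch below is not how AMD's shader compiler actually schedules code; it assumes a simple in-order, greedy packer for a 4-wide VLIW and ignores instruction latency on the SIMD side. All names are hypothetical.

```python
VLIW_WIDTH = 4  # slots per VLIW4 bundle, as on Cayman


def pack_vliw(deps, width=VLIW_WIDTH):
    """Greedily pack instructions into bundles in program order.

    deps[i] is the set of earlier instruction indices that instruction i
    reads from. An instruction joins the current bundle only if a slot is
    free and none of its inputs is produced inside that same bundle.
    """
    bundles, current = [], []
    for i, inputs in enumerate(deps):
        if len(current) == width or any(j in current for j in inputs):
            bundles.append(current)
            current = []
        current.append(i)
    if current:
        bundles.append(current)
    return bundles


def vliw_utilization(deps, width=VLIW_WIDTH):
    """Fraction of VLIW slots doing useful work for this instruction stream."""
    bundles = pack_vliw(deps, width)
    return len(deps) / (width * len(bundles)) if bundles else 0.0


def simd_utilization(deps):
    """In the SIMD model every instruction fills all lanes of one wavefront,
    so dependencies leave no lane idle (ASSUMING other wavefronts are ready
    to cover any latency)."""
    return 1.0 if deps else 0.0


if __name__ == "__main__":
    independent = [set() for _ in range(8)]
    chain = [set()] + [{i - 1} for i in range(1, 8)]
    for name, stream in (("independent", independent), ("chain", chain)):
        print(name, vliw_utilization(stream), simd_utilization(stream))
```

In this model a stream of 8 independent instructions fills every VLIW slot, while a chain of 8 dependent instructions uses only 1 slot in 4. The SIMD model is indifferent to the dependency pattern, which is the consistency the article is describing.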

There are other aspects of GCN that influence its performance – the scalar unit plays a huge part – but in comparison to Cayman, this is the single biggest difference. By not taking advantage of ILP, but instead taking advantage of Thread Level Parallelism (TLP) in the form of executing more wavefronts at once, GCN will be able to deliver high compute performance and to do so consistently.

Bringing this all together, to make a complete GPU a number of these GCN CUs will be combined with the rest of the parts we’re accustomed to seeing on a GPU. A frontend is responsible for feeding the GPU, as it contains both the command processors (ACEs) responsible for feeding the CUs and the geometry engines responsible for geometry setup. Meanwhile coming after the CUs will be the ROPs that handle the actual render operations, the L2 cache, the memory controllers, and the various fixed function controllers such as the display controllers, PCIe bus controllers, Universal Video Decoder, and Video Codec Engine.

At the end of the day if AMD has done their homework GCN should significantly improve compute performance relative to VLIW4 while gaming performance should be just as good. Gaming shader operations will execute across the CUs in a much different manner than they did across VLIW, but they should do so at a similar speed. And for games that use compute shaders, they should directly benefit from the compute improvements. It’s by building out a GPU in this manner that AMD can make an architecture that’s significantly better at compute without sacrificing gaming performance, and this is why the resulting GCN architecture is balanced for both compute and graphics.

292 Comments

Zingam - Thursday, December 22, 2011 - link

I think this card is a kinda fail. Well, maybe it is a driver issue and they'll up the performance 20-25% in the future but it is still not fast enough for such huge jump - 2 nodes down!!!

It smell like a graphics Bulldozer for AMD. Good ideas on paper but in practice something doesn't work quite right. Raw performance is all that counts (of course raw performance/$).

If NVIDIA does better than usual this time. AMD might be in trouble. Well, will wait and see.

Hopefully they'll be able to release improved CPUs and GPUs soon because this generation does not seem to be very impressive.

I've expected at least triple performance over the previous generation. Maybe the drivers are not that well optimized yet. After all it is a huge architecture change.

I don't really care that much about that GPU generation but I'm worried that they won't be able to put something impressively new in the next generation of consoles. I really hope that we are not stuck with obsolete CPU/GPU combination for the next 7-8 years again.

Anyway: massively parallel computing sounds tasty!

B3an - Thursday, December 22, 2011 - link

You dont seem to understand that all them extra transistors are mostly there for computing. Thats mostly what this was designed for. Not specifically for gaming performance. Computing is where this card will offer massive increases over the previous AMD generation.

Look at Nvidia's Fermi, that had way more transistors than the previous generation but wasn't that much faster than AMD's cards at the time. Because again all the extra transistors were mainly for computing.

And come on LOL, expecting over triple the performance?? That has never happened once with any GPU release.

SlyNine - Friday, December 23, 2011 - link

The 9700pro was up to 4x faster then the 4600 in certian situations. So yes it has happened.

tzhu07 - Thursday, December 22, 2011 - link

LOL, triple the performance? Do you also have a standard of dating only Victoria's Secret models?

eanazag - Thursday, December 22, 2011 - link

I have a 3870 which I got in early 2007. It still does well for the main games I play: Dawn of War 2 and Starcraft 2 (25 fps has been fine for me here with settings mostly maxed). I have eyeing a new card. I like the power usage and thermals here. I am not spending $500+ though. I am thinking they are using that price to compensate for the mediocre yields they getting on 28nm, but either way the numbers look justified. I will be look for the best card between $150-$250, maybe $300. I am counting on this cards price coming down, but I doubt it will hit under $400-350 next year.

No matter what this looks like a successful soft launch of a video card. For me, anything smokes what I have in performance but not so much on power usage. I'd really not mind the extra noise as the heat is better than my 3870.

I'm in the single card strategy camp.

Monitor is a single 42" 1920x1200 60 Hz.

Intel Core i5 760 at stock clocks. My first Intel since the P3 days.

Great article.

Death666Angel - Thursday, December 22, 2011 - link

Can someone explain the different heights in the die-size comparison picture? Does that reflect processing-changes? I'm lost. :D Otherwise, good review. I don't see the HD7970 in Bench, am I blind or is it just missing.

Ryan Smith - Thursday, December 22, 2011 - link

The Y axis is the die size. The higher a GPU the bigger it is (relative to the other GPUs from that company).

Death666Angel - Friday, December 23, 2011 - link

Thanks! I thought the actual sizes were the sizes and the y-axis meant something else. Makes sense though how you did it! :-)

MonkeyPaw - Thursday, December 22, 2011 - link

As a former owner of the 3870, mine had the short-lived GDDR4. That old card has a place in my nerd heart, as it played Bioshock wonderfully.

Peichen - Thursday, December 22, 2011 - link

The improvement is simply not as impressive as I was led to believed. Rumor has it that a single 7970 would have the power of a 6990. In fact, if you crunch the numbers, it would be at least 50% faster than 6970 which should put it close to 6990. (63.25% increase in transistors, 40.37% in TFLOP and 50% increase in memory bandwidth.)

What we got is a Fermi 1st gen with the price to match. Remember, this is not a half-node improvement in manufacturing process, it is a full-node and we waited two years for this.

In any case, I am just ranting because I am waiting for something to replace my current card before GTA 5 came out. Nvidia's GK104 in Q1 2012 should be interesting. Rumored to be slightly faster than GTX 580 (slower than 7970) but much cheaper. We'll see.