AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST - Posted in: GPUs, AMD, Radeon, ATI, Radeon HD 7000

Managing Idle Power: Introducing ZeroCore Power

AMD has been on an idle power crusade for years now. Their willingness to be early adopters of new memory standards has allowed them to offer competitive products on narrower (and thereby cheaper) memory buses, but the tradeoff is that they get to experience the problems that come with the first revision of any new technology.

The most notable case where this has occurred would be the Radeon HD 4870 and 4890, the first cards to use GDDR5. The memory performance was fantastic; the idle power consumption was not. At the time AMD could not significantly downclock their GDDR5 products, resulting in idle power usage that approached 50W. Cypress then introduced a proper idle mode, allowing AMD to cut their idle power usage to 27W, and AMD has continued to refine their idle power consumption since.

With the arrival of Southern Islands comes AMD’s latest iteration of their idle power saving technologies. For the 7970 AMD has gotten regular idle power usage down to 15W, roughly 5W lower than it was on the 6900 series. This is accomplished through a few extra tricks such as framebuffer compression, which reduce the amount of traffic that needs to move over the relatively power hungry GDDR5 memory bus.

However the big story with Southern Islands for idle power consumption isn’t regular idle, rather it’s “long idle.” Long idle is AMD’s term for any scenario where the GPU can go completely idle, that is where it doesn’t need to do any work at all. For desktop computers this would primarily be when the display is put to sleep, as the GPU does not need to do any work when the display itself can’t show anything.

Currently video cards based on AMD’s GPUs can cut their long idle power consumption by a couple of watts by turning off any display transmitters and their clock sources, but the rest of the GPU needs to be minimally powered up. This is what AMD seeks to change.

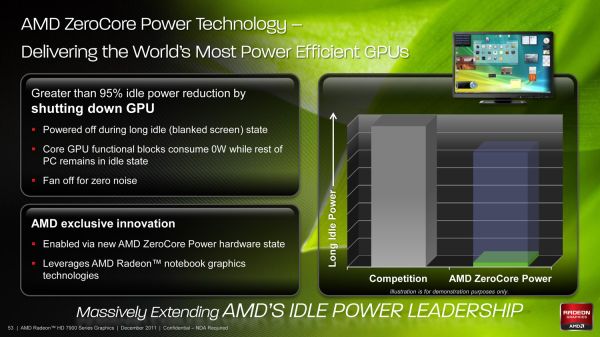

With Southern Islands AMD is introducing ZeroCore Power, their long idle power saving technology. By implementing power islands on their GPUs AMD can now outright shut off most of the functional units of a GPU when the GPU is going unused, leaving only the PCIe bus interface and a couple other components active. By doing this AMD is able to reduce their power consumption from 15W at idle to under 3W in long idle, a power level low enough that in a desktop the power consumption of the video card becomes trivial. So trivial in fact that with under 3W of heat generation AMD doesn’t even need to run the fan – ZeroCore Power shuts off the fan as it’s rendered an unnecessary device that’s consuming power.

Ultimately ZeroCore Power isn’t a brand new concept, but this is the first time we’ve seen something quite like this on the desktop. Even AMD will tell you the idea is borrowed from their mobile graphics technology, where they need to be able to power down the GPU completely for power savings when using graphics switching capabilities. But unlike mobile graphics switching AMD isn’t fully cutting off the GPU, rather they’re using power islands to leave the GPU turned on in a minimal power state. As a result the implementation details are very different even if the outcomes are similar. At the same time a technology like this isn’t solely developed for desktops so it remains to be seen how AMD can leverage it to further reduce power consumption on the eventual mobile Southern Islands GPUs.

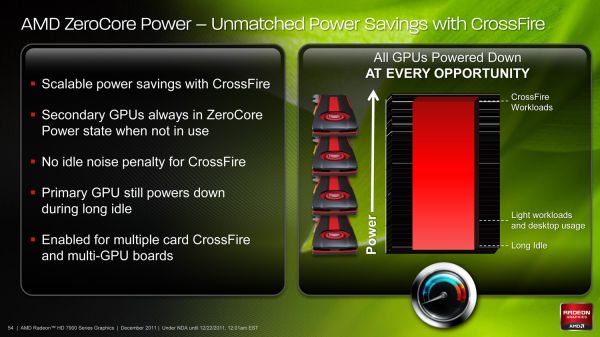

Of course as impressive as sub-3W long idle power consumption is on a device with 4.3B transistors, at the end of the day ZeroCore Power is only as cool as the ways it can be used. For gaming cards such as the 7970 AMD will be leveraging it not only as a way to reduce power consumption when driving a blanked display, but more importantly will be leveraging it to improve the power consumption of CrossFire. Currently AMD’s Ultra Low Power State (ULPS) can reduce the idle power usage of slave cards to a lower state than the master card, but the GPUs must still remain powered up. Just as with long idle, ZeroCore Power will change this.

Fundamentally there isn’t a significant difference between driving a blank display and being a slave card in CrossFire; in both situations the video card is doing nothing. So AMD will be taking ZeroCore Power to its logical conclusion by coupling it with CrossFire; ZeroCore Power will put CrossFire slave cards in the ZCP power state whenever they’re not in use. This not only means reducing the power consumption of the slave cards, but just as with long idle, turning off the fan too. As AMD correctly notes, this virtually eliminates the idle power penalty for CrossFire and completely eliminates the idle noise penalty. With ZCP, CrossFire is now no noisier and only ever so slightly more power hungry than a single card at idle.
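To put rough numbers on that claim, here is a minimal sketch that adds up the idle figures quoted in this article for a CrossFire setup. It assumes the master card sits at the 15W regular idle figure (or under 3W in long idle) and that every slave card is in ZCP at no more than 3W; the function and constant names are ours, and the results are upper bounds from the quoted figures, not measurements.

```python
REGULAR_IDLE_W = 15  # 7970 regular idle figure quoted in the article
ZCP_MAX_W = 3        # "under 3W" in ZeroCore Power, treated here as an upper bound


def crossfire_idle_upper_bound(num_cards, display_asleep=False):
    """Upper bound on idle draw (watts) for num_cards cards in CrossFire.

    Assumes idle slave cards are always in ZCP, and that the master card
    drops to ZCP only when the display is asleep (long idle).
    """
    if num_cards < 1:
        raise ValueError("need at least one card")
    master = ZCP_MAX_W if display_asleep else REGULAR_IDLE_W
    slaves = (num_cards - 1) * ZCP_MAX_W
    return master + slaves


if __name__ == "__main__":
    print(crossfire_idle_upper_bound(1))        # single card at regular idle
    print(crossfire_idle_upper_bound(2))        # one master plus one ZCP slave
    print(crossfire_idle_upper_bound(2, True))  # both cards in long idle
```

Under these assumptions a second card adds at most 3W at idle, which is the "ever so slightly more power hungry" case described above.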

Furthermore the benefits of ZCP in CrossFire apply not only to multiple cards, but to multiple-GPU cards too. When AMD launches their eventual multi-GPU Tahiti card the slave GPU can be put in a ZCP state, leaving only the master GPU and the PCIe bridge active. Couple that with ZCP on the master GPU when in long idle, and even a beastly multi-GPU card should be able to reduce its long idle power consumption to under 10W after accounting for the PCIe bridge.

Meanwhile as for load power consumption, not a great deal has changed from Cayman. AMD’s PowerTune throttling technology will be coming to the entire Southern Islands lineup, and it will be implemented just as it was in Cayman. Operationally it remains the same: it calculates the power draw of the card based on load, and then alters clockspeeds in order to keep the card below its PowerTune limit. For the 7970 the limit is the same as it was for the 6970: 250W, with the ability to raise or lower it by 20% in the Catalyst Control Center.
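As a rough illustration of that behavior, the sketch below computes the adjustable range around a 250W limit and steps a clock down until an estimated draw fits under the limit. This is not AMD's algorithm: the linear power model, the 1000MHz starting clock, the step size, the clock floor and every function name are assumptions made up for the example.

```python
def powertune_range(limit_w, adjust_pct=20):
    """Return the (lowest, highest) selectable limit in watts for a +/- adjustment."""
    delta = limit_w * adjust_pct / 100
    return limit_w - delta, limit_w + delta


def throttle_clock(clock_mhz, est_power_at_clock, limit_w, step_mhz=5, floor_mhz=300):
    """Lower the clock in fixed steps until the estimated draw fits the limit.

    est_power_at_clock is a hypothetical callable mapping MHz to estimated
    watts; the real estimator and step size are not described in the article.
    """
    while clock_mhz > floor_mhz and est_power_at_clock(clock_mhz) > limit_w:
        clock_mhz -= step_mhz
    return clock_mhz


def toy_model(mhz):
    """Made-up model: draw scales linearly with clock, 270W at 1000MHz."""
    return 270 * mhz / 1000


if __name__ == "__main__":
    print(powertune_range(250))                 # range for the 250W default limit
    print(throttle_clock(1000, toy_model, 250)) # clock after throttling at 250W
    print(throttle_clock(1000, toy_model, 300)) # limit raised by 20%: no throttling
```

With the limit raised to 300W the toy workload never exceeds it and the clock stays put, which is the kind of effect that raising the limit in the Catalyst Control Center is meant to have.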

On that note, at this time the only way to read the core clockspeed of the 7970 is through AMD’s drivers, which don’t reflect the current status of PowerTune. As a result we cannot currently tell when PowerTune has started throttling. If you recall our 6970 results we did find a single game that managed to hit PowerTune’s limit: Metro 2033. So we have a great deal of interest in seeing if this holds true for the 7970 or not. Looking at frame rates this may be the case, as we picked up 1.5fps on Metro after raising the PowerTune limit by 20%. But at 2.7% this is on the edge of being typical benchmark variability so we’d need to be able to see the core clockspeed to confirm it.
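The arithmetic behind that caution can be sketched as follows. The 1.5fps and 2.7% figures come from the paragraph above; the 3% run-to-run noise threshold is purely an assumed value for illustration, as are the function names.

```python
def implied_baseline_fps(gain_fps, gain_pct):
    """Baseline frame rate implied by an absolute gain and its percentage."""
    return gain_fps / (gain_pct / 100)


def within_noise(gain_pct, noise_pct=3.0):
    """True if a gain is no larger than the assumed run-to-run variability."""
    return abs(gain_pct) <= noise_pct


if __name__ == "__main__":
    baseline = implied_baseline_fps(1.5, 2.7)
    print(round(baseline, 1))  # implied Metro 2033 baseline in fps
    print(within_noise(2.7))   # True under the assumed 3% threshold
```

A gain this close to the noise floor cannot confirm throttling on its own, which is why a clockspeed readout is needed.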

292 Comments


RussianSensation - Thursday, December 22, 2011 - link

That's not what the review says. The review clearly explains that it's the best single-GPU for gaming. There is nothing biased about not being mind-blown by having a card that's only 25% faster than GTX580 and 37% faster than HD6970 on average, considering this is a brand new 28nm node. Name a single generation where AMD's next generation card improved performance so little since Radeon 8500? There isn't any!

SlyNine - Friday, December 23, 2011 - link

2900XT ? But I Don't remember if that was a new node and what the % of improvement was beyond the 1950XT. But still this is a 500$ card, and I don't think its what we have come to expect from a new node and generation of card. However some people seem more then happy with it, Guess they don't remember the 9700PRO days.

takeulo - Thursday, December 22, 2011 - link

as ive read the review this is not a disappointment infact its only a single gpu card but it toughly competing or nearly chasing with the dual gpu's graphics card like 6990 and gtx 590 performance...imagine that 7970 is also a dual gpu?? it will tottally dominate the rest... sorry for my bad english..

eastyy - Thursday, December 22, 2011 - link

the price vs performance is the most important thing for me at the moment i have a 460 that cost me about £160 at the time and that was a few years ago...seems like the cards now for the same price dont really give that much of a increase

Morg. - Thursday, December 22, 2011 - link

What seems unclear to the writer here is that in fact 6-series AMD was better in single GPU than nVidia. Like miles better.

First, the stock 6970 was within 5% of the gtx580 at high resolutions (and excuse me, but if you like a 500 bucks graphics board with a 100 bucks screen ... not my problem -- ).

Second, if you put a 6970 OC'd at GTX580 TDP ... the GTX580 is easily 10% slower.

So overall . seriously ... wake the f* up ?

The only thing nVidia won at with fermi series 2 (gtx5xx) is making the most expensive highest TDP single GPU card. It wasn't faster, they just picked a price point AMD would never target .. and they got i .. wonderful.

However, AMD raped nVidia all the way in perf/watt/dollar as they did with Intel in the Server CPU space since Opteron Istanbul ...

If people like you stopped spouting random crap, companies like AMD would stand a chance of getting the market share their products deserve (sure their drivers are made of shit).

Leyawiin - Thursday, December 22, 2011 - link

The HD 7970 is a fantastic card (and I can't wait to see the rest of the line), but the GTX 580 was indisputably better than the HD 6970. Stock or OC'd (for both).

Morg. - Friday, December 23, 2011 - link

Considering TDP, price and all - no. The 6970 lost maximum 5% to the GTX580 above full HD, and the bigger the resolution, the smaller the GTX advantage.

Every benchmark is skewed, but you should try interpreting rather than just reading the conclusion --

Keep in mind the GTX580 die size is 530mm² whereas the 6970 is 380mm²

Factor that in, aim for the same TDP on both cards . and believe me .. the GTX580 was a complete total failure, and a total loss above full HD.

Yes it WAS the biggest single GPU of its time . but not the best.

RussianSensation - Thursday, December 22, 2011 - link

Your post is ill-informed. When GTX580 and HD6970 are both overclocked, it's not even close. GTX580 destroyed it.

http://www.xbitlabs.com/articles/graphics/display/...

HD6950 was an amazing value card for AMD this generation, but HD6970 was nothing special vs. GTX570. GTX580 was overpriced for the performance over even $370 factory preoverclocked GTX570 cards (such as the almost eerily similar in performance EVGA 797mhz GTX570 card for $369).

All in all, GTX460 ~ HD6850, GTX560 ~ HD6870, GTX560 Ti ~ HD6950, GTX570 ~ HD6970. The only card that had really poor value was GTX580. Of course if you overclocked it, it was a good deal faster than the 6970 that scaled poorly with overclocking.

Morg. - Friday, December 23, 2011 - link

I believe you don't get what I said : AT THE SAME TDP, THE HD6xxx TOTALLY DESTROYED THE GTX 5xx

THAT MEANS : the amd gpu was better even though AMD decided to sell it at a TDP / price point that made it cheaper and less performing than the GTX 5xx

The "destroyed it" statement is full HD resolution only . which is dumb . I wouldn't ever get a top graphics board to just stick with full HD and a cheap monitor.

Peichen - Friday, December 23, 2011 - link

According to your argument, all we'd ever need is IGP because no stand-alone card can compete with IGP at the same TDP / price point.