Understanding TLC NAND

by Kristian Vättö on February 23, 2012 1:14 PM EST

Posted in: Storage, SSDs, OCZ, Indilinx Everest, TLC

A Brief Introduction to SSDs and Flash Memory

In almost every SSD review we have published, Anand has mentioned how an SSD is the biggest performance upgrade you can make today. Why would anyone use regular hard drives then? There is one big reason: price. SSD prices are still up in the clouds when compared to hard drive prices (especially before the Thailand floods) so for many, SSDs have not been a realistic option.

Forking over $700 for a 512GB SSD sounds crazy because a 500GB hard drive can be had for less than $50. Smaller capacities like 64GB and 128GB can already be bought for around $100 and $200 respectively, but unless you have the ability to have an SSD plus hard drive combo, such a small SSD doesn't usually cut it. If you have a desktop, the SSD + HDD combo should not be a problem but many laptops only have space for one 2.5" drive (unless you are willing to mod it afterwards by replacing the optical drive). SSD prices have been dropping for years now, but if the current rate continues it will take years before a $399 Walmart PC includes a reasonable size SSD. So what can be done?

Most of the time, SSD production costs are cut by shrinking the NAND die. Shrinking the die is the same as with CPUs: you move to a smaller manufacturing process, e.g. from 34nm to 25nm. In flash memory, this means you can increase the density per die and usually the physical die size is also smaller, meaning more dies from a single wafer. A die shrink is an effective way to lower costs but moving from one process to another takes time and the initial ramp of the new flash isn't necessarily cheaper. Once the new process has matured and supply has met demand, prices start to fall.

Since die shrinks are a relatively slow way to lower SSD prices and only contribute to steady reduction of prices, anyone looking to push higher capacity SSDs into the mainstream today will need something more. Right now, that "something more" is called Triple Level Cell flash, commonly abbreviated as TLC.

Rather than shrinking the die to improve density/capacity, TLC (like MLC) increases the number of bits per cell. In our SSD Anthology article, Anand described how SLC and MLC flash work, and TLC works the same way but takes things a step further. Normally, you apply a voltage to a cell and keep increasing it until you reach a point where the result is far enough from the "off" state that you now consider the cell as being "on". This is how SLC works, storing one bit per cell. For MLC, you store two bits per cell, which means instead of two voltage states (0 and 1) you have four states (00, 01, 10, 11). TLC takes that a step further and stores three bits per cell, which requires eight voltage states (000, 001, 010, 011, 100, 101, 110, and 111). We will take a deeper look into voltage states and how they work on the next page.
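To make the relationship between bits per cell and voltage states concrete, here is a minimal sketch. The function name `voltage_states` is purely illustrative, and the code only enumerates the bit patterns named above; it says nothing about the actual voltage levels a real NAND device uses.

```python
def voltage_states(bits_per_cell: int) -> list[str]:
    """Return every bit pattern a cell must distinguish.

    A cell storing n bits needs 2**n distinguishable states.
    """
    count = 2 ** bits_per_cell
    # Format each state index as a zero-padded binary string.
    return [format(i, f"0{bits_per_cell}b") for i in range(count)]


for name, bits in [("SLC", 1), ("MLC", 2), ("TLC", 3)]:
    states = voltage_states(bits)
    print(f"{name}: {len(states)} states -> {', '.join(states)}")
```

Each extra bit doubles the number of states that have to be distinguished in the same cell.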

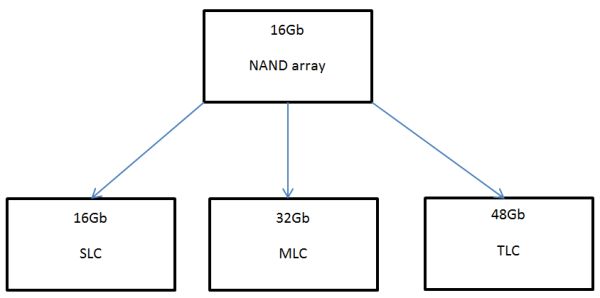

Even though SLC, MLC and TLC operate the same way, there is one crucial difference. Let's take a look at what happens to a NAND array depending on the number of bits stored per cell. The image above is a NAND array with ~16 billion transistors (one transistor is required per cell), i.e. 16 gigabits (Gb). This array can be turned into either SLC, MLC, or TLC. The actual array and transistors are equivalent in all three flash types; there is no physical difference. In the case of SLC flash, only one bit of data will be stored in one cell, hence your final product has a 16Gb capacity. When you up the bits per cell to two (MLC), you get 32Gb because now you have two bits per cell and there are still 16 billion cells. Likewise, three bits per cell (TLC) yields 48Gb.
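The arithmetic in this example is simply cells multiplied by bits per cell. A small sketch, with `capacity_gbit` as an illustrative helper name and the 16 billion cell figure taken from the example array:

```python
CELLS_GIGA = 16  # billions of cells in the example array


def capacity_gbit(cells_giga: float, bits_per_cell: int) -> float:
    """Capacity in gigabits for an array of `cells_giga` billion cells."""
    return cells_giga * bits_per_cell


for name, bits in [("SLC", 1), ("MLC", 2), ("TLC", 3)]:
    print(f"{name}: {capacity_gbit(CELLS_GIGA, bits)}Gb")
```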

However, TLC is a horse of a slightly different color in this case. Capacities usually go in powers of two (2, 4, 8, 16 and so on) and 48 is not a power of two. To get a number that is a power of two, the original NAND array is chopped down. In our example, the array must be 10.67Gb in order to be 32Gb with three bits per cell, but since that is the same capacity as an MLC die, what is the benefit? You don't get more storage per die, but the actual die is smaller because the original 16Gb array has been reduced to a 10.67Gb array. That means more dies per wafer and hence lower cost.
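The chopped-down array size can be worked out by running the capacity calculation in reverse. The sketch below is a rough illustration only: both helper names are hypothetical, and it assumes die area scales linearly with the number of cells, which ignores peripheral circuitry and wafer edge effects.

```python
def required_cells_giga(target_gbit: float, bits_per_cell: int) -> float:
    """Billions of cells needed to reach `target_gbit` of capacity."""
    return target_gbit / bits_per_cell


def relative_dies_per_wafer(reference_cells: float, new_cells: float) -> float:
    """Dies per wafer relative to the reference die.

    Assumption: die area is proportional to cell count.
    """
    return reference_cells / new_cells


mlc_cells = required_cells_giga(32, 2)  # 32Gb MLC die
tlc_cells = required_cells_giga(32, 3)  # 32Gb TLC die

print(f"MLC array: {mlc_cells:.2f} billion cells")
print(f"TLC array: {tlc_cells:.2f} billion cells")
print(f"TLC dies per wafer vs MLC: {relative_dies_per_wafer(mlc_cells, tlc_cells):.2f}x")
```

Under that simplifying assumption, the smaller TLC array gives roughly one and a half times as many 32Gb dies from the same wafer.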

Comparison of NAND Wholesale Prices

| Cell Type | SLC | MLC | TLC |
| --- | --- | --- | --- |
| Price per GB | $3.00 | $0.90 | $0.60 |

Prices provided by OCZ

The theoretical price advantage of TLC over MLC isn't as great as that of MLC over SLC, but at over 30% it's still significant. The main reason for the smaller gain is that MLC provides twice the capacity of SLC (2 bits per cell versus 1 bit per cell), whereas TLC provides only 50% more than MLC (3 bits per cell versus 2 bits per cell). In fact, the price difference between MLC and TLC tracks the die size directly: a TLC die is 33% smaller than an MLC die of the same capacity, and in the prices provided by OCZ, TLC is also 33% cheaper than MLC. In theory, SLC should follow this equation as well and be priced at $1.80/GB, but there's limited 2Xnm SLC out in the wild, making SLC significantly more expensive than MLC and TLC at this point.
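The percentages quoted here follow from the OCZ price table. As a hedged sketch, the code below uses only those three prices; the helper names and the idea of scaling price inversely with bits per cell are an illustration of "this equation", not a pricing model from OCZ.

```python
PRICE_PER_GB = {"SLC": 3.00, "MLC": 0.90, "TLC": 0.60}  # from the OCZ table
BITS_PER_CELL = {"SLC": 1, "MLC": 2, "TLC": 3}


def price_reduction(old_price: float, new_price: float) -> float:
    """Fractional price drop when moving from old_price to new_price."""
    return (old_price - new_price) / old_price


def scaled_price(reference_price: float, reference_bits: int, target_bits: int) -> float:
    """Price if cost per GB scaled inversely with bits per cell (an assumption)."""
    return reference_price * reference_bits / target_bits


mlc, tlc = PRICE_PER_GB["MLC"], PRICE_PER_GB["TLC"]
print(f"TLC vs MLC reduction: {price_reduction(mlc, tlc):.0%}")
print(f"Theoretical TLC price from MLC: ${scaled_price(mlc, 2, 3):.2f}/GB")
print(f"Theoretical SLC price from MLC: ${scaled_price(mlc, 2, 1):.2f}/GB")
print(f"Quoted SLC price: ${PRICE_PER_GB['SLC']:.2f}/GB")
```

The gap between the theoretical and quoted SLC figures is the supply effect described above.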

The reality of the matter is a little less clear. TLC NAND today isn't all that much cheaper than MLC NAND, which has contributed to its relative absence in the consumer SSD space. There's also a lack of controller support and market interest, which contribute to the higher prices of course.

90 Comments


Kougar - Thursday, February 23, 2012

First, thanks for the article! However it has reignited a question I've had for some time.

How is this regulated exactly... does the manufacturer still set a mandatory limit on the number of writes, or is a modern SSD capable of detecting this delay and automatically correcting for it up until the point that it is able to detect the block has exceeded the time limits (and hence write endurance) allowed? To phrase it another way, are arbitrary write count limits utilized or is a modern SSD self-aware enough to determine on its own when a flash block needs to be retired, regardless of the write counts?

Kristian Vättö - Friday, February 24, 2012

Each chip is slightly different so there is no set maximum of writes. One can last 3000 P/E cycles while the other can last 3200.

I'm not 100% sure but I think the controller is the one that decides when a certain block is too slow. I.e. it's capable of detecting the delay and when it reaches a certain point, it decides to retire the block to avoid further performance decrease. Hence it may be controller specific and some will retire blocks sooner than others, although at least Intel is saying that there is a certain delay and after that the block is retired (but it may just be a recommendation).

Kougar - Friday, February 24, 2012

Thank you for your reply, Kristian!

When you mention every chip is different, that's an excellent point and one of several reasons for the question.

The other reason behind my question was simply SSD lifespan... Anand has (several times) mentioned that even after the NAND "wears out" the data should remain readable for at least one year after that date.

Yet, all the SSD failures a huge number of others (including myself) have experienced have been from an SSD suddenly failing outright, and not even being detected in the BIOS. I've yet to come across anyone claiming their drive became read only, or anything other than an outright failure or firmware related bug.

Basically it seems like SSDs don't wear out, they just completely die outright for some reason. Going by your answer to my question, I'm going to safely assume NAND longevity isn't the factor in these episodes, but any input you may have on this would be quite welcome!

Kristian Vättö - Friday, February 24, 2012

It's true that NAND remains readable when it wears out. For MLC, the period is about one year (eMLC is only 3 months, though).

I can't say for sure what the reason behind these early failures is, but I would claim that it's often controller related. In general, drives equipped with SandForce controllers experience more early failures than other drives (see the link below).

http://www.behardware.com/articles/843-7/component...

All the drives with a return rate above 5% are SandForce based, more specifically SF-1222 based. NewEgg yields similar data. SF-2281 based SSDs have quite a few one-star ratings, usually around 20%. Switch to Crucial or Intel (or any other non-SF drive) and we are looking at less than 10% one-star ratings, which usually imply a dead drive.

Of course, even non-SF drives experience early failures but the rate is much smaller and more typical of consumer electronics. In any case, it's not the NAND that is causing the failure :-)

Sivar - Thursday, February 23, 2012

I understand the necessity of reducing cost, but a sharp drop in durability coupled with a rapidly diminishing return on $savings/capacity due to the necessary greater redundancy seems a high price to pay for a linear increase in capacity.

This is one of those articles that has the excellent writing and technical thoroughness characteristic of something written by Anand himself. To top it off, it doesn't use an inefficient image format for the photos with large areas of flat color, like the first image.

themossie - Friday, February 24, 2012

Second that. Unusual clarity for any technical explanation. Thank you for the article, Kristian!

hechacker1 - Friday, February 24, 2012

I think the article got confusing by adding that you can use less flash at 10.67Gb, along with 3 bits per cell, giving 32Gb. Do the math: 10.67Gb * 3 bits per cell = 32Gb.

It's easier to just keep in mind:

16Gb NAND * 1 bit per cell = 16Gb capacity

16Gb NAND * 2 bit per cell = 32Gb capacity

16Gb NAND * 3 bit per cell = 48Gb capacity

Kristian Vättö - Friday, February 24, 2012

The reason is that no final product has a capacity of 48Gb. Capacities go in powers of 2: 2Gb, 4Gb, 8Gb, 16Gb, 32Gb, 64Gb and so on. 48Gb isn't a power of two (and no X*3 is when X is itself a power of two). Hence you have to make the die smaller so that X*3 is a power of two, as it is with 10.67Gb.

In theory, you could make a 48Gb TLC die and it would work just fine. It's simply considered an odd number in the NAND industry and hence not used.

themossie - Friday, February 24, 2012

Kristian says this is awkward because TLC capacities will not scale from MLC capacities at a power of 2, like MLC did from SLC. I am not convinced that's an issue, as scaling capacity by a power of 2 has never been a requirement in the hard drive industry.

Indeed, 80/90 GB SSDs - located between power-of-2-inspired 64 GB and 128 GB capacities - have been quite popular. For that matter, 64GB/128GB SSDs are often marketed as 60GB/120GB SSDs, partially due to provisioning...

It is awkward to describe 48Gb as 10.67Gb*3, where Gb represents physical transistors rather than bits; Gb is a unit for digital information in this context, not the physical representation of such.

This is exacerbated as the cells are physically identical - an array could store 48Gb using TLC, but only 10.67Gb with SLC. I find hechacker1's explanation more intuitive. 16Gb SLC = (16*2) 32Gb MLC = (16*3) 48Gb TLC...

The takeaway point here is that you get 50% more dies per wafer for a given capacity with TLC over MLC, and this shows up directly in the cost ($0.60/GB vs $0.90/GB) but results in greatly reduced write cycles.

Kristian Vättö - Friday, February 24, 2012

Remember that I'm not the one who came up with this idea ;-)

This info is straight from Micron and they indeed say that the TLC die is chopped down to 10.67 billion transistors so that it becomes a 32Gb die. Maybe OEMs are afraid of adopting "odd number" capacities. In SSDs it wouldn't be such a big deal but TLC is more commonly used in devices like USB flash drives and low-end smartphones. In fact, some OEMs may even use MLC and TLC in the same model (I don't have any examples but I wouldn't be surprised).

As for why some drives have an odd capacity, it has to do with the controller design and over-provisioning. Intel's SATA 3Gb/s controller has 10 channels while most controllers have 8. That's why Intel drives have weird capacities. Populate all 10 channels with 64Gb (8GB) dies and you get 80GB. For other drives, populating all the channels works out to be only 64GB. As for SandForce drives, they have no on-board cache (DRAM) so some of the NAND (~7%) is reserved for that. That's why a 128GB SF drive is marketed as 120GB.

I agree that 10.67 is an awkward number but then again, this is stuff that an average consumer doesn't really need to know. For them, the final product will look the same, thanks to the power of two capacity. The gain of TLC is the same whether the die is smaller than the MLC die or the same size. TLC provides more GB per unit of die area, which means cheaper $/GB.