Understanding TLC NAND

by Kristian Vättö on February 23, 2012 1:14 PM EST - Posted in

- Storage

- SSDs

- OCZ

- Indilinx Everest

- TLC

Weaknesses of TLC: One Degree Worse than MLC

In a perfect world, increasing the number of bits per cell sounds like a very easy way to increase capacities while keeping the prices down. So, why not put a thousand bits inside every cell? Unfortunately, there's a downside to storing more bits per cell.

Fundamentally, TLC shares the same problems as MLC when compared to SLC, but takes things one step further. With eight voltage levels to distinguish, random reads take more time: 100µs for TLC. That is four times SLC's 25µs random read and twice MLC's 50µs. Programming will also take longer, but unfortunately we don't have any figures for TLC yet.

| | SLC | MLC | TLC |
|---|---|---|---|
| Bits per Cell | 1 | 2 | 3 |
| Random Read | 25 µs | 50 µs | 100 µs |
| Erase | 2 ms per block | 2 ms per block | ? |
| Programming | 250 µs | 900 µs | ? |
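Using only the numbers in the table above, a short Python sketch makes the scaling explicit: each extra bit doubles the number of voltage levels a read has to tell apart (2, 4, 8), and in these figures the random read time doubles along with it. The dictionaries simply restate the table; the function names are illustrative.

```python
# Random read latencies (µs) and bits per cell, restated from the table above.
READ_US = {"SLC": 25, "MLC": 50, "TLC": 100}
BITS = {"SLC": 1, "MLC": 2, "TLC": 3}


def voltage_levels(bits_per_cell: int) -> int:
    """Distinct charge levels a cell must resolve: 2 ** bits."""
    return 2 ** bits_per_cell


def read_ratio(kind: str, baseline: str = "SLC") -> float:
    """How many times longer a random read takes than on the baseline type."""
    return READ_US[kind] / READ_US[baseline]


for kind in ("SLC", "MLC", "TLC"):
    print(f"{kind}: {voltage_levels(BITS[kind])} levels, {READ_US[kind]} us, "
          f"{read_ratio(kind):.0f}x SLC, {read_ratio(kind, 'MLC'):.1f}x MLC")
```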

On top of the decrease in performance, TLC also has worse endurance than MLC and SLC. Precise P/E cycle figures are not yet known, but we are most likely looking at around 1,000 cycles. Hynix has a brief product sheet for their 48nm TLC flash, which is rated at 2,500 P/E cycles. In MLC flash, the move to 3Xnm halved the P/E cycles, so applying the same ratio we would be looking at 1,250 cycles for 3Xnm TLC. 2Xnm brought MLC down further, to roughly 3,000 cycles, and the same math gives 750 cycles for 2Xnm TLC. X-bit labs reported 1,000 cycles for TLC, which sounds fair. It's also good to keep in mind that endurance can vary depending on the manufacturer and the maturity of the process. For example, the first 25nm NANDs were good for only ~1,000 cycles, whereas today's chips should last for over 3,000 cycles.

| P/E cycles | 5Xnm | 3Xnm | 2Xnm |
|---|---|---|---|
| SLC | 100,000 | 100,000 | N/A |
| MLC | 10,000 | 5,000 | 3,000 |
| TLC | 2,500 | 1,250 | 750 |
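The 1,250 and 750 cycle TLC estimates are obtained by scaling Hynix's 2,500-cycle TLC figure by the fraction of endurance MLC kept at each node. A minimal Python sketch of that proportional arithmetic, using only numbers quoted in the text (the helper name is illustrative, and the result is the article's rough estimate, not a measured rating):

```python
# P/E cycle figures quoted in the text.
MLC_CYCLES = {"5Xnm": 10_000, "3Xnm": 5_000, "2Xnm": 3_000}
TLC_5XNM = 2_500  # Hynix 48nm TLC product sheet


def estimate_tlc(node: str) -> float:
    """Scale the 5Xnm TLC figure by how much MLC endurance dropped at `node`."""
    return TLC_5XNM * MLC_CYCLES[node] / MLC_CYCLES["5Xnm"]


for node in MLC_CYCLES:
    print(node, round(estimate_tlc(node)))
```

For 3Xnm this is 2,500 × 5,000 / 10,000 = 1,250, and for 2Xnm 2,500 × 3,000 / 10,000 = 750, matching the TLC row of the table.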

But why does NAND with more bits degrade quicker? The reason lies in the physics of silicon. To understand this, we need to take a look at our beloved Mr. N-channel MOSFET again.

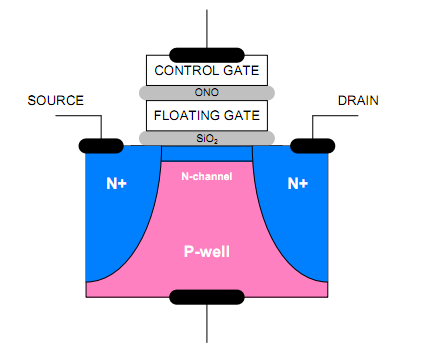

When you program a cell, you place a voltage on the control gate while the source and drain regions are held at 0V. The voltage forms an electric field that allows electrons to pass through the silicon oxide barrier from the N-channel to the floating gate. This process is called tunneling. The silicon oxide acts as an insulator and will not allow electrons to enter or escape the floating gate unless an electric field is formed. To erase a cell, you apply a voltage to the silicon substrate (P-well in the picture) and keep the control gate voltage at zero. The resulting electric field lets the electrons tunnel back through the silicon oxide barrier and out of the floating gate. This is why NAND flash needs to be erased before it can be re-programmed: you need to get rid of the old electrons (i.e. old data) before you can add new electrons (i.e. new data).
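The erase-before-program rule can be illustrated with a toy model. It assumes the usual NAND convention that an erased cell reads as "1" (few electrons, low voltage) and that programming can only add electrons, i.e. turn 1s into 0s; getting a 0 back to a 1 requires erasing the whole block. The class and method names are hypothetical, and real devices add page-ordering and partial-programming rules that this sketch ignores.

```python
from typing import List


class ToyBlock:
    """Toy NAND block: a few pages of bits; erased cells read as 1."""

    def __init__(self, pages: int, bits_per_page: int) -> None:
        self.bits_per_page = bits_per_page
        self.pages = [[1] * bits_per_page for _ in range(pages)]

    def program(self, page: int, data: List[int]) -> None:
        """Store data by adding electrons where it has 0s (a 1 -> 0 change only)."""
        if len(data) != self.bits_per_page:
            raise ValueError("data length must match the page size")
        current = self.pages[page]
        # Turning a 0 back into a 1 would mean removing electrons: not possible here.
        if any(old == 0 and new == 1 for old, new in zip(current, data)):
            raise RuntimeError("cannot turn a 0 into a 1 without erasing the block")
        self.pages[page] = [old & new for old, new in zip(current, data)]

    def erase(self) -> None:
        """Pull the electrons out of every cell in the block at once."""
        self.pages = [[1] * self.bits_per_page for _ in self.pages]


blk = ToyBlock(pages=2, bits_per_page=4)
blk.program(0, [1, 0, 1, 0])
try:
    blk.program(0, [1, 1, 1, 1])  # needs electrons removed, so it is rejected
except RuntimeError as err:
    print("rejected:", err)
blk.erase()
blk.program(0, [1, 1, 0, 0])      # accepted once the block has been erased
print(blk.pages[0])
```

Note that `erase()` works on the whole block, mirroring the "per block" erase granularity in the first table.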

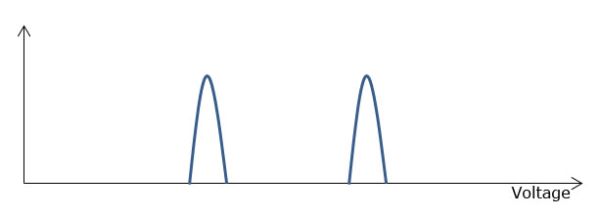

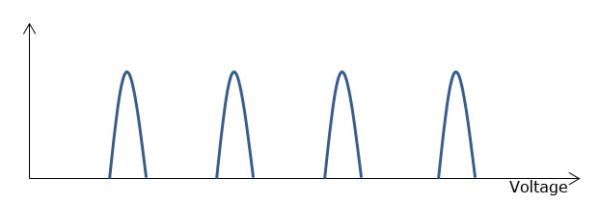

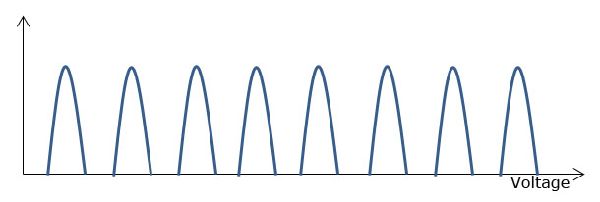

But what does this have to do with SLC, MLC and TLC? The actual MOSFET is exactly the same in all three cases, but take a look at the table below.

| NAND | Bit value | Cell voltage |
|---|---|---|
| SLC | "0" | High Voltage |
| | "1" | Low Voltage |
| MLC | "00" | High Voltage |
| | "01" | Med-High Voltage |
| | "10" | Med-Low Voltage |
| | "11" | Low Voltage |
| TLC | "000" | Highest Voltage |
| | "001" | High Voltage |
| | "010" | Med-High Voltage |
| | "100" | High-Medium Voltage |
| | "011" | Low-Medium Voltage |
| | "101" | Med-Low Voltage |
| | "110" | Low Voltage |
| | "111" | Lowest Voltage |
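The table follows a simple pattern: n bits give 2^n states, with the all-zeros pattern at the highest voltage and the all-ones pattern at the lowest. The Python sketch below enumerates the patterns for 1, 2 and 3 bits in the same top-to-bottom order the table uses (fewest 1s first, ties broken lexicographically). That ordering is only the table's presentation; the actual bit-to-level assignment is chosen by each manufacturer and is not something this sketch claims to reproduce.

```python
from itertools import product


def states_in_table_order(bits: int) -> list:
    """All 2 ** bits patterns, highest voltage first, as the table lists them."""
    patterns = ["".join(p) for p in product("01", repeat=bits)]
    # In the table, fewer 1s means more charge; ties are broken lexicographically.
    return sorted(patterns, key=lambda s: (s.count("1"), s))


for name, bits in (("SLC", 1), ("MLC", 2), ("TLC", 3)):
    states = states_in_table_order(bits)
    print(f"{name}: {len(states)} states, highest to lowest: {states}")
```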

SLC only has two program states, "0" and "1", so either a high or a low voltage is required. As the number of bits goes up, you need more voltage levels: four states with MLC and eight with TLC. The problem is that the silicon oxide layer is only about 10nm thick and it's not immortal; it wears out every time it's used in the tunneling process. As the oxide wears out, its atomic bonds break, and during tunneling some electrons may get trapped inside the oxide. This builds up a negative charge in the silicon oxide, which cancels out some of the control gate voltage.

At first, erasing becomes slower because higher voltages need to be applied (and for a longer time) before the right voltage is reached. Higher voltage puts more stress on the oxide, wearing it out even more. Eventually, erasing takes so long that the block has to be retired to maintain performance. There is a side effect, though: programming becomes faster because the trapped electrons already contribute some charge to the cell. However, the time saved there is much smaller than the extra time spent erasing when more voltage pulses are required. That's why the block has to be retired once the wear reaches a certain point.
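The wear-out loop described above can be sketched as a toy simulation. Every number here is a hypothetical value in arbitrary millivolt units, not a device specification: each P/E cycle is assumed to leave a fixed amount of extra trapped charge that the erase has to work against, erase applies fixed-size pulses until the cell drops to a verify level, and the block is retired once an erase needs more than a set number of pulses. All names are illustrative.

```python
# Toy wear model. All constants are hypothetical, in arbitrary millivolt units.
PROGRAMMED_MV = 3_000    # charge level of a programmed cell before erase
VERIFY_MV = 0            # erase is complete once the level is at or below this
PULSE_MV = 500           # charge removed by one erase pulse
TRAP_MV_PER_CYCLE = 2    # extra trapped charge added per P/E cycle (toy assumption)
MAX_PULSES = 12          # retire the block if an erase needs more than this


def erase_pulses(cycles: int) -> int:
    """Count erase pulses needed after `cycles` P/E cycles of wear."""
    # Toy assumption: trapped charge simply adds to what the erase must overcome.
    level = PROGRAMMED_MV + TRAP_MV_PER_CYCLE * cycles
    pulses = 0
    while level > VERIFY_MV:
        level -= PULSE_MV
        pulses += 1
    return pulses


def retirement_cycle() -> int:
    """First cycle count at which erasing becomes too slow to keep the block."""
    cycles = 0
    while erase_pulses(cycles) <= MAX_PULSES:
        cycles += 1
    return cycles


print(erase_pulses(0), erase_pulses(1_000), retirement_cycle())
```

With these made-up constants a fresh block erases in 6 pulses, a block with 1,000 cycles needs 10, and the block is retired at cycle 1,501. Only the trend is meaningful: erase effort grows with wear until it crosses the retirement limit.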

Here comes the difference between SLC, MLC and TLC. The fewer bits you have per cell, the more voltage room you have. In other words, SLC can tolerate larger shifts in its voltage states because it has only two of them. TLC has eight, so the margin for error is a lot smaller.

Let's assume that we have an SLC NAND that takes voltages between 0V and 14V. To program the cell to "1", a voltage between 4V and 5V needs to be applied. Likewise, you need a voltage from 9V to 10V to program the cell to "0". In this scenario, there is 4V of "spare" voltage between the states. If we apply this example to MLC NAND, keeping the same 0V to 14V range and 1V-wide states, the spare voltage is cut in half, to 2V. With TLC, the spare voltage is only 0.67V under the same 1V-per-state assumption.
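The spare-voltage figures in this example (4V, 2V and 0.67V) are all reproduced by one simple model. This is our reading of the example rather than something the text spells out: 2^n states, each 1V wide, spread evenly across the 0V to 14V window so that the gap below the first state, the gaps between states and the gap above the last state are all equal. A minimal Python sketch of that arithmetic (the function name is illustrative; the defaults follow the example, not real device parameters):

```python
def spare_voltage(bits: int, window_v: float = 14.0, state_width_v: float = 1.0) -> float:
    """Gap between neighbouring states when 2 ** bits states are spaced evenly.

    Assumes equal gaps at both ends of the window and between every pair of
    states, i.e. (2 ** bits + 1) gaps share whatever the states do not occupy.
    """
    states = 2 ** bits
    free = window_v - states * state_width_v
    return free / (states + 1)


for name, bits in (("SLC", 1), ("MLC", 2), ("TLC", 3)):
    print(f"{name}: {spare_voltage(bits):.2f} V between states")
```

For SLC this gives (14 - 2) / 3 = 4V, which also places the "1" state at 4V to 5V and the "0" state at 9V to 10V, exactly as in the example; MLC gives (14 - 4) / 5 = 2V and TLC (14 - 8) / 9 ≈ 0.67V.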

However, as the oxide wears out and higher voltages are needed, the programming voltages go up. To use the SLC example above, you would now need a voltage between 4V and 6V to program the cell to "1". That means a 1V loss in the spare voltage. And here comes the difference: since SLC has more spare voltage between the states, it can tolerate a larger voltage shift before erasing becomes so slow that the block needs to be retired. This is why SLC has a substantially higher P/E cycle count; you can erase and reprogram the cell more times. Likewise, TLC tolerates the least change in its voltage states, so it has the fewest P/E cycles.
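To put numbers on this, the sketch below takes the spare voltages from the 0V to 14V example (4V, 2V and 0.67V) and subtracts the same 1V shift that the worn SLC cell experienced. It only carries the article's illustrative arithmetic one step further; it is not a model of real cells, and the names are hypothetical.

```python
# Spare voltage between neighbouring states, from the 0V to 14V example.
SPARE_V = {"SLC": 4.0, "MLC": 2.0, "TLC": 2 / 3}


def margin_after_shift(kind: str, shift_v: float = 1.0) -> float:
    """Spare voltage left once a state's range has crept up by shift_v."""
    return SPARE_V[kind] - shift_v


for kind in SPARE_V:
    left = margin_after_shift(kind)
    status = "states overlap" if left <= 0 else f"{left:.2f} V left"
    print(f"{kind}: {status}")
```

The same 1V shift leaves SLC with 3V and MLC with 1V of margin, while in this toy scale the TLC states would already overlap, which is the intuition behind TLC's lower P/E rating.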

90 Comments


Beenthere - Thursday, February 23, 2012

While the transition from SLC to MLC and now TLC sounds good, the reality is SSD makers have yet to resolve all reliability or compatibility issues with MLC consumer grade SSDs. Last time I checked OCZ was on firmware version (15) and people are still experiencing issues. The issues are with all SSD suppliers including Intel, Samsung, Corsair, etc. not just OCZ.

If data security is important it would be wise to heed Anand's advice to WAIT 6-12 months to see if the SSD makers resolve the BUGS.

extide - Thursday, February 23, 2012

Go with an Intel, Samsung, or Crucial drive. They are reliable and fast.

Beenthere - Thursday, February 23, 2012

Actually, no one has any lock on SSD reliability. Intel, Samsung and Crucial have ALL had issues that required firmware updates to fix BUGS. We don't know how many more BUGS exist in their or other brands of consumer grade SSDs. Not all HDDs have issues. Yes, some do, especially the low quality high-capacity SATA drives. That, however, is not a good reason to buy a defective SSD.

SSD makers are just cashing in on gullible consumers. If people will pay top dollar for defective goods, that's what unscrupulous companies will ship. If consumers refuse to accept CRAP products, then the makers will fix the products or go broke.

ckryan - Thursday, February 23, 2012

Yes, because everyone knows HDDs are infallible, never die, and are very fast...Oh wait, none of that is true.

MonkeyPaw - Thursday, February 23, 2012

As someone who tried to use a SanDisk controlled SSD recently, it's not as obnoxiously simple as you make it sound. It's one thing to know a drive will fail, it's another to experience BSODs every 20 minutes. Making proper backups is the solution to drive failure, but a PC that crashes with regularity is utterly useless. I don't hate SSDs, I just want more assurance that they can be as stable as they are fast.

martyrant - Thursday, February 23, 2012

So I've had the Intel 80GB X25-M G2s since launch with zero issues, no reason to upgrade firmware, no BSODs or issues. I recently bought one of their 310 80GB SSDs for an HTPC; again, 5 months later, no issues, no problems, no firmware updates. I've had a friend who's had two Vertex 2's in RAID 0 since launch with zero issues.

I also have a friend who has had a Vertex 2 drive die 4 times on him in under 2 months (this is more recent).

As of late, it seems that a lot of manufacturers are having issues, but I believe most of them are caused by the latest SandForce controllers.

This is why you see people who use their own controllers, or one other than a recent SF controller, not having issues.

I feel bad, I really do, for those people who have been screwed over recently by the SSDs that have been failing, but generally, doing the research beforehand benefits you in the long run.

The reason Crucial, Intel, and Samsung SSDs are not having issues is because Crucial uses a Marvell controller, Intel uses its own controller, and Samsung uses its own controller as well. This may not be true for all their drives, but most of their drives (the reliable ones) are of those controller types.

Just do your research beforehand and don't be an SSD hater because they really are, when you shell out the cash to not get the cheapest thing on the market, the biggest upgrade you can do to your computer in the last 3-5 years. I haven't upgraded my mobo/cpu in any of my 3 computers in years but you bet I bought SSDs.

Holly - Saturday, February 25, 2012

My OCZ Vertex 3 has served without a glitch since the 2.13 firmware was released. Before that, occasional system freezes were a major pain. OTOH I don't feel like updating to the 2.15 firmware; I'd rather be happy with what's working now :-)

jwcalla - Thursday, February 23, 2012

Yeah but even HDDs have major reliability problems... especially the high-capacity consumer drives.

psuedonymous - Thursday, February 23, 2012

There have been a few products already mixing an SSD with an HDD to allow oft-used data to be quickly read and written, while rarely used bulk data that gets streamed rather than randomly accessed (e.g. video) is relegated to the HDD. Why not do the same with two grades of NAND? A few GB of SLC (or MLC) for OS files and frequently accessed and rewritten program files, and several hundred GB of TLC (or QLC, etc.) for less frequently written data that it is still desirable to access quickly (e.g. game textures & FMVs). Faster than an HDD hybrid, cheaper than an all-SLC/MLC design, and just as fast in the vast majority of consumer use cases (exceptions include non-linear video editing and large-array data processing).

kensiko - Thursday, February 23, 2012

Yes, that's what I thought reading this article. We just have to make the majority of writes on MLC and put the static data on TLC. Pretty simple and probably feasible in a 2.5in casing.