The Bulldozer Aftermath: Delving Even Deeper

by Johan De Gelas on May 30, 2012 1:15 AM EST

Zooming in on SPEC CPU2006: the Good

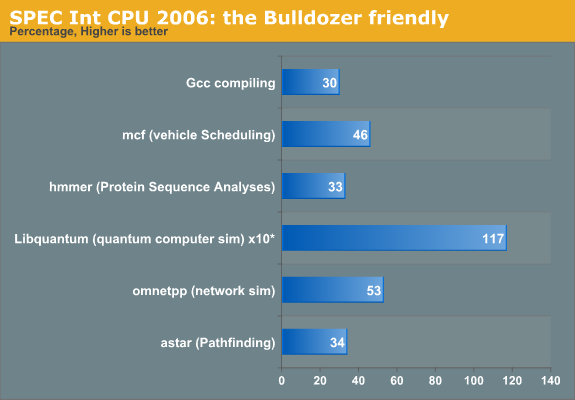

We singled out the benchmarks that showed an improvement of roughly 30% or more over Magny-Cours (based on the K10 core). Remember that the Bulldozer architecture was designed to deliver 33% more cores in the same power envelope while keeping the IPC at more or less 95% of the K10. The rest of the performance should have come from a clock speed increase. That clock speed increase did not materialize in the real world, and we also kept the clock speed the same to focus on the architecture. A 30-35% performance increase is therefore good, and anything over 35% indicates that the Bulldozer architecture handles that particular sort of software better than Magny-Cours.
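The expectation above can be sketched as a back-of-the-envelope calculation. This is only a first-order model that assumes throughput scales with the product of core count, IPC, and clock speed; the function name and the multiplicative model are illustrative assumptions, while the 33% and 95% figures come from the text.

```python
def expected_throughput_ratio(core_ratio: float, ipc_ratio: float,
                              clock_ratio: float = 1.0) -> float:
    """First-order estimate: throughput ~ cores x IPC x clock (an assumption)."""
    return core_ratio * ipc_ratio * clock_ratio


# 33% more cores at roughly 95% of the K10's IPC, with clocks kept equal.
same_clock = expected_throughput_ratio(core_ratio=1.33, ipc_ratio=0.95)
print(f"Expected gain at equal clocks: {(same_clock - 1) * 100:.0f}%")
```

Under this simple model the design targets alone account for a gain in the mid-twenties, which is why results well above 30% at equal clocks point to something the architecture does genuinely better.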

The libquantum score is the most spectacular. Bulldozer performs over twice as fast as Magny-Cours, and its score of 2750 is not that far from the almighty Xeon E5-2660 at 2.2GHz (3310). Bulldozer is only 17% slower here.
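The 17% figure follows directly from the two scores quoted above. A minimal sketch of the arithmetic (the helper name is hypothetical):

```python
def percent_slower(score: float, reference: float) -> float:
    """How far `score` falls short of `reference`, as a percentage."""
    return (1.0 - score / reference) * 100.0


bulldozer_libquantum = 2750  # score quoted in the text
xeon_libquantum = 3310       # Xeon at 2.2GHz, score quoted in the text

gap = percent_slower(bulldozer_libquantum, xeon_libquantum)
print(f"Bulldozer trails the Xeon by {gap:.0f}%")
```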

At first sight, there is nothing that should make libquantum run very fast on Bulldozer. Libquantum contains a high percentage of branches (27%), and we have seen before that although Bulldozer has a somewhat improved branch predictor, the deeper pipeline and higher branch misprediction penalty can cause a lot of trouble. In fact, Perlbench (23%), Sjeng Chess (21%), and Gobmk (AI, 21%) are branchy software and are among the worst performing tests on Bulldozer. Luckily, libquantum has branches that are much easier to predict: it has one of the lowest branch misprediction rates of all these programs (fewer than six mispredictions per 1000 instructions).

We all know that Bulldozer can deal much better with loads and stores than Magny-Cours. However, libquantum has the lowest (!) percentage of loads and stores: 19% in total (14% loads, 5% stores). So the improved Memory Level Parallelism of Bulldozer is not the answer either. The table below gives an idea of the instruction mix of SPEC CPU2006int.

| SPEC Int 2006 Application | IPC* | Branches (%) | Stores (%) | Loads (%) | Total Loads/Stores (%) |
|---|---|---|---|---|---|
| perlbench | 1.67 | 23 | 12 | 24 | 36 |
| Bzip compression | 1.43 | 15 | 9 | 26 | 35 |
| Gcc | 0.83 | 22 | 13 | 26 | 39 |
| mcf | 0.28 | 19 | 9 | 31 | 40 |
| Go AI | 1.00 | 21 | 14 | 28 | 42 |
| hmmer | 1.67 | 8 | 16 | 41 | 57 |
| Chess | 1.25 | 21 | 8 | 21 | 29 |
| libquantum | 0.43 | 27 | 5 | 14 | 19 |
| h264 encoding | 2.00 | 8 | 12 | 35 | 47 |
| omnetpp | 0.38 | 21 | 18 | 34 | 52 |
| astar | 0.56 | 17 | 5 | 27 | 32 |
| XML processing | 0.66 | 26 | 9 | 32 | 41 |

* IPC as measured on Core 2 Duo.
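As a quick cross-check of the instruction mix, the sketch below recomputes the combined load/store share from the store and load percentages given in the text and table, then looks up which benchmark is the branchiest and which touches memory the least. The dictionary layout and variable names are illustrative assumptions.

```python
# (branches %, stores %, loads %) per benchmark, taken from the instruction mix.
mix = {
    "perlbench": (23, 12, 24),
    "Bzip compression": (15, 9, 26),
    "Gcc": (22, 13, 26),
    "mcf": (19, 9, 31),
    "Go AI": (21, 14, 28),
    "hmmer": (8, 16, 41),
    "Chess": (21, 8, 21),
    "libquantum": (27, 5, 14),
    "h264 encoding": (8, 12, 35),
    "omnetpp": (21, 18, 34),
    "astar": (17, 5, 27),
    "XML processing": (26, 9, 32),
}

# Total load/store share is simply stores + loads.
totals = {name: stores + loads for name, (_, stores, loads) in mix.items()}

lowest_mem = min(totals, key=totals.get)
branchiest = max(mix, key=lambda name: mix[name][0])

print(f"Fewest loads/stores: {lowest_mem} ({totals[lowest_mem]}%)")
print(f"Most branches: {branchiest} ({mix[branchiest][0]}%)")
```

Both lookups land on libquantum, which is what makes its result surprising: it is the branchiest benchmark and the one that leans least on loads and stores.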

Libquantum has a relatively high number of cache misses on most CPUs as it works with a 32MB data set, so it benefits from a larger cache. The 8MB L3 vs 6MB L3 might have boosted performance a bit, but the main reason is the vastly improved prefetching inside Bulldozer. According to researchers from the University of Texas at Austin and Microsoft, the prefetch requests in libquantum are very accurate. If you check AMD's own publications, you'll notice that there were two major changes meant to raise the single-threaded performance of the Bulldozer architecture (compared to the previous ones): an improved Turbo Core and vastly improved prefetching.

Next, let's look at the excellent mcf result. mcf is by far the most memory intensive SPEC CPU Int benchmark out there. mcf misses the L1 data cache about five times more than all the other benchmarks on average. The hit rate is lower than 70%! mcf also misses the last level cache up to eight times more than all other benchmarks. Clearly mcf is a prime candidate to benefit from the vastly improved L/S units of Bulldozer.

Omnetpp is not that extreme, but the instruction mix has 52% loads and stores, and the L2 and last level cache misses are twice as high as the rest of the pack. In contrast to mcf, the number of branch mispredictions is much lower, despite the fact that it has a similar, relatively high percentage of branches (about 20%). So the somewhat lower reliance on the memory subsystem is largely compensated for by a much lower number of branch mispredictions. To be more precise: the number of branch mispredictions is about three times lower! This most likely explains why Bulldozer makes a slightly larger step forward in omnetpp compared to the previous AMD architecture than it does in mcf.

84 Comments

Schmide - Wednesday, May 30, 2012 - link

I do remember from some analysis that the L2 cache reads were as slow as main memory. That's great if you hit a L2 cache, but it's not going to buy you anything if it's that slow.

SocketF - Wednesday, May 30, 2012 - link

Impossible, you probably mix some things up, maybe latency and bandwidth?

Schmide - Wednesday, May 30, 2012 - link

Yup. It was late at night, I was thinking writes. The L1 write through basically makes L1 writes the same as L2 writes.

Homeles - Wednesday, May 30, 2012 - link

Not even close. L2 is about 10 times faster than main memory.

http://www.anandtech.com/show/4955/the-bulldozer-r...

jcollake - Wednesday, May 30, 2012 - link

Through research here at Bitsum on the AMD Bulldozer platform (specifically the 9150), I found a couple things of interest.

First, disabling CPU core parking seems to make a big difference in performance. I believe that by default the CPU core parking is just too aggressive. I wrote a tool to let you enable or disable CPU parking in *real time* without a reboot, so you can test this yourself. It is called ParkControl, http://bitsum.com/about_cpu_core_parking.php . For *me*, it seemed to make a night and day difference.

Second, I am working on a neat little benchmarking tool called ThreadRacer, currently only in alpha prototype. It allows you to really see the effects of these paired cores, and how much it matters that the scheduler is properly aware of them. Take this 1 second or so sample, as seen in the screenshot here (downloads available, but it is an early prototype that I'll quickly be finishing up): http://bitsum.com/forum/index.php/topic,1434.0.htm...

The scheduler update that Microsoft issued of course treats these paired cores as it would a hyper-threaded core. Indeed, the concept is very similar, except perhaps to avoid patents, AMD took the 'share a little' instead of 'share a lot' approach when it comes to shared computational resources. This was the proper way to *quickly* address the issue, but I believe the scheduler is still suboptimal on these processors (likely to be resolved in Windows 8 or a later update to Windows 7/Vista).

For Bulldozer, as you know, they are two real processors, but because they have shared dependencies, the performance can really be drained if the other processor in the 'pair' is busy. You can see the effects from ThreadRacer, the core without its pair busy quickly out-paced the paired cores that were both busy.

jcollake - Wednesday, May 30, 2012 - link

I should have also mentioned that ThreadRacer also allows you to see how a single CPU consuming thread gets swapped around to different cores (the multi-core thread in the utility). This is its other use. The less the thread gets swapped from core to core, the greater the performance will be. It is interesting to compare and contrast the behavior of the scheduler. I fully believe that most the problems with Bulldozer are due to the Windows scheduler, something that could be tested by using linux and replacing the scheduler with a custom one, or an off the shelf alternative that may behave substantially differently than the Windows scheduler.

SocketF - Wednesday, May 30, 2012 - link

Some people running BOINC programs have reported that Windows-applications run faster when they use a Linux and WINE or a VM.

The Win-scheduler especially hurts AMD chips, because of the huge exclusive caches. If a thread on an intel CPU is switched to another core, it can load the warmed up L2 portion from the L2 inclusive L3.

I did some google-search and it seems that under Linux, each core has its own run-queue, whereas on Windows, there is only one run queue for all cores.

But i didn't delve into it deeply, there are so many different schedulers for Linux, seems to be a complex issue ;-)

Btw. your link to download is off limits for non-members of your discussion board:

-------------------------

Warning!

The topic or board you are looking for appears to be either missing or off limits to you.

Please login below or register an account with Bitsum Forums.

----------------------------

Maybe you can upload it somewhere else?

jcollake - Saturday, September 1, 2012 - link

Sorry for the late reply. First, the forum permissions were fixed. Second, the utility (still in early stages) is included in Process Lasso *and* available here: http://bitsum.com/threadracer.php

eoerl - Wednesday, May 30, 2012 - link

Very interesting article, together with the hardware.fr report there's a lot of information. One question though, if you read commentaries : you didn't speak much about the influence of compilers. This proved to change a lot of things on Linux (see phoronix extensive tests on both ivy bridge and bulldozer depending on compiler used and compiler options, for example

http://www.phoronix.com/scan.php?page=article&...

http://www.phoronix.com/scan.php?page=article&...

Benchmark results really change a lot with bulldozer, much more than with ivy or sandy bridge. Do you think AMD lost being oversensitive to compiler optimisations, due to a very original architecture ?

JohanAnandtech - Thursday, May 31, 2012 - link

I deliberately avoided the compiler issues as this would make the article too convoluted. But notice that what we found is not influenced by compiler choice: we find the same indications in SAP and SQL Server (compiled with "conservative" compilers and compiler settings) as in SPEC CPU2006, which uses the most aggressively optimized compiler and settings possible.