The Intel SSD 710 (200GB) Review

by Anand Lal Shimpi on September 30, 2011 8:53 PM EST

Posted in: Storage, SSDs, Intel, Intel SSD 710

AnandTech Storage Bench 2011

Last year we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with the majority of what we have today in terms of SSD benchmarks.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes and typically involved only 4GB of writes specifically. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

1) The MOASB, officially called AnandTech Storage Bench 2011 - Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

The test has 2,168,893 read operations and 1,783,447 write operations. The IO breakdown is as follows:

AnandTech Storage Bench 2011 - Heavy Workload IO Breakdown

| IO Size | % of Total |
| --- | --- |
| 4KB | 28% |
| 16KB | 10% |
| 32KB | 10% |
| 64KB | 4% |

Only 42% of all operations are sequential; the rest range from pseudo to fully random (with most falling in the pseudo-random category). Average queue depth is 4.625 IOs, with 59% of operations taking place in an IO queue of 1.
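To put the quoted figures in proportion, here is a minimal Python sketch that derives the read/write split and the share of operations not covered by the four listed IO sizes. The constants come straight from the text above; the function name `workload_summary` is made up for illustration, and treating the unlisted remainder as "other sizes" is an assumption, since the breakdown only names four sizes.

```python
# Figures quoted in the article for the Heavy Workload trace.
READ_OPS = 2_168_893
WRITE_OPS = 1_783_447
IO_SIZE_SHARE_PCT = {"4KB": 28, "16KB": 10, "32KB": 10, "64KB": 4}
SEQUENTIAL_PCT = 42


def workload_summary() -> dict:
    """Derive a few ratios from the quoted numbers (illustrative helper)."""
    total_ops = READ_OPS + WRITE_OPS
    listed_pct = sum(IO_SIZE_SHARE_PCT.values())
    return {
        "total_ops": total_ops,
        "read_pct": 100 * READ_OPS / total_ops,
        "write_pct": 100 * WRITE_OPS / total_ops,
        # Share of operations covered by the four sizes in the table.
        "listed_size_pct": listed_pct,
        # Assumption: everything else uses sizes the table does not list.
        "other_size_pct": 100 - listed_pct,
        # Pseudo-random plus fully random operations.
        "non_sequential_pct": 100 - SEQUENTIAL_PCT,
    }


if __name__ == "__main__":
    for name, value in workload_summary().items():
        print(f"{name}: {value:,.1f}" if isinstance(value, float) else f"{name}: {value:,}")
```

Run as a script, this prints the totals; roughly 55% of the operations are reads, even though the test is described as unusually write intensive by volume.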

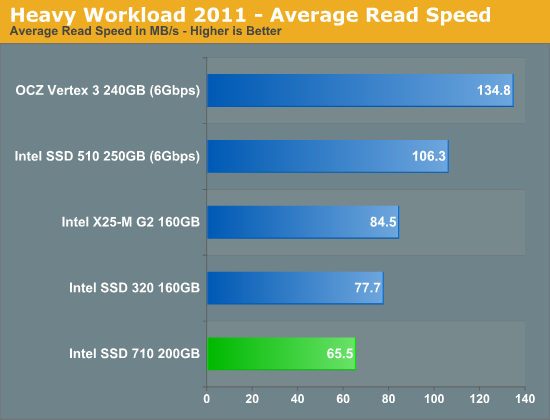

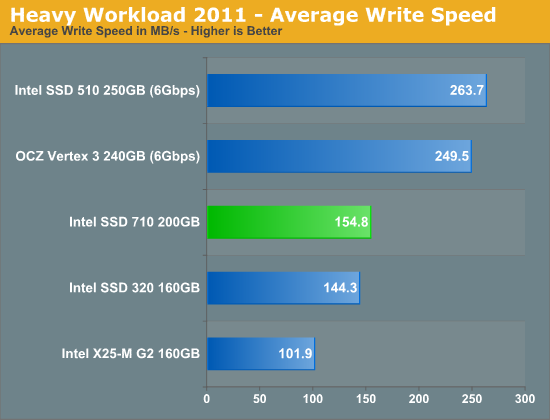

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result I'm going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time I'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, I will also break out performance into reads, writes and combined. The reason I do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
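As a rough illustration of these two metrics, the Python sketch below computes disk busy time and average MB/s from a list of trace records. It is not the actual Storage Bench tooling: the `IoRecord` fields, the function names, and the choice to define average data rate as bytes transferred divided by busy time are all assumptions made for the example. Busy time is taken as the total time during which at least one IO is outstanding, so idle gaps are excluded.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class IoRecord:
    """One completed IO from a trace (hypothetical record layout)."""
    op: str            # "read" or "write"
    size_bytes: int
    start_s: float     # time the IO was issued
    end_s: float       # time the IO completed


def busy_time_s(records: Iterable[IoRecord]) -> float:
    """Total time with at least one IO in flight (idle gaps excluded)."""
    intervals = sorted((r.start_s, r.end_s) for r in records)
    busy = 0.0
    cur_start = cur_end = None
    for start, end in intervals:
        if cur_end is None or start > cur_end:
            # Gap before this IO: close the previous busy interval.
            if cur_end is not None:
                busy += cur_end - cur_start
            cur_start, cur_end = start, end
        else:
            # Overlapping IO: extend the current busy interval.
            cur_end = max(cur_end, end)
    if cur_end is not None:
        busy += cur_end - cur_start
    return busy


def average_mb_per_s(records: Iterable[IoRecord], op: Optional[str] = None) -> float:
    """Bytes moved per second of busy time, optionally for one op type."""
    selected = [r for r in records if op is None or r.op == op]
    busy = busy_time_s(selected)
    if busy == 0:
        return 0.0
    return sum(r.size_bytes for r in selected) / 1_000_000 / busy


if __name__ == "__main__":
    trace = [
        IoRecord("read", 4_000_000, 0.0, 0.5),
        IoRecord("write", 2_000_000, 0.25, 1.0),   # overlaps the first read
        IoRecord("read", 1_000_000, 2.0, 2.5),     # issued after an idle gap
    ]
    print("busy time (s):", busy_time_s(trace))
    for kind in (None, "read", "write"):
        print(kind or "combined", "MB/s:", average_mb_per_s(trace, kind))
```

Passing `op="read"` or `op="write"` gives the separate read and write breakdowns, while `op=None` gives the combined figure. A faster drive completes the same IOs sooner, so its busy time shrinks and its average MB/s rises.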

There's also a new light workload for 2011. This is a far more reasonable, typical everyday use case benchmark. Lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB but it's still multiple times more write intensive than what we were running last year.

As always I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests. All of the older tests are still run on our X58 platform.

AnandTech Storage Bench 2011 - Heavy Workload

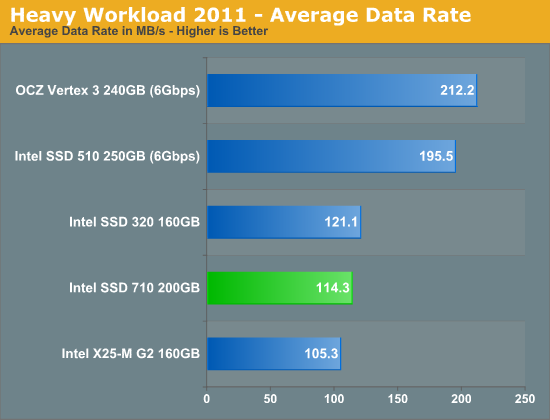

We'll start out by looking at average data rate throughout our new heavy workload test:

I threw in our standard desktop tests just to hammer home the point that the 710 simply shouldn't be used for client computing. Not only is MLC-HET overkill for client workloads, but the Intel SSD 320's firmware is better optimized for client computing.

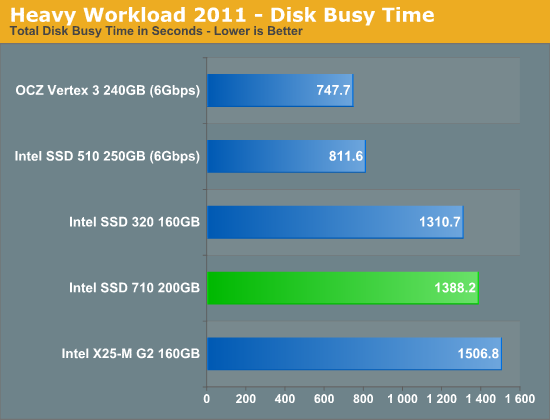

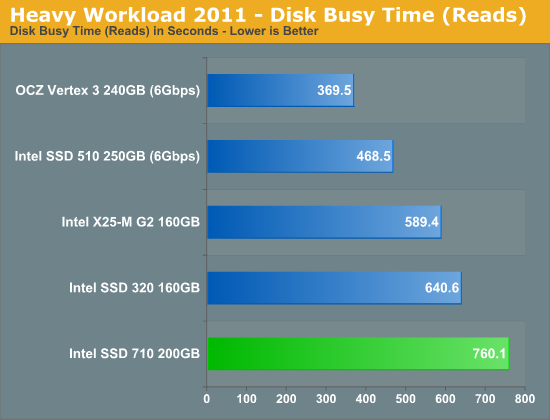

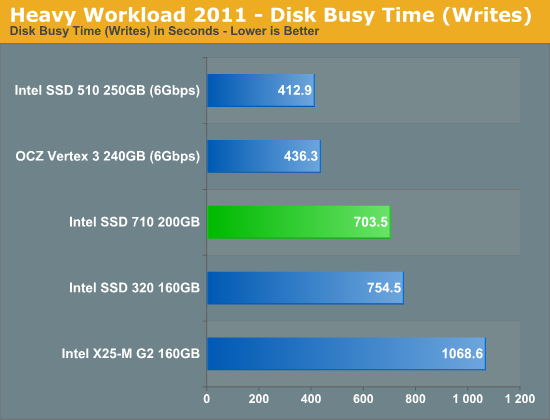

The next three charts just represent the same data, but in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy during this entire test. Note that disk busy time excludes any and all idles; this is just how long the SSD was busy doing something:

68 Comments

Juri_SSD - Saturday, October 1, 2011

Anand, I have read your previous articles and they were all somehow good. But this one misses one important thing, and therefore there are many comparisons that aren't correct. When I saw the video, I just thought what is wrong with you.

First of all: How dare you compare a 50nm Flash-SSD with a 25 nm Flash-SSD and say that there is only a cost saving because of the use of cheaper MLC instead of SLC? That is so wrong! You can just shrink the 50nm SLC to 35nm SLC and you have lowered the price to half, then you go on and shrink it to 25nm and you have a further reduction in price and end up at 1/4 the price of a 50nm SLC-NAND just by shrinking the cells.

Secondly: How dare you compare a 50nm FLASH-SSD with a 25nm Flash-SSD and then say that you have now more than 64 GB just because Intel wisely uses MLC? Hello? What about shrinking again? Your video is so wrong… 64 GB 50nm SLC -> shrinking -> 128 GB 34nm SLC -> shrinking -> 256 GB 25 nm SLC!

What do we have? Intel could make a 256 GB SLC-drive just by shrinking. Instead of pointing this out, you told people how “good” a job Intel does by sorting out good MLC-NAND to compete against a very very very old, really old SSD. The only winner of this “good” job is Intel itself. The enterprise consumer waits for a competitor who actually shrinks the SLC-NAND to 25nm.

Then again: You compare GB/Dollar. That is nice. And then you give a long speech about servers that really need all these p/e-cycles. But if the servers really need all these p/e-cycles, why do you not compare p/e-cycles/Dollar? Perhaps because the new 710-SSD really sucks in that comparison, even against a really old SLC-SSD like the Intel X25-E?

Then again, you can say: “All right, you are right Juri, but there are no 34nm SLC-Flash drives.” Oops, this is also untrue: there are 34nm SLC-Flash drives, so why don’t you compare GB/Dollar with these drives? You don’t know what I mean? How about the Intel SSD 311? If you compare that 20 GB SLC 34nm NAND-Flash drive, you see that you could easily reach the price of a 710-SSD with a simple shrink of SLC-NAND, just as I said in the first point.

I am really disappointed by your review.

PS: If you think my English is bad, you can try reading in German: http://hardware-infos.com/news.php?news=3946

lemonadesoda - Saturday, October 1, 2011

I disagree with the statement that the SSD market is a race to the bottom. I think this is a lazy catchphrase that demonstrates a company's unwillingness to innovate. It is like saying the CPU or GPU or TFT or mobile handset business is a race to the bottom. Clearly, this is not true!

There is plenty of room for Intel to innovate, differentiate, and gain margin on consumer SSD.

What SSD "technologies" would be interesting for the consumer? Encryption; Response-to-theft management; Wear leveling; SMART 2; Thunderbolt, etc. that would allow Intel to lead and to charge a premium on the consumer product.

Intel owns the Light Peak/Thunderbolt technology. Intel should get Thunderbolt onto its PC chipset and get a range of SSDs onto Thunderbolt. Why are we using (e)SATA as a slow intermediary layering protocol when Thunderbolt could do this and do it better? With Intel Thunderbolt on the Intel mainboard, and compatible Intel SSD, we would no longer find PCIe based SSD or RAID0 SATA interesting. Intel could claim the enthusiast (not just enterprise) market in one swoop. And enthusiast drives consumer branding and perception.

There's still a lot of room for Intel in the SSD market. Or perhaps the current team has run out of ideas and motivation?

Friendly0Fire - Saturday, October 1, 2011

Actually, no, there's a point you're missing. At the moment the biggest barrier to adoption with SSDs is... price. Specifically cost/GB. CPUs, GPUs and mobile handsets can be had for all price ranges, thus you see a good amount of spread between low and high end. CPUs and GPUs also have the advantage of being bundled in prefab computers, while mobiles get heavy price cuts through mobile plans.

SSDs, however, are still restricted to a niche market, only seen as an optional component on high-end computers or bought directly as a separate piece. Sadly, most people still consider "performance" to be summarized by how many GHz and GBs your computer has. SSDs can improve performance tremendously, but good luck explaining what IOPS or bandwidth mean. Until prices are closer to that of magnetic drives, most people won't even be interested in learning about them.

So yeah, for the time being SSDs are a race to the bottom in the customer market. Performance is what I'd call good enough for 99.95% of computer users, even when you consider 3Gbps last-generation drives. What matters now is price drops.

EddyKilowatt - Tuesday, October 4, 2011

I agree that price is the #1 barrier in the minds of potential adopters, but right after that comes reliability, and I think this looms equally large once people get used to the price and understand the performance benefit.

Many are waiting for all the myriad 'issues' to get sorted out... until they do, it won't truly be a price-driven commodity market. And until they do, Intel can offer added value -- if they're careful about reliability themselves -- that justifies the price premium they'd like to charge.

Perhaps SSDs aren't as architecture and innovation driven as CPUs, but there's way more to them than just bulk memory mass produced at sweatshop wages.

AnnonymousCoward - Saturday, October 1, 2011

Synthetic hard drive comparisons are not reality.

Luke212 - Sunday, October 2, 2011

Anand, Businesses do not run SSDs as single drives or raid 0. Failures being 1-2% it is too disruptive to business (unless they are read only). Can you consider testing these drives in Raid 1, which is how they are used in real life?

Iketh - Monday, October 17, 2011

That would depend on the raid controller's performance, not the drive.

ClagMaster - Sunday, October 2, 2011

"It wouldn't be untrue to say that Intel accomplished its mission."

Means after the reader deciphers this ...

"It would be true to say that Intel accomplished its mission."

Do not do this. I have skinned engineers alive for making this kind of double-negative grammatical error in their reports I often have to shovel through. I hate teaching engineers English. Do make the change.

Your comments about Intel's leadership in the consumer SSD and enterprise SSD development pretty much hit the nail on the head.

Intel essentially created the consumer market for these SSDs. Not OCZ, Marvell and SandForce. They are the dogs eating the crumbs.

Intel does some serious prototype testing before these products hit the shelves. Far more than its competitors.

This is another well balanced, high quality SSD.

ClagMaster - Sunday, October 2, 2011

When I say well balanced, I mean this is not an SSD for the obsessive-compulsive speed freak with money to burn.

This SSD is a good balance of cost, performance and reliability for the enterprise space. It's optimized for cost and reliability, which limits performance somewhat.

Although slow compared to a Vertex 3, the SSD710 would still provide fine performance for consumer PCs as a boot drive.

AnnonymousCoward - Sunday, October 2, 2011

"slow compared to a Vertex 3, the SSD710 would still provide fine performance"

Wouldn't it be nice to have quantified results??? Like Windows boot time, time to launch programs, and time to open big files.

Synthetic benchmarks are both inaccurate, and provide no relative information. And synthetic benchmarks have been known to be inaccurate, on Anand's own site!

____________

http://tinyurl.com/yamfwmg

In IOPS, RAID0 was 20-38% faster; then the loading *time* comparison had RAID0 giving equal and slightly worse performance! Anand concluded, "Bottom line: RAID-0 arrays will win you just about any benchmark, but they'll deliver virtually nothing more than that for real world desktop performance."

____________

Anand stubbornly sticks to his flawed SSD performance test methods. If anyone is deciding between a Vertex 3 and an Intel, the single most important data would be the quantified time differences in performing different operations. You'll have to go to another website to find that out.