NVIDIA's GeForce GTX 560: The Top To Bottom Factory Overclock

by Ryan Smith on May 17, 2011 9:00 AM EST

NVIDIA’s GF104 and GF114 GPUs have been a solid success for the company so far. Ten months after GF104 launched in the GTX 460 series, NVIDIA has been slowly supplementing and replacing their former $200 king. In January we saw the launch of the GF114-based GTX 560 Ti, which gave us our first look at what a fully enabled GF1x4 GPU could do. However, the GTX 560 Ti was positioned above the GTX 460 series in both performance and price, so it was more an addition to NVIDIA’s lineup than a replacement for the GTX 460.

With each GF11x GPU effectively being a half-step above its GF10x predecessor, NVIDIA’s replacement strategy has been to split a 400 series card’s original market between two GF11x GPUs. For the GTX 460, the low end of its market was partially split off to the GTX 550 Ti, which came fairly close to the GTX 460 768MB’s performance. The GTX 460 1GB has remained in place, however, and today NVIDIA is finally starting to change that with the GeForce GTX 560. Based upon the same GF114 GPU as the GTX 560 Ti, the GTX 560 will be the GTX 460 1GB’s eventual high-end successor and NVIDIA’s new $200 card.

| | GTX 570 | GTX 560 Ti | GTX 560 | GTX 460 1GB |
|---|---|---|---|---|
| Stream Processors | 480 | 384 | 336 | 336 |
| Texture Address / Filtering | 60/60 | 64/64 | 56/56 | 56/56 |
| ROPs | 40 | 32 | 32 | 32 |
| Core Clock | 732MHz | 822MHz | >=810MHz | 675MHz |
| Shader Clock | 1464MHz | 1644MHz | >=1620MHz | 1350MHz |
| Memory Clock | 950MHz (3800MHz data rate) GDDR5 | 1002MHz (4008MHz data rate) GDDR5 | >=1001MHz (4004MHz data rate) GDDR5 | 900MHz (3600MHz data rate) GDDR5 |
| Memory Bus Width | 320-bit | 256-bit | 256-bit | 256-bit |
| Frame Buffer | 1.25GB | 1GB | 1GB | 1GB |
| FP64 | 1/8 FP32 | 1/12 FP32 | 1/12 FP32 | 1/12 FP32 |
| Transistor Count | 3B | 1.95B | 1.95B | 1.95B |
| Manufacturing Process | TSMC 40nm | TSMC 40nm | TSMC 40nm | TSMC 40nm |
| Price Point | $329 | ~$239 | ~$199 | ~$160 |

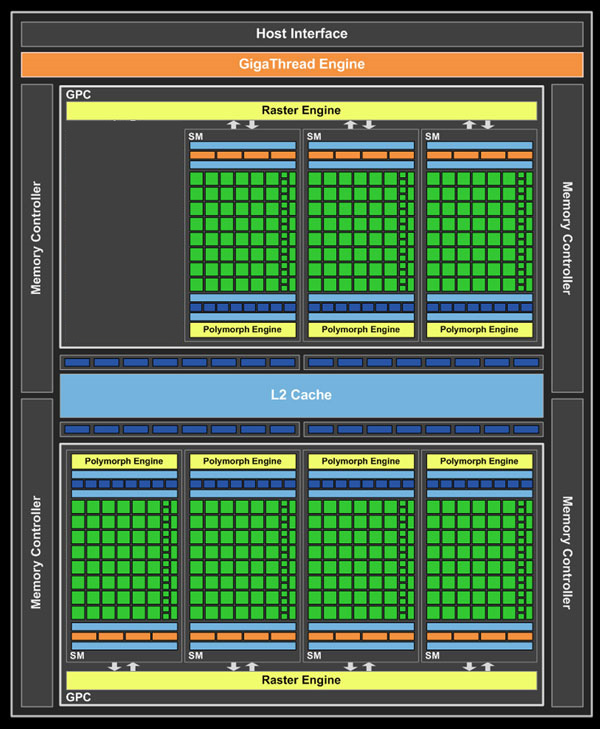

The GTX 560 is basically a higher clocked version of the GTX 460 1GB. The GTX 460 used a cut-down configuration of the GF104, and GTX 560 will be doing the same with GF114. As a result both cards have the same 336 SPs, 7 SMs, 32 ROPs, 512KB of L2 cache, and 1GB of GDDR5 on a 256-bit memory bus. In terms of performance the deciding factor between the two will be the clockspeed, and in terms of power consumption the main factors will be a combination of clockspeed, voltage, and GF114’s transistor leakage improvements over GF104. All told, NVIDIA’s base configuration for a GTX 560 puts the card at 810MHz for the core clock and 4004MHz (data rate) for the memory clock, which compared to the reference GTX 460 1GB is 135MHz (20%) faster for the core clock and 404MHz (11%) faster for the memory clock. NVIDIA puts the TDP at 150W, which is 10W under the GTX 460 1GB.
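The clock comparisons above are simple ratios against the GTX 460 1GB’s reference clocks. As a rough sketch in Python, using the clocks from the spec table (the helper names `clock_delta` and `bandwidth_gb_s` are our own, and the bandwidth figure assumes the usual peak calculation of data rate times bus width in bytes), the numbers work out as follows:

```python
# Clocks taken from the spec table above, in MHz; "memory" is the GDDR5 data rate.
GTX_460_1GB = {"core": 675, "memory": 3600}
GTX_560_BASE = {"core": 810, "memory": 4004}


def clock_delta(new: float, old: float) -> tuple[float, float]:
    """Return the absolute difference and the percentage increase of new over old."""
    diff = new - old
    return diff, 100.0 * diff / old


def bandwidth_gb_s(data_rate_mhz: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s: transfers per second times bytes per transfer."""
    return data_rate_mhz * 1e6 * (bus_width_bits / 8) / 1e9


for domain in ("core", "memory"):
    diff, pct = clock_delta(GTX_560_BASE[domain], GTX_460_1GB[domain])
    print(f"{domain}: +{diff} MHz ({pct:.1f}%)")

print(f"GTX 560 peak bandwidth: {bandwidth_gb_s(4004, 256):.1f} GB/s")
print(f"GTX 460 1GB peak bandwidth: {bandwidth_gb_s(3600, 256):.1f} GB/s")
```

This reports a 135MHz (20.0%) core gain and a 404MHz (11.2%) memory gain, with peak bandwidth of roughly 128.1GB/s versus 115.2GB/s. Since both cards share the same 256-bit bus, the bandwidth gap is simply the memory clock gap.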

With that said, this launch is going to be more chaotic than usual for an NVIDIA mid-range product launch. While NVIDIA and AMD both encourage their partners to differentiate their mid-range cards based on a number of factors including factory overclocks and the cooler used, these products are always launched alongside a reference card. However, the GTX 560 is going to be a reference-less launch: NVIDIA is not doing a retail reference design for the GTX 560. This is a fairly common situation for the low end, where we’ll often test a reference design that is never used for retail cards, but it’s quite unusual to not have a reference design for a mid-range card.

As a result, in lieu of a reference card to refer to, we have a bit of chaos in terms of the specs of the cards launching today. As long as you’re willing to spend a bit more on power, GF114 clocks really well, something we’ve already seen with the GTX 560 Ti. This has led to partners launching a number of factory overclocked GTX 560 Ti cards and few if any reference clocked cards, as the retail market does not have the stringent power requirements of the OEM market. So while OEMs have been using reference clocked cards for the lowest power consumption, most retail cards are overclocked. Here are the clocks we're seeing with the GTX 560 launch lineup.

GeForce GTX 560 Launch Card List

| Card | Core Clock | Memory Clock (Data Rate) |
|---|---|---|
| ASUS GeForce GTX 560 Top | 925 MHz | 4200 MHz |
| ASUS GeForce GTX 560 OC | 850 MHz | 4200 MHz |
| Palit GeForce GTX 560 SP | 900 MHz | 4080 MHz |
| MSI GeForce GTX 560 Twin FrozrII OC | 870 MHz | 4080 MHz |
| Zotac GeForce GTX 560 AMP! | 950 MHz | 4400 MHz |
| KFA2 GeForce GTX 560 EXOC | 900 MHz | 4080 MHz |
| Sparkle GeForce GTX 560 Calibre | 900 MHz | 4488 MHz |
| EVGA GeForce GTX 560 SC | 850 MHz | 4104 MHz |
| Galaxy GeForce GTX 560 GC | 900 MHz | 4004 MHz |

This is why NVIDIA has decided to forgo a reference card altogether, leaving both card designs and clocks up to their partners. As a result, we expect every GTX 560 we see on the retail market to have some kind of factory overclock, and all of them will use a custom design. Clocks will be all over the place, while the designs are largely recycled GTX 460/GTX 560 Ti designs. This means we’ll see a variety of cards, but there’s nothing we can point to as a baseline. Reference clocked cards may show up in the market, but even NVIDIA is unsure of that at this time. The list of retail cards NVIDIA has given us spans core clocks from 850MHz to 950MHz, meaning the performance of some of these cards is going to be noticeably different from the others. Our testing methodology has changed somewhat as a result, which we’ll cover in depth in our testing methodology section.

With a wide variety of GTX 560 card designs and clocks, there’s also going to be a variety of prices. The MSRP for the GTX 560 is $199, as NVIDIA’s primary target for this card is the lucrative $200 market. However with factory overclocks in excess of 125MHz, NVIDIA’s partners are also using these cards to fill in the gap between the GTX 560 and the GTX 560 Ti. So the slower 850MHz-900MHz cards will be around $199, while the fastest cards will be closer to $220-$230. Case in point, the card we’re testing today is the ASUS GTX 560 DirectCU II Top, ASUS’s highest clocked card. While their 850MHz OC card will be $199, the Top will be at $219.
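Because there is no reference card, one way to compare the launch cards is to measure each against NVIDIA’s base 810MHz core / 4004MHz memory configuration. The following Python sketch does that with the clocks from the launch card list; the names `LAUNCH_CARDS` and `overclock_pct` are purely illustrative.

```python
# NVIDIA's base GTX 560 configuration (MHz); memory is the GDDR5 data rate.
BASE_CORE_MHZ = 810
BASE_MEMORY_MHZ = 4004

# (core clock, memory data rate) in MHz, copied from the launch card list.
LAUNCH_CARDS = {
    "ASUS GeForce GTX 560 Top": (925, 4200),
    "ASUS GeForce GTX 560 OC": (850, 4200),
    "Palit GeForce GTX 560 SP": (900, 4080),
    "MSI GeForce GTX 560 Twin FrozrII OC": (870, 4080),
    "Zotac GeForce GTX 560 AMP!": (950, 4400),
    "KFA2 GeForce GTX 560 EXOC": (900, 4080),
    "Sparkle GeForce GTX 560 Calibre": (900, 4488),
    "EVGA GeForce GTX 560 SC": (850, 4104),
    "Galaxy GeForce GTX 560 GC": (900, 4004),
}


def overclock_pct(clock_mhz: float, base_mhz: float) -> float:
    """Percentage by which a factory clock exceeds the base clock."""
    return 100.0 * (clock_mhz - base_mhz) / base_mhz


# Print each card's factory overclock, slowest core clock first.
for name, (core, memory) in sorted(LAUNCH_CARDS.items(), key=lambda kv: kv[1][0]):
    print(f"{name}: core +{core - BASE_CORE_MHZ} MHz ({overclock_pct(core, BASE_CORE_MHZ):.1f}%), "
          f"memory +{memory - BASE_MEMORY_MHZ} MHz ({overclock_pct(memory, BASE_MEMORY_MHZ):.1f}%)")

cores = [core for core, _ in LAUNCH_CARDS.values()]
print(f"Core clock range: {min(cores)}-{max(cores)} MHz")
```

By this measure the 850MHz cards sit about 4.9% above base, while the 950MHz Zotac AMP! is 140MHz (about 17.3%) above it, which is why the fastest cards are being used to fill the gap toward the GTX 560 Ti.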

For the time being NVIDIA won’t have a ton of competition from AMD right at $200. With the exception of an errant card now and then, Radeon HD 6950 prices are normally $220+; meanwhile Radeon HD 6870 prices are between $170 and $220, with the bulk of those cards being well under $200. So for the slower GTX 560s their closest competition will be factory overclocked 6870s and factory overclocked GTX 460s, the latter of which are expected to persist for at least a few more months. Meanwhile for the faster GTX 560s the competition will be cheap GTX 560 Tis and potentially the 1GB 6950. The mid-range market is still competitive, but for the moment NVIDIA is the only one with a card specifically aligned for $199.

May 2011 Video Card Prices

| NVIDIA | Price | AMD |
|---|---|---|
| | $700 | Radeon HD 6990 |
| | $480 | |
| | $320 | Radeon HD 6970 |
| | $260 | Radeon HD 6950 2GB |
| | $230 | Radeon HD 6950 1GB |
| | $200 | |
| | $180 | Radeon HD 6870 |
| | $160 | Radeon HD 6850 |
| | $150 | Radeon HD 6790 |
| | $130 | |

Finally, I’d like to once again comment on this card’s name. I’m beginning to sound like a broken record here and I know it, but video card naming over the last year has been frustrating. NVIDIA has a prefix (GTX), a model number (560), and a suffix (Ti), except when they don’t have a suffix. With both a prefix and a model number, a suffix was already superfluous, but it’s particularly problematic when some cards have a suffix and some don’t. Remember the days of the GeForce 6800 series, when whenever you wanted to talk about the vanilla 6800, no one could easily tell if you were talking about the series or the non-suffixed card? Well, we’re back to those days: GTX 560 is both a series and a specific video card. Suffixes are fine as long as they’re always used, but when they’re not, these situations can lead to confusion.

66 Comments


L. - Thursday, May 19, 2011

You'll have some trouble doing an apples to apples comparison between a 580 and a 6970 ...The 580 tdp goes through the roof when you OC it, not so much with the 6970.

The 580 is a stronger gpu than the 6970 by a fair margin (2% @ 2560 to 10+%@1920), does not depend much on drivers.

The 580 costs enough to make you consider 6950 CrossFire as a better alternative, or even 6970 CF ...

Drivers for the two will still take a few months to become comparable, as both cards are relatively fresh.

mosox - Tuesday, May 17, 2011

And of course the only factory OCed card in there is from Nvidia. Can you show me ONE review in which you did the same for AMD? Including a factory OCed card in a general review and comparing it to stock Nvidia cards?

Are you trying to inform your readers or to pander to Nvidia by following their "review guides" to the letter? No transparency in the game selection (again that TWIMTBP-heavy list), OCed cards, what's next? Changing the site's color from blue to green? Letting the people at Nvidia do your reviews while you just post them here?

:(

TheJian - Wednesday, May 18, 2011

The heavily overclocked card is from ASUS. :)

NV didn't send them a card. There is no ref design for this card (reference clocks, but not a card). They tested at 3 speeds, giving us a pretty good idea of multiple variants you'd see in the stores. What more do you want?

Nvidia didn't have anything to do with the article. They put NV's name on the SLOWER speeds in the charts, but NV didn't send them a card. Get it? They down-clocked the card ASUS sent to show what NV would assume they'd be clocked at on the shelves. AT smartly took what they had to work with (a single 560 from ASUS - mentioned as why they have no SLI benchmarks in with 560), clocked it at the speeds you'd buy (based on checking specs online) and gave us the best idea they could of what we'd expect on a shelf or from oem.

Or am I just missing something in this article?

Is it Anandtech's problem NV has spent money on devs trying to get them to pay attention to their tech? AMD could do this stuff too if they weren't losing 6Bil in 3 years time (more?). I'm sure they do it some, but obviously a PROFITABLE company (for many more years than AMD - AMD hasn't made a dime since existence as a whole), with cash in the bank and no debt, can blow money on game devs and give them some engineers to help with drivers etc.

http://moneycentral.msn.com/investor/invsub/result...

That link should work..(does in FFox). If you look at a 10 year summary, AMD has lost about 6bil over 10yrs. That's NOT good. It's not easy coming up with a top games list that doesn't include TWIMTBP games.

I tend to agree with the link below. We'd have far more console ports if PC companies (Intel,AMD,Nvidia) didn't hand some money over to devs in some way shape or form. For example, engineers working with said game companies etc to optimize for new tech etc. We should thank them (any company that practices this). This makes better PC games.

Not a fan of fud, but they mention Dirt2, Hawx, battleforge & Halflife2 were all ATI enhanced games. Assassins Creed for NV and a ton more now of course.

http://www.fudzilla.com/graphics/item/11037-battle...

http://www.bit-tech.net/news/gaming/2009/10/03/wit...

Many more sites about both sides on their "enhancements" to games by working with devs. It's not straight cash they give, but usually stuff like engineers, help with promotions etc. With Batman, NV engineers wrote the AA part for their cards in the game. It looks better too. AMD was offered the same (probably couldn't afford it, so just complained saying "they made it not like our cards"). Whatever. They paid, you didn't, so it runs better on their cards in AA. More on NV's side due to more money, but AMD does this too.

bill4 - Tuesday, May 17, 2011

Crysis 1, but not Crysis 2? Where are the Witcher 2 benches (OK, that one may have been time constraints)? Doesn't LA Noire have a PC version you could bench? Maybe even Homefront? It's the same old, tired PC bench staples that most sites use. I can only guess this is because of laziness.

Ryan Smith - Tuesday, May 17, 2011

I expect to be using Crysis 1 for quite a bit longer. It's still the de facto outdoor video card killer. The fact that it still takes more than $500 in GPUs to run it at 1920 with full Enthusiast settings and AA means it's still a very challenging game.

Crysis 2 I'm not even looking at until the DX11 update comes out. We want to include more games that fully utilize the features of today's GPUs, not fewer.

LA Noire isn't out on the PC. In fact based on Rockstar's history with their last game, I'm not sure we'll ever see it.

In any case, the GPU test suite is due for a refresh in the next month. We cycle it roughly every 6 months, though we don't replace every single game every time.

mosox - Wednesday, May 18, 2011

Make sure you don't slip in any game that Nvidia doesn't like or they might cut you off from the goodies. 100% TWIMTBP please, no matter how obscure the games are.

TheJian - Wednesday, May 18, 2011

Ignore what's on a box. Go to metacritic and pick top scoring games from the last 12 months up to now. If the game doesn't get 80+/100 you pass; probably not enough people like or play it below that. You could toss in a beta of duke forever or something like that if you can find a popular game that's about to come out and has a benchmark built in. There's only a few games that need to go anyway (mostly because newer ones are out in the same series - Crysis 2 w/dx11 update when out).

Unfortunately mosox, you can't make an AMD list (not a long one) as they aren't too friendly with devs (no money or free manpower, duh), and devs will spend more time optimizing for the people that give them the most help. Plain and simple. If you reversed the balance sheets, AMD would be doing the same thing (more of it than now anyway).

In 2009 when this cropped up Nvidia had 220 people in a dept. that was purely losing money (more now?). It was a joke that they never made nvidia any money, but they were supplying devs with people that would create physx effects, performance enhancements etc to get games to take advantage of Nvidia's features. I don't have any problem with that until AMD doesn't have the option to send over people to do the same. AFAIK they are always offered, but can't afford it, decline and then whine about Nvidia. Now if NV says "mr gamemaker you can't let AMD optimize because you signed with us"...ok. Lawsuit.

mosox - Wednesday, May 18, 2011

I don't want "AMD games"; that would be the same thing. I just don't want obscure games that are fishy and biased as well.

Games in which a GTX 460/768 is better than a HD 6870 AND they're not even popular - but are in there to skew the final results exactly like in this review. Take out HAWX 2 and LP2 and do the performance summary again.

Lately in every single review you can see some Nvidia cards beating their AMD counterparts by 2-5% ONLY because of the biased game selection.

A HW site has to choose between being fair and unbiased and serve its readers or sell out to some company and become a shill for that company.

HAWX 2 is only present because Nvidia (not Ubisoft!) demanded that. It's a shame.

Spoelie - Wednesday, May 18, 2011

Both HAWX 2 and Lost Planet 2 are not in this review?

mosox - Wednesday, May 18, 2011

I was talking in general. HAWX2 isn't but HAWX is. And Civ 5 in which the AMD cards are lumped together at the bottom and there's no difference whatsoever between a HD 6870 and a HD 6950.