Z68 SSD Caching with Corsair's F40 SandForce SSD

by Anand Lal Shimpi on May 13, 2011 3:06 AM EST

AnandTech Storage Bench 2011 - Heavy Workload

Last year we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with the majority of what we have today in terms of SSD benchmarks.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes and typically involved only 4GB of writes specifically. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

First, some details:

1) The MOASB, officially called AnandTech Storage Bench 2011 - Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

The test has 2,168,893 read operations and 1,783,447 write operations. The IO breakdown is as follows:

AnandTech Storage Bench 2011 - Heavy Workload IO Breakdown

| IO Size | % of Total |
|---|---|
| 4KB | 28% |
| 16KB | 10% |
| 32KB | 10% |
| 64KB | 4% |

Only 42% of all operations are sequential; the rest range from pseudo to fully random (with most falling in the pseudo-random category). Average queue depth is 4.625 IOs, with 59% of operations taking place in an IO queue of 1.
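As a quick sanity check on the figures above, the read/write split and the share of operations not covered by the four listed IO sizes can be worked out directly. This is only a back-of-the-envelope sketch using the numbers quoted in the text; the variable names are made up for illustration and it is not part of the benchmark itself.

```python
# Figures quoted in the article text and IO breakdown table.
READ_OPS = 2_168_893
WRITE_OPS = 1_783_447
IO_SIZE_SHARE_PCT = {"4KB": 28, "16KB": 10, "32KB": 10, "64KB": 4}
SEQUENTIAL_SHARE_PCT = 42

# Derived values (names are illustrative, not from the article).
total_ops = READ_OPS + WRITE_OPS
read_share_pct = 100 * READ_OPS / total_ops
write_share_pct = 100 * WRITE_OPS / total_ops

# The table only lists four IO sizes; the remainder is other sizes.
listed_sizes_pct = sum(IO_SIZE_SHARE_PCT.values())
other_sizes_pct = 100 - listed_sizes_pct

# Everything that is not sequential is pseudo-random or fully random.
non_sequential_pct = 100 - SEQUENTIAL_SHARE_PCT

print(f"total operations:      {total_ops:,}")
print(f"reads / writes:        {read_share_pct:.1f}% / {write_share_pct:.1f}%")
print(f"listed IO sizes:       {listed_sizes_pct}% (other sizes: {other_sizes_pct}%)")
print(f"non-sequential share:  {non_sequential_pct}%")
```

By operation count, reads slightly outnumber writes, and the four listed IO sizes account for just over half of all operations.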

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result I'm going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time I'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, I will also break out performance into reads, writes and combined. The reason I do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
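To make the relationship between the two metrics concrete, here is a minimal sketch. It assumes that average data rate is simply bytes transferred divided by disk busy time (idle time excluded) and that 1 MB means 1,000,000 bytes; the article does not spell out either detail. The function names and the sample inputs are hypothetical and are not measured results.

```python
def average_data_rate_mb_s(bytes_transferred: float, busy_seconds: float) -> float:
    """Average MB/s over the time the disk was actually busy.

    Assumption: 1 MB = 1,000,000 bytes and idle time is already excluded
    from busy_seconds.
    """
    if busy_seconds <= 0:
        raise ValueError("busy_seconds must be positive")
    return bytes_transferred / 1_000_000 / busy_seconds


def busy_time_saved(slower_busy_seconds: float, faster_busy_seconds: float) -> float:
    """Seconds of disk busy time shaved off by the faster drive."""
    return slower_busy_seconds - faster_busy_seconds


# Hypothetical inputs, chosen only to keep the arithmetic easy to follow.
example_rate = average_data_rate_mb_s(1_000_000_000, 10.0)
example_saved = busy_time_saved(120.0, 90.0)

print(f"average data rate: {example_rate:.1f} MB/s")
print(f"busy time saved:   {example_saved:.1f} s")
```

Under these assumptions the two metrics are two views of the same measurement: for a fixed amount of data, a higher average MB/s means a proportionally shorter disk busy time.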

There's also a new light workload for 2011. This is a far more reasonable, typical everyday use case benchmark. Lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB but it's still multiple times more write intensive than what we were running last year.

As always I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests. All of the older tests are still run on our X58 platform.

AnandTech Storage Bench 2011 - Heavy Workload

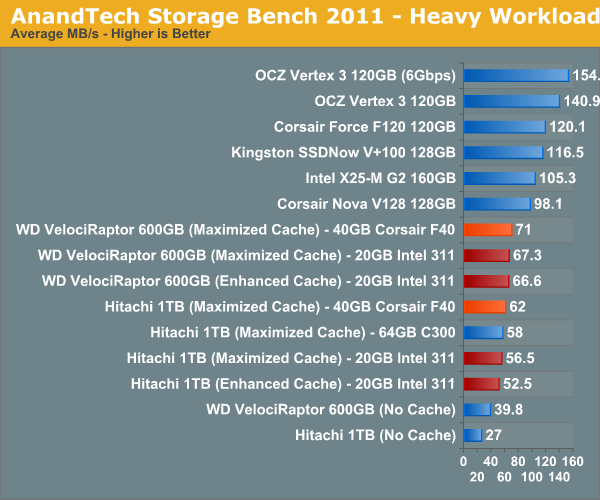

We'll start out by looking at average data rate throughout our new heavy workload test:

In our launch article we found that even Crucial's 64GB RealSSD C300 wasn't able to significantly outperform the 311, and the situation isn't much different with the F40. The good news is that you do get twice the capacity and technically better performance, which could result in more of your data being in the cache at once.

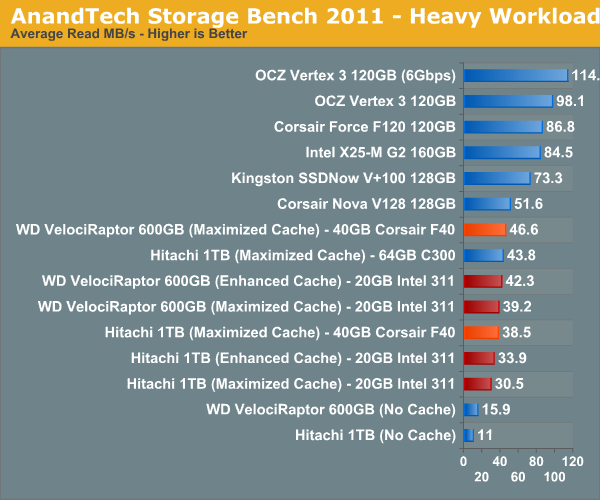

The breakdown of reads vs. writes tells us more of what's going on:

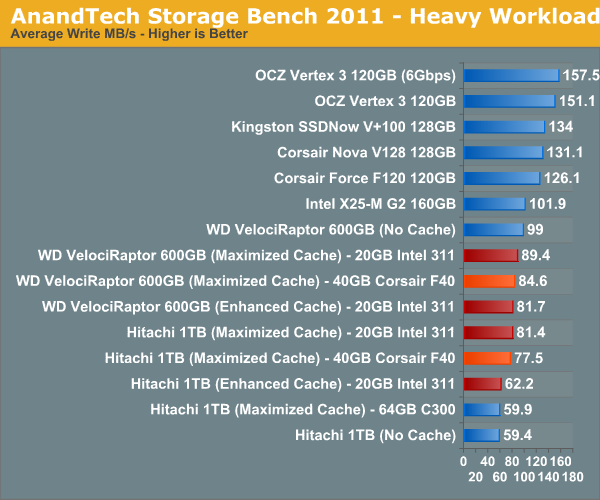

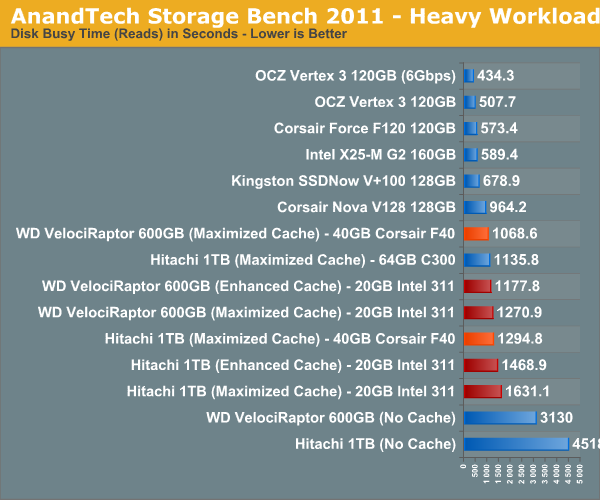

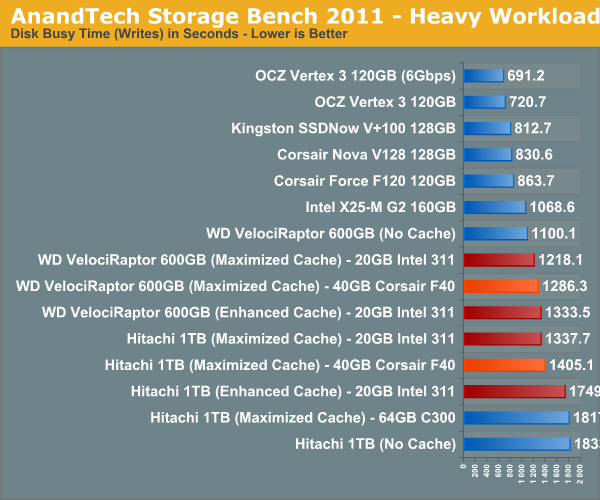

Read performance is actually where the F40 shines, surprisingly enough. This would imply that the majority of reads being cached are highly compressible in nature, playing to the F40's strengths. The write performance is a different story however:

There isn't a huge difference here, but the 311 does pull ahead in the writes that occur during our test. Overall I'd say the F40 and 311 are pretty equal here, which is a good thing given the capacity advantage.

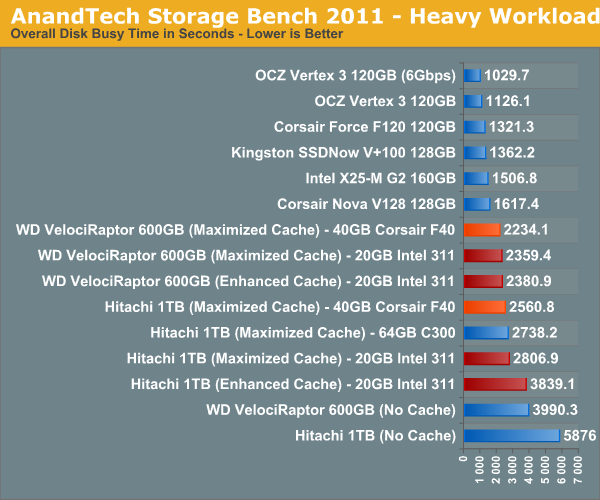

The next three charts just represent the same data, but in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy during this entire test. Note that disk busy time excludes any and all idles; this is just how long the SSD was busy doing something:

81 Comments


Hrel - Friday, May 13, 2011 - link

Does anyone else notice how silly measuring a 4KB write in MB/s is? Haha, seriously, anything at even point 5MB/s is more than fast enough to write 4KB and have me not even notice. It's not a fault on the SSD that it doesn't do those quite as well. It's still WAY faster than I need it to be.

Hrel - Friday, May 13, 2011 - link

perspective people

cbass64 - Friday, May 13, 2011 - link

uhhh...hardly anyone ever just writes a single 4KB file...different programs different IO specs. If I recall, Vantage mostly uses 4KB reads/writes, at low queue depths too i think. That doesn't mean it writes a 4KB file and times how long it takes, it writes TONS of 4KB files and measures how long that takes.

You do make a good point about perspective though...when you see drives boasting that they can achieve ridiculously huge amounts of IOps, it's almost always achieved by running 512byte IO for like 5 seconds (useless metrics)

ZmaxDP - Friday, May 13, 2011 - link

Anand,

I'd really like to see you take one of the highest performing drives (M4, SF2400, Intel500 series) like the Vertex 3 240GB and partition 64GB of it as cache. Theoretically, both the size increase and the significant speed bump would make a big difference and make it more like running with a full SSD.

For me, the real win in terms of configuration is a 2 or 3 TB drive cached by one of these high performance drives, with the leftover space dedicated to "always fast" programs. You get full SSD speed all the time for particular things, and the best cached performance possible from the storage drive...

Unless of course this tech works with ramdisks, in which case I'd love to see numbers with a ramdisk as the cache and a Vertex 3 as the main drive just for a sense of total IO overkill...

araczynski - Friday, May 13, 2011 - link

looks like i'll be getting an OCZ Vertex 3 in my next rig.

mervincm - Friday, May 13, 2011 - link

Might this be a suitable job for an old JMicron SSD? Some of us have these and are looking for a job. With the last firmware from SuperTalent, they were not AS terrible, and they were always reasonable at reading. While its poor write IOPS might be a negative at the start, their size (most are 64GB or larger) and (still way better than HDD) read performance might give a decent boost! Besides, we paid good money for them, and need to find something to do with them!

C'mon Anand, take a trip back to 2009, dig out an old G1 SSD, let's see if it helps or hurts :) this 2011 systemboard.

GTVic - Friday, May 13, 2011 - link

"I have to admit that Intel's Z68 launch was somewhat anti-climactic for me"

Of course, the theory that enthusiasts want to utilize the on-chip graphics and overclock at the same time is ridiculous. Anyone that pays big bucks for a higher end motherboard, cooling apparatus, a high end video card, etc. is not going to then complain about not being able to use the built-in GPU. Likewise, anyone with such a setup is not going to blink at the cost of an SSD for their system drive once they see the performance improvement in person. No wonder Intel put this on the back burner.

fic2 - Monday, May 16, 2011 - link

I would expect that the use of the built-in GPU would be for the ability of QuickSync.

Although since I am not a gamer I would rather just use the built-in graphics - I know 'shock' that people other than gamers like fast cpus!

Tinface - Friday, May 13, 2011 - link

I too would like to see some numbers on using a highend SSD like a Vertex 3 240GB for caching. I'm intending to get such an SSD for my next gaming rig. Right now I have a massive Steam folder weighing in at 275GB. Since Steam wants all games in a subfolder I have to uninstall a lot to fit it on the SSD. Other games such as World of Warcraft benefit massively from an SSD so that's got 25GB reserved. Am I better off using 64GB for cache or save it for a handful of games more on the SSD instead of the harddrive? Any other options I missed?

JNo - Sunday, May 15, 2011 - link

Yeah there is another much better option that you missed. Google "Steam Mover" - it's a small free app that allows you to move individual steam games from one drive (eg a mechanical one) to another (eg SSD) and have it all still work because it uses junction pointers to redirect the computer to look in another place for the folders whilst still thinking that they're in the original place.

Alternatively GameSave Manager (I use) has the same feature built in as well as performing game backups to the cloud. With either you can just keep your most played games on the SSD running full pelt and switch them around occasionally.