Intel Z68 Chipset & Smart Response Technology (SSD Caching) Review

by Anand Lal Shimpi on May 11, 2011 2:34 AM EST

SSD Caching

We finally have a Sandy Bridge chipset that can overclock and use integrated graphics, but that's not what's most interesting about Intel's Z68 launch. This next feature is.

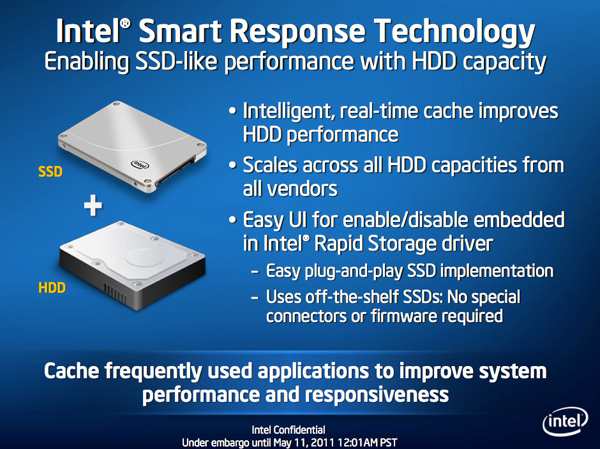

Intel is introducing a feature called Smart Response Technology (SRT), originally known as SSD Caching, alongside Z68. Make no mistake, this isn't a hardware feature, but it's something that Intel is only enabling on Z68. All of the work is done in Intel's RST 10.5 software, which will be made available for all 6-series chipsets, but Smart Response Technology is artificially bound to Z68 alone (and some mobile chipsets: HM67, QM67).

It's Intel's way of giving Z68 owners some value for their money, but it's also a silly way to support your most loyal customers—the earliest adopters of Sandy Bridge platforms who bought motherboards, CPUs and systems before Z68 was made available.

What does Smart Response Technology do? It takes a page from enterprise storage architecture and lets you use a small SSD as a full read/write cache for a hard drive or RAID array.

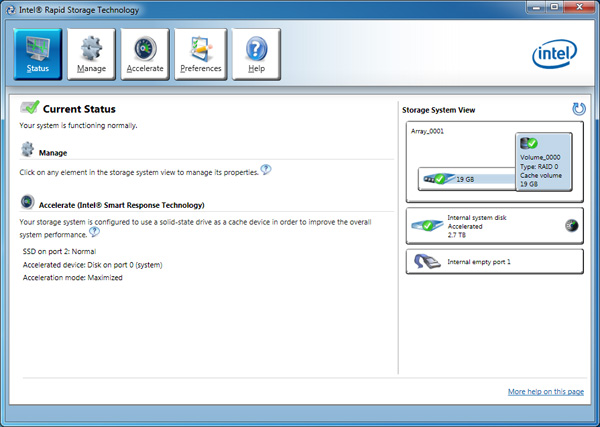

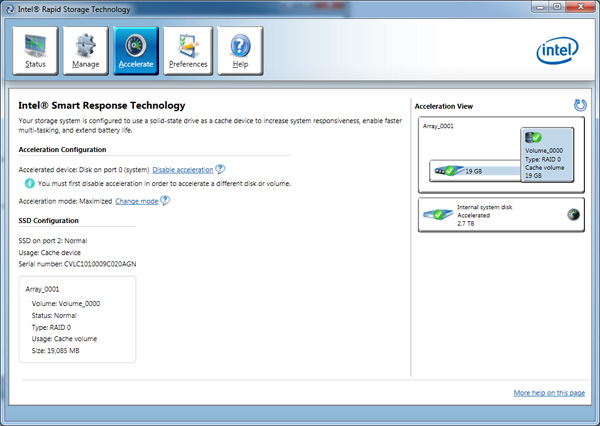

With the Z68 SATA controllers set to RAID (SRT won't work in AHCI or IDE modes), just install Windows 7 on your hard drive like you normally would. With Intel's RST 10.5 drivers and a spare SSD (from any manufacturer) installed, you can choose to use up to 64GB of the SSD as a cache for all accesses to the hard drive. Any space above 64GB is left untouched for you to use as a separate drive letter.
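To make the sizing rule concrete, here's a minimal Python sketch of how an SSD's capacity would be divided. The function name and parameters are hypothetical, and it only models the 64GB ceiling described above; any other limits the driver may enforce are ignored.

```python
MAX_CACHE_GB = 64  # ceiling on the cache size, as described in the article


def split_ssd(ssd_capacity_gb, requested_cache_gb=None):
    """Return (cache_gb, leftover_gb) for a given SSD.

    Hypothetical helper: the cache is capped at 64GB and at the size of
    the SSD itself; whatever is left stays usable as a normal volume.
    """
    if requested_cache_gb is None:
        requested_cache_gb = MAX_CACHE_GB
    cache_gb = min(requested_cache_gb, MAX_CACHE_GB, ssd_capacity_gb)
    return cache_gb, ssd_capacity_gb - cache_gb
```

With a 120GB drive, for example, `split_ssd(120)` gives a 64GB cache and leaves 56GB for a separate drive letter.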

Intel limited the maximum cache size to 64GB as it saw little benefit in internal tests to making the cache larger than that. Admittedly after a certain size you're better off just keeping your frequently used applications on the SSD itself and manually storing everything else on a hard drive.

Unlike Seagate's Momentus XT, both reads and writes are cached with SRT enabled. Intel allows two modes of write caching: enhanced and maximized. Enhanced mode makes the SSD cache behave as a write-through cache, where every write must hit both the SSD cache and the hard drive before moving on. In maximized mode the SSD cache behaves more like a write-back cache, where writes hit the SSD and are eventually written back to the hard drive, but not immediately.

Enhanced mode is the safest, but it limits the overall performance improvement you'll see, as write performance will still be bound by the performance of your hard drive (or array). In enhanced mode, if you disconnect your SSD cache or the SSD dies, your system will continue to function normally. Note that you may still see an improvement in write performance vs. a non-cached hard drive, because the SSD offloading read requests can free up your hard drive to better fulfill write requests.

Maximized mode offers the greatest performance benefit, however it also comes at the greatest risk. There's obviously the chance that you lose power before the SSD cache is able to commit writes to your hard drive. The bigger issue is that if something happens to your SSD cache, there's a chance you could lose data. To make matters worse, if your SSD cache dies and it was caching a bootable volume, your system will no longer boot. I suspect this situation is a bit overly cautious on Intel's part, but that's the functionality of the current version of Intel's 10.5 drivers.

Moving a drive with a maximized SSD cache enabled requires that you either move the SSD cache with it, or disable the SSD cache first. Again, Intel seems to be more cautious than necessary here.

The upside is of course performance as I mentioned before. Cacheable writes just have to hit the SSD before being considered serviced. Intel then conservatively writes that data back to the hard drive later on.
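The difference between the two modes is easier to see in a toy model. The Python sketch below is not Intel's implementation; the class, its methods and the use of dictionaries as stand-ins for the drives are all assumptions made for illustration. It simply shows why losing the SSD is harmless with write-through caching and risky with write-back caching.

```python
class CachedDisk:
    """Toy model of a hard drive fronted by an SSD cache (illustrative only)."""

    def __init__(self, mode):
        if mode not in ("enhanced", "maximized"):
            raise ValueError("mode must be 'enhanced' or 'maximized'")
        self.mode = mode
        self.hdd = {}       # lba -> data that has reached the hard drive
        self.ssd = {}       # lba -> data held in the SSD cache
        self.dirty = set()  # LBAs whose newest data exists only on the SSD

    def write(self, lba, data):
        self.ssd[lba] = data
        if self.mode == "enhanced":
            # Write-through: the write also lands on the hard drive right away.
            self.hdd[lba] = data
        else:
            # Write-back: the write is considered done once it is on the SSD.
            self.dirty.add(lba)

    def read(self, lba):
        # Serve from the SSD when possible, otherwise fall back to the HDD.
        if lba in self.ssd:
            return self.ssd[lba]
        return self.hdd.get(lba)

    def flush(self):
        """Commit all pending write-back data to the hard drive."""
        for lba in sorted(self.dirty):
            self.hdd[lba] = self.ssd[lba]
        self.dirty.clear()

    def lose_ssd(self):
        """Simulate the SSD dying; return the LBAs whose latest data is gone."""
        lost = set(self.dirty)
        self.ssd.clear()
        self.dirty.clear()
        return lost
```

In this model, calling `lose_ssd()` in enhanced mode always returns an empty set, while in maximized mode it returns every block written since the last `flush()`.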

An Intelligent, Persistent Cache

Intel's SRT functions like an actual cache. Rather than caching individual files, Intel focuses on frequently accessed LBAs (logical block addresses). Read a block enough times or write to it enough times and that block will get pulled into the SSD cache, until the cache is full. When full, the least recently used data gets evicted, making room for new data.
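As a rough illustration of that behavior, here's a small Python sketch of a block cache that admits an LBA only after repeated accesses and evicts the least recently used entry when full. Intel hasn't published its actual policy, so the class name, the `promote_after` threshold and the capacity measured in blocks are all assumptions.

```python
from collections import OrderedDict


class LbaCache:
    """Illustrative LBA cache: promote after N accesses, evict LRU when full."""

    def __init__(self, capacity_blocks, promote_after=2):
        if capacity_blocks < 1:
            raise ValueError("capacity_blocks must be at least 1")
        self.capacity = capacity_blocks
        self.promote_after = promote_after  # assumed threshold, not Intel's
        self.hits = {}                # lba -> access count while not cached
        self.cached = OrderedDict()   # cached LBAs, least recently used first

    def access(self, lba):
        """Record a read or write; return True if it was served from cache."""
        if lba in self.cached:
            self.cached.move_to_end(lba)  # mark as most recently used
            return True
        self.hits[lba] = self.hits.get(lba, 0) + 1
        if self.hits[lba] >= self.promote_after:
            del self.hits[lba]
            if len(self.cached) >= self.capacity:
                self.cached.popitem(last=False)  # evict least recently used
            self.cached[lba] = True
        return False
```

A block touched once never enters the cache in this sketch, which is the point: one-off accesses shouldn't displace data you use every day.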

Since SSDs use NAND flash, cache data is kept persistent between reboots and power cycles. Data won't leave the cache unless it gets forced out due to lack of space/use or you disable the cache altogether. A persistent cache is very important because it means that the performance of your system will hopefully match how you use it. If you run a handful of applications very frequently, the most frequently used areas of those applications should always be present in your SSD cache.

Intel claims it's very careful not to dirty the SSD cache. If it detects sequential accesses beyond a few MB in length, that data isn't cached. The same goes for virus scan accesses, however it's less clear what Intel uses to determine that a virus scan is running. In theory this should mean that simply copying files or scanning for viruses shouldn't kick frequently used applications and data out of cache, however that doesn't mean other things won't.
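One plausible way to detect long sequential runs is to track whether each request starts where the previous one ended. The Python sketch below does just that; it's a guess at the general technique, not Intel's algorithm. The 4MB limit stands in for "a few MB", and the 512-byte sector size and all names are assumptions.

```python
SECTOR_BYTES = 512                         # assumed sector size
SEQUENTIAL_LIMIT_BYTES = 4 * 1024 * 1024   # assumed stand-in for "a few MB"


class SequentialFilter:
    """Illustrative filter that stops caching once a sequential run gets long."""

    def __init__(self, limit_bytes=SEQUENTIAL_LIMIT_BYTES):
        self.limit = limit_bytes
        self.next_lba = None  # where the next request would start if sequential
        self.run_bytes = 0    # length of the current sequential run

    def should_cache(self, lba, sectors):
        """Return True if this request is still eligible for caching."""
        size = sectors * SECTOR_BYTES
        if lba == self.next_lba:
            self.run_bytes += size   # request continues the current run
        else:
            self.run_bytes = size    # a new run starts here
        self.next_lba = lba + sectors
        return self.run_bytes <= self.limit
```

A large file copy quickly exceeds the limit and bypasses the cache, while a small random access resets the run and becomes eligible again.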

106 Comments

dagamer34 - Wednesday, May 11, 2011 - link

Like most technologies, stay away from first-gen implementations.

iwod - Wednesday, May 11, 2011 - link

I wonder if you could install 32GB DDR3 RAM, and just use 20GB of that as Intel SRT. It would be interesting to see how its performance went.

kmmatney - Wednesday, May 11, 2011 - link

Wouldn't be persistent between restarts, so that's a problem right there. It would have to build up the cache every time you reboot, and you couldn't use "Max Cache" mode, so you'd have to wait for the HDD for all writes.

liveonc - Wednesday, May 11, 2011 - link

In this article, it was pointed out that using an SSD is still better for those who want speed. But is a SATA3 SSD & SATA2 Velociraptor combo possible? Or what about an SSD + SSD/HDD combo? Some sort of compromise w/o the great penalties, or smaller penalties & greater value?

dgingeri - Wednesday, May 11, 2011 - link

I've seen similar numbers using a smaller SSD with Windows 7's ReadyBoost, and it kept the most used data in the cache better. I'd prefer just using that, as it seems more predictable.

jordanclock - Wednesday, May 11, 2011 - link

This IS a big deal. However, a comparison of performance between SRT and ReadyBoost would be handy. Especially ReadyBoost with USB3.

dgingeri - Wednesday, May 11, 2011 - link

you can (and I have) set up ReadyBoost to a SATA SSD. I had a 60GB OCZ Apex as my ReadyBoost drive for about 6 months, before I got my dual Vertex 2s as a new boot drive. Windows 7 has a limit of 32GB for Readyboost usage, though. It made a heck of a difference in boot time and some program load time, however, it took a little while to get the caching set up right to cache what I actually used on a regular basis. It started caching Firefox rather quickly, but took it a while to pick up on caching Diablo 2.

randinspace - Wednesday, May 11, 2011 - link

I still haven't been able to finish the multiplayer mode due to hardware issues stranding me on a glorified netbook.

DesktopMan - Wednesday, May 11, 2011 - link

Anand: http://soerennielsen.dk/mod/VGAdummy/index_en.php

Shouldn't this work perfectly fine to enable the IGPU when connected to the DGPU without any of the driver nonsense?

Hrel - Wednesday, May 11, 2011 - link

I'd REALLY like to see you guys compare this SRT caching to two of the fastest 7200rpm drives out there in RAID 0. Cause 1-4 seconds on launching applications or loading game levels isn't worth 100 extra bucks.

So compare configurations:

1 MD

1 MD with Cache

2 MD in RAID 0 (MD = Mechanical Disk)

2 MD in RAID 0 with cache

Vertex 3 SSD by itself (and/or the really fast Corsair one)

You already have most of this testing done and in this article.

PLEASE PLEASE PLEASE PLEASE do this soon! Thanks guys!