Intel Z68 Chipset & Smart Response Technology (SSD Caching) Review

by Anand Lal Shimpi on May 11, 2011 2:34 AM EST

AnandTech Storage Bench 2011

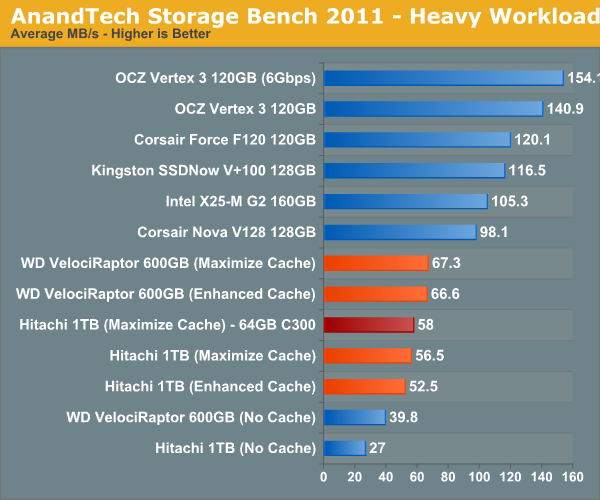

With the hand timed real world tests out of the way, I wanted to do a better job of summarizing the performance benefit of Intel's SRT using our Storage Bench 2011 suite. Remember that the first time anything is ever encountered it won't be cached and even then, not all operations afterwards will be cached. Data can also be evicted out of the cache depending on other demands. As a result, overall performance looks more like a doubling of standalone HDD performance rather than the multi-x increase we see from moving entirely to an SSD.
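To make the caching behavior above concrete, here is a minimal sketch of a block cache in which the first read of any block always misses and older blocks get evicted when space runs out. It assumes a simple least-recently-used (LRU) policy purely for illustration; Intel has not described SRT's actual caching algorithm here, and the class name `LRUBlockCache` and the tiny trace are hypothetical.

```python
from collections import OrderedDict


class LRUBlockCache:
    """Toy read cache keyed by logical block address (LBA).

    Assumption: plain LRU eviction. This is NOT Intel's SRT policy,
    just an illustration of miss-on-first-touch and eviction.
    """

    def __init__(self, capacity_blocks):
        self.capacity = capacity_blocks
        self.blocks = OrderedDict()  # LBA -> True, ordered oldest to newest
        self.hits = 0
        self.misses = 0

    def read(self, lba):
        if lba in self.blocks:
            # Cached: serve from the SSD and mark as most recently used.
            self.blocks.move_to_end(lba)
            self.hits += 1
            return "ssd"
        # Not cached: serve from the hard drive, then cache the block.
        self.misses += 1
        self.blocks[lba] = True
        if len(self.blocks) > self.capacity:
            self.blocks.popitem(last=False)  # evict least recently used
        return "hdd"


cache = LRUBlockCache(capacity_blocks=2)
trace = [10, 10, 20, 30, 10]  # hypothetical LBA sequence
served_from = [cache.read(lba) for lba in trace]
print(served_from)  # ['hdd', 'ssd', 'hdd', 'hdd', 'hdd']
```

Block 10 misses on first touch, hits on the second read, and misses again at the end because reads of blocks 20 and 30 pushed it out of the two-block cache. That is the same pattern that keeps a cached hard drive well short of a dedicated SSD.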

Heavy 2011—Background

Last year we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with the majority of what we have today in terms of SSD benchmarks.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes and typically involved only 4GB of writes specifically. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

First, some details:

1) The MOASB, officially called AnandTech Storage Bench 2011—Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

The test has 2,168,893 read operations and 1,783,447 write operations. The IO breakdown is as follows:

AnandTech Storage Bench 2011 - Heavy Workload IO Breakdown

| IO Size | % of Total |
|---------|------------|
| 4KB     | 28%        |
| 16KB    | 10%        |
| 32KB    | 10%        |
| 64KB    | 4%         |

Only 42% of all operations are sequential, the rest range from pseudo to fully random (with most falling in the pseudo-random category). Average queue depth is 4.625 IOs, with 59% of operations taking place in an IO queue of 1.
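As a quick sanity check on the numbers above, the following sketch works out the read/write mix from the stated operation counts and adds up the IO sizes listed in the breakdown. The variable names are illustrative only; the figures come straight from the text.

```python
# Operation counts quoted for the Heavy Workload trace.
reads = 2_168_893
writes = 1_783_447

total = reads + writes
read_share = reads / total
write_share = writes / total

# IO sizes listed in the breakdown table (percent of total operations).
listed_sizes = {"4KB": 28, "16KB": 10, "32KB": 10, "64KB": 4}
listed_total = sum(listed_sizes.values())

print(f"total operations: {total:,}")  # total operations: 3,952,340
print(f"reads: {read_share:.1%}, writes: {write_share:.1%}")  # reads: 54.9%, writes: 45.1%
print(f"listed IO sizes cover {listed_total}% of operations")  # listed IO sizes cover 52% of operations
```

Roughly 45% of all operations are writes, which is what makes this trace unusually write intensive. The four listed sizes account for 52% of operations; the remaining 48% fall into sizes the table does not break out.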

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result I'm going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time I'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, I will also break out performance into reads, writes and combined. The reason I do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
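The two metrics described above can be sketched from a trace of individual IOs. This is a simplified illustration under stated assumptions, not the actual Storage Bench tooling: the `IoRecord` type, the `summarize` helper, and the sample records are all hypothetical, and busy time is approximated as the sum of per-IO service times, which ignores overlap between IOs at queue depths above 1.

```python
from dataclasses import dataclass


@dataclass
class IoRecord:
    kind: str         # "read" or "write"
    size_bytes: int   # bytes transferred by this IO
    service_s: float  # seconds the disk spent servicing this IO


def summarize(records, kind=None):
    """Return (average MB/s, busy seconds) for all IOs or just one kind.

    Assumption: busy time is the plain sum of service times, so idle
    gaps between IOs never count against the drive.
    """
    selected = [r for r in records if kind is None or r.kind == kind]
    busy_s = sum(r.service_s for r in selected)
    total_mb = sum(r.size_bytes for r in selected) / 1_000_000
    avg_mb_s = total_mb / busy_s if busy_s > 0 else 0.0
    return avg_mb_s, busy_s


# Hypothetical three-IO trace, made up for illustration.
trace = [
    IoRecord("read", 1_000_000, 0.01),
    IoRecord("write", 2_000_000, 0.04),
    IoRecord("read", 1_000_000, 0.01),
]

for label, kind in (("combined", None), ("reads", "read"), ("writes", "write")):
    rate, busy = summarize(trace, kind)
    print(f"{label}: {rate:.1f} MB/s over {busy:.2f} s busy")
```

In this made-up trace the slow writes pull the combined rate well below the read rate, which is why breaking reads and writes out separately can reveal a drive's read strengths that the combined number hides.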

There's also a new light workload for 2011. This is a far more reasonable, typical every day use case benchmark. Lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB but it's still multiple times more write intensive than what we were running last year.

As always I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests. All of the older tests are still run on our X58 platform.

AnandTech Storage Bench 2011—Heavy Workload

We'll start out by looking at average data rate throughout our new heavy workload test:

For this comparison I used two hard drives: 1) a Hitachi 7200RPM 1TB drive from 2008 and 2) a 600GB Western Digital VelociRaptor. The Hitachi 1TB is a good, large but aging drive, while the 600GB VR is a great example of a very high end spinning disk. With a modest 20GB cache enabled, the 3+ year old Hitachi drive is easily 41% faster than the VelociRaptor. We're still not into dedicated SSD territory, but the improvement is significant.

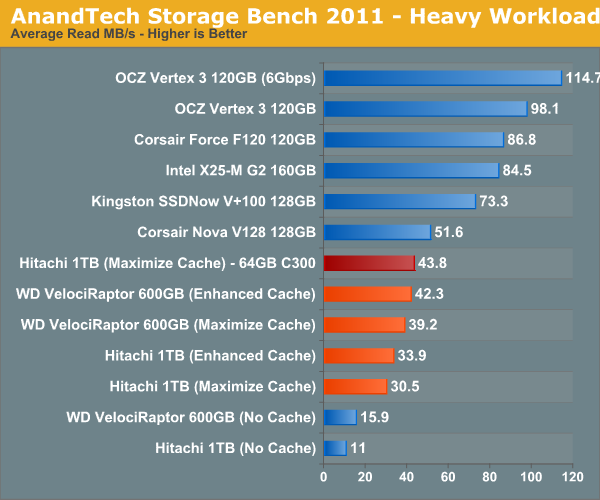

I also tried swapping the cache drive out with a Crucial RealSSD C300 (64GB). Performance went up a bit but not much. You'll notice that average read speed got the biggest boost from the C300 as a cache drive since it does have better sequential read performance. Overall I am impressed with Intel's SSD 311, I just wish the drive were a little bigger.

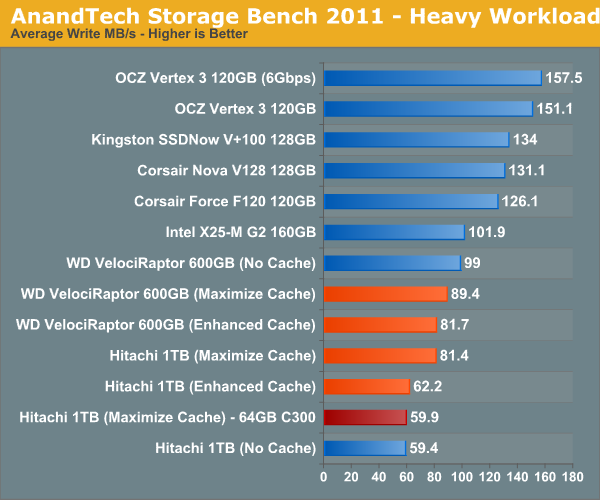

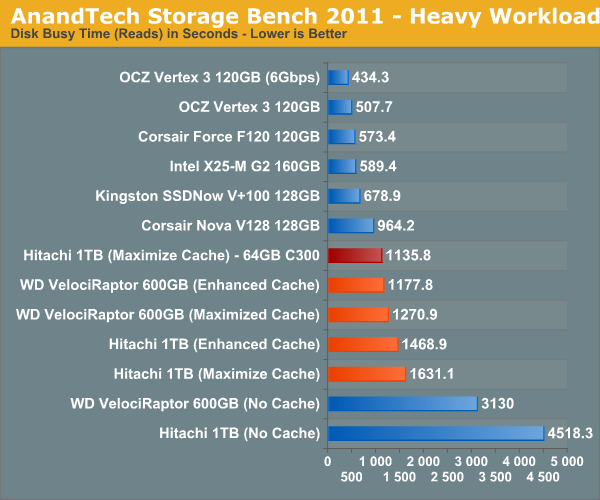

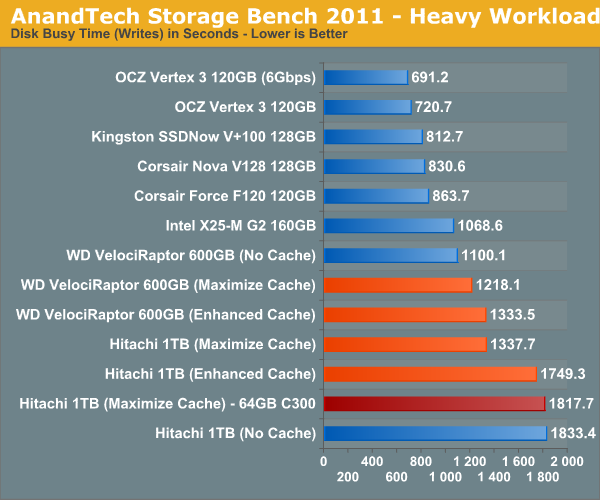

The breakdown of reads vs. writes tells us more of what's going on:

This isn't too unusual—pure write performance is actually better with the cache disabled than with it enabled. The SSD 311 has a good write speed for its capacity/channel configuration, but so does the VelociRaptor. Overall performance is still better with the cache enabled, but it's worth keeping in mind if you are using a particularly sluggish SSD with a hard drive that has very good sequential write performance.

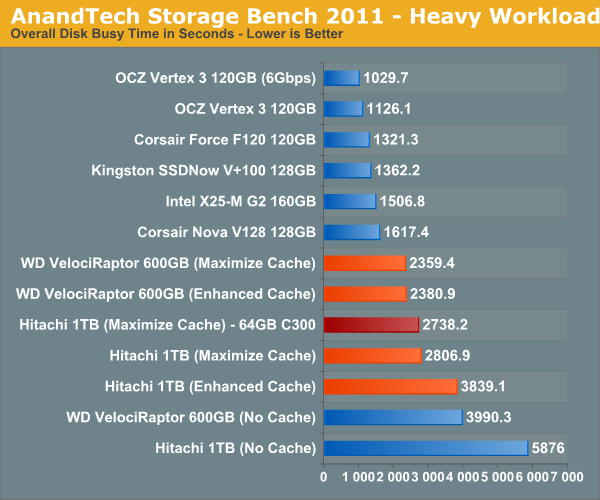

The next three charts just represent the same data, but in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy for during this entire test. Note that disk busy time excludes any and all idle time; this is just how long the SSD was busy doing something:

106 Comments


cbass64 - Wednesday, May 11, 2011 - link

Who says you can't use your old 100 or 256GB SSD as an SRT device? The article clearly states that you can use whatever size drive you want. Up to 64GB of it will be used for cache and the rest can be used for data. If you have more than 64GB of data that you need to have cached at one time then SRT isn't the solution you should be looking into.

As for OS limitations...you can't seriously think Intel would wait until they had this running on every platform imaginable before they released it to the public, can you? This is the first version of the driver that supports it so of course it will have limitations. You can't expect a feature of a Windows-only driver to be supported by a non-Windows OS. I'm sure this feature will be available on Linux once Intel actually makes a Linux RST driver.

futrtrubl - Wednesday, May 11, 2011 - link

And don't forget that if you don't partition the rest of the space on the SSD it will use it for wear levelling, which will be even more important in this situation.

Shadowmaster625 - Wednesday, May 11, 2011 - link

I still don't get why Western Digital doesn't take 4GB of SLC and solder it onto the back of their hard drive controller boards. It's not like they don't have the room. Hopefully now they will do that. 1TB + 4GB SLC all for under $100 in one package, with 2 SATA ports.

mamisano - Wednesday, May 11, 2011 - link

Seagate has the Momentus 500GB 7200rpm drive with 4GB SLC. It's in 2.5" 'Notebook' format but obviously can be used in a PC.

I am wondering why such a drive wasn't included in these tests.

jordanclock - Wednesday, May 11, 2011 - link

Because, frankly, it sucks. The caching method is terrible and barely helps more than a large DRAM cache.

Conficio - Wednesday, May 11, 2011 - link

What is the OS support on those drivers (Windows?, Linux?, Mac OS X?, BSD?, Open Source?, ...)?

Does the SRT drive get TRIM? Does it need it?

"With the Z68 SATA controllers set to RAID (SRT won't work in AHCI or IDE modes) just install Windows 7 on your hard drive like you normally would."???

Is there any optimization to allow the hard drive to avoid seeks? If this all happens on the driver level (as opposed to on the BIOS level) then I'd expect to gain extra efficiency from optimizing the cached LBAs so as to avoid costly seeks. In other words you don't want to look at LBAs alone but at sequences of LBAs to optimize the utility. Any mention of this?

Also one could imagine a mode where the driver does automatic defragmentation and uses the SSD as the cache to allow to do that during slow times of hard drive access. Any comment from Intel?

Lonesloane - Wednesday, May 11, 2011 - link

What happened to the proposed prices? If I remember correctly the caching drive was supposed to cost only 30-40$?

Now with 110$, the customer should better buy a "real" 60GB SSD.

JNo - Thursday, May 12, 2011 - link

+1

It's interesting, Anand has a generally positive review and generally positive comments. Tom's Hardware, which I generally don't respect nearly as much as Anand, reviewed SRT both a while back and covered it again recently and is far less impressed as are its readers. I have to say that I agree with Tom's on this particular issue though.

It is *not* a halfway house or a cheaper way to get most of the benefit of an SSD. For $110 extra plus the premium of a Z68 mobo you may as well get an SSD that is 40-60GB bigger than Larson Creek (or 40-60GB bigger than your main system SSD) and just store extra data on it directly and with faster access and no risk of caching errors.

For those who said SRT is a way of speeding up a cheap HTPC - it doesn't seem that way as it's not really cheap and it won't cache large, sequential media files anyway. For those who said it will speed up your game loadings, it will only do so for a few games on 2nd or 3rd run only and will evict if you use a lot of different games so you're better off having the few that count directly on the SSD anyway (using Steam Mover if necessary).

For your system drive it's too risky at this point or you need to use the Enhanced mode (less impressive) and to speed up your large data (games/movies) it's barely relevant for the aforementioned reasons. For all other scenarios you're better off with a larger SSD.

It's too little too late and too expensive. The fact that it's not worth bothering is a no brainer to me which is a shame as I was excited by the idea of it.

Boissez - Wednesday, May 11, 2011 - link

Could one kindly request for the numbers from both the 64GB C300 and 20GB sans harddisk 311 to be added. It would give a good idea of the performance hit one could expect for using these in SRT vs as a standalone boot drive.

Boissez - Wednesday, May 11, 2011 - link

First sentence should be: "Could one kindly request for the numbers from both the 64GB C300 and 20GB 311 sans harddisk to be added?"... sorry