Intel Z68 Chipset & Smart Response Technology (SSD Caching) Review

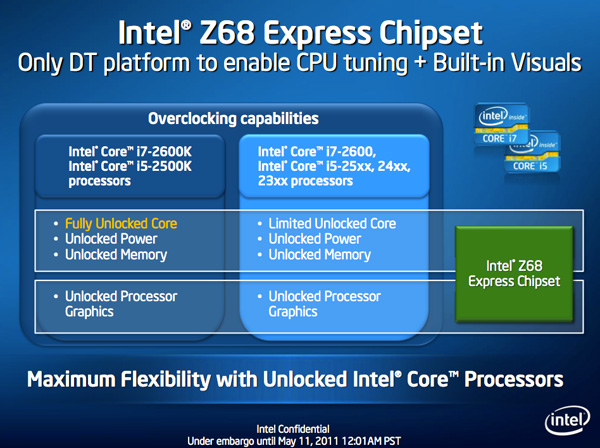

by Anand Lal Shimpi on May 11, 2011 2:34 AM EST

The problem with Sandy Bridge was simple: if you wanted to use Intel's integrated graphics, you had to buy a motherboard based on an H-series chipset. Unfortunately, Intel's H-series chipsets don't let you overclock the CPU or memory, only the integrated GPU. If you want to overclock the CPU and/or memory, you need a P-series chipset, which doesn't support Sandy Bridge's on-die GPU. Intel effectively forced overclockers to buy discrete GPUs from AMD or NVIDIA, even if they didn't need the added GPU power.

The situation got more complicated from there. Sandy Bridge's Quick Sync was one of the best features of the platform, but it was only available when you used the CPU's on-die GPU, which once again meant you needed an H-series chipset with no support for overclocking. You could either have Quick Sync or overclocking, but not both (at least initially).

Finally, Intel did very little to actually move chipsets forward with its 6-series Sandy Bridge platform. Native USB 3.0 support was out and won't be included until Ivy Bridge. We got a pair of 6Gbps SATA ports and PCIe 2.0 slots, but not much else. I can't help but feel like Intel was purposefully very conservative with its chipset design. Despite all of that, the seemingly conservative chipset design was plagued by the single largest bug Intel has ever faced publicly.

As strong as the Sandy Bridge launch was, the 6-series chipset did little to help it.

Addressing the Problems: Z68

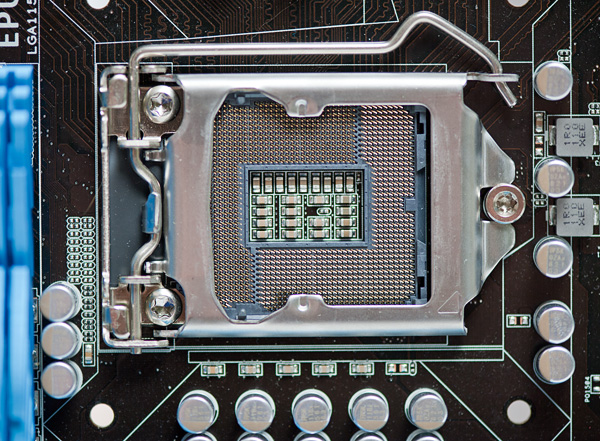

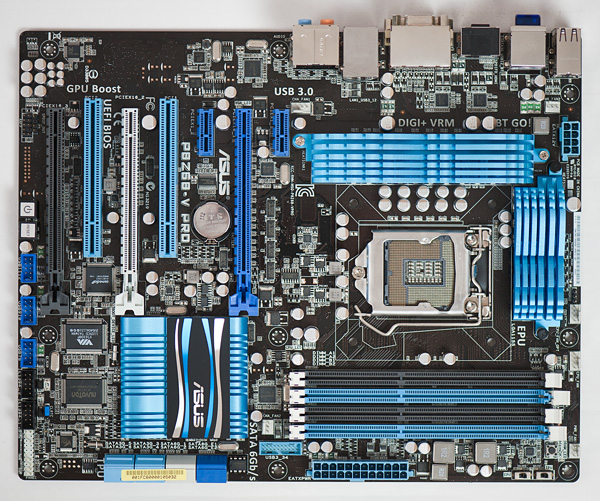

In our Sandy Bridge review I mentioned a chipset that would come out in Q2 that would solve most of Sandy Bridge's platform issues. A quick look at the calendar reveals that it's indeed the second quarter of the year, and a quick look at the photo below reveals the first motherboard to hit our labs based on Intel's new Z68 chipset:

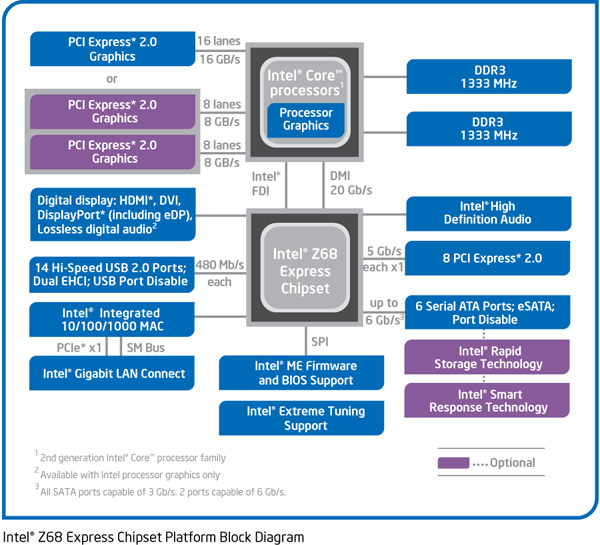

Architecturally, Intel's Z68 chipset is no different from the H67. It supports video output from any Sandy Bridge CPU and has the same number of USB ports, SATA ports and PCIe lanes. What the Z68 chipset adds, however, is full overclocking support for CPU, memory and integrated graphics, giving you the choice to do pretty much anything you'd want.
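To make the chipset trade-offs concrete, here is a minimal Python sketch of the feature matrix as described in this article. The `Chipset` class, the `CHIPSETS` table and the helper function are illustrative names of my own, not anything from Intel, and the flags reflect chipset-level capability only (an individual Z68 board may still ship without video out).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chipset:
    igpu_output: bool        # can drive a display from Sandy Bridge's on-die GPU
    cpu_mem_overclock: bool  # allows CPU and memory overclocking
    igpu_overclock: bool     # allows overclocking the on-die GPU


# Capabilities as described in the text above (illustrative, not an Intel spec sheet).
CHIPSETS = {
    "H67": Chipset(igpu_output=True, cpu_mem_overclock=False, igpu_overclock=True),
    "P67": Chipset(igpu_output=False, cpu_mem_overclock=True, igpu_overclock=False),
    "Z68": Chipset(igpu_output=True, cpu_mem_overclock=True, igpu_overclock=True),
}


def quick_sync_and_overclock(name: str) -> bool:
    """True if the chipset allows using the iGPU (and so Quick Sync) while overclocking the CPU."""
    chipset = CHIPSETS[name]
    return chipset.igpu_output and chipset.cpu_mem_overclock


for name in CHIPSETS:
    print(name, quick_sync_and_overclock(name))
```

Under these assumptions only Z68 satisfies both conditions, which is the gap the new chipset is meant to close.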

Pricing should be similar to P67, with motherboards selling for a $5 to $10 premium. Not all Z68 motherboards will come with video out; those that do may carry an additional $5 premium to cover the licensing fees for Lucid's Virtu software, which will likely be bundled with most if not all Z68 motherboards that have iGPU out. Lucid's software excluded, any price premium is a little ridiculous here given that the functionality offered by Z68 should've been there from the start. I'm hoping that over time Intel will come to its senses, but for now Z68 will still be sold at a slight premium over P67.

Overclocking: It Works

Ian will have more on overclocking in his article on ASUS' first Z68 motherboard, but in short it works as expected. You can use Sandy Bridge's integrated graphics and still overclock your CPU. Of course the Sandy Bridge overclocking limits still apply: if you don't have a CPU that supports Turbo (e.g. Core i3 2100), your chip is entirely clock locked.

Ian found that overclocking behavior on Z68 was pretty similar to P67. You can obviously also overclock the on-die GPU on Z68 boards with video out.

The Quick Sync Problem

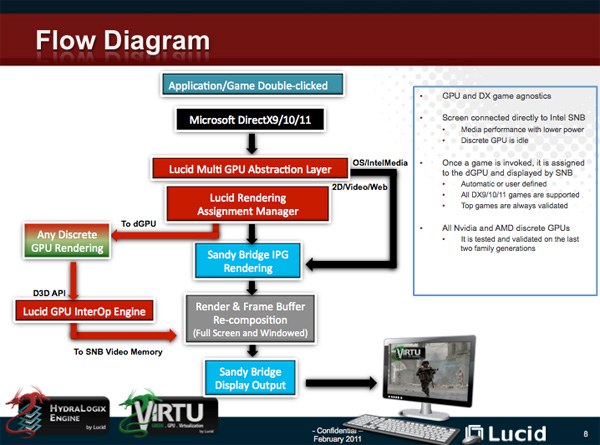

Back in February we previewed Lucid's Virtu software, which allows you to have a discrete GPU but still use Sandy Bridge's on-die GPU for Quick Sync, video decoding and basic 2D/3D acceleration.

Virtu works by intercepting the command stream directed at your GPU. Depending on the source of the commands, they are directed at either your discrete GPU (dGPU) or on-die GPU (iGPU).

There are two physical approaches to setting up Virtu. You can either connect your display to the iGPU or dGPU. If you do the former (i-mode), the iGPU handles all display duties and any rendering done on the dGPU has to be copied over to the iGPU's frame buffer before being output to your display. Note that you can run an application in a window that requires the dGPU while running another that uses the iGPU (e.g. Quick Sync).

As you can guess, there is some amount of overhead in the process, which we've measured to varying degrees. When it works well the overhead is typically limited to around 10%, but we've seen situations where a native dGPU setup is over 40% faster.

Lucid Virtu i-mode Performance Comparison (1920 x 1200, Highest Quality Settings)

| | Metro 2033 | Mafia II | World of Warcraft | Starcraft 2 | DiRT 2 |
| --- | --- | --- | --- | --- | --- |
| AMD Radeon HD 6970 | 35.2 fps | 61.5 fps | 81.3 fps | 115.6 fps | 137.7 fps |
| AMD Radeon HD 6970 (Virtu) | 24.3 fps | 58.7 fps | 74.8 fps | 116.6 fps | 117.9 fps |
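For readers who want to work out the overhead themselves, the short Python sketch below computes it from the frame rates in the table above. The function and variable names are my own and purely illustrative. Note that "native is X% faster" and "Virtu loses Y% of native performance" are two different ratios, so the sketch reports both.

```python
# Frame rates copied from the table above (fps, 1920 x 1200, highest quality).
NATIVE_FPS = {
    "Metro 2033": 35.2,
    "Mafia II": 61.5,
    "World of Warcraft": 81.3,
    "Starcraft 2": 115.6,
    "DiRT 2": 137.7,
}
VIRTU_FPS = {
    "Metro 2033": 24.3,
    "Mafia II": 58.7,
    "World of Warcraft": 74.8,
    "Starcraft 2": 116.6,
    "DiRT 2": 117.9,
}


def native_advantage_pct(native_fps: float, virtu_fps: float) -> float:
    """How much faster the native dGPU setup is than Virtu i-mode, in percent."""
    return (native_fps / virtu_fps - 1.0) * 100.0


def virtu_loss_pct(native_fps: float, virtu_fps: float) -> float:
    """Share of native performance lost when running through Virtu i-mode, in percent."""
    return (1.0 - virtu_fps / native_fps) * 100.0


for game, native in NATIVE_FPS.items():
    virtu = VIRTU_FPS[game]
    print(
        f"{game}: native {native_advantage_pct(native, virtu):+.1f}% faster, "
        f"Virtu loses {virtu_loss_pct(native, virtu):.1f}%"
    )
```

Metro 2033 is the outlier, with the native setup roughly 45% faster, while the Starcraft 2 result comes out slightly negative, meaning the two configurations are effectively tied there.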

The dGPU doesn't completely turn off when it's not in use in this situation, but it will be in its lowest possible idle state.

The second approach (d-mode) requires that you connect your display directly to the dGPU. This is the preferred route for the absolute best 3D performance since there's no copying of frame buffers. The downside here is that you will likely have higher idle power as Sandy Bridge's on-die GPU is probably more power efficient under non-3D gaming loads than any high end discrete GPU.

With a display connected to the dGPU and with Virtu running you can still access Quick Sync. CrossFire and SLI are both supported in d-mode only.

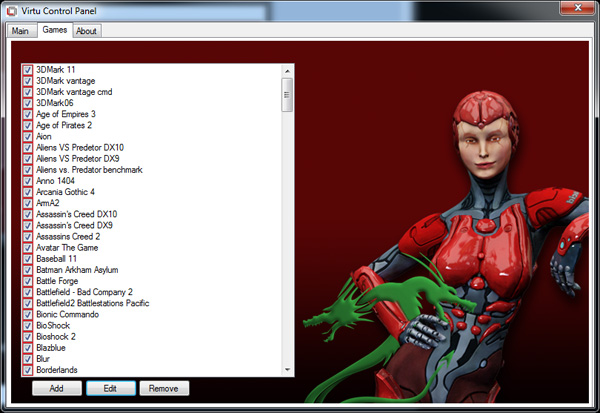

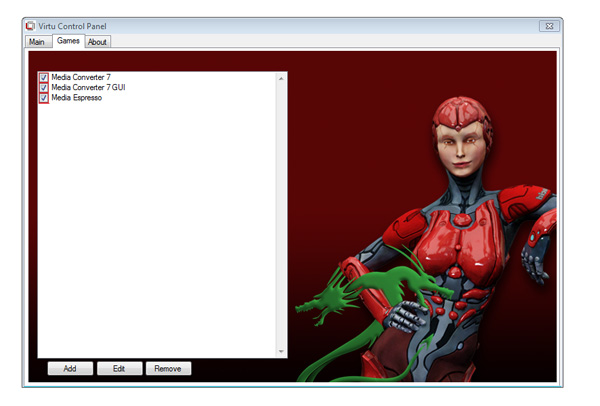

As I mentioned before, Lucid determines where to send commands based on the source of the commands. In i-mode all commands go to the iGPU by default, and in d-mode everything goes to the dGPU. The only exceptions are if there are particular application profiles defined within the Virtu software that list exceptions. In i-mode that means a list of games/apps that should run on the dGPU, and in d-mode that is a smaller list of apps that use Quick Sync (as everything else should run on the dGPU).
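The routing rule described above can be sketched in a few lines of Python. This is only an illustration of the default-plus-exceptions logic, not Lucid's actual implementation: the enum names, the `route` function and the executable names in the profile lists are all hypothetical.

```python
from enum import Enum


class Mode(Enum):
    I_MODE = "i-mode"  # display connected to the on-die GPU
    D_MODE = "d-mode"  # display connected to the discrete GPU


class Gpu(Enum):
    IGPU = "iGPU"
    DGPU = "dGPU"


# Default target for each mode, as described in the text.
DEFAULT_TARGET = {Mode.I_MODE: Gpu.IGPU, Mode.D_MODE: Gpu.DGPU}

# Hypothetical application profiles: apps listed here go to the *other* GPU.
PROFILE_EXCEPTIONS = {
    Mode.I_MODE: {"game_a.exe", "game_b.exe"},  # games/apps that need the dGPU
    Mode.D_MODE: {"transcoder.exe"},            # apps that use Quick Sync
}


def route(mode: Mode, app: str) -> Gpu:
    """Pick the GPU for an application's command stream in the given mode."""
    default = DEFAULT_TARGET[mode]
    if app.lower() in PROFILE_EXCEPTIONS[mode]:
        # A profile match overrides the mode's default target.
        return Gpu.DGPU if default is Gpu.IGPU else Gpu.IGPU
    return default


print(route(Mode.I_MODE, "game_a.exe").value)
print(route(Mode.D_MODE, "transcoder.exe").value)
print(route(Mode.D_MODE, "browser.exe").value)
```

The point of the sketch is that an application with no matching profile simply stays on whichever GPU the display is connected to, which is why missing or stale profiles matter more in i-mode.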

Virtu works, although there are still some random issues when running in i-mode. Your best bet to keep Quick Sync functionality and maintain the best overall 3D performance is to hook your display up to your dGPU and only use Sandy Bridge's GPU for transcoding. Ultimately I'd like to see Intel enable this functionality without the use of third-party software utilities.

106 Comments


davidgamer - Wednesday, May 11, 2011

I was wondering if it would still be possible to do a RAID set up with SRT? For example I would probably want to do a RAID 5 set up with 3 3TB drives but also have the cache enabled, not sure if this would work though.

hjacobson - Thursday, May 12, 2011

RE: Z68 capable of managing SRT and traditional RAID at the same time?

I've looked for an answer to this without success.

I did find out the H67 express chipset can't manage more than one RAID array. I won't be surprised to learn the same for the Z68. Which is to say, your choice: either SRT or traditional RAID, but not both.

Sigh.

jjj - Thursday, May 12, 2011

"I view SRT as more of a good start to a great technology. Now it's just a matter of getting it everywhere."

It actually doesn't have much of a future. So OK, Marvell first made its own chip that does this, now Intel put similar tech on Z68, but let's look at what's ahead. As you said, NAND prices are coming down and soon enough SSDs will start to get into the mainstream, eroding the available market for SRT, while at the same time HDD makers will also have much better hybrid drives. All in all SRT is a few years late.

HexiumVII - Thursday, May 12, 2011

What happens if we have an SSD as a boot drive? Would it recognize it as an SSD and only cache the secondary HDD? It would be nice to have that as my boot SSD is only 80GB and my less frequently used progs are in my 2teras. This is also great for upgraders as now you have a use for your last gen SSD drives!

Bytown - Thursday, May 12, 2011

A feature of the Z68 is that any SSD can be used, up to 64GB in size. Anandtech does the best SSD reviews I've read, and I was disappointed to not see some tests with a larger cache drive, especially when there were issues with bumping data off of the 20GB drive.

I think that a larger cache drive will be the real life situation for a majority of users. There are some nice deals on 30GB to 64GB drives right now and it would be great to see a review that tries to pinpoint the sweet spot in cache drive size.

irsmurf - Thursday, May 12, 2011

Hopefully my next workstation will have an SSD for cache and an HDD for application storage. This will greatly shorten the length of time required to transition to SSD in the workplace. A one drive letter solution is just what was needed for mass adoption.

It's like a supercharger for your hard drive.

GullLars - Thursday, May 12, 2011

This seems like a good usage for old "obsolete" SSDs that you wouldn't use as a boot drive any more. I have a couple of 32GB Mtrons laying around, and while their random write sucks (on par with velociraptor sustained, but not burst) the random read at low QDs is good (10K at QD 1 = 40MB/s). I've been using them as boot drives in older machines running dual cores, but it could be nice to upgrade and use them as cache drives instead.

It would be nice to see a lineup of different older low-capacity SSDs (16-64GB) with the same HDDs used here, for a comparison and to see if there's any point in putting an OCZ Core, Core V2, Apex, Vertex (Barefoot), Transcend TS, Mtron Mobi/Pro, Kingston V+, or WD Silicon Drive on caching duty.

Hrel - Thursday, May 12, 2011

I'd like to see if using something like a Vertex 3 at 64GB would make much difference compared to using Intel's 20GB SSD. Seems like it should evict almost never, so I'd expect some pretty hefty reliability improvements.

marraco - Thursday, May 12, 2011

It's only a matter of time until SSD caching is cracked and enabled on any motherboard.

ruzveh - Thursday, May 19, 2011

Not so impressive as I would like it to be.