The OCZ Vertex 3 Review (120GB)

by Anand Lal Shimpi on April 6, 2011 6:32 PM EST

AnandTech Storage Bench 2011

I didn't expect to have to debut this so soon, but I've been working on updated benchmarks for 2011. Last year we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with the majority of what we have today in terms of SSD benchmarks.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes and typically involved only 4GB of writes specifically. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

I'll be sharing the full details of the benchmark in some upcoming SSD articles but here are some details:

1) The MOASB, officially called AnandTech Storage Bench 2011 - Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

Update: As promised, some more details about our Heavy Workload for 2011.

The test has 2,168,893 read operations and 1,783,447 write operations. The IO breakdown is as follows:

AnandTech Storage Bench 2011 - Heavy Workload IO Breakdown

| IO Size | % of Total |
|---|---|
| 4KB | 28% |
| 16KB | 10% |
| 32KB | 10% |
| 64KB | 4% |

Only 42% of all operations are sequential; the rest range from pseudo to fully random (with most falling in the pseudo-random category). Average queue depth is 4.625 IOs, with 59% of operations taking place in an IO queue of 1.
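As a quick sanity check on the figures above, the read/write mix and the share of operations covered by the listed IO sizes can be worked out directly. This is only an arithmetic sketch: the operation counts and percentages come from the text, while the names (`READ_OPS`, `IO_SIZE_SHARE`, `op_mix`) are made up for illustration.

```python
# Operation counts quoted for the Heavy Workload trace.
READ_OPS = 2_168_893
WRITE_OPS = 1_783_447

# IO size shares as listed in the breakdown table (percent of all operations).
IO_SIZE_SHARE = {"4KB": 28, "16KB": 10, "32KB": 10, "64KB": 4}


def op_mix(reads, writes):
    """Return (read fraction, write fraction) of the total operation count."""
    total = reads + writes
    return reads / total, writes / total


total_ops = READ_OPS + WRITE_OPS
read_frac, write_frac = op_mix(READ_OPS, WRITE_OPS)
listed_share = sum(IO_SIZE_SHARE.values())

print(f"total operations: {total_ops:,}")
print(f"reads: {read_frac:.1%}, writes: {write_frac:.1%}")
print(f"IO sizes listed in the table cover {listed_share}% of operations")
```

By operation count the trace is roughly 55% reads and 45% writes, and the four IO sizes shown account for 52% of all operations, so the remainder falls in sizes the table doesn't list.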

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result I'm going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time I'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, I will also break out performance into reads, writes and combined. The reason I do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
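The text doesn't spell out how average data rate and disk busy time are derived from a trace, so what follows is only a sketch under stated assumptions: each IO is a hypothetical `IoRecord` with issue and completion timestamps, busy time is the union of in-flight intervals (idle gaps are excluded and overlapping IOs aren't double counted), and 1 MB is taken as 10^6 bytes. It is not the actual Storage Bench tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IoRecord:
    """Hypothetical trace record; field names are assumptions for this sketch."""
    start_s: float    # time the IO was issued, in seconds
    end_s: float      # time the IO completed, in seconds
    size_bytes: int
    is_write: bool


def busy_time_s(records):
    """Total length of the union of [start, end) intervals across all IOs."""
    busy = 0.0
    cur_start = cur_end = None
    for r in sorted(records, key=lambda rec: rec.start_s):
        if cur_end is None or r.start_s > cur_end:
            # Gap before this IO: close the previous busy interval.
            if cur_end is not None:
                busy += cur_end - cur_start
            cur_start, cur_end = r.start_s, r.end_s
        else:
            # Overlaps the current busy interval: extend it if needed.
            cur_end = max(cur_end, r.end_s)
    if cur_end is not None:
        busy += cur_end - cur_start
    return busy


def avg_mb_per_s(records):
    """Bytes transferred divided by busy time, in MB/s (1 MB = 10**6 bytes)."""
    busy = busy_time_s(records)
    total_bytes = sum(r.size_bytes for r in records)
    return total_bytes / 1e6 / busy if busy > 0 else 0.0


# Tiny made-up trace: two overlapping IOs, a long idle gap, then one more write.
trace = [
    IoRecord(0.000, 0.010, 4_000_000, is_write=False),
    IoRecord(0.005, 0.020, 2_000_000, is_write=True),
    IoRecord(1.000, 1.010, 3_000_000, is_write=True),
]
reads = [r for r in trace if not r.is_write]
writes = [r for r in trace if r.is_write]

print(f"busy time: {busy_time_s(trace):.3f} s")
print(f"combined:  {avg_mb_per_s(trace):.0f} MB/s")
print(f"reads:     {avg_mb_per_s(reads):.0f} MB/s")
print(f"writes:    {avg_mb_per_s(writes):.0f} MB/s")
```

The trace spans just over a second of wall-clock time, but the drive is only busy for about 30 ms of it. That is why busy time, rather than total elapsed time, is the quantity that separates a faster drive from a slower one.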

There's also a new light workload for 2011. This is a far more reasonable, typical every day use case benchmark. Lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB but it's still multiple times more write intensive than what we were running last year.

As always I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests. All of the older tests are still run on our X58 platform.

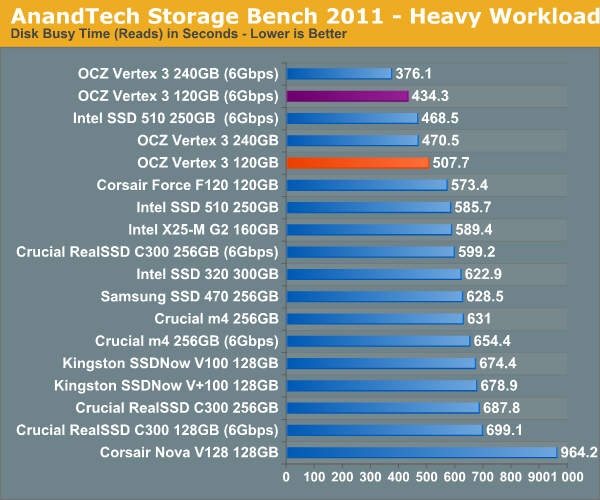

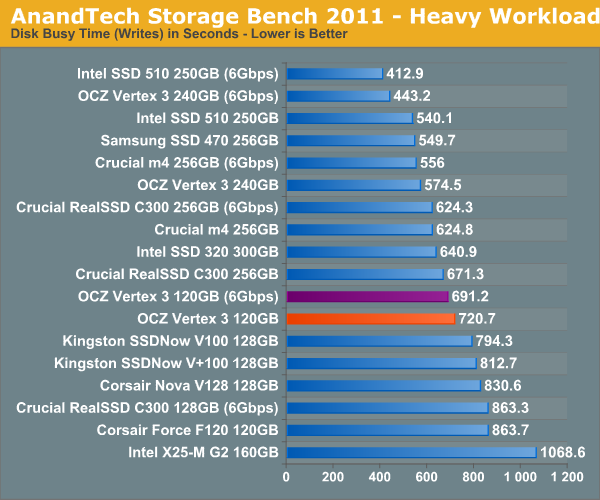

AnandTech Storage Bench 2011 - Heavy Workload

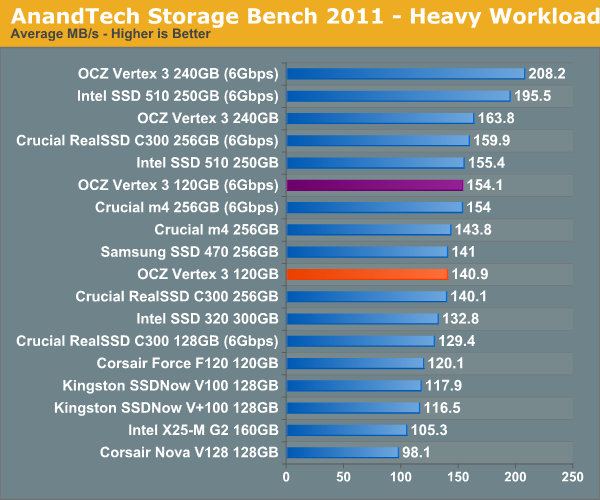

We'll start out by looking at average data rate throughout our new heavy workload test:

In our heavy test for 2011 the 120GB Vertex 3 is noticeably slower than the 240GB sample we tested a couple of months ago. Fewer available die are the primary explanation. We're still waiting on samples of the 120GB Intel SSD 320 and the Crucial m4 but it's looking like this round will be more competitive than we originally thought.

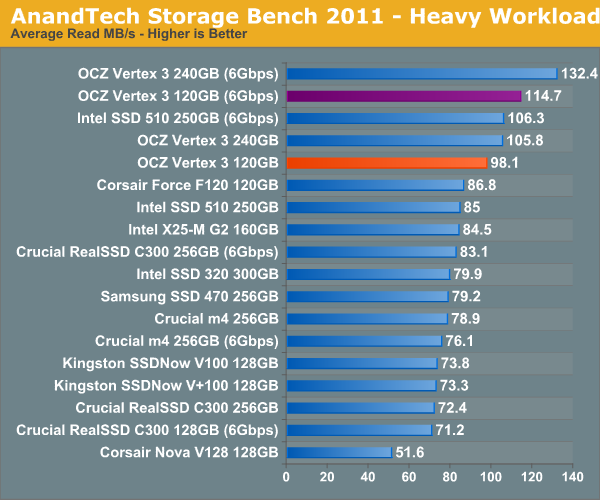

The breakdown of reads vs. writes tells us more of what's going on:

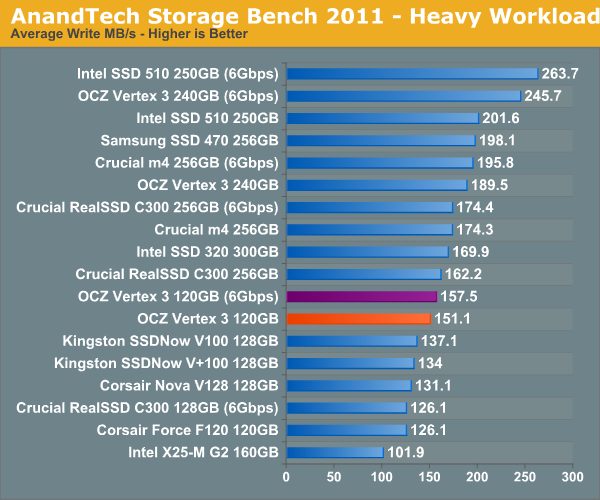

Surprisingly enough it's not read speed that holds the 120GB Vertex 3 back, it's ultimately the lower (incompressible) write speed:

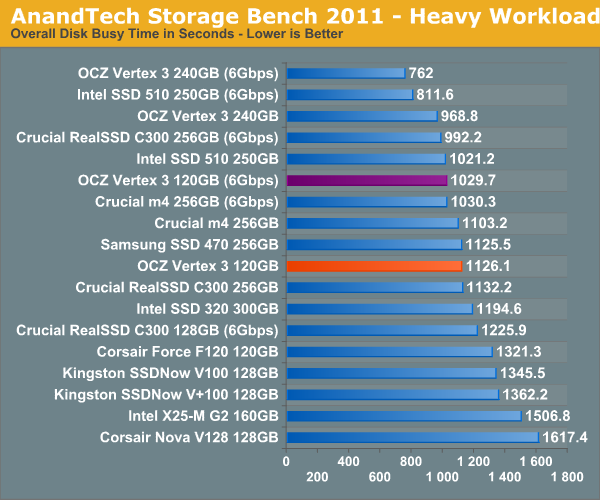

The next three charts just represent the same data, but in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy during this entire test. Note that disk busy time excludes any and all idles; this is just how long the SSD was busy doing something:

153 Comments


jjj - Wednesday, April 6, 2011

any chance of a comparison soon for the new gen SSDs running on p67 vs the non native sata 3 controllers out there(the marvell controller on many 1366 and 1155 boards or/and some cheap PCIe sata3 cards) and maybe an AMD system too?

A5 - Wednesday, April 6, 2011

I think they did a comparison in the P67 article. The P67 controller is the fastest, followed by AMD (it's within a few %), and then the 3rd party controllers are a good bit slower.

Movieman420 - Wednesday, April 6, 2011

What more can I say? I've been chomping at the bit over this issue ever since SR broke the story. As a loong time Ocz customer (ok...fanboy..lol) I couldn't believe Ocz was behaving like that. The max speed rating using the fastest test available is excusable...like you said, if Ocz would have went the altruistic route then the competition would have taken full advantage in about 1 millisecond. After finding out about the inevitable switch to 25nm I quickly ordered another drive for my existing array from a lesser known vendor that I hoped was still selling older stock. I received the drive and to my dismay it was a 25nm/64Gb piece. Adding this drive to my existing array of 34nm/32Gb drives would have a definite negative effect. Which brings me to my point.

"After a dose of public retribution OCZ agreed to allow end users to swap 25nm Vertex 2s for 34nm drives, they would simply have to pay the difference in cost. OCZ realized that was yet another mistake and eventually allowed the swap for free."

This is only partially true. Replacements were offered based on drives that formatted below IDEMA capacity. If your drive formatted to the correct size, you were not eligible to swap. The only problem is that the 64Gb dies were also used in Vertex 2/Agility 2 drives that feature 28 percent over-provisioning (i.e. 50, 100, 200gb models). In this case the decreased capacity was 'hidden' for lack of a better term. This is where I locked horns with them. The exchange was only offered for the 60'E' and 120'E' drives even tho many others suffered the same performance issue for the same reason. I had to raise a bit of hell before they agreed to replace my 25nm/64Gb 'non-E' drive with a 34nm replacement. At first they would only swap for another 25nm drive and I stated that my issue was with performance NOT die size. They ended up replacing my drive with a 34nm model only because it would have put a hurting on my existing raid array of 34nm drives...they made it clear that this was an exception since I had a raid array that would be negatively affected. So anyone who bought a 28 percent OP drive with 64Gb nand chips was DENIED any sort of exchange unless a raid array was involved. As far as I know, that policy still stands unless Ryan or Alex decides to make good on the exchange for 28 percent OP, non 'E' 64Gb die drives which are internally identical to the 'E' drives just with a different amount of OP set by the firmware. While I may have been 'lucky' if you will because I had an array involved, there's people out there that purchased a high OP model which if anything should be a slightly better performer and instead it's the complete opposite. Charge a premium for the more expensive NAND? Absolutely! Just don't offer a half hearted exchange that doesn't cover all models affected...and not just for the ones whose OP doesn't hide the issue.

CloudFire - Wednesday, April 6, 2011

thanks anand! really glad you put some pressure on Ocz. I hope other companies will follow suit as well. Here's to hoping you'd continue to do the right thing for us consumers in the future! :D

Dennis.Huang - Wednesday, April 6, 2011

Thank you for the review and for your actions on behalf of customers. This was a great review for me as a new person to SSDs. Do you have any thoughts of the performance of the 480GB version of the Vertex 3 and/or do you plan to do a review on that version too?

kensiko - Thursday, April 7, 2011

I saw some number on the OCZ forum, I think it came from Ryder, for the 480GB and it performs even better than the 240.

kensiko - Thursday, April 7, 2011

Here:

IO METER (QD=1) 2008 on P67 SATAIII

| Test | 120GB | 240GB | 480GB |
|---|---|---|---|
| 4KB Random READ | 16.31 | 15.58 | 17.77 |
| 4KB Random WRITE | 14.45 | 14.97 | 15.99 |
| 128KB Seq. READ | 190.23 | 255.17 | 355.89 |
| 128KB Seq WRITE | 345.21 | 342.99 | 313.98 |

bennymankin - Wednesday, April 6, 2011

Please include Vertex 2 120GB, as it is probably one of the most popular drives out there. Thank you.

kensiko - Thursday, April 7, 2011

The F120 does it, but true it's not the 25nm

Shark321 - Friday, April 8, 2011

I concur. Vertex 2 120 GB should be compared to Vertex 3 120GB. I suspect the differences will be minimal on SATA II. It's basically the same product, with slight controller and firmware changes.