The OCZ Vertex 3 Review (120GB)

by Anand Lal Shimpi on April 6, 2011 6:32 PM EST

AnandTech Storage Bench 2010

To keep things consistent we've also included our older Storage Bench. Note that the old storage test system doesn't have a SATA 6Gbps controller, so we only have one result for the 6Gbps drives.

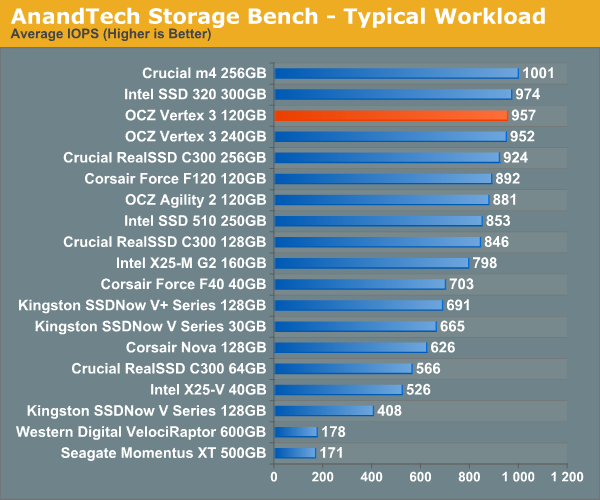

The first in our benchmark suite is a light/typical usage case. The Windows 7 system is loaded with Firefox, Office 2007 and Adobe Reader among other applications. With Firefox we browse web pages like Facebook, AnandTech, Digg and other sites. Outlook is also running and we use it to check emails, create and send a message with a PDF attachment. Adobe Reader is used to view some PDFs. Excel 2007 is used to create a spreadsheet, graphs and save the document. The same goes for Word 2007. We open and step through a presentation in PowerPoint 2007 received as an email attachment before saving it to the desktop. Finally we watch a bit of a Firefly episode in Windows Media Player 11.

There’s some level of multitasking going on here but it’s not unreasonable by any means. Generally the application tasks proceed linearly, with the exception of things like web browsing which may happen in between one of the other tasks.

The recording is played back on all of our drives here today. Remember that we’re isolating disk performance: all we’re doing is playing back every single disk access that happened in that ~5 minute period of usage. The light workload is composed of 37,501 reads and 20,268 writes. Over 30% of the IOs are 4KB, 11% are 16KB, 22% are 32KB and approximately 13% are 64KB in size. Less than 30% of the operations are absolutely sequential in nature. Average queue depth is 6.09 IOs.
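To put those counts in perspective, here is a minimal Python sketch that turns the read and write totals quoted above into a read/write mix. The function and variable names are illustrative only and are not part of the benchmark tooling.

```python
# Operation counts quoted above for the light workload.
LIGHT_READS = 37_501
LIGHT_WRITES = 20_268


def read_write_mix(reads, writes):
    """Return (total operations, read share, write share) for a trace."""
    total = reads + writes
    return total, reads / total, writes / total


total, read_share, write_share = read_write_mix(LIGHT_READS, LIGHT_WRITES)
print(f"total IOs: {total:,}")                                # total IOs: 57,769
print(f"reads: {read_share:.1%}, writes: {write_share:.1%}")  # reads: 64.9%, writes: 35.1%
```

In other words, roughly two out of every three operations in the light trace are reads.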

The performance results are reported in average I/O Operations per Second (IOPS):

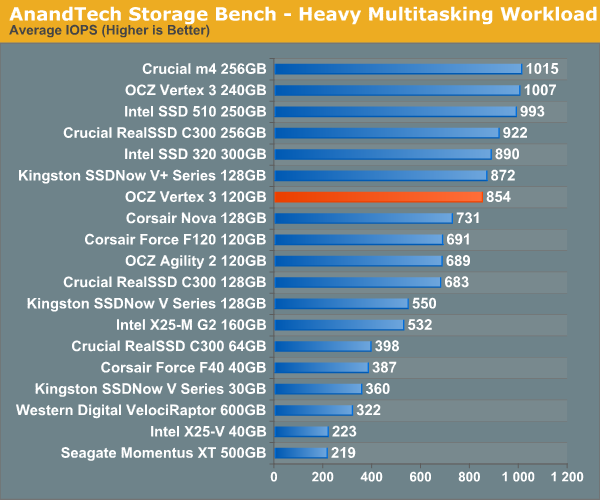
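For readers unfamiliar with the metric, the sketch below shows one simple way an average IOPS figure can be derived from a trace playback. It assumes the average is just the total number of operations divided by the time a drive needs to replay them; the exact accounting used for the charts is not spelled out here. The playback time is a made-up placeholder, not a measured result for any drive.

```python
def average_iops(total_ops, playback_seconds):
    """Average I/O operations per second over a whole trace playback."""
    if playback_seconds <= 0:
        raise ValueError("playback_seconds must be positive")
    return total_ops / playback_seconds


# Light workload: 37,501 reads plus 20,268 writes, as quoted in the text.
LIGHT_TOTAL_OPS = 37_501 + 20_268

# Hypothetical playback time, chosen only to illustrate the arithmetic.
EXAMPLE_PLAYBACK_SECONDS = 30.0

print(f"{average_iops(LIGHT_TOTAL_OPS, EXAMPLE_PLAYBACK_SECONDS):.1f} IOPS")
```

Because the number of operations in the trace is fixed, a drive that finishes the playback sooner posts a proportionally higher average.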

If there’s a light usage case there’s bound to be a heavy one. In this test we have Microsoft Security Essentials running in the background with real time virus scanning enabled. We also perform a quick scan in the middle of the test. Firefox, Outlook, Excel, Word and PowerPoint are all used the same as they were in the light test. We add Photoshop CS4 to the mix, opening a bunch of 12MP images, editing them, then saving them as highly compressed JPGs for web publishing. Windows 7’s picture viewer is used to view a bunch of pictures on the hard drive. We use 7-zip to create and extract .7z archives. Downloading is also prominently featured in our heavy test; we download large files from the Internet during portions of the benchmark, as well as use uTorrent to grab a couple of torrents. Some of the applications in use are installed during the benchmark, and Windows updates are also installed. Towards the end of the test we launch World of Warcraft, play for a few minutes, then delete the folder. This test also takes into account all of the disk accesses that happen while the OS is booting.

The benchmark is 22 minutes long and it consists of 128,895 read operations and 72,411 write operations. Roughly 44% of all IOs were sequential. Approximately 30% of all accesses were 4KB in size, 12% were 16KB in size, 14% were 32KB and 20% were 64KB. Average queue depth was 3.59.

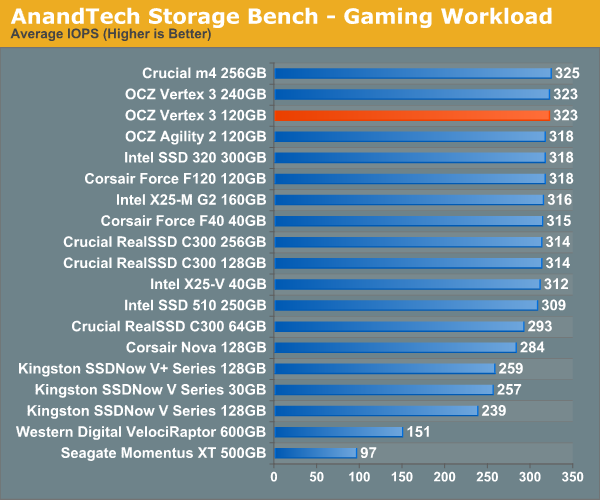

The gaming workload is made up of 75,206 read operations and only 4,592 write operations. Only 20% of the accesses are 4KB in size, nearly 40% are 64KB and 20% are 32KB. A whopping 69% of the IOs are sequential, meaning this is predominantly a sequential read benchmark. The average queue depth is 7.76 IOs.
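To see how lopsided the gaming trace is compared with the other two, the following sketch lines up the read and write counts quoted for all three workloads. The `Workload` class is purely illustrative; only the operation counts come from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    reads: int
    writes: int

    @property
    def total(self) -> int:
        return self.reads + self.writes

    @property
    def read_share(self) -> float:
        return self.reads / self.total


# Counts as quoted for each Storage Bench 2010 workload.
WORKLOADS = [
    Workload("light", 37_501, 20_268),
    Workload("heavy", 128_895, 72_411),
    Workload("gaming", 75_206, 4_592),
]

for w in WORKLOADS:
    print(f"{w.name:>6}: {w.total:>7,} IOs, {w.read_share:.1%} reads")
```

The light and heavy traces both come out at about 64 to 65% reads, while the gaming trace is about 94% reads, which fits its description as a predominantly sequential read test.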

153 Comments


GrizzledYoungMan - Thursday, April 7, 2011 - link

Thank you Anand for your vigilance and consumer advocacy. OCZ's disorganization remains a problem for their customers (and I'm one of them, running OCZ SSDs in all my systems).

Still, I am disappointed by the fact that your benchmarks continue to exaggerate the different between SSDs, instead of realistically portraying the difference between SSDs that a user might notice in daily operation. Follow my thinking:

1. The main goal of buy an SSD, or upgrading an SSD from another SSD, is to improve system responsiveness as it appears to the user.

2. No user particularly cares about the raw performance of their drives as much as how much performance is really available in real-world use.

3. Thus, tests should focus on timing and comparing common operations, in both solo tasking and multi tasking scenarios (like booting, application loading, large catalog/edit files/database loading and manipulation for heavy duty desktop content creation applications and so on).

4. In particular, Sandforce is a huge concern when comparing benchmarks to real world use. Sure, they kill in the benchmarks everyone uses, but many of the most resource intensive (and especially disk intensive) desktop tasks are content creation related (photo and video, primarily) which use incompressible files. How is it that no one has investigated the performance of Sandforce in these situations?

Users here have complained that if we did only #3, only a small difference between SSDs would be apparent. But to my eyes, THAT IS EXACTLY WHAT WE NEED TO KNOW. If the performance delta between generations of SSDs is not really significant, and the price isn't moving, then this is a problem for the industry and consumers alike.

However, creating the perception with unrealistically heavy trace programs that SSDs have significant performance differences (or that different flash types and processes have significant performance differences) when you haven't yet demonstrated that there are real world performance differences in terms of system responsiveness (if anything, you've admitted the opposite on a few occasions) strikes me as a well intentioned but ultimately irresponsible testing method.

I'm sure it's exciting to stick it to OCZ. But really, they are one manufacturer among many, and not the core issue. The core issue is this charade we're all participating in, in which we pretend to understand how SSDs really improve the user experience when we have barely scratched the surface of this issue (or are even heading in the wrong direction).

GrizzledYoungMan - Thursday, April 7, 2011 - link

Wow, typos galore there. Too early, too much going on, too little coffee. Sorry.

kmmatney - Thursday, April 7, 2011 - link

The Anand Storage Bench 2010 "Typical workload" is about as close as you can get (IMO) to a real world test. Maybe it's a heavier multitasking scenario than most of us would use, but I think it's the best test out there to give a real-world assessment of SSDs. Just read the description of the test - I think it already has what you are asking for:

"The first in our benchmark suite is a light/typical usage case. The Windows 7 system is loaded with Firefox, Office 2007 and Adobe Reader among other applications. With Firefox we browse web pages like Facebook, AnandTech, Digg and other sites. Outlook is also running and we use it to check emails, create and send a message with a PDF attachment. Adobe Reader is used to view some PDFs. Excel 2007 is used to create a spreadsheet, graphs and save the document. The same goes for Word 2007. We open and step through a presentation in PowerPoint 2007 received as an email attachment before saving it to the desktop. Finally we watch a bit of a Firefly episode in Windows Media Player 11."

GrizzledYoungMan - Friday, April 8, 2011 - link

Actually, the storage bench is the opposite of what I'm asking for. I've written about this a couple of times, but my complaint is basically that benchmarks exaggerate the difference between SSDs, that in real world use, it might be impossible to tell one apart from another.

The Anand Storage Benches might be the worst offenders in this regard, since they dutifully exaggerate the difference between SSD generations while giving the appearance of a highly precise way to test "real world" workloads.

In particular, the Sandforce architecture is an area of concern. Sure, it blows away everyone in the benchmarks, but the fact that it becomes HDD-slow when given an incompressible workload really has to be explored further. After all, the most disk-intensive desktop workloads all involve manipulating highly compressed (i.e., not compressible further) image files, video files and to a lesser degree audio files. On more than one occasion, I've seen people use Sandforce drives as scratch disks for this sort of thing (given their high sequential writes, it would seem ideal) and been deeply disappointed by the resulting performance.

No response yet from Anand on this. But I'll keep posting. It's nothing personal - if anything, I'm posting here out of respect for Anand's leadership in testing.

KenPC - Thursday, April 7, 2011 - link

Nice write up. And - excellent results getting OCZ to grow up a little bit more.

As a consumer, the solution of SKUs based on NAND will be confusing and complicated. How the heck am I supposed to know if the xxx.34 or the xxx.25 or some future xxxx.Hyn34 or xxxx.IMFT25 is the one that will meet one of the many performance levels offered?

A complicating factor that you mentioned in the article, is that for a specific manufacturer and process size, there can be varying levels of NAND performance.

I strongly urge you to consider working with OCZ to 'bin' the drives with established benchmarks that focus on BOTH random and TRUE non-compressible data rates. SKU suffixes then describe the binned performance.

You also have the opportunity to help set SSD 'industry standard benchmarks' here!

Then give OCZ the license to meet those binned performance levels with the best/lowest cost methods they can establish.

But until OCZ comes up with some 'assured performance level', OCZ is just off of my SSD map.

KenPC

KenPC - Thursday, April 7, 2011 - link

Yes, a reply to my own post......

But how about a unique and novel idea?

What if.. a Vertex 2 is a Vertex 2 is a Vertex 2, as measured by ALL of the '4 pillars' of SSD performance?

Vertex 3's are Vertex 3's, and so on......

If different nand/fw/controller results in any of the parameters 'out of spec', then that version never ships as a 'Vertex 2'.

After all, varying levels of performance is why there is a vertex, a vertex 2, and an onyx and an agility, and an onyx2, and an agility2, and etc etc within the OCZ SSD line.

Why should the consumer need to have to look a second tier of detail to know the product performance?

KenPC

strikeback03 - Friday, April 8, 2011 - link

So any time Sandforce/OCZ upgrades the firmware you need a new product name? If something happened in the IMFT process and they had to buy up Samsung NAND instead, new product? And of course everyone wants to wait for reviews of the new drives before buying.

I personally don't mind them changing stuff as necessary so long as they maintain some minimum performance that they advertise. The real-world benchmarks in the Storage Review articles showed a 2-5% difference, to me that is within margin of error and not a problem for anyone not benchmarking for fun. The Hynix NAND performing at only ~70% of the old ones are a problem, not so much the 25nm ones.

semo - Thursday, April 7, 2011 - link

You've done well. I hope you continue to do this kind of work as it benefits the general public and in this particular case, keeps the bad PR away from a very promising technology.

The OCZ Core and other jmicron drives did plenty to slow down the progress of SSD adoption in to the mainstream. You caught the problem earlier than anyone else and fixed it. This time around it took you longer because of other high priority projects. I think your detective and lobbying work are what keeps us techies checking AT daily. In my opinion, the Vertex 2 section of this article deserves home page space and a catchy title!

Finally, let's not forget that OCZ have not yet fixed this issue. People may still have 25nm drives without knowing it or be capable of understanding the problems. OCZ must issue a recall of all mislabeled drives.

Shadowmaster625 - Thursday, April 7, 2011 - link

It is ridiculous to expect a company to release so many SKUs based on varying NAND types. It costs a company big money to release and keep track of all those SKUs. When you look at the actual real world differences between the different NAND types, it only comes down to a few percentage points of difference. It is like comparing different types of motherboard RAM. It is a waste of time and money to even bother looking at one vs another. OCZ should just tell you all to go pound sand. I suspect they will eventually, if you keep nitpicking like this. The 25nm Vertex 2 is virtually identical to the 34nm version. If you run a complete battery of real world and synthetic tests, you clearly see that they are within a few % of each other. There is no reason for OCZ to waste any more time or money trying to placate a nitpicking nerd mob.

semo - Thursday, April 7, 2011 - link

The real issue was that it wasn't just a few % difference. Some V2 drives were nowhere near the rated capacity with the 25nm NAND. So if you bought 2 V2 drives and they happen to be different versions, RAID wouldn't work. There is still no way to confirm if the V2 you are trying to buy is one of the affected drives as OCZ haven't issued a recall or taken out affected drives from retail shelves. Best way to avoid unnecessary hassle is not to buy OCZ at all. Corsair did a much better job at informing the customer about the transition:

http://www.corsair.com/blog/force25nm/

The performance difference was higher than a few % as well.