The Intel SSD 320 Review: 25nm G3 is Finally Here

by Anand Lal Shimpi on March 28, 2011 11:08 AM EST. Posted in IT Computing, Storage, SSDs, Intel, Intel SSD 320

Spare Area and Redundant NAND

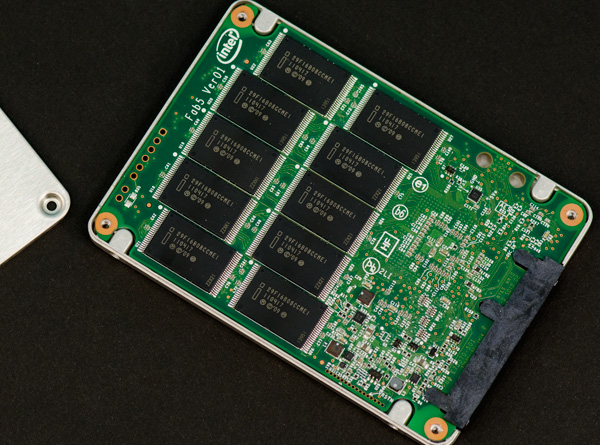

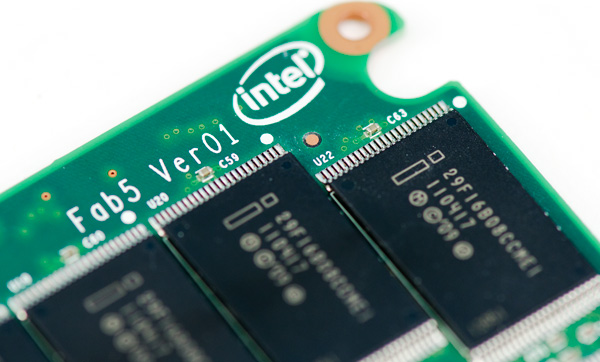

Intel's controller is a 10-channel architecture and thus drive capacities are still a little wonky compared to the competition. Thanks to 25nm NAND we now have some larger capacities to talk about: 300GB and 600GB.

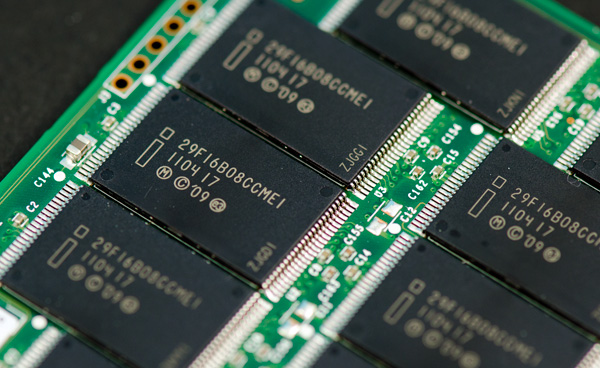

Intel sent a 300GB version of the 320 for us to take a look at. Internally the drive has 20 physical NAND devices. Each NAND device is 16GB in size and features two 64Gbit 25nm 2-bit MLC NAND die. That works out to be 320GB of NAND for a drive whose rated capacity is 300GB. In Windows you'll see ~279GB of free space, which leaves 12.8% of the total NAND capacity as spare area.
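The capacity arithmetic above can be checked with a short sketch. It assumes the NAND is counted in binary units (a 64Gbit die holding 8 GiB) while the rated 300GB is decimal; the names `GB`, `GIB` and `spare_fraction` are illustrative and not from Intel. For comparison it also shows what the spare area would be if the 320GB of NAND were decimal gigabytes.

```python
GB = 10**9    # decimal gigabyte, used for rated drive capacity
GIB = 2**30   # binary gibibyte, what Windows labels "GB"

def spare_fraction(raw_nand_bytes, user_bytes):
    """Fraction of raw NAND that is not exposed to the user."""
    return (raw_nand_bytes - user_bytes) / raw_nand_bytes

# 20 devices x 2 die x 64Gbit per die, assuming binary gigabits
raw_nand_bytes = 20 * 2 * (64 * 2**30 // 8)
raw_nand_gib = raw_nand_bytes // GIB

user_bytes = 300 * GB             # rated capacity
user_gib = user_bytes / GIB       # what Windows reports

spare_if_binary = spare_fraction(raw_nand_bytes, user_bytes)
spare_if_decimal = spare_fraction(320 * GB, user_bytes)

print(f"raw NAND: {raw_nand_gib} GiB, user space: {user_gib:.1f} GiB")
print(f"spare area, binary NAND:  {spare_if_binary:.1%}")
print(f"spare area, decimal NAND: {spare_if_decimal:.2%}")
```

Under the binary assumption the result is about 12.7%, which matches the 12.8% quoted above once 279.4 is rounded down to 279. Under the decimal assumption it would be 6.25%.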

Around half of that spare area is used to keep write amplification low and for wear leveling, both typical uses of spare area. The other half is for surplus NAND arrays, a RAID-like redundancy that Intel is introducing with the SSD 320.

As SandForce realized in the development of its controller, smaller geometry NAND is more prone to failure. We've seen this with the hefty reduction in rated program/erase cycles since the introduction of 50nm NAND. As a result, wear leveling algorithms are very important. With higher densities, however, comes the risk of losing huge amounts of data should a single NAND die fail. SandForce combats the problem by striping parity data across all of the NAND in the SSD array, allowing the recovery of up to a full NAND die should a failure take place. Intel's surplus NAND arrays work in a similar manner.

Instead of striping parity data across all NAND devices in the drive, Intel creates a RAID-4 style system. Parity bits for each write are generated and stored in the remaining half of the spare area in the SSD 320's NAND array. There's more than a full NAND die (~20GB on the 300GB drive) worth of parity data on the 320 so it can actually deal with a failure of more than a single 64Gbit (8GB) die.
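To make the idea of dedicated parity concrete, here is a minimal sketch of RAID-4 style XOR parity. It is not Intel's implementation, which has not been described at this level of detail; the helper names `xor_blocks` and `recover` and the tiny 8-byte "die" contents are hypothetical. Plain XOR parity like this rebuilds only one lost block per stripe.

```python
from functools import reduce

def xor_blocks(blocks):
    """Byte-wise XOR of a list of equally sized byte blocks."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))

def recover(surviving_blocks, parity):
    """Rebuild the single missing block from the survivors plus parity."""
    return xor_blocks(surviving_blocks + [parity])

# One stripe of data spread across three hypothetical NAND die
data_dies = [b"die0data", b"die1data", b"die2data"]

# RAID-4 style: parity lives in a dedicated area rather than being
# rotated across all members as in RAID-5
parity = xor_blocks(data_dies)

# Simulate losing die 1 and rebuilding its contents
lost_index = 1
survivors = [d for i, d in enumerate(data_dies) if i != lost_index]
rebuilt = recover(survivors, parity)
print(rebuilt == data_dies[lost_index])
```

The rebuild works because XOR-ing the parity with every surviving block cancels those blocks out and leaves only the missing one.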

Sequential Write Cap Gone, but no 6Gbps

The one thing that plagued Intel's X25-M was its limited sequential write performance. While we could make an exception for the G1, near the end of the G2's reign as the most-recommended drive the 100MB/s max sequential write speed started being a burden (especially as competing drives caught up and surpassed its random performance). The 320 fixes that by increasing rated sequential write speed to as high as 220MB/s.

You may remember that with the move to 25nm Intel also increased page size from 4KB to 8KB. On the 320, Intel gives credit to the 8KB page size as a big part of what helped it overcome its sequential write speed limitations. With twice as much data coming in per page read it's possible to have a fully page based mapping system and still increase sequential throughput.
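One visible consequence of the larger page is simple arithmetic: a fully page-based map has half as many pages to track, and each page operation moves twice the data. The sketch below only illustrates that arithmetic and is not Intel's explanation of the speedup. It assumes 320 GiB of raw NAND and binary page sizes (4 KiB and 8 KiB); `pages_to_map` is a hypothetical helper.

```python
KIB = 2**10
GIB = 2**30

def pages_to_map(nand_bytes, page_bytes):
    """Number of physical pages a fully page-based map has to track."""
    return nand_bytes // page_bytes

nand_bytes = 320 * GIB  # assumed raw NAND on the 300GB drive

entries_4k = pages_to_map(nand_bytes, 4 * KIB)
entries_8k = pages_to_map(nand_bytes, 8 * KIB)

print(f"4KB pages: {entries_4k:,} map entries")
print(f"8KB pages: {entries_8k:,} map entries")
```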

Given that the controller hasn't changed since 2009, the 320 doesn't support 6Gbps SATA. We'll see this limitation manifest itself as a significantly reduced sequential read/write speed in the benchmark section later.
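For a sense of where the interface ceiling sits, the sketch below works out the best-case payload rate of a SATA link. SATA uses 8b/10b encoding, so every data byte costs 10 bits on the wire. The function name is illustrative, and real drives land below these figures because of protocol overhead.

```python
def sata_payload_mb_per_s(line_rate_bps):
    """Best-case payload rate in MB/s for a SATA link using 8b/10b encoding."""
    data_bits_per_s = line_rate_bps * 8 // 10   # 8 data bits per 10 line bits
    return data_bits_per_s // 8 // 1_000_000    # bits -> bytes -> MB

sata_3g = sata_payload_mb_per_s(3_000_000_000)
sata_6g = sata_payload_mb_per_s(6_000_000_000)

print(f"3Gbps SATA: {sata_3g} MB/s ceiling")
print(f"6Gbps SATA: {sata_6g} MB/s ceiling")
```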

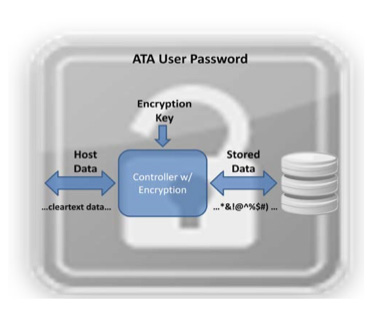

AES-128 Encryption

SandForce introduced full disk encryption starting in 2010 with its SF-1200/SF-1500 controllers. On SandForce drives all data written to NAND is stored in an encrypted form. This encryption only protects you if someone manages to desolder the NAND from your SSD and probe it directly. If you want your drive to remain for your eyes only you'll need to set an ATA password, which on PCs is forced by setting a BIOS password. Do this on a SandForce drive and try to move it to another machine and you'll be faced with an unreadable drive. Your data is already encrypted at line speed and it's only accessible via the ATA password you set.

Intel's SSD 320 enables a similar encryption engine. By default all writes the controller commits to NAND are encrypted using AES-128. The encryption process happens in realtime and doesn't pose a bottleneck to the SSD's performance.

The 320 ships with a 128-bit AES key from the factory; however, a new key is randomly generated every time you secure erase the drive. To further secure the drive, the BIOS/ATA password method I described above works as well.

A side effect of having all data encrypted on the NAND is that secure erases happen much quicker. You can secure erase a SF drive in under 3 seconds as the controller just throws away the encryption key and generates a new one. Intel's SSD 320 takes a bit longer but it's still very quick at roughly 30 seconds to complete a secure erase on a 300GB drive. Intel is likely also just deleting the encryption key and generating a new one. Without the encryption key, the data stored in the NAND array is meaningless.
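The key-discard approach to secure erase can be modeled in a few lines. This is a toy model only: the class `ToyEncryptedDrive` is hypothetical, and a SHA-256 based keystream stands in for the drive's AES-128 engine so that the sketch runs with the Python standard library alone. It says nothing about how Intel or SandForce actually manage keys.

```python
import hashlib
import secrets

class ToyEncryptedDrive:
    """Toy model: everything stored in 'NAND' is encrypted under one key."""

    def __init__(self):
        self._key = secrets.token_bytes(16)   # 128-bit key
        self._nand = {}                       # lba -> ciphertext

    def _keystream(self, lba, length):
        # Stand-in cipher (NOT AES): SHA-256 over key, LBA and a counter
        out = b""
        counter = 0
        while len(out) < length:
            block = self._key + lba.to_bytes(8, "big") + counter.to_bytes(4, "big")
            out += hashlib.sha256(block).digest()
            counter += 1
        return out[:length]

    def write(self, lba, data):
        stream = self._keystream(lba, len(data))
        self._nand[lba] = bytes(a ^ b for a, b in zip(data, stream))

    def read(self, lba):
        ciphertext = self._nand[lba]
        stream = self._keystream(lba, len(ciphertext))
        return bytes(a ^ b for a, b in zip(ciphertext, stream))

    def secure_erase(self):
        # No NAND is touched: the old key is dropped and a new one generated
        self._key = secrets.token_bytes(16)

drive = ToyEncryptedDrive()
secret = b"for your eyes only, 32 bytes ok!"
drive.write(0, secret)
readable_before = drive.read(0) == secret

drive.secure_erase()
readable_after = drive.read(0) == secret
print(readable_before, readable_after)
```

The erase completes in constant time regardless of capacity because the ciphertext stays where it is; it simply can no longer be decrypted.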

The Test

| Component | Configuration |
|---|---|
| CPU: | Intel Core i7 965 running at 3.2GHz (Turbo & EIST Disabled); Intel Core i7 2600K running at 3.4GHz (Turbo & EIST Disabled) - for AT SB 2011, AS SSD & ATTO |
| Motherboard: | Intel DX58SO (Intel X58); Intel H67 Motherboard |
| Chipset: | Intel X58 + Marvell SATA 6Gbps PCIe; Intel H67 |
| Chipset Drivers: | Intel 9.1.1.1015 + Intel IMSM 8.9; Intel 9.1.1.1015 + Intel RST 10.2 |
| Memory: | Qimonda DDR3-1333 4 x 1GB (7-7-7-20) |
| Video Card: | eVGA GeForce GTX 285 |
| Video Drivers: | NVIDIA ForceWare 190.38 64-bit |
| Desktop Resolution: | 1920 x 1200 |
| OS: | Windows 7 x64 |

194 Comments


bji - Monday, March 28, 2011

But don't they? I am pretty sure that the listed price for a 160 GB drive is about the same as I paid for my 80 GB G2 a year ago. Maybe these prices are higher than current prices on the older generation drives but you really should compare products at the same point of their life cycle to be fair. The G3 is half the cost that the G2 was at launch (actually less than half if I am remembering correctly) and in a year when they are at a later point in the product life cycle they will be half as expensive or less per GB than the G2 is at the same point of its life cycle.

qwertymac93 - Monday, March 28, 2011

I think we all knew it wouldn't be as fast as the new SandForces, but I didn't expect it to be so expensive. I guess Intel figured people will buy it just because it's Intel. Not me though, I'm waiting for next-gen (<25nm) SSDs before I make the plunge; I want sub-$1 per gig.

A5 - Monday, March 28, 2011

Oh well - $170 for 120GB is still pretty good.

AnnonymousCoward - Sunday, April 3, 2011

G2 120GB is $230.

aork - Monday, March 28, 2011

"That works out to be 320GB of NAND for a drive whose rated capacity is 300GB. In Windows you'll see ~279GB of free space, which leaves 12.8% of the total NAND capacity as spare area."

Actually you only see 279 GB of free space in Windows because Windows displays in GiB (2^30 bytes), not true GB (10^9 bytes). In truth, you are only getting the expected 6.25% of the total NAND capacity as spare area.

Stahn Aileron - Monday, March 28, 2011

Flash storage is, as far as I know, always treated as binary multiples, not decimal. SSD drive manufacturers take advantage of the discrepancy between the OS's definition and the HD manufacturers' definition of storage units (GiB - 2^(x*10) - vs GB - 10^(x*3)) to help cover the spare area.

It also helps keep everything consistent within the storage industry. Can you imagine the fallout from having a 300GB SSD actually being 300GiB vs an "identically sized" 300GB HDD reporting only 279GiB? If the consumers don't get pissy about that, I'm positive the HDD manufacturers would.

Mr Perfect - Monday, March 28, 2011

Yeah, disappointing really. The switch to SSDs would have been a golden opportunity for drives to format to what the label says. Oh well.

Taft12 - Monday, March 28, 2011

Marketing would never allow that. We're not going back to binary measures of storage ever again :(

Zan Lynx - Monday, March 28, 2011

And why would we? RAM is the only thing that was ever calculated in powers of 2. Ever.

CPU MHz? 10-base.

Network speed? 10-base.

Hard drive capacity? Always 10-base. Since forever!

Making Flash size in base-2 would introduce a new exception, not restore any wondrous old measurement system.

If the label says 300 GB and the box contains 300,000,000,000 bytes, then it does contain exactly what was advertised. Giga as a prefix has always meant 1 billion. Gigahertz? 1 billion cycles per second. Gigameter? 1 billion meters. 1 gigaflops? 1 billion floating point operations per second.

jwilliams4200 - Monday, March 28, 2011

Well said, Zan. I thought that almost everyone knew this, but it is disappointing to see Anand still using the wrong units in his reviews and tables. Windows reports sizes in GiB, but incorrectly labels it GB. Unfortunately, Anand makes the same mistake. Sad.