NVIDIA's Project Kal-El: Quad-Core A9s Coming to Smartphones/Tablets This Year

by Anand Lal Shimpi on February 15, 2011 9:05 PM EST

If there's any one takeaway from both CES and Mobile World Congress this year, it's that NVIDIA is unequivocally a player in the SoC space. With design wins from LG, Motorola and Samsung, NVIDIA may not have the entire market but it has enough of it to be taken seriously.

In our Optimus 2X Review I mentioned that it looked like NVIDIA was going to be moving to a 6-month product cycle in the SoC space. The intention is to out-execute its competitors frequently enough that they are either forced out of the market or into making a mistake trying to keep up. It's the same strategy that NVIDIA used to compete with 3dfx almost fifteen years ago.

I wrote that in 2011 NVIDIA would release Tegra 2 followed by the Tegra 2 3D (a higher clocked version of the Tegra 2 with support for 3D content) and finally the Tegra 3 before the end of the year. While it wasn't too long ago that NVIDIA was telling people about its 6-month product cycle, things have changed.

The Tegra 2 3D looks like it's not going to happen. The higher clocked SoC is not currently in any designs that are in the pipeline. There are Tegra 2 based smartphones and tablets that are due out this year, but nothing based on T25/AP25 as far as I can tell.

Although the middle of the roadmap changed, it's the end of 2011 that's sort of amazing. Internally NVIDIA referred to this chip as Tegra 3, and externally we expected it at the tail end of 2011 with devices launching in Q1 2012.

NVIDIA got the first silicon back from the fab 12 days ago. While the chip may end up being called Tegra 3 or some variation of that, for now NVIDIA refers to it as Project Kal-El. Named after the young Superman (or Nicolas Cage's son), Kal-El will be sampling this year and shipping in devices as early as August 2011.

The Roadmap

I must say that this is highly unusual behavior for an SoC manufacturer. Qualcomm recently announced its dual-core MSM8960 would be sampling in Q2 2011 and shipping in devices starting next year. NVIDIA is announcing sampling starting sometime very soon (the chip is only 12 days old after all) and device availability before the end of the year.

NVIDIA went on to be even more specific. Tablets based on Kal-El will be available starting August 2011, while smartphones will be available this Christmas and into the first half of next year. This is either NVIDIA overcommitting to an unrealistic future or the most aggressive schedule we've seen from an SoC vendor yet. NVIDIA won some points by actually pulling off the coup with Tegra 2 this year; however, it's still too early to tell whether we'll see the whole thing repeated again just 9 months from now. I'm willing to at least give NVIDIA the benefit of the doubt here.

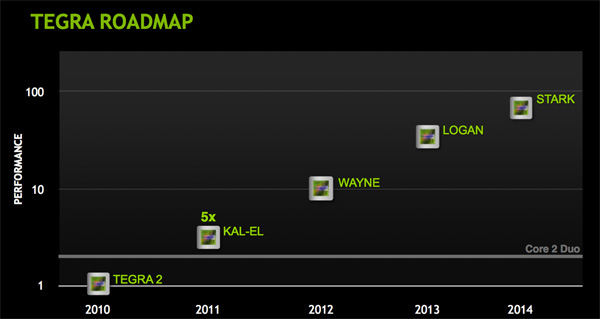

It doesn't stop with Kal-El either. NVIDIA is committing to a yearly refresh of its architecture. NVIDIA quantifies the move from Tegra 2 to Kal-El as a 5x increase in performance. By 2012 we'll have Wayne, which doubles performance over Kal-El. Then we've got another 5x increase over Wayne with Logan in 2013. The furthest NVIDIA is willing to go out is 2014 with Stark, at roughly a doubling of the performance offered by Logan.

The baseline reference point is Tegra 2, which NVIDIA expects Stark to outperform by a factor of 100x. NVIDIA also expects Kal-El to be somewhere in the realm of the performance of a Core 2 Duo processor (more on this later).

Based on the cadence that NVIDIA presented, it looks like every year we'll either get a doubling or 5x increase in performance over the previous year. Kal-El is one of those 5x years, followed by a doubling with Wayne, 5x again with Logan and a doubling with Stark. Now the performance axis in the chart above is really vague, so end users will likely not see 5x Tegra 2 with Kal-El, but they will see something tangible at least.
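
The compounding behind that 100x figure is easy to check. The snippet below is only a sketch of the arithmetic: the multipliers are NVIDIA's claimed generation-over-generation gains as quoted above, not measured results, and the names `ROADMAP` and `cumulative_vs_tegra2` are made up for illustration.

```python
# Claimed generation-over-generation multipliers from NVIDIA's roadmap,
# as quoted in the article. These are marketing figures, not benchmarks.
ROADMAP = [
    ("Kal-El", 2011, 5),
    ("Wayne", 2012, 2),
    ("Logan", 2013, 5),
    ("Stark", 2014, 2),
]


def cumulative_vs_tegra2(roadmap):
    """Return {chip name: claimed performance relative to Tegra 2 (= 1x)}."""
    result = {}
    total = 1
    for name, _year, step in roadmap:
        total *= step  # each generation multiplies the previous one
        result[name] = total
    return result


relative = cumulative_vs_tegra2(ROADMAP)
for name, factor in relative.items():
    print(f"{name}: {factor}x Tegra 2")
```

Multiplying the steps through (5 x 2 x 5 x 2) is what puts Stark at 100x the Tegra 2 baseline.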

76 Comments

theagentsmith - Wednesday, February 16, 2011

There is the Mobile World Congress happening right now in the nice city of Barcelona.... almost every company involved in mobile electronics sector is showing off new products, that's why you see only news about smartphones!

R3MF - Wednesday, February 16, 2011

nvidia, you have not lost the magic!

Dribble - Wednesday, February 16, 2011

@40nm the power draw would be too high for a phone so I don't suppose there's much point having this processor in one until 28nm arrives.

However for the new tablet market you have larger batteries so you can target them with a higher power draw soc (it's still going to be much much smaller than any x86 chip and I expect the big screen will still be sucking most of the power).

Impressive they got it working first time, puts a lot of pressure on competitors who are still struggling to catch up with tegra 2 let alone compete with this.

SOC_speculation - Wednesday, February 16, 2011

Very cool chip, lots of great technology. But it will not be successful in the market.

a 1080p high profile decode onto a tablet's SXGA display can easily jump into the 1.2GB/s range. if you drive it over HDMI to a TV and then run a small game or even a nice 3D game on the tablet's main screen, you can easily get into the 1.7 to 2GB/s range.

why is this important? a 533Mhz lpddr2 channel has a max theoretical bandwidth of 4.3GB/s. Sounds like enough right? well, as you increase frequency of ddr, your _actual_ bandwidth lowers due to latency issues. in addition, across workloads, the actual bandwidth you can get from any DDR interface is between 40 to 60% of the theoretical max.

So that means the single channel will get between 2.5GBs (60%) down to 1.72 (40%). Trust me, ask anyone who designs SOCs, they will confirm the 40 to 60% bandwidth #.

So the part will be restricted to use cases that current single core/single channel chips can do.

So this huge chip with 4 cores, 1440p capable, probably 150MT/s 3D, has an Achilles heel the size of Manhattan. Don't believe what Nvidia is saying (that dual channel isn't required). They know its required but for some reason couldn't get it into this chip.
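
The bandwidth figures in the comment above can be reproduced with a short calculation. This is a sketch under stated assumptions: a 32-bit channel width is assumed because it is the width that yields the quoted 4.3GB/s peak, the 40 to 60% efficiency range is the commenter's rule of thumb rather than a measured value, and the function name `lpddr2_peak_gb_per_s` is hypothetical.

```python
def lpddr2_peak_gb_per_s(clock_mhz, bus_width_bits=32):
    """Theoretical peak bandwidth of one DDR channel in GB/s.

    bus_width_bits=32 is an assumption; it matches the ~4.3 GB/s
    figure quoted in the comment for a 533 MHz channel.
    """
    transfers_per_s = clock_mhz * 1e6 * 2  # DDR: two transfers per clock
    bytes_per_transfer = bus_width_bits / 8
    return transfers_per_s * bytes_per_transfer / 1e9


peak = lpddr2_peak_gb_per_s(533)

# The commenter's rule of thumb: 40% to 60% of peak is achievable in practice.
low, high = 0.40 * peak, 0.60 * peak

print(f"peak:      {peak:.2f} GB/s")
print(f"effective: {low:.2f} to {high:.2f} GB/s")
```

Under those assumptions the low end of the effective range sits right at the 1.7 to 2GB/s the comment estimates for video output plus a 3D game, which is the basis of the single-channel objection.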

overzealot - Monday, February 21, 2011

Actually, as memory frequency increases bandwidth and latency improve.

araczynski - Wednesday, February 16, 2011

so if i know that what i'm about to buy is outdated by a factor of two or five not even a year later, i'm not very likely to bother buying at all.

kenyee - Wednesday, February 16, 2011

Crazy how fast stuff is progressing. I want one...at least this might justify the crazy price of a Moto Xoom tablet.... :-)

OBLAMA2009 - Wednesday, February 16, 2011

it makes a lot of sense to differentiate phones from tablets by giving them much faster cpus, higher resolutions and longer battery life. otherwise why get a tablet if you have a cell phone

yvizel - Wednesday, February 16, 2011

"NVIDIA also expects Kal-El to be somewhere in the realm of the performance of a Core 2 Duo processor (more on this later)."

I don't think that you referred to this statement anywhere in the article.

Can you elaborate?

Quindor - Wednesday, February 16, 2011

Seems to me NVidia might be pulling a Qualcomm, meaning they are going with what they have and are trying to stretch it out longer and wider before giving us the complete redesign/refresh. You can see this quite clearly at the MWC right now.

Not a bad strategy as far as I can tell right now. Only threat that I see is that Qualcomm is actually scheduled to release their new core design around the time Nvidia will be releasing the Kal-El.

So who's going to win that bet? ;) More IPC VS Raw Ghz/cores. Quite a reversed world too if you ask me, because Qualcomm was never big on IPC and went for the 1Ghz hype.

Hopefully NVidia doesn't make the same mistakes as with the GPU market, building such a revolutionary designs that they actually design "sideways" from the market. Making their GPU's fantastic in certain area's, which might not take off at all.

Mind you, I'm an NVidia fan... but it won't be the first time NVidia releases a revolutionary architecture, which isn't as efficient as they thought it would be. ;)