OCZ Vertex 3 Pro Preview: The First SF-2500 SSD

by Anand Lal Shimpi on February 17, 2011 3:01 AM EST

The Unmentionables: NAND Mortality Rate

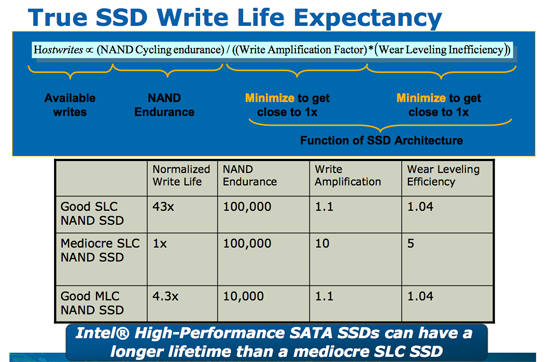

When Intel introduced its X25-M based on 50nm NAND technology we presented this slide:

A 50nm MLC NAND cell can be programmed/erased 10,000 times before it's dead. The reality is good MLC NAND will probably last longer than that, but 10,000 program/erase cycles was the spec. Update: Just to clarify, once you exceed the program/erase cycles you don't lose your data, you just stop being able to write to the NAND. On standard MLC NAND your data should be intact for a full year after you hit the maximum number of p/e cycles.

When we transitioned to 34nm, the NAND makers forgot to mention one key fact. MLC NAND no longer lasts 10,000 cycles at 34nm - the number is now down to 5,000 program/erase cycles. The smaller you make these NAND structures, the harder it is to maintain their integrity over thousands of program/erase cycles. While I haven't seen datasheets for the new 25nm IMFT NAND, I've heard the consumer SSD grade stuff is expected to last somewhere between 3,000 and 5,000 cycles. This sounds like a very big problem.

Thankfully, it's not.

My personal desktop sees about 7GB of writes per day. That can be pretty typical for a power user and a bit high for a mainstream user but it's nothing insane.

Here's some math I did not too long ago:

| My SSD | |
| --- | --- |
| NAND Flash Capacity | 256 GB |
| Formatted Capacity in the OS | 238.15 GB |
| Available Space After OS and Apps | 185.55 GB |
| Spare Area | 17.85 GB |

If I never install another application and just go about my business, my drive has 203.4GB of space (185.55GB of free space plus 17.85GB of spare area) to spread out those 7GB of writes per day. That means in roughly 29 days, assuming my SSD wear levels perfectly, I will have written to every single available flash block on my drive. Tack on another 7 days if the drive is smart enough to move my static data around to wear level even more evenly. So we're at approximately 36 days before I exhaust one out of my ~10,000 write cycles. Multiply that out and it would take 360,000 days of using my machine for all of my NAND to wear out; once again, assuming perfect wear leveling. That's 986 years. Your NAND flash cells will actually lose their charge well before that time comes, in about 10 years.

Now that calculation is based on 50nm 10,000 p/e cycle NAND. What about 34nm NAND with only 5,000 program/erase cycles? Cut the time in half - 180,000 days. If we're talking about 25nm with only 3,000 p/e cycles the number drops to 108,000 days.
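If you want to replay that arithmetic with your own numbers, here's a minimal Python sketch. The function and variable names are hypothetical (they don't come from any tool), and it bakes in the same assumptions as the text: perfect wear leveling, no write amplification, and an extra 7 days per pass for static data. Because it doesn't round down to 36 days per pass, its results land slightly above the rounded figures quoted above.

```python
def days_per_full_pass(writable_gb, host_writes_gb_per_day, static_data_days=0.0):
    """Days needed to write once to every available flash block (idealized)."""
    return writable_gb / host_writes_gb_per_day + static_data_days


def days_until_worn_out(pe_cycles, days_per_pass):
    """Days until every block has consumed all of its rated p/e cycles."""
    return pe_cycles * days_per_pass


# Figures from the table above: free space plus spare area.
writable_gb = 185.55 + 17.85

# 7GB of host writes per day, plus the 7 extra days assumed for static data.
per_pass = days_per_full_pass(writable_gb, 7.0, static_data_days=7.0)

for node, cycles in [("50nm", 10_000), ("34nm", 5_000), ("25nm", 3_000)]:
    days = days_until_worn_out(cycles, per_pass)
    print(f"{node}: {days:,.0f} days (~{days / 365:,.0f} years)")
```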

Now this assumes perfect wear leveling and no write amplification. The best SSDs don't average more than 10x write amplification; in fact, they're considerably lower. But even if your drive writes 10x as much to the NAND as the host sends it, even the worst 25nm compute NAND will last you throughout your drive's warranty.
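As a rough illustration of the write amplification point, the sketch below scales the rounded 36-day figure by a write amplification factor. The names are hypothetical, and it assumes NAND wear scales linearly with write amplification, which is a simplification rather than a model of any real controller.

```python
DAYS_PER_PASS = 36.0  # rounded estimate from the text: 29 days + 7 for static data


def lifetime_years(pe_cycles, write_amplification=1.0, days_per_pass=DAYS_PER_PASS):
    """Years until every block has used all of its p/e cycles (idealized)."""
    if write_amplification <= 0:
        raise ValueError("write amplification must be positive")
    # Higher write amplification means each pass over the NAND finishes sooner.
    nand_days = pe_cycles * days_per_pass / write_amplification
    return nand_days / 365.0


for wa in (1.0, 2.0, 10.0):
    years = lifetime_years(3_000, wa)
    print(f"25nm, 3,000 cycles, {wa:g}x write amplification: {years:.1f} years")
```

Even at 10x, the 3,000 cycle case works out to somewhere between 29 and 30 years in this idealized model, far longer than any SSD warranty.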

For a desktop user running a desktop (non-server) workload, the chances of your drive dying within its warranty period due to you wearing out all of the NAND are basically nothing. Note that this doesn't mean that your drive won't die for other reasons before then (e.g. poor manufacturing, controller/firmware issues, etc...), but you don't really have to worry about your NAND wearing out.

This is all in theory, but what about in practice?

Thankfully one of the unwritten policies at AnandTech is to actually use anything we recommend. If we're going to suggest you spend your money on something, we're going to use it ourselves. Not in testbeds, but in primary systems. Within the company we have 5 SandForce drives deployed in real, everyday systems. The longest-running of them has been in use, without TRIM, for the past eight months at between 90 and 100% of its capacity.

SandForce, like some other vendors, exposes a method of actually measuring write amplification and remaining p/e cycles on its drives. Unfortunately the method of doing so for SandForce is undocumented and under strict NDA. I wish I could share how it's done, but all I'm allowed to share are the results.

Remember that write amplification is the ratio of NAND writes to host writes. On all non-SF architectures that number should be greater than 1 (e.g. you go to write 4KB but you end up writing 128KB). Due to SF's real-time compression/dedupe engine, it's possible for SF drives to have write amplification below 1.

So how did our drives fare?

The worst write amplification we saw was around 0.6x. Actually, most of the drives we've deployed in house came in at 0.6x. In this particular drive the user (who happened to be me) wrote 1900GB to the drive (roughly 7.7GB per day over 8 months) and the SF-1200 controller in turn threw away 800GB and only wrote 1100GB to the flash. This includes garbage collection and all of the internal management stuff the controller does.

Over this period of time I used only 10 cycles of flash (it was a 120GB drive) out of a minimum of 3000 available p/e cycles. In eight months I only used 1/300th of the lifespan of the drive.
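The numbers for this drive can be checked with a few lines of Python. This is only a sketch: the function names are hypothetical, and dividing by the advertised 120GB capacity is an assumption (the raw NAND on the drive is larger), so the cycle count comes out a little under the roughly 10 cycles quoted above.

```python
def write_amplification(nand_writes_gb, host_writes_gb):
    """Ratio of data written to the NAND to data written by the host."""
    return nand_writes_gb / host_writes_gb


def pe_cycles_used(nand_writes_gb, capacity_gb):
    """Average p/e cycles consumed, assuming perfectly even wear."""
    return nand_writes_gb / capacity_gb


host_gb = 1900.0      # written by the host over eight months
nand_gb = 1100.0      # actually written to flash by the controller
capacity_gb = 120.0   # assumption: advertised capacity, not raw NAND
rated_cycles = 3000   # minimum rated p/e cycles from the text

wa = write_amplification(nand_gb, host_gb)
cycles = pe_cycles_used(nand_gb, capacity_gb)

print(f"write amplification: {wa:.2f}x")
print(f"p/e cycles used: {cycles:.1f} of {rated_cycles}")
print(f"fraction of rated lifespan used: {cycles / rated_cycles:.4f}")
```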

The other drives we had deployed internally are even healthier. It turns out I'm a bit of a write hog.

Paired with a decent SSD controller, write lifespan is a non-issue. Note that I only fold Intel, Crucial/Micron/Marvell and SandForce into this category. Write amplification goes up by up to an order of magnitude with the cheaper controllers. Characterizing this is what I've been spending much of the past six months doing. I'm still not ready to present my findings but as long as you stick with one of these aforementioned controllers you'll be safe, at least as far as NAND wear is concerned.

144 Comments


FCss - Thursday, February 17, 2011 - link

"My personal desktop sees about 7GB of writes per day." maybe a stupid question but how do you check the amount of your daily writes?

And one more question: if you have a 128GB SSD and you leave let's say 40GB unformatted so the user can't fill up the disk, will the controller use this space the same way as if it belonged to the spare area?

Quindor - Thursday, February 17, 2011 - link

I use a program called "HDDLED" for this. It shows you some easily accessible LEDs on your screen and if you hover over them, you can see the current and total disk usage since your PC was booted up.

FCss - Thursday, February 17, 2011 - link

thanks, a great software

Breit - Thursday, February 17, 2011 - link

Isn't the total bytes written to the drive since manufacturing part of the SMART data you can read from your drive? All you have to do then is note down the value when you boot up your PC in the morning and subtract it from the value you read there the next day.

Chloiber - Thursday, February 17, 2011 - link

Or you can just take the average..

marraco - Thursday, February 17, 2011 - link

Vertex 2 takes advantage of unformatted space. So OCZ advises leaving 20% of space unformatted (although that's to improve garbage collection, it means that unformatted space is used).

7Enigma - Thursday, February 17, 2011 - link

Come on Anand! In your example you have 185GB free on a 256GB drive. I think that is the least likely scenario that paints an overly optimistic case in terms of write life. Everyone knows not to completely fill up their drive but are you telling me that the vast majority of users are going to have 78% of their drive free at all times? I just don't buy it.

The more common scenario is that a consumer purchases a drive slightly larger than needed (due to how expensive these luxuries still are). So that 256GB drive probably will only have 20-40GB free. Do that and that 36 days for a single use of the NAND becomes ~5-8 days (no way to move static data around at this capacity level). Factor in write amplification (0.6X to 10X) and you lower the time to between 4-25 years for hitting that 3000X cap.

Still not a HUGE problem, but much more relevant than saying this drive will last for hundreds of years (not counting NAND lifespan itself).

7Enigma - Thursday, February 17, 2011 - link

Bah I thought the write amplification was 1.6X. That changes the numbers considerably (enough that the point is moot). I still think the example in the article was not a normal circumstance but it seems to still not be an issue.

<pie to face>

mark53916 - Thursday, February 17, 2011 - link

Encrypted files are not compressible, so you won't get any advantage from the hardware write compression.

7Enigma - Thursday, February 17, 2011 - link

Hi Anand,

Looks like one of the numbers is incorrect in this chart. Right now it shows LOWER performance after TRIM than when the drive was completely full. The 230MB/sec value seems to be incorrect.