OCZ Vertex 3 Pro Preview: The First SF-2500 SSD

by Anand Lal Shimpi on February 17, 2011 3:01 AM EST

Performance vs. Transfer Size

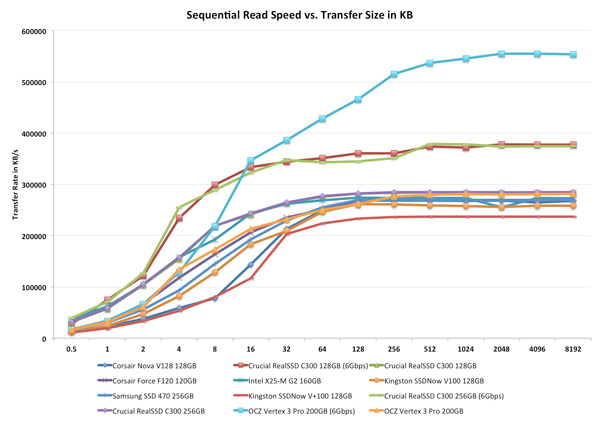

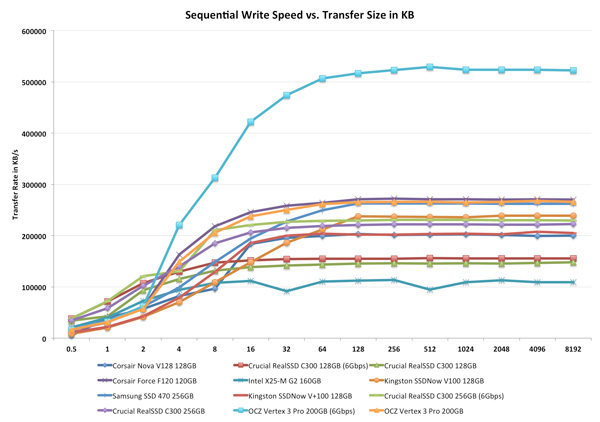

All of our Iometer sequential tests happen at a queue depth of 1, which is indicative of a light desktop workload. It isn't too far-fetched to see much higher queue depths on the desktop. The performance of these SSDs also varies greatly based on the size of the transfer. For this next test we turn to ATTO and run a sequential write over a 2GB span of LBAs at a queue depth of 4 while varying the size of the transfers.
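For readers who want a feel for what a transfer-size sweep looks like, here is a minimal Python sketch. It is not ATTO and it is not how the charts in this article were produced: the function name `sweep_write_sizes`, the small span and the temporary file target are all assumptions made for illustration. It also issues one write at a time (effectively a queue depth of 1) and goes through the file system rather than raw LBAs, so the numbers it prints are only a rough guide.

```python
import os
import tempfile
import time


def sweep_write_sizes(path, span_bytes, transfer_sizes):
    """Sequentially write span_bytes to path once per transfer size.

    Returns {transfer_size_in_bytes: throughput_in_MB_per_s}.
    """
    results = {}
    for size in transfer_sizes:
        buf = bytes(size)  # all zeros, i.e. fully compressible data
        start = time.perf_counter()
        # buffering=0 so every write() call maps to one transfer of `size` bytes
        with open(path, "wb", buffering=0) as f:
            written = 0
            while written < span_bytes:
                written += f.write(buf)
            os.fsync(f.fileno())  # ask the OS to flush its cache to the device
        elapsed = time.perf_counter() - start
        results[size] = written / elapsed / 1e6
    return results


if __name__ == "__main__":
    # Hypothetical parameters: an 8MB span instead of 2GB to keep the run short.
    sizes = [512 * 2**i for i in range(12)]  # 512B up to 1MB
    with tempfile.TemporaryDirectory() as tmp:
        target = os.path.join(tmp, "sweep.bin")
        for size, mb_s in sweep_write_sizes(target, 8 * 2**20, sizes).items():
            print(f"{size:>8} bytes: {mb_s:8.1f} MB/s")
```

Point the target path at a file on the drive you care about to get a crude version of the curve; a proper benchmark would bypass the file system cache entirely and keep several requests in flight.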

On a 6Gbps SATA port the Vertex 3 Pro is unstoppable. For transfer sizes below 16KB it's actually a bit average, and definitely slower than the RealSSD C300. But once you hit 16KB and above, the performance is earth-shattering. The gap at 128KB isn't even as big as it gets; we don't see performance level off until 2048KB transfers.

The 3Gbps performance is pretty unimpressive. In fact, the Vertex 3 Pro actually comes in a bit slower than the SF-1200 based Corsair Force F120. If you're going to get the most out of this drive you had better have a good 6Gbps controller.
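A quick back-of-the-envelope calculation shows why the interface matters so much. SATA uses 8b/10b encoding on the wire, so only 8 of every 10 bits carry data. The helper name `sata_payload_ceiling_mb_s` below is made up for this sketch, and the result is a theoretical ceiling that ignores protocol overhead, not a measured figure.

```python
def sata_payload_ceiling_mb_s(line_rate_gbps):
    """Upper bound on payload throughput for a SATA link, in MB/s.

    SATA uses 8b/10b encoding, so only 8 of every 10 bits on the wire are data.
    Protocol overhead lowers real-world numbers further.
    """
    data_bits_per_s = line_rate_gbps * 1e9 * 8 / 10
    return data_bits_per_s / 8 / 1e6  # bits -> bytes -> MB


for rate in (3, 6):
    print(f"{rate}Gbps SATA: at most {sata_payload_ceiling_mb_s(rate):.0f} MB/s")
```

A drive that can move data faster than the 3Gbps ceiling simply has nowhere to put the extra bandwidth on the older interface.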

ATTO's writes are fully compressible, indicative of the sort of performance you'd get on applications/libraries/user data and not highly compressed multimedia files. Here the advantage is just hilarious. By the 8KB mark the Vertex 3 Pro is already faster than everything else, but by 128KB the gap is more of a chasm separating the 6Gbps Vertex 3 Pro from its competitors.
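To illustrate the difference between fully compressible data and data that won't compress, here is a small Python sketch. It uses zlib purely as a stand-in; it says nothing about the algorithm the SandForce controller actually uses, and the `compression_ratio` helper and the 1MB sample size are assumptions for the example.

```python
import os
import zlib


def compression_ratio(data):
    """Return compressed size divided by original size (lower is more compressible)."""
    return len(zlib.compress(data)) / len(data)


size = 1 << 20  # 1MB sample (arbitrary choice for this sketch)
zeros = bytes(size)             # stands in for fully compressible test data
random_data = os.urandom(size)  # stands in for already-compressed media files

print(f"zeros:  {compression_ratio(zeros):.4f}")
print(f"random: {compression_ratio(random_data):.4f}")
```

The all-zero buffer shrinks to a tiny fraction of its original size while the random buffer doesn't shrink at all, which is the same split a compressing controller sees between benchmark-friendly data and compressed multimedia.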

Over a 3Gbps interface the Vertex 3 Pro once again does well but still doesn't really differentiate itself from the SF-1200 based Force F120. Real world performance is probably a bit higher as most transfers aren't perfectly compressible, but again if you don't have a good 6Gbps interface (think Intel 6-series or AMD 8-series) then you probably should wait and upgrade your motherboard first.

144 Comments

jwilliams4200 - Friday, February 18, 2011 - link

In that case, it would be helpful to print two after-TRIM benchmarks: (1) immediately after TRIM and (2) steady-state after-TRIM (i.e., TRIM, let the drive sit idle for long enough for GC to complete, then benchmark again).

jcompagner - Thursday, February 17, 2011 - link

What I never understood (or maybe I should read the previous articles a bit more) is how an SSD can write many times faster than it can read. It seems to me that reading is way easier to do than writing...

vol7ron - Friday, February 18, 2011 - link

I originally thought that, but SSDs first write to the controller, which organizes the data for storing it to the disk. The major point is that the data can go anywhere in the array of NAND nodes and the list of the next available node in the stack is available almost immediately, whereas a read requires a hash lookup of where the data is stored, which means the seek could take longer to accomplish.

I, as well, am not certain that's true, but that's my best guess.

AnnihilatorX - Saturday, February 19, 2011 - link

Only for Sandforce controllers.

Sandforce compresses the incoming data in real time. If the incoming data is highly compressible (in a very extreme example, writing a 500MB blank text file), the write will be nearly instantaneous. So you see 500MB/ms or something ridiculous.

It is also possible for write speeds to exceed reads in bursts when a small amount of data is written to DRAM on other controllers.

Soul_Master - Thursday, February 17, 2011 - link

For zero impact from source performance, I suggest copying data from a RAM drive to your test hard disk.

Anand Lal Shimpi - Thursday, February 17, 2011 - link

That's a great suggestion. I ran out of time before I left the country but I'll be playing with it some more upon my return :)

Take care,

Anand

MrBrownSound - Thursday, February 17, 2011 - link

I think the Intel X25-M was a pretty good control group to send the data from. I would actually like to see the changes when sending the data through the RAM; that would be interesting.

Hacp - Thursday, February 17, 2011 - link

Anand,

You still direct your readers to your Vertex2 article but OCZ has changed its performance on those drives. Your results are no longer valid and it would be dishonest to link the old Vertex2 performance numbers in this new article when they do not reflect the new slower performance of the Vertex2 today.

Anand Lal Shimpi - Thursday, February 17, 2011 - link

I've seen the discussion and based on what I've seen it sounds like very poor decision making on OCZ's behalf. Unfortunately my 25nm drive didn't arrive before I left for MWC. I hope to have it by the time I get back next week and I'll run through the gamut of tests, updating as necessary. I also plan on speaking with OCZ about this. Let me get back to the office and I'll begin working on it :)

As far as old Vertex 2 numbers go, I didn't actually use a Vertex 2 here (I don't believe any older numbers snuck in here). The Corsair Force F120 is the SF-1200 representative of choice in this test.

Take care,

Anand

Quindor - Thursday, February 17, 2011 - link

Good to hear that you are addressing the problems surrounding the Vertex 2 drives. There aren't many websites out there which deliver well thought through reviews and benchmarks such as Anandtech does, although some are getting better.

I did some benchmarks on my own and with the new 25nm NAND the new 180GB OCZ Vertex2 can actually be slower than my more than a year old 120GB OCZ Vertex1.

If anyone is interested, they can find an overview of the benchmarks performed on the following page. https://picasaweb.google.com/quindor/Benchmarks#

Still, I would love to see an in-depth comparison as you are famous for. ;)

For my personal usage scenario (my own ESXi server), the speed decrease will have minimal effect because I'm running multiple template-cloned guests, so the dedup and compression should be able to do their work just fine. ;)