AMD's Radeon HD 6970 & Radeon HD 6950: Paving The Future For AMD

by Ryan Smith on December 15, 2010 12:01 AM EST

Power, Temperature, & Noise

Last but not least, as always, is our look at the power consumption, temperatures, and acoustics of the Radeon HD 6900 series. This is an area where AMD has traditionally had an advantage, as their small die strategy leads to less power-hungry and cooler products compared to their direct NVIDIA counterparts. However, NVIDIA has made some real progress lately with the GTX 570, while Cayman is no longer a small die.

AMD continues to use a single reference voltage for their cards, so the voltages we see here represent what we’ll see for all reference 6900 series cards. In this case voltage also plays a big part, as PowerTune’s TDP profile is calibrated around a specific voltage.

Radeon HD 6900 Series Load Voltage

| Ref 6970 | Ref 6950 | 6970 & 6950 Idle |
|---|---|---|
| 1.175V | 1.100V | 0.900V |
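As a rough illustration of why voltage matters so much to a TDP profile, the sketch below applies the textbook first-order rule that dynamic CMOS power scales with the square of voltage at a fixed clock. This is our own simplifying assumption, not something AMD has published for Cayman: it ignores leakage and the clockspeed differences between the cards, and the function name is hypothetical.

```python
def relative_dynamic_power(voltage: float, reference_voltage: float) -> float:
    """Dynamic power relative to a reference voltage.

    Assumes P is proportional to V^2 at equal clock and switched capacitance
    (a first-order approximation that ignores leakage).
    """
    return (voltage / reference_voltage) ** 2


# Voltages taken from the table above.
REF_6970_V = 1.175
REF_6950_V = 1.100
IDLE_V = 0.900

for label, volts in [("6950 load", REF_6950_V), ("6900 series idle", IDLE_V)]:
    ratio = relative_dynamic_power(volts, REF_6970_V)
    print(f"{label}: {ratio:.1%} of the dynamic power at 6970 load voltage, equal clocks")
```

Under that assumption the 6950's lower voltage alone would be worth roughly 12% less dynamic power than the 6970 at the same clock, before its lower clockspeed is even considered.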

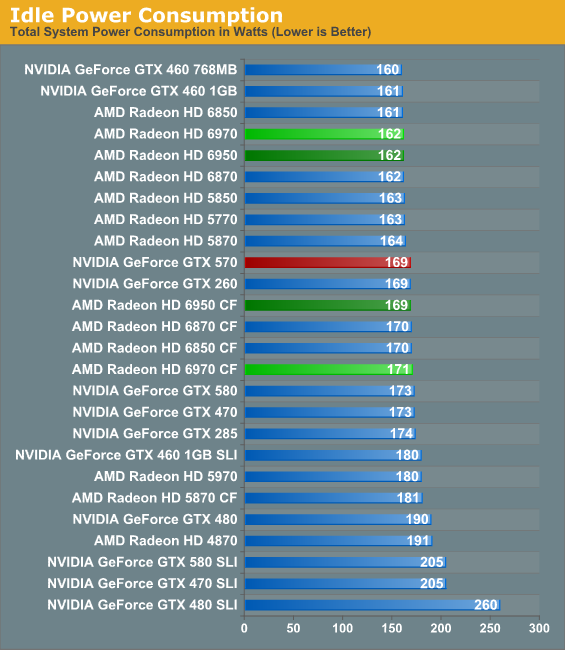

As we discussed at the start of our look at these cards, AMD has been tweaking their designs to take advantage of TSMC’s more mature 40nm process. As a result they’ve been able to bring down idle power usage slightly, even though Cayman is a larger chip than Cypress. For this reason the 6970 and 6950 both can be found at the top of our charts, running into the efficiency limits of our 1200W PSU.

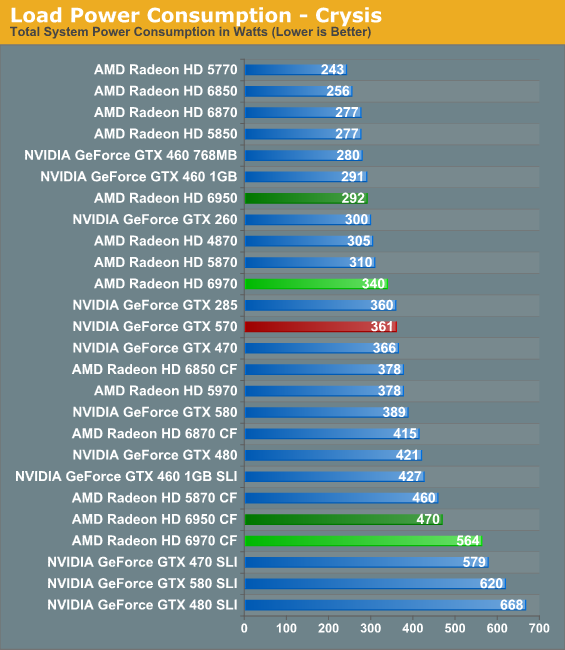

Under Crysis, PowerTune is not a significant factor, as Crysis does not generate enough of a load to trigger it. Accordingly our results are rather straightforward, with the larger, more power hungry 6970 drawing around 30W more than the 5870. The 6950 meanwhile is rated 50W lower and draws almost exactly 50W less. At 292W it’s 15W more than the 5850, or effectively tied with the GTX 460 1GB.

Between Cayman’s larger die and NVIDIA’s own improvements in power consumption, the 6970 doesn’t end up being very impressive here. True, it does draw 20W less, but with the 5000 series AMD’s higher power efficiency was much more pronounced.

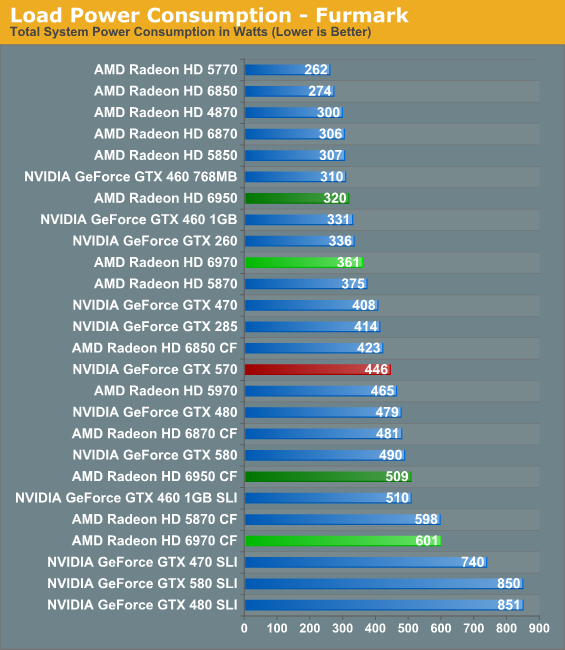

It’s under FurMark that we finally see the complete ramifications of AMD’s PowerTune technology. The 6970, even with a TDP over 60W above the 5870, still ends up drawing less power than the 5870 due to PowerTune throttling. This puts our FurMark results at odds with our Crysis results, which showed an increase in power usage, but as we’ve already covered, PowerTune tightly clamps power usage to AMD’s TDP, keeping the 6900 series’ worst case scenario for power consumption far below the 5870. While we could increase the TDP to 300W, we have no practical reason to, as even with PowerTune FurMark still accurately represents the worst case scenario for a 6900 series GPU.

Meanwhile at 320W the 6950 ends up drawing more power than its counterpart the 5850, but not by much. Its CrossFire variant meanwhile is drawing 509W, only 19W over a single GTX 580, driving home the point that PowerTune significantly reduces power usage for high load programs such as FurMark.
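To make the clamping behavior concrete, here is a minimal sketch of a blind power cap. It is not AMD's actual PowerTune algorithm: it assumes power scales linearly with core clock at a fixed voltage, and the function name, step size, clock floor, clockspeed, and wattages are all hypothetical values chosen for illustration rather than measurements from our testing.

```python
from dataclasses import dataclass


@dataclass
class CapResult:
    clock_mhz: float   # clock after any throttling
    power_w: float     # estimated power at that clock
    throttled: bool    # True if the clock was lowered


def clamp_to_tdp(demand_w_at_full_clock: float,
                 full_clock_mhz: float,
                 tdp_w: float,
                 min_clock_mhz: float = 500.0,
                 step_mhz: float = 10.0) -> CapResult:
    """Lower the clock in fixed steps until estimated power fits under the cap.

    Assumption: at a fixed voltage, power scales linearly with clock.
    The cap is applied to every workload, with no per-program detection.
    """
    clock = full_clock_mhz
    power = demand_w_at_full_clock
    while power > tdp_w and clock - step_mhz >= min_clock_mhz:
        clock -= step_mhz
        power = demand_w_at_full_clock * clock / full_clock_mhz
    return CapResult(clock, power, clock < full_clock_mhz)


# Hypothetical workloads: one that would exceed the cap, one that would not.
heavy = clamp_to_tdp(demand_w_at_full_clock=320.0, full_clock_mhz=880.0, tdp_w=250.0)
light = clamp_to_tdp(demand_w_at_full_clock=200.0, full_clock_mhz=880.0, tdp_w=250.0)
print(heavy)
print(light)
```

With these made-up inputs the heavy workload is pulled down until it fits under the cap while the light workload runs at full clocks, which mirrors the split we see between FurMark and Crysis.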

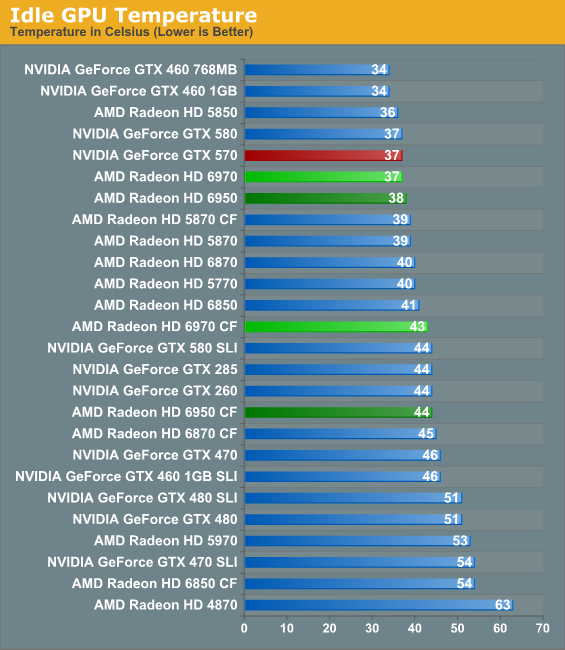

At idle the 6900 series is in good company with a number of other lower power and well-built GPUs. 37-38C is typical for these cards solo; meanwhile our CrossFire numbers conveniently point out the fact that the 6900 series doesn’t do particularly well when its cards are stacked right next to each other.

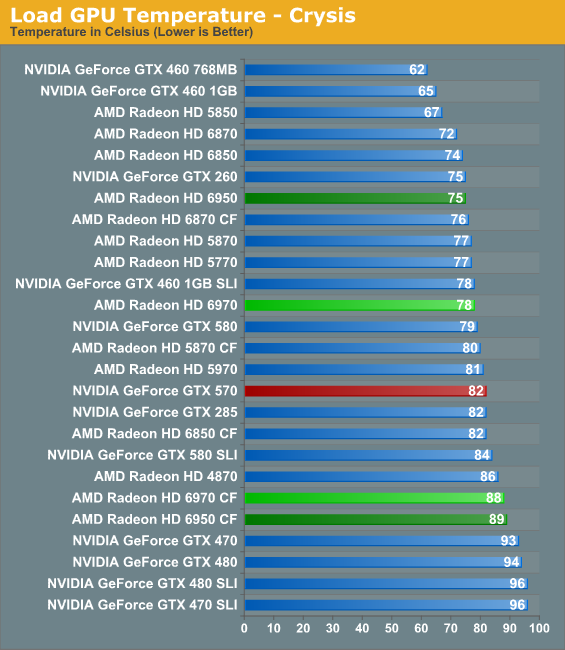

When it comes to Crysis our 6900 series cards end up performing very similarly to our 5800 series cards, a tradeoff between the better vapor chamber cooler and the higher average power consumption when gaming. Ultimately it’s going to be noise that ties all of this together, but there’s certainly nothing objectionable about temperatures in the mid-to-upper 70s. Meanwhile our 6900 series CF cards approach the upper 80s, significantly worse than our 5800 series CF cards.

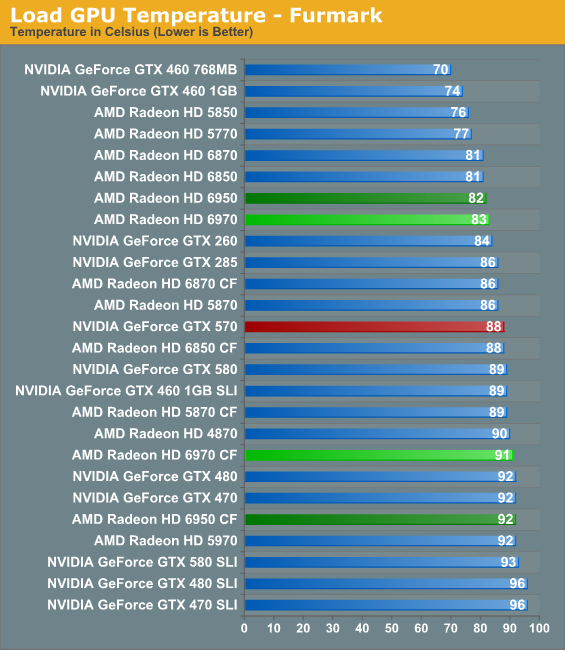

Faced once more with FurMark, we see the ramifications of PowerTune in action. For the 6970 this means a temperature of 83C, a few degrees better than the 5870 and 5C better than the GTX 570. Meanwhile the 6950 is at 82C in spite of the fact that it uses a similar cooler in a lower powered configuration; it’s not as amazing as the 5850, but it’s still quite reasonable.

The CF cards on the other hand are up to 91C and 92C despite the fact that PowerTune is active. This is within the cards’ thermal range, but we’re ready to blame the cards’ boxy design for the poor CF cooling performance. You really, really want to separate these cards if you can.

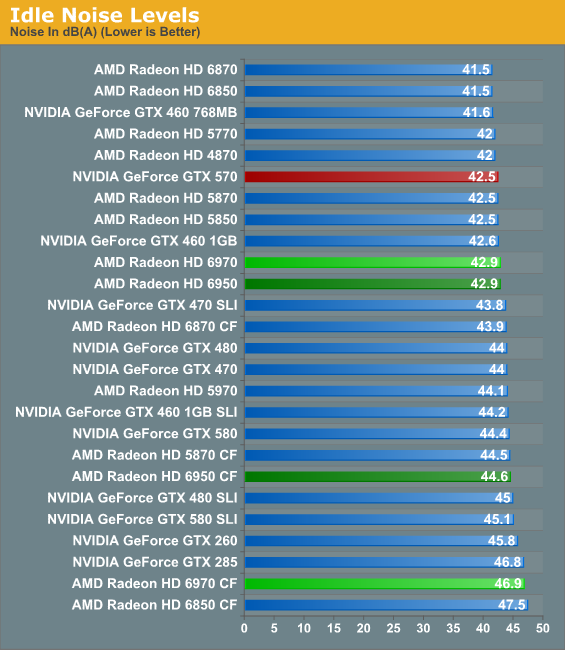

At idle both the 6970 and 6950 are on the verge of running into our noise floor. With today’s idle power techniques there’s no reason a card needs to have high idle power usage, or the louder fan that it often leads to.

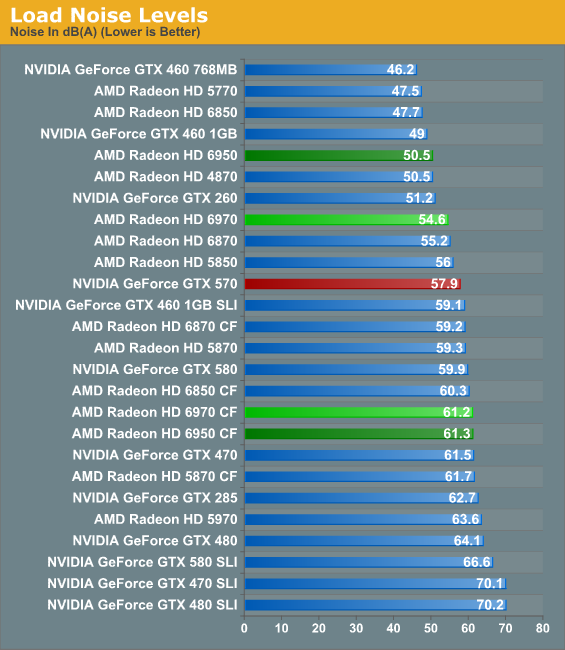

Last but not least we have our look at load noise. Both cards end up doing quite well here, once more thanks to PowerTune. As is the case with power consumption, we’re looking at a true worst case scenario for noise, and both cards do very well. At 50.5dB and 54.6dB neither card is whisper quiet, but for the gaming performance they provide it’s a very good tradeoff and quieter than a number of slower cards. As for our CrossFire cards, the poor ventilation spills over into our noise tests. Once more, if you can separate your cards you should do so for greatly improved temperature and noise performance.

168 Comments

AnnonymousCoward - Wednesday, December 15, 2010 - link

First of all, 30fps is choppy as hell in a non-RTS game. ~40fps is a bare minimum, and >60fps all the time is hugely preferred since then you can also use vsync to eliminate tearing.

Now back to my point. Your counter was "you know that non-AA will be higher than AA, so why measure it?" Is that a point? Different cards will scale differently, and seeing 2560+AA doesn't tell us the performance landscape at real-world usage which is 2560 no-AA.

Dug - Wednesday, December 15, 2010 - link

Is it me, or are the graphs confusing.

Some leave out cards on certain resolutions, but add some in others.

It would be nice to have a dynamic graph link so we can make our own comparisons.

Or a drop down to limit just ati, single card, etc.

Either that or make a graph that has the cards tested at all the resolutions so there is the same number of cards in each graph.

benjwp - Wednesday, December 15, 2010 - link

Hi,

You keep using Wolfenstein as an OpenGL benchmark. But it is not. The single player portion uses Direct3D9. You can check this by checking which DLLs it loads or which functions it imports or many other ways (for example most of the idTech4 renderer debug commands no longer work).

The multiplayer component does use OpenGL though.

Your best bet for an OpenGL gaming benchmark is probably Enemy Territory Quake Wars.

Ryan Smith - Wednesday, December 15, 2010 - link

We use WolfMP, not WolfSP (you can't record or playback timedemos in SP).

7Enigma - Wednesday, December 15, 2010 - link

Hi Ryan,

What benchmark do you use for the noise testing? Is it Crysis or Furmark? Along the same line of questioning I do not think you can use Furmark in the way you have the graph setup because it looks like you have left Powertune on (which will throttle the power consumption) while using numbers from NVIDIA's cards where you have faked the drivers into not throttling. I understand one is a program cheat and another a TDP limitation, but it seems a bit wrong to not compare them in the unmodified position (or VERBALLY mention this had no bearing on the test and they should not be compared).

Overall nice review, but the new cards are pretty underwhelming IMO.

Ryan Smith - Thursday, December 16, 2010 - link

Hi 7Enigma;

For noise testing it's FurMark. As is the case with the rest of our power/temp/noise benchmarks, we want to establish the worst case scenario for these products and compare them along those lines. So the noise results you see are derived from the same tests we do for temperatures and power draw.

And yes, we did leave PowerTune at its default settings. How we test power/temp/noise is one of the things PowerTune made us reevaluate. Our decision is that we'll continue to use whatever method generates the worst case scenario for that card at default settings. For NVIDIA's GTX 500 series, this means disabling OCP because NVIDIA only clamps FurMark/OCCT, and to a level below most games at that. Other games like Program X that we used in the initial GTX 580 article clearly establish that power/temp/noise can and do get much worse than what Crysis or clamped FurMark will show you.

As for the AMD cards the situation is much more straightforward: PowerTune clamps everything blindly. We still use FurMark because it generates the highest load we can find (even with it being reduced by over 200MHz), however because PowerTune clamps everything, our FurMark results are the worst case scenario for that card. Absolutely nothing will generate a significantly higher load - PowerTune won't allow it. So we consider it accurate for the purposes of establishing the worst case scenario for noise.

In the long run this means that results will come down as newer cards implement this kind of technology, but then that's the advantage of such technology: there's no way to make the card louder without playing with the card's settings. For the next iteration of the benchmark suite we will likely implement a game-based noise test, even though technologies like PowerTune are reducing the dynamic range.

In conclusion: we use FurMark, we will disable any TDP limiting technology that discriminates based on the program type or is based on a known program list, and we will allow any TDP limiting technology that blindly establishes a firm TDP cap for all programs and games.

-Thanks

Ryan Smith

7Enigma - Friday, December 17, 2010 - link

Thanks for the response Ryan! I expected it to be lost in the slew of other posts. I highly recommend (as you mentioned in your second to last paragraph) that a game-based benchmark is used along with the Furmark for power/noise. Until both adopt the same TDP limitation it's going to put the NVIDIA cards in a bad light when comparisons are made. This could be seen as a legitimate beef for the fanboys/trolls, and we all know the less ammunition they have the better. :)

Also to prevent future confusion it would be nice to have what program you are using for the power draw/noise/heat IN the graph title itself. Just something as simple as "GPU Temperature (Furmark-Load)" would make it instantly understandable.

Thanks again for the very detailed review (on 1 week nonetheless!)

Hrel - Wednesday, December 15, 2010 - link

I really hope these architecture changes lead to better minimum FPS results. AMD is ALWAYS behind Nvidia on minimum FPS and in many ways that's the most important measurement since min FPS determines if the game is playable or not. I don't care if it maxes out 122 FPS if when the shit hits the fan I get 15 FPS, I won't be able to accurately hit anything.

Soldier1969 - Wednesday, December 15, 2010 - link

I'm disappointed in the 6970, it's not what I was expecting over my 5870. I will wait to see what the 6990 brings to the table next month. I'm looking for a 30-40% boost from my 5870 at 2560 x 1600 res I game at.

stangflyer - Wednesday, December 15, 2010 - link

Now that we see the power requirements for the 6970 and that it needs more power than the 5870 how would they make a 6990 without really cutting off the performance like the 5970?

I had a 5970 for a year b4 selling it 3 weeks ago in preparation of getting 570 in sli or 6990.

It would obviously have to be 2x8 pin power! Or they would have to really use that powertune feature.

I liked my 5970 as I didn't have the stuttering issues (or i don't notice them) And actually have no issues with eyefinity as i have matching dell monitors with native dp inputs.

If I was only on one screen I would not even be thinking upgrade but the vram runs out when using aa or keeping settings high as I play at 5040x1050. That is the only reason I am a little shy of getting the 570 in sli.

Don't see how they can make a 6990 without really killing the performance of it.

I used my 5970 at 5870 and beyond speeds on games all the time though.