NVIDIA's GeForce GTX 580: Fermi Refined

by Ryan Smith on November 9, 2010 9:00 AM EST

Crysis: Warhead

Kicking things off as always is Crysis: Warhead, still one of the toughest games in our benchmark suite. Even 2 years since the release of the original Crysis, “but can it run Crysis?” is still an important question, and the answer continues to be “no.” One of these years we’ll actually be able to run it with full Enthusiast settings…

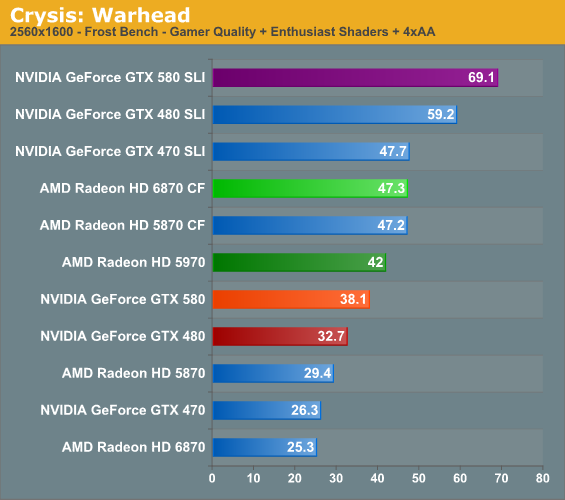

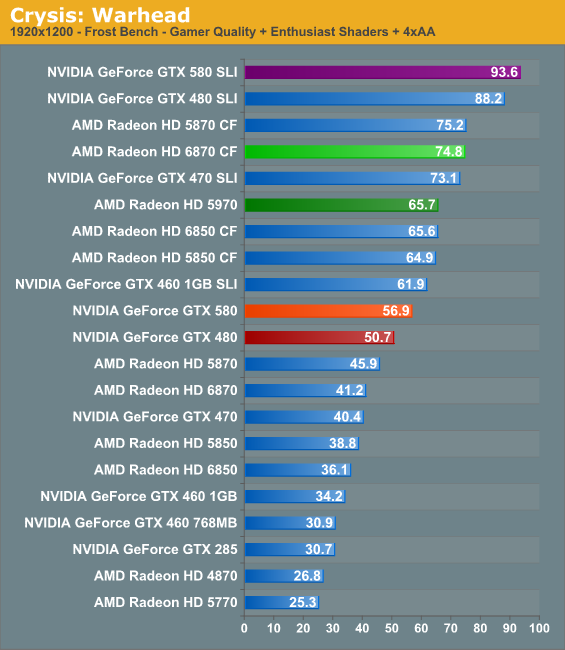

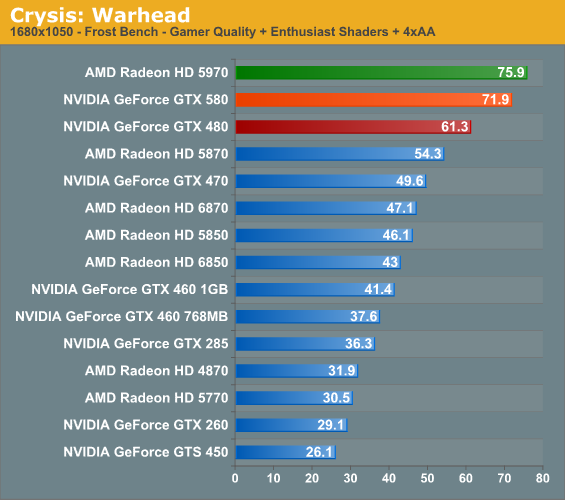

Right off the bat we see the GTX 580 do well, which as the successor to what was already the fastest single-GPU card on the market is nothing less than we expect. At 2560 it’s around 16% faster than the GTX 480, and at 1920 that drops to 12%. Bear in mind that the theoretical performance improvement for clock + shader is 17%, so in reality it would be nearly impossible to get that close without the architectural improvements also playing a role.
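
For readers who want to check the theoretical figure, here is a rough sketch of the arithmetic. It assumes the reference specifications of 700MHz and 480 CUDA cores for the GTX 480 and 772MHz and 512 CUDA cores for the GTX 580 (those numbers are not quoted on this page), and it treats shader throughput as simply clock speed times core count, which ignores memory bandwidth and everything else.

```python
# Reference specs assumed for this sketch (not stated on this page):
#   GTX 480: 700 MHz core clock, 480 CUDA cores
#   GTX 580: 772 MHz core clock, 512 CUDA cores
GTX480 = {"core_mhz": 700, "cuda_cores": 480}
GTX580 = {"core_mhz": 772, "cuda_cores": 512}


def theoretical_speedup(old, new):
    """Ratio of shader throughput, modeled as core clock times core count."""
    clock_gain = new["core_mhz"] / old["core_mhz"]
    core_gain = new["cuda_cores"] / old["cuda_cores"]
    return clock_gain * core_gain


speedup = theoretical_speedup(GTX480, GTX580)
print(f"Theoretical gain: {(speedup - 1) * 100:.1f}%")
```

Under those assumptions the combined gain works out to a little over 17%, which is why a measured 16% at 2560 leaves very little room for anything to be lost along the way.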

Meanwhile AMD’s double-GPU double-trouble lineup, the 5970 and 6870CF, outscores the GTX 580 by around 12% and 27% respectively. It shouldn’t come as a shock that they’re going to win most tests – ultimately they’re priced much more competitively than the GTX 580, making them price-practical alternatives to it.

And speaking of competition, the GTX 470 SLI is in much the same boat, handily surpassing the GTX 580. This will come full circle, however, when we look at power consumption.

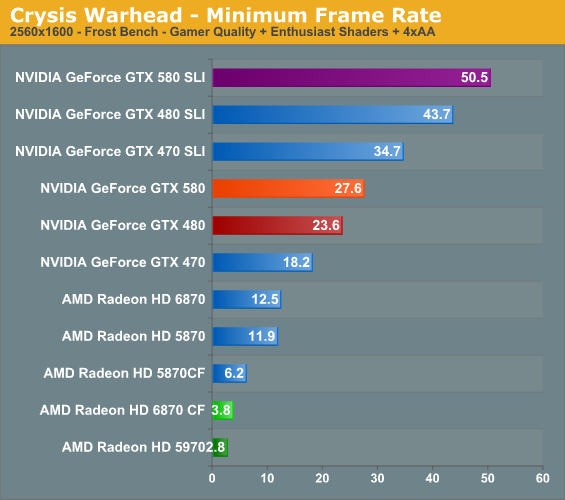

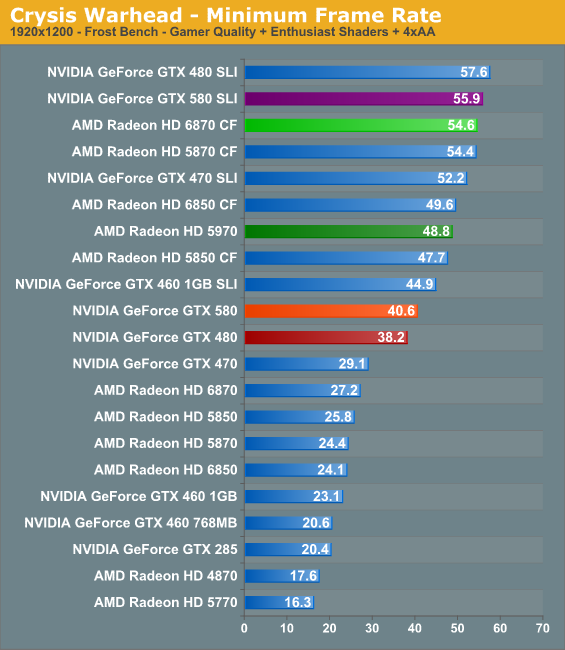

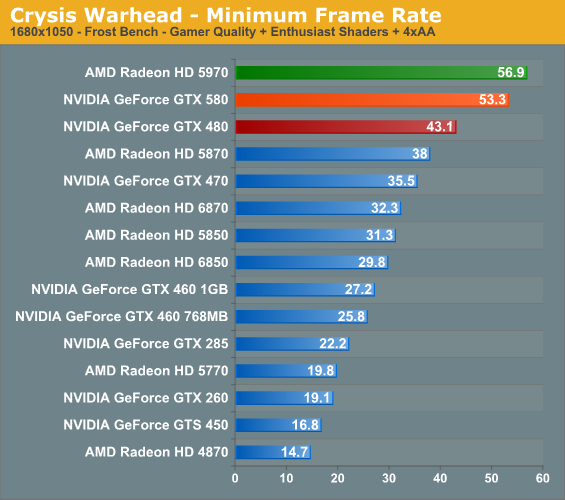

Meanwhile looking at minimum framerates we have a different story. AMD’s memory management in CrossFire mode has long been an issue with Crysis at 2560, and it continues to show here with a minimum framerate that simply craters. At 2560 there’s a world of difference between NVIDIA and AMD here, and it’s all in NVIDIA’s favor. 1920 however roughly mirrors our earlier averages, with the 580 taking a decent lead over the GTX 480, but falling to multi-GPU cards.

160 Comments

Taft12 - Tuesday, November 9, 2010

In this article, Ryan does exactly what you are accusing him of not doing! It is you who need to be asked WTF is wrong

Iketh - Thursday, November 11, 2010

ok EVERYONE belonging to this thread is on CRACK... what other option did AMD have to name the 68xx? If they named them 67xx, the differences between them and 57xx are too great. They use nearly as little power as 57xx yet the performance is 1.5x or higher!!!

im a sucker for EFFICIENCY... show me significant gains in efficiency and i'll bite, and this is what 68xx handily brings over 58xx

the same argument goes for 480-580... AT, show us power/performance ratios between generations on each side, then everyone may begin to understand the naming

i'm sorry to break it to everyone, but this is where the GPU race is now, in efficiency, where it's been for cpus for years

MrCommunistGen - Tuesday, November 9, 2010

Just started reading the article and I noticed a couple of typos on p1.

"But before we get to deep in to GF110" --> "but before we get TOO deep..."

Also, the quote at the top of the page was placed inside of a paragraph which was confusing.

I read: "Furthermore GTX 480 and GF100 were clearly not the" and I thought: "the what?". So I continued and read the quote, then realized that the paragraph continued below.

MrCommunistGen - Tuesday, November 9, 2010

well I see that the paragraph break has already been fixed...

ahar - Tuesday, November 9, 2010

Also, on page 2 if Ryan is talking about the lifecycle of one process then "...the processes’ lifecycle." is wrong.

Aikouka - Tuesday, November 9, 2010

I noticed the remark on Bitstreaming and it seems like a logical choice *not* to include it with the 580. The biggest factor is that I don't think the large majority of people actually need/want it. While the 580 is certainly quieter than the 480, it's still relatively loud and extraneous noise is not something you want in a HTPC. It's also overkill for a HTPC, which would delegate the feature to people wanting to watch high-definition content on their PC through a receiver, which probably doesn't happen much.

I'd assume the feature could've been "on the board" to add, but would've probably been at the bottom of the list and easily one of the first features to drop to either meet die size (and subsequently, TDP/Heat) targets or simply to hit their deadline. I certainly don't work for nVidia so it's really just pure speculation.

therealnickdanger - Tuesday, November 9, 2010

I see your points as valid, but let me counterpoint with 3-D. I think NVIDIA dropped the ball here in the sense that there are two big reasons to have a computer connected to your home theater: games and Blu-ray. I know a few people that have 3-D HDTVs in their homes, but I don't know anyone with a 3-D HDTV and a 3-D monitor.

I realize how niche this might be, but if the 580 supported bitstreaming, then it would be perfect card for anyone that wants to do it ALL. Blu-ray, 3-D Blu-Ray, any game at 1080p with all eye-candy, any 3-D game at 1080p with all eye-candy. But without bitstreaming, Blu-ray is moot (and mute, IMO).

For a $500+ card, it's just a shame, that's all. All of AMD's high-end cards can do it.

QuagmireLXIX - Sunday, November 14, 2010

Well said. There are quite a few fixes that make the 580 what I wanted in March, but the lack of bitstream is still a hard hit for what I want my PC to do.

Call me niche.

QuagmireLXIX - Sunday, November 14, 2010

Actually, this is killing me. I waited for the 480 in March b4 pulling the trigger on a 5870 because I wanted HDMI to a Denon 3808 and the 480 totally dropped the ball on the sound aspect (S/PDIF connector and limited channels and all). I figured no big deal, it is a gamer card after all, so 5870 HDMI I went.

The thing is, my PC is all-in-one (HTPC, Game & typical use). The noise and temps are not a factor as I watercool. When I read that HDMI audio got internal on the 580, I thought, finally. Then I read Guru's article and seen bitstream was hardware supported and just a driver update away, I figured I was now back with the green team since 8800GT.

Now Ryan (thanks for the truth, I guess :) counters Gurus bitstream comment and backs it up with direct communication with NV. This blows, I had a lofty multimonitor config in mind and no bitstream support is a huge hit. I'm not even sure if I should spend the time to find out if I can arrange the monitor setup I was thinking.

Now I might just do a HTPC rig and Game rig or see what 6970 has coming. Eyefinity has an advantage for multiple monitors, but the display-port puts a kink in my designs also.

Mr Perfect - Tuesday, November 9, 2010

So where do they go from here? Disable one SM again and call it a GTX570? GF104 is too new to replace, so I suppose they'll enable the last SM on it for a GTX560.