Quad Xeon 7500, the Best Virtualized Datacenter Building Block?

by Johan De Gelas on August 10, 2010 5:10 PM EST. Posted in IT Computing

Stress Testing the High End

Our previous vApus Mark I gave an idea of how well systems perform when running several virtualized “heavy duty applications”: complex, network-bandwidth-gobbling web servers, large OLAP databases, and write-intensive OLTP databases. The benchmark was mostly based on vApus, a software client that fires off requests as if real users were stressing the server. Several client machines each run a vApus “slave” instance, and a “master” vApus instance manages them (for example, starting tests in sync) and collects the end results.

The first version of vApus had several limitations: it could simulate a maximum of about 1500 users per client (a limit of 32-bit Windows based software), and the number of clients that could be kept in sync was also limited. In the meantime, the core count of the servers that we test has been increasing at an almost ridiculous pace. When the first lines of vApus were written (at the end of 2006), octal core servers were considered the high end. Only four years later we are now looking at 64-thread and 48-core monsters. Our ambitious way of benchmarking (simulating real-world users, not scripting benchmarks) resulted in scalability problems.

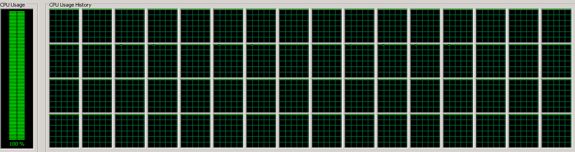

The lead developer of vApus, Dieter Vandroemme, decided to take all the lessons learned from 2.5 years of vApus development and apply them to a new vApus, built from scratch. Building on a new .Net 4.0 and 64-bit Windows foundation and spending a lot of time on software tuning, Dieter came up with a new vApus client that can spawn 10,000 threads in about 3.5 seconds; up to 15,000 threads can be active on one client. Since every simulated user needs one thread, this is very cool: we can now test extremely strong servers with only one humble client. A Core i7-750 (2.66GHz) needs only 20% CPU load to sustain 15,000 “users” sending off SQL statements to the server. Our mighty 64-thread, 32-core quad Xeon X7560 at 2.26GHz was brought to its knees, as you can see below.

We were excited to see this happen: finally we tamed the beast with 64 threads. Yes, you can easily stress out a server with HPC benchmarks such as Linpack or SpecFP, but measuring the potential of a server using popular business software is no easy feat. For example, we had to deal with severe thread contention on the client side. With several vApus instances, we are now ready to test the strongest servers, including those coming out in the next few years. We are even able to stress test complete clusters of modern servers with just a few clients.
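To make the one-thread-per-simulated-user model concrete, here is a minimal Python sketch. It is not vApus code (vApus is a .Net application, and none of its source appears in this article); `run_simulated_users` and `fake_request` are hypothetical names, and the short sleep merely stands in for sending an SQL statement to the server. A shared event releases all users at once, loosely mirroring how a master instance starts tests in sync.

```python
import threading
import time


def run_simulated_users(user_count, action):
    """Start one thread per simulated user; return each user's elapsed time."""
    start_gate = threading.Event()      # released once so all users begin together
    latencies = [0.0] * user_count      # each thread writes only its own slot

    def user(index):
        start_gate.wait()               # block until every thread has been started
        began = time.perf_counter()
        action(index)                   # the simulated request
        latencies[index] = time.perf_counter() - began

    threads = [threading.Thread(target=user, args=(i,)) for i in range(user_count)]
    for thread in threads:
        thread.start()
    start_gate.set()                    # fire all simulated users in sync
    for thread in threads:
        thread.join()
    return latencies


def fake_request(index):
    """Hypothetical placeholder for one SQL statement sent to the server."""
    time.sleep(0.01)


if __name__ == "__main__":
    results = run_simulated_users(50, fake_request)
    print(len(results), "simulated users finished")
```

Each thread writes to its own slot in `latencies`, so the sketch needs no lock around the results. With thousands of threads on one client, shared state inside the load generator can itself become a bottleneck, which is the kind of client-side contention mentioned above.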

vApus' ultimate goal is not to stress servers to their maximum; we use it mostly for measuring response time at a given workload and to test stability of applications. But of course, we could not resist the chance to use it as a benchmark too. It was time to build a new benchmark, and vApus Mark II was born.

51 Comments

fynamo - Wednesday, August 11, 2010 - link

WHERE ARE THE POWER CONSUMPTION CHARTS??????

Awesome article, but complete FAIL because of lack of power consumption charts. This is only half the picture -- and I dare to say it's the less important half.

davegraham - Wednesday, August 11, 2010 - link

+1 on this.

JohanAnandtech - Thursday, August 12, 2010 - link

Agreed. But it wasn't until a few days before I was going to post this article that we got a system that is comparable. So I kept the power consumption numbers for the next article.

watersb - Wednesday, August 11, 2010 - link

Wow, you IT Guys are a cranky bunch! :-)

I am impressed with the vApus client-simulation testing, and I'm humbled by the complexity of enterprise-server testing.

A former sysadmin, I've been an ignorant programmer for lo these past 10 years. Reading all these comments makes me feel like I'm hanging out on the bench in front of the general store.

Yeah, I'm getting off your lawn now...

Scy7ale - Wednesday, August 11, 2010 - link

Does this also apply to consumer HDDs? If so is it a bad idea to have an intake fan in front of the drives to cool them as many consumer/gaming cases have now?

JohanAnandtech - Thursday, August 12, 2010 - link

Cold air comes from the bottom of the server aisle, sometimes as low as 20°C (68°F), and gets blown at high speed over the disks. Several studies now show that this is not optimal for an HDD. In your desktop, the temperature of the air that is blown over the HDD should be higher, as the fans are normally slower. But yes, it is not good to keep your hard disk at temperatures lower than 30°C. Use hddsentinel or speedfan to check on this. 30-45°C is acceptable.

Scy7ale - Monday, August 16, 2010 - link

Good to know, thanks! I don't think this is widely understood.

brenozan - Thursday, August 12, 2010 - link

http://en.wikipedia.org/wiki/UltraSPARC_T2

2 sockets =~ 153GHz

4 sockets =~ 306GHz

Like the T1, the T2 supports the Hyper-Privileged execution mode. The SPARC Hypervisor runs in this mode and can partition a T2 system into 64 Logical Domains, and a two-way SMP T2 Plus system into 128 Logical Domains, each of which can run an independent operating system instance.

why SUN did not dominate the world in 2007 when it launched the T2? Besides the two 10G Ethernet ports built into the processor, they had the most advanced architecture that I know of; see

http://www.opensparc.net/opensparc-t2/download.htm...

don_k - Thursday, August 12, 2010 - link

"why SUN did not dominate the world in 2007 when it launched the T2?"

Because it's not actually that good :) My company bought a few T2s and after about a week of benchmarking and testing it was obvious that they are very very slow. Sure you get lots and lots of threads but each of those threads is oh so very slow. You would not _want_ to run 128 instances of solaris, one on each thread, because each of those instances would be virtually unusable.

We used them as webservers.. good for that. Or file servers that you don't need to do any cpu intensive work.

The theory is fine and all but you obviously have never used a T2 or you would not be wondering why it failed.

JohanAnandtech - Thursday, August 12, 2010 - link

"http://en.wikipedia.org/wiki/UltraSPARC_T2

2 sockets =~ 153GHz

4 sockets =~ 306GHz"

You are multiplying threads times clockspeed. IIRC, the T2 is a fine-grained multithreaded CPU where 8 (!!) threads share two pipelines of *one* core.

Compare that with the Nehalem core where 2 threads share 4 "pipelines" (sustained decode/issue/execution/retire) per cycle. So basically, a dual socket T2 is nothing more than 16 relatively weak cores, each of which can execute at most 2 instructions per clock cycle, for a total of 32 instructions per cycle. The only advantage of having 8 threads per core is that (with enough independent software threads) the T2 is able to come relatively close to that kind of throughput.

A dual six-core Xeon has a maximum throughput of 12 cores x 4 instructions or 48 instructions per cycle. As the Xeon has only 2 threads per core, it is less likely that the CPU will ever come close to that kind of output (in business apps). On the other hand, it performs excellently when you have some amount of dependent threads, or simply not enough threads in parallel. The T2 will only perform well if you have enough independent threads.
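The arithmetic in this reply can be reproduced in a few lines of Python. This is only a sketch: the `Cpu` class and its field names are made up for illustration, and the core counts and per-core issue widths are simply the figures quoted above (a dual socket T2 as 16 cores at 2 instructions per cycle each, a dual six-core Xeon as 12 cores at 4 per cycle each). It compares peak issue capacity, not measured performance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cpu:
    name: str
    sockets: int
    cores_per_socket: int
    threads_per_core: int
    issue_width: int  # peak instructions per core per cycle, as quoted above

    @property
    def hardware_threads(self) -> int:
        return self.sockets * self.cores_per_socket * self.threads_per_core

    @property
    def peak_instructions_per_cycle(self) -> int:
        # Threads share a core's pipelines, so they do not multiply the peak.
        return self.sockets * self.cores_per_socket * self.issue_width


t2_dual = Cpu("dual socket T2", sockets=2, cores_per_socket=8,
              threads_per_core=8, issue_width=2)
xeon_dual = Cpu("dual six-core Xeon", sockets=2, cores_per_socket=6,
                threads_per_core=2, issue_width=4)

for cpu in (t2_dual, xeon_dual):
    print(cpu.name, cpu.hardware_threads, "threads,",
          cpu.peak_instructions_per_cycle, "instructions/cycle peak")
# dual socket T2 128 threads, 32 instructions/cycle peak
# dual six-core Xeon 24 threads, 48 instructions/cycle peak
```

The thread count appears only in `hardware_threads`; it never enters the peak figure, which is why multiplying threads by clock speed overstates what the T2 can do.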