The RV870 Story: AMD Showing up to the Fight

by Anand Lal Shimpi on February 14, 2010 12:00 AM EST. Posted in GPUs

The Cost of Jumping to 40nm

This part of the story could almost stand on its own, but it so directly impacts much of what happened with Cypress and the entire Evergreen stack that it’s worth talking about here.

By now you’ve most likely heard about issues with TSMC’s 40nm process. While the word is that the issues are finally over, poor yields and a slower-than-expected ramp led to Cypress shortages last year and contributed to NVIDIA’s Fermi/GF100 delay. For the next couple of pages I want to talk about the move to 40nm and why it’s been so difficult.

The biggest issue with being a fabless semiconductor company is that you have one more vendor to deal with when you’re trying to get out a new product. On top of dealing with memory companies, component manufacturers and folks who have IP you need, you also have to deal with a third party that’s going to actually make your chip. To make matters worse, every year or so, your foundry partner comes to you with a brand new process to use.

The pitch always goes the same way. This new process is usually a lot smaller, can run faster and uses less power. As with any company whose job it is to sell something, your foundry partner wants you to buy its latest and greatest as soon as possible. And as is usually the case in the PC industry, they want you to buy it before it's actually ready.

But have no fear. What normally happens is your foundry company will come to you with a list of design rules and hints. If you follow all of the guidelines, the foundry will guarantee that they can produce your chip and that it will work. In other words, do what we tell you to do, and your chip will yield.

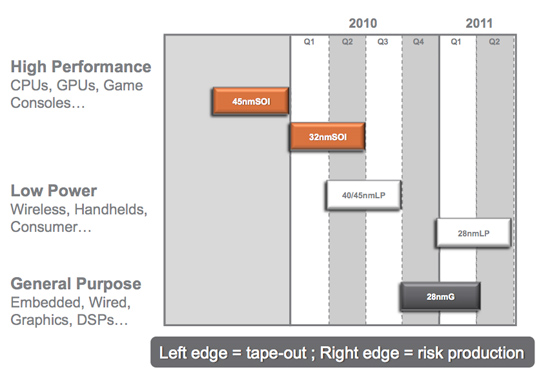

Global Foundries' 2010 - 2011 Manufacturing Roadmap

The problem is that if you follow every last one of these design rules and hints, your chip won’t be any faster than it was on the older manufacturing process. Your yield will be about the same, but your cost will be higher since you’ll bloat your design taking these “hints” into account.

Generally between process nodes the size of the wafer doesn’t change. We were at 200mm wafers for a while and now modern fabs use 300mm wafers. The transistor size does shrink, however, so in theory you can fit more die on a wafer with each process shrink.

The problem is that with any new process, the cost per wafer goes up. It’s a new process, most likely more complex, and thus the wafer cost is higher. If the wafer costs are 50% higher, then you need to fit at least 50% more die on each wafer in order to break even with your costs on the old process. In reality you actually need to fit more than 50% more die per wafer on the new process because yields usually suck at the start. But if you follow the foundry’s guidelines to guarantee yield, you won’t even be close to breaking even.
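To put rough numbers on the break-even argument, here is a minimal sketch in Python. The function names and every figure in it (wafer prices, die counts, yield fractions) are made up for illustration and are not TSMC's or AMD's actual data. It assumes the cost of a working die is simply the wafer cost spread over the die that yield.

```python
def cost_per_good_die(wafer_cost, dies_per_wafer, yield_fraction):
    """Wafer cost divided by the number of working die on that wafer."""
    return wafer_cost / (dies_per_wafer * yield_fraction)


def break_even_die_ratio(wafer_cost_ratio, old_yield, new_yield):
    """Factor by which die per wafer must grow on the new process so that
    the cost per good die matches the old process."""
    return wafer_cost_ratio * old_yield / new_yield


# Hypothetical inputs: wafers cost 50% more on the new process.
WAFER_COST_RATIO = 1.5

# Same yield on both processes: you need 1.5x the die per wafer.
mature = break_even_die_ratio(WAFER_COST_RATIO, old_yield=0.8, new_yield=0.8)

# Early, poor yield on the new process: the bar moves well past 1.5x.
early = break_even_die_ratio(WAFER_COST_RATIO, old_yield=0.8, new_yield=0.5)

print(f"break-even die ratio at equal yield: {mature:.2f}x")
print(f"break-even die ratio at early yield: {early:.2f}x")
```

With these assumed yields, the early-ramp case needs roughly 2.4x the die per wafer just to match the old cost per good die, which is why a design bloated by following every guideline can't come close to breaking even.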

The end result is you get zero benefit from moving to the new process. That’s not an option for anyone looking to actually use Moore’s Law to their advantage. Definitely not for a GPU company.

The solution is to have some very smart people in your company who can take the design rules and hints the foundry provides, figure out which ones can be ignored, and find ways to work around the others. This is an area where ATI and NVIDIA differ greatly.

132 Comments


Spoelie - Thursday, February 18, 2010 - link

phoronix.com for all things ATi + Linux

SeanHollister - Monday, February 15, 2010 - link

Fantastic work, Anand. It's so difficult to make pieces like this work without coming across as puffery, but everything here feels genuine and evenhanded. Here's hoping for similar articles featuring individuals at NVIDIA, Intel and beyond in the not-too-distant future.

boslink - Monday, February 15, 2010 - link

Just like many others, I've also been reading/visiting AnandTech for years, but this article made me register just to say damn good job.

Also, for a long time I haven't read an article from cover to cover. Usually I read the first page and maybe the second (enough to guess what's on the other pages) and then skip to the conclusions.

But this article reminds us that a graphics card/chip is not only silicon. The real people's story is what makes this article great.

Thanks Anand

AmdInside - Monday, February 15, 2010 - link

Great article as usual. Sunspot seems like the biggest non-factor in the 5x00 series. Except for hardware review sites which have lots of monitors lying around, I just don't see a need for it. It is like NVIDIA's 3D Vision. The concept sounds good but in general practice, it is not very realistic that a user will use it. Just another check box that a company can point out to an OEM and say we have it and they don't. NVIDIA has had Eyefinity for a while (SLI Mosaic). It just is very expensive since it is targeted towards businesses and not consumers and offers some features Eyefinity doesn't offer.

I think NVIDIA just didn't believe consumers really wanted it but added it afterwards just so that ATI doesn't have a checkbox they can brag about. But NVIDIA probably still believes this is mainly a business feature.

It is always interesting to learn how businesses make product decisions internally. I always hate reading interviews of PR people. I learn zero. Talk to engineers if you really want to learn something.

BelardA - Tuesday, February 16, 2010 - link

I think the point of Eyefinity is that it's more hardware based and natural... not requiring so much work from the game publisher. A way of having higher screen details over a span of monitors.

A few games will actually span 2 or 3 monitors. Or some will use the 2nd display as a control panel. With Eyefinity, it tells the game "I have #### x #### pixels" and auto divides the signal onto 3 or 6 screens and is still playable. That is quite cool.

But as you say, it's a bit of a non-factor. Most users will still only have one display to work with. Hmmm, there was a monitor that was almost seamless, 3 monitors built together. Where is that?

Also, I think the TOP-SECRET aspect of Sun-Spots was a way of testing security. Eyefinity isn't a major thing... but the hiding of it was.

While employees do move about in the business, the sharing of trade-secrets could still get them in trouble - if caught. It does happen, but how much?

gomakeit - Monday, February 15, 2010 - link

I love these insightful articles! This is why Anandtech is one of my favorite tech sites ever!

Smell This - Monday, February 15, 2010 - link

Probably could have done without the snide reference to the CPU division at the end of the article - it added nothing and was a detraction from the overall piece.

It also implies a symbiotic relationship between AMD's 40+ year battle with Chipzilla and the GPU Wars with nV. Not really an accurate correlation. The CPU division has their own headaches.

It is appropriate to note, however, that both divisions must bring their 'A' Game to the table with the upcoming convergence on-die of the CPU-GPU.

mrwilton - Monday, February 15, 2010 - link

Thank you, Anand, for this great and fun-to-read article. It really has been some time since I have read an article cover to cover.

Keep up the excellent work.

Best wishes, wt

Ananke - Monday, February 15, 2010 - link

I have a 5850, it is a great card. However, what people are saying about PC gaming is true - gaming on PC slowly fades towards consoles. You cannot justify a several-thousand-dollar PC versus a 2-300 multimedia console.

Such a powerful GPU is a supercomputer by itself. Please ATI, make a better Avivo transcoder, push open software development using Steam further. We need many applications, not just Photoshop and Cyberlink. We need hundreds, and many free, to utilize this calculation power. Then it will make sense to use these cards.

erple2 - Tuesday, February 16, 2010 - link

Perhaps. However, this "PC Gaming is being killed off by the 2-300 multimedia console" war has been going on since the Playstation 1 came out. PC gaming is still doing very well.

I think that there will always be some sort of market (even if only 10% - that's significant enough to make companies take notice) for PC Gaming. While I still have to use the PC for something, I'll continue to use it for gaming, as well.

Reading the article, I find it poignant that the focus is on //execution// rather than //ideas//. It reminds me of a blog written by Jeff Atwood (http://www.codinghorror.com/blog/2010/01/cultivate... if you're interested) about the exact same thing. Focus on what you //do//. Execution (i.e. "what do we have an 80%+ chance of getting done on time") is more important than the idea (i.e. features you can claim on a spec sheet).

As a hardware developer (goes the same for any software developer), your job is to release the product. That means following a schedule. That means focusing on what you can do, not on what you want to do. It sounds to me like ATI has been following that paradigm, which is why they seem to be doing so well these days.

What's particularly encouraging about the story written was that Management had the foresight to actually listen to the technical side when coming up with the schedules and requirements. That, in and of itself, is something that a significant number of companies just don't do well.

It's nice to hear from the internal wing of the company from time to time, and not just the glossy presentation of hardware releases.

I for one thoroughly enjoyed the read. I liked the perspective that the RV5-- err Evergreen gave on the process of developing hardware. What works, and what doesn't.

Great article. Goes down in my book with the SSD and RV770 articles as some of the best IT reads I've done.