3D Vision Surround: NVIDIA’s Eyefinity

During our meeting with NVIDIA, they were also showing off 3D Vision Surround, which was announced at the start of CES at their press conference. 3D Vision Surround is not inherently a GF100 technology, but since it’s being timed for release alongside GF100 cards, we’re going to take a moment to discuss it.

If you’ve seen Matrox’s TripleHead2Go or AMD’s Eyefinity in action, then you know what 3D Vision Surround is. It’s NVIDIA’s implementation of the single large surface concept so that games (and anything else for that matter) can span multiple monitors. With it, gamers can get a more immersive view by being able to surround themselves with monitors so that the game world is projected from more than just a single point in front of them.
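To make the "single large surface" idea concrete: the game renders to one wide resolution, and each column of that surface belongs to one physical monitor. The sketch below is purely illustrative and assumes identical monitors placed side by side with no bezel compensation; `map_span_pixel` and `DisplayPixel` are hypothetical names, not part of any NVIDIA or AMD driver API, and real drivers handle the split in their own way.

```python
from typing import NamedTuple


class DisplayPixel(NamedTuple):
    monitor: int  # 0-based monitor index, counted left to right
    x: int        # column within that monitor
    y: int        # row (unchanged for a purely horizontal span)


def map_span_pixel(x: int, y: int, monitor_width: int, num_monitors: int) -> DisplayPixel:
    """Map a pixel on one wide spanned surface to the monitor that shows it.

    Assumption: identical monitors in a single horizontal row, no bezel correction.
    """
    span_width = monitor_width * num_monitors
    if not 0 <= x < span_width:
        raise ValueError(f"x={x} is outside the {span_width}-pixel-wide surface")
    if y < 0:
        raise ValueError(f"y={y} must be non-negative")
    # Each monitor owns a contiguous block of monitor_width columns.
    monitor, local_x = divmod(x, monitor_width)
    return DisplayPixel(monitor, local_x, y)


# Three 1920x1080 monitors are presented to the game as one 5760x1080 surface.
print(map_span_pixel(0, 0, 1920, 3))        # DisplayPixel(monitor=0, x=0, y=0)
print(map_span_pixel(2000, 540, 1920, 3))   # DisplayPixel(monitor=1, x=80, y=540)
print(map_span_pixel(5759, 1079, 1920, 3))  # DisplayPixel(monitor=2, x=1919, y=1079)
```

Because the game only ever sees the 5760-pixel-wide surface, it needs no knowledge of how many monitors sit behind it, which is why the concept works with "anything else for that matter" and not just games.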

NVIDIA tells us that they’ve been sitting on this technology for quite some time but never saw a market for it. With the release of TripleHead2Go and Eyefinity it became apparent to them that this was no longer the case, and they unboxed the technology. Whether this is true or a sudden reaction to Eyefinity is immaterial at the moment, as it’s coming regardless.

This triple-display technology will have two names. When it’s used on its own, NVIDIA is calling it NVIDIA Surround. When it’s used in conjunction with 3D Vision, it’s called 3D Vision Surround. Obviously NVIDIA would like you to use it with 3D Vision to get the full effect (and to require a more powerful GPU), but 3D Vision is by no means required to use it. It is, however, the key differentiator from AMD, at least until AMD’s own 3D efforts get off the ground.

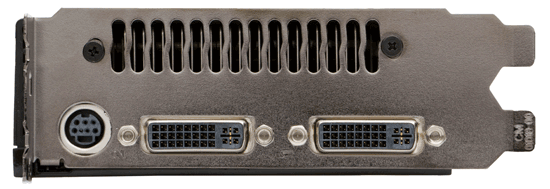

Regardless of the degree to which this is a sudden reaction from NVIDIA to Eyefinity, ultimately this is something that was added late into the design process. Unlike AMD, who designed the Evergreen family around it from the start, NVIDIA did not, and as a result they did not give GF100 the ability to drive more than 2 displays at once. The shipping GF100 cards will have the traditional 2-monitor limit, meaning that gamers will need 2 GF100 cards in SLI to drive 3+ monitors, with the second card needed to provide the 3rd and 4th display outputs. We expect that the next NVIDIA design will include the ability to drive 3+ monitors from a single GPU, as for the moment this limitation precludes any ability to do Surround on the cheap.
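The card count follows from simple ceiling division over display outputs. This is a back-of-the-envelope sketch: the 2-outputs-per-card figure comes from the paragraph above, while `cards_needed` is a hypothetical helper that counts outputs only and does not model SLI requirements, connector types, or which outputs can be active at once.

```python
# Per the article, a shipping GF100 card drives at most 2 displays, so a
# second card in SLI supplies the 3rd and 4th outputs.
OUTPUTS_PER_CARD = 2


def cards_needed(displays: int, outputs_per_card: int = OUTPUTS_PER_CARD) -> int:
    """Smallest number of cards whose combined outputs cover `displays` monitors."""
    if displays < 1 or outputs_per_card < 1:
        raise ValueError("displays and outputs_per_card must be positive")
    # Integer ceiling division: -(-a // b) equals ceil(a / b) for positive ints.
    return -(-displays // outputs_per_card)


for n in range(1, 5):
    print(f"{n} display(s): {cards_needed(n)} card(s)")

# A hypothetical future GPU with 3 outputs would cover a 3-monitor setup alone.
print(cards_needed(3, outputs_per_card=3))
```

With 2 outputs per card, going from 2 to 3 monitors is exactly the step that forces a second card, which is what makes a budget Surround setup impossible on GF100.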

GTX 280 with 2 display outputs: GF100 won't be any different

As for the good news, as we stated earlier, this is not a technology inherent to GF100. NVIDIA can do it entirely in software and as a result will be backporting this technology to GT200 (the GTX 200 series). The drivers that get released for GF100 will allow GTX 200 cards to do Surround in the same manner: with 2 cards, you can run a single large surface across 3+ displays. We’ve seen this in action and it works, as NVIDIA was demoing a pair of GTX 285s running in NVIDIA Surround mode at their CES booth.

The big question of course is going to be what this does for performance on both the GF100 and GT200, along with compatibility. That’s something that we’re going to have to wait on the actual hardware for.

115 Comments


Zool - Tuesday, January 19, 2010

There are still plenty of questions, like how tessellation affects MSAA with increased geometry per pixel. Also, the flat stairs in Unigine (very plastic and realistic after tessellation and displacement mapping): would collision detection work against them as they are after tessellation, or before, when they are completely flat and somewhere else in 3D space? The same goes for some PhysX effects. Unigine Heaven is more of a showcase of tessellation and what can be done with it than a real game engine.

marraco - Monday, January 18, 2010

Far Cry 2's Ranch Small, like the rest of the integrated benchmark, constantly reads from the hard disk, so it depends on hard disk speed. That's not unfair, since FC2 streams textures from the hard disk all the time, making the game freeze constantly, even on the best computers.

I'd like to see that benchmark run with and without an SSD.

Zool - Monday, January 18, 2010

I also want to note that with the stream of FPS/third-person shooter/RTS/racing games that all look the same, upgrading the graphics card sometimes doesn't make much sense these days. Can anyone make a game that uses PC hardware and doesn't end up as running and shooting at each other from a first- or third-person view? Dragon Age was a fairly weak, overhyped RPG.

Suntan - Monday, January 18, 2010

Agreed. That is one of the main reasons I've lost interest in PC gaming. Ironically though, my favorite console games on the PS3 have been the two Uncharted games...

-Suntan

mark0409mr01 - Monday, January 18, 2010

Does anybody know whether Fermi, GF100, or whatever it's going to be called will support bitstreaming of HD audio codecs? Also, do we know anything else about the video capabilities of the new card? There doesn't really seem to have been much mentioned about this.

Thanks

Slaimus - Monday, January 18, 2010

Seeing how the GF100 chip has no display components at all on-chip (RAMDAC, TMDS, DisplayPort, PureVideo), they will probably be using an NVIO chip like GT200. Would it not be possible to just add multiple NVIO chips to scale with the number of display outputs?

Ryan Smith - Wednesday, January 20, 2010

If it's possible, NVIDIA is not doing it. I asked them about the limit on display outputs, and their response (which is what prompted the comments in the article) was that GF100 cards were already too far along in the design process when they greenlit Surround to add more display outputs. I don't have more details than that, but the implication is that they need to bake support for more displays into the GPU itself.

Headfoot - Monday, January 18, 2010

Best comment on the entire page; I am wondering the same thing.

Suntan - Monday, January 18, 2010

Looking at the image of the chip on the first page, it looks like a miniature of a vast city complex. Man, when are they going to remake “TRON”? …although, at the speeds that chips are running nowadays, the whole movie would be over in ¼ of a second…

-Suntan

arnavvdesai - Monday, January 18, 2010

In your conclusion you mentioned that the only thing that would matter is price/performance. However, from the article I wasn't really able to make out a couple of things. When NVIDIA says they can make something look better than the competition, how would you quantify that?

I am a gamer and I love beautiful graphics. It's one of the reasons I still sometimes buy games for PCs instead of consoles. I have a 5870 and a 1080p 24" monitor. I would, however, consider buying this card if it made my games look better. Past a certain frame rate (60 fps), I really only care about beautiful graphics. I don't want grass to look like paper or jaggies to show on distant objects. Also, will game makers take advantage of this? Unlike previous generations, game developers are very deeply tied to the current console market. They have to make sure the game performs admirably on current consoles, which are at least 3-5 years behind their PC counterparts, so what incentive do they have to advance graphics on the PC when there aren't enough people buying them? Looking at current games and actually playing them, other than an obvious improvement in frame rate, I cannot notice any visual improvements.

Coming back to my question on architecture: will this tech being built by NVIDIA help improve the visual quality of games with little or no additional work from the game studios?