NVIDIA's Bumpy Ride: A Q4 2009 Update

by Anand Lal Shimpi on October 14, 2009 12:00 AM EST - Posted in GPUs

Final Words

Is NVIDIA in trouble? In the short term there are clearly causes for worry. AMD’s Eric Demers often tells me that the best way to lose a fight is by not showing up. NVIDIA effectively didn’t show up to the first DX11 battles, and that’s going to hurt. But as I said in the “things get better next year” section, they do get better next year.

Fermi devotes a significant portion of its die to features that are designed for a market that currently isn’t generating much revenue. That needs to change in order for this strategy to make sense.

NVIDIA told me that we should see exponential growth in Tesla revenues after Fermi, but what does that mean? I don’t suspect that the sort of customers buying Tesla boards and servers will be lining up on day 1. I’d say best case scenario, Tesla revenues should see a bump one to two quarters after Fermi’s launch.

Nexus, ECC, and better double precision performance will all make Fermi more attractive in the HPC space than Cypress. The question is how much revenue that will generate in the short term.

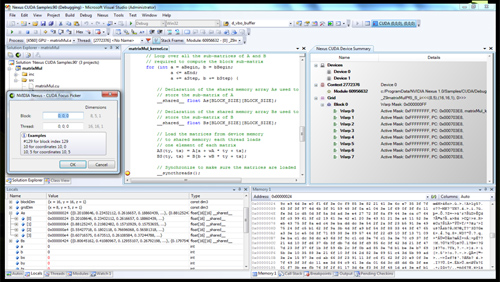

Nexus enables full NVIDIA GPU debugging from within Visual Studio. Not so useful for PC gaming, but very helpful for Tesla.

Then there’s the mobile space. NVIDIA could do very well with Tegra. NVIDIA is an ARM licensee, and that takes care of the missing CPU piece of the puzzle. Unlike in the PC space, x86 isn’t the dominant player in the mobile market. NVIDIA has a head start in the ultra mobile space much like it does in the GPU computing space. Intel is a bit behind with its Atom strategy. NVIDIA could use this to its advantage.

The transition needs to be a smooth one. The bulk of NVIDIA’s revenues today come from PC graphics cards. There’s room for NVIDIA in the HPC and ultra mobile spaces, but it’s not revenue that’s going to accumulate overnight. The changes in focus we’re seeing from NVIDIA today are in line with what it’d have to do in order to establish successful businesses outside of the PC industry.

And don’t think the PC GPU battle is over yet either. It took years for NVIDIA to be pushed out of the chipset space, even after AMD bought ATI. Even if the future of PC graphics is Intel and AMD GPUs, it’s going to take a very long time to get there.

106 Comments


Scali - Sunday, October 18, 2009 - link

If you sort by percentage change: http://store.steampowered.com/hwsurvey/videocard/?...

Then add up all the increases from nVidia, you get to +1.42%.

Do the same for all ATi parts, and you get +1.06%.

(Obviously you can't do a direct comparison of the 260 to the entire 4800-series, as the 260 is just a single part. You would then ignore various other parts that also compete with the 4800 series, such as the 9800GTX).

shin0bi272 - Thursday, October 15, 2009 - link

It does MIMD processing and parallel kernel processing. It does true 32-bit floating point processing in 1 clock (the previous generation only did 24-bit and emulated 32-bit) and now uses the IEEE 754-2008 standard instead of the 1985 standard. Its 64-bit (double precision) performance is now 1/2 that of single precision, while their previous generation's was 1/8th as fast and AMD's is 1/5th.

With the addition of a second dispatch unit they are able to send special functions to the sfu at the same time they are sending gp shaders... meaning they no longer have to take up the entire SM (group of shader cores acting as a unit from what I can gather) to do an interpolation calculation.

They added an actual cache hierarchy instead of the software managed memory in the GT200 series, which eliminates a large problem they were having (shared memory size), and that means increased performance.

Nvidia says that its switching between CUDA and GPU is now 10x faster, which means that PhysX performance will increase dramatically, and it supports parallel transfers to the CPU vs serial in previous cards... so multiple CPU cores and multiple connections to the GPU mean much better performance.

Nvidia claims they learned their lesson on moving to different die sizes and guessing on their pricing. They were really pushing the new die with the old 5800fx chip and they got beat bad by ATi... conversely they took their time with the GT200s and ended up pricing them according to what they thought the ATi 48xx would be and ended up overshooting by a LOT. Whether or not they are full of it remains to be seen here, but hopefully we can expect a 450-500 dollar GT300 flagship card early in 2010. The biggest issue is that ATi already has boards in production and 1600 SIMD (single instruction multiple data) cores vs 512 MIMD cores in the Fermi. The ATi card also runs at 850MHz vs probably 650MHz in the Nvidia. We will just have to wait and see the benchmarks I guess.

Plus add in the horrible state of the economy (which is only going to get worse when congress goes to do the budget for 2011 in march), the falling value of the dollar, the continuing increase of the unemployment rate, the impending deficit/debt issues, the oil producing countries talking of dumping the dollar, the UN wanting to dump the dollar as the international reserve currency, etc., etc. and Nvidia cutting back on production might not be such a bad idea. If the economy collapses again once the government has to raise the interest rates and taxes to pay for their spending then AMD might be hurting with so much product sitting on the shelves collecting dust.

(Fermi data from http://anandtech.com/video/showdoc.aspx?i=3651&...)

Scali - Thursday, October 15, 2009 - link

You should put the 1600 SIMD vs 512 SIMD in the proper perspective though... Last generation it was 800 SIMD (HD4870/HD4890) vs 240 SIMD (GTX280/GTX285).

With 1600 vs 512, the ratio actually improves in nVidia's favour, going from 3.33:1 to 3.1:1.
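The ratio arithmetic in this comment can be checked with a few lines of Python. This is only a sketch of the raw unit-count comparison the comment makes; the function name `unit_ratio` and the dictionary layout are illustrative choices, and the counts are the ones quoted in the thread, not measured data.

```python
# Shader-unit counts as quoted in the comment thread (raw counts only,
# no clock speeds or per-unit throughput taken into account).
UNIT_COUNTS = {
    "last generation": {"ati": 800, "nvidia": 240},   # HD4870/HD4890 vs GTX280/GTX285
    "this generation": {"ati": 1600, "nvidia": 512},  # figures quoted for Radeon vs Fermi
}


def unit_ratio(ati_units: int, nvidia_units: int) -> float:
    """Return the number of ATi units per nVidia unit (hypothetical helper)."""
    return ati_units / nvidia_units


if __name__ == "__main__":
    for generation, counts in UNIT_COUNTS.items():
        ratio = unit_ratio(counts["ati"], counts["nvidia"])
        print(f"{generation}: {ratio:.3f}:1")
```

The ratio drops from roughly 3.33:1 to 3.125:1, which is the shift toward nVidia described above.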

shin0bi272 - Friday, October 16, 2009 - link

Ahh, that's true, I didn't think about that. With all the other improvements and the move to GDDR5 it should be a great card.

Zool - Thursday, October 15, 2009 - link

In the worst case scenario ATi has 320 ALUs and in the best case 1600. Nvidia has the same 512 ALUs in both the best and worst case. And the Nvidia shaders run at a much higher frequency. Let's say double, although 1700 MHz shaders won't be too realistic for GT300.

So actually, in the worst case scenario the GT300 has more than 3 times the effective ALUs of the Radeon (512 at double the clock versus 320). In the best case scenario the Radeon has around a 60% advantage over Nvidia (even with the unrealistic shader clocks).
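The best case/worst case argument can be worked through the same way. The sketch below uses only the numbers given in this comment; the 2x shader clock is the commenter's own "let's say double" guess rather than a confirmed Fermi specification, and the helper names are hypothetical.

```python
# Figures taken from the comment; the clock factor is an assumption made there.
NVIDIA_ALUS = 512          # same in the best and worst case
ATI_ALUS_WORST = 320       # worst case quoted for the Radeon
ATI_ALUS_BEST = 1600       # best case quoted for the Radeon
NVIDIA_CLOCK_FACTOR = 2.0  # assumed nVidia shader clock relative to the Radeon's


def effective_alus(alus: int, clock_factor: float = 1.0) -> float:
    """Scale an ALU count by a relative clock (hypothetical helper)."""
    return alus * clock_factor


def nvidia_vs_ati(ati_alus: int) -> float:
    """Return nVidia's clock-adjusted ALU count divided by ATi's."""
    return effective_alus(NVIDIA_ALUS, NVIDIA_CLOCK_FACTOR) / effective_alus(ati_alus)


if __name__ == "__main__":
    worst = nvidia_vs_ati(ATI_ALUS_WORST)      # nVidia ahead when > 1
    best = 1.0 / nvidia_vs_ati(ATI_ALUS_BEST)  # ATi ahead when > 1
    print(f"ATi worst case: nVidia has {worst:.2f}x the clock-adjusted ALUs")
    print(f"ATi best case: ATi leads by {(best - 1.0) * 100:.0f}%")
```

Under these assumptions the worst case works out to 3.2x in nVidia's favour and the best case to a lead of about 56% for the Radeon, in line with the "more than 3 times" and "around 60%" figures above. Changing `NVIDIA_CLOCK_FACTOR` shows how sensitive both numbers are to the guessed clock.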

And in the end some instructions may take more clock cycles on the GT300, some on the Radeon 5800.

So your perspective is way off.

Scali - Thursday, October 15, 2009 - link

My perspective was purely that the gap in number of SIMDs between AMD and nVidia becomes smaller with this generation, not larger. It's spot on, as there simply ARE 800 and 240 units in the respective parts I mentioned. Now you can go and argue about clockspeeds and best case/worst case, but that's not going to do much good since we don't know any of that information on Fermi. We'll just have to wait for some actual benchmarks.

Zool - Friday, October 16, 2009 - link

The message of my reply was that just plain shader vs shader conclusions are very inaccurate and usually mean nothing.

Scali - Friday, October 16, 2009 - link

I never claimed otherwise. I just pointed out that this particular ratio would move more towards nVidia, as the post I was replying to appeared to assume the opposite, speaking out a concern on the large difference in raw numbers.

Zool - Thursday, October 15, 2009 - link

The GT240 doesn't seem to be much better if they follow the GT220 price scenario. Link: http://translate.google.com/translate?prev=hp&...

The core/shader clocks are pretty low for 40nm. Let's hope that GT300 will run higher than that.

shotage - Thursday, October 15, 2009 - link

Just read this article and found it pretty insightful. Its focus is the shift in microarchitecture of the GPU, primarily Nvidia and their upcoming Fermi chip. Very good technical read for those that are interested: http://www.realworldtech.com/page.cfm?ArticleID=RW...