AMD’s Radeon HD 5850: The Other Shoe Drops

by Ryan Smith on September 30, 2009 12:00 AM EST - Posted in GPUs

Battleforge: The First DX11 Game

As we mentioned in our 5870 review, Electronic Arts pushed out the DX11 update for Battleforge the day before the 5870 launched. As we had already left for Intel’s Fall IDF we were unable to take a look at it at the time, so now we finally have the chance.

Being the first DX11 title, Battleforge makes very limited use of DX11’s features, given that the hardware and the software are still brand-new. The only DX11 feature Battleforge uses is Compute Shader 5.0, which replaces the use of pixel shaders for calculating ambient occlusion. Notably, this is not a use that improves the image quality of the game; pixel shaders already do this effect in Battleforge and other games. EA is using the compute shader as a faster way to calculate the ambient occlusion than a pixel shader.

The use of various DX11 features to improve performance is something we’re going to see in more games than just Battleforge as additional titles pick up DX11, so this isn’t in any way an unusual use of DX11. Effectively anything can be done with existing pixel, vertex, and geometry shaders (we’ll skip the discussion of Turing completeness), just not at an appropriate speed. The fixed-function tessellator is faster than the geometry shader for tessellating objects, and in certain situations like ambient occlusion the compute shader is going to be faster than the pixel shader.
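To give a rough feel for why a compute shader can beat a pixel shader on a neighborhood effect like ambient occlusion, here is a minimal Python sketch that only counts depth-buffer reads. It rests on assumptions that are not from the article: that the effect samples a dense square window around each pixel, and that the compute shader version stages each tile plus its border once in thread group shared memory. The function names, the 16x16 tile size, and the 4-pixel radius are hypothetical, and nothing here describes how Battleforge actually implements its SSAO. Real GPUs also have texture caches, so the gap in practice will be much smaller than the raw read counts suggest.

```python
def fetches_per_pixel_gather(width, height, radius):
    """Depth reads when every pixel independently samples its whole window."""
    window = (2 * radius + 1) ** 2
    return width * height * window


def fetches_tiled_shared(width, height, radius, tile):
    """Depth reads when each tile loads its pixels plus a border of
    `radius` pixels once, then reuses them from on-chip shared memory."""
    tiles_x = -(-width // tile)   # ceiling division
    tiles_y = -(-height // tile)
    loads_per_tile = (tile + 2 * radius) ** 2
    return tiles_x * tiles_y * loads_per_tile


if __name__ == "__main__":
    # Illustrative values only: resolution, radius, and tile size are assumptions.
    w, h, r, t = 1920, 1200, 4, 16
    naive = fetches_per_pixel_gather(w, h, r)
    tiled = fetches_tiled_shared(w, h, r, t)
    print(f"per-pixel gather: {naive:,} reads")
    print(f"tiled + shared:   {tiled:,} reads")
    print(f"ratio:            {naive / tiled:.1f}x")
```

The point of the model is only that neighboring pixels want mostly the same samples, and a compute shader gives the programmer an explicit way to share them.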

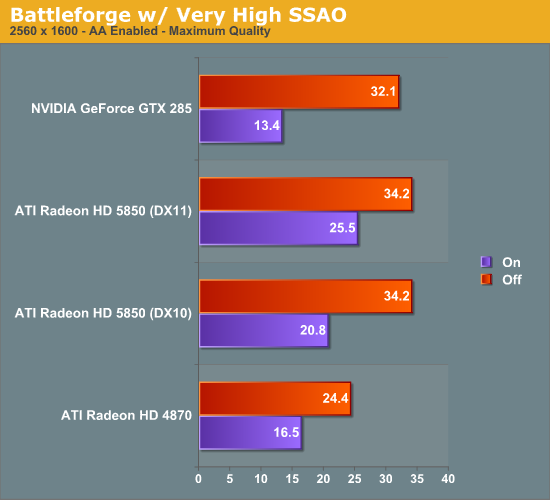

We ran Battleforge both with DX10/10.1 (pixel shader SSAO) and DX11 (compute shader SSAO) and with and without SSAO to look at the performance difference.

Update: We've finally identified the issue with our results. We've re-run the 5850, and now things make much more sense.

As Battleforge only uses the compute shader for SSAO, there is no difference in performance between DX11 and DX10.1 when we leave SSAO off. So the real magic here is when we enable SSAO. In this case we crank it up to Very High, which clobbers all the cards when run as a pixel shader.

The difference in using a compute shader is that the performance hit of SSAO is significantly reduced. As a DX10.1 pixel shader it lops off 35% of the performance of our 5850. But calculated using a compute shader, that hit becomes 25%. Or to put it another way, switching from a DX10.1 pixel shader to a DX11 compute shader improved performance by 23% when using SSAO. This is what the DX11 compute shader will initially be making possible: allowing developers to go ahead and use effects that would be too slow on earlier hardware.
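For readers who want to relate a "performance hit" to a "performance improvement", the conversion can be sketched as below. The helper names are hypothetical, and both hits are assumed to be measured against the same SSAO-off baseline. Note that plugging in the rounded 35% and 25% figures yields roughly 15%, not the 23% quoted above; the quoted figure presumably comes from the measured frame rates in the chart, which are not reproduced in the text, so treat the inputs here as illustrative.

```python
def hit_from_fps(fps_off, fps_on):
    """Fractional performance hit of enabling an effect."""
    return 1.0 - fps_on / fps_off


def speedup_from_hits(hit_slow, hit_fast):
    """Relative gain of the faster path over the slower one, given each
    path's fractional hit against the same effect-off baseline."""
    remaining_slow = 1.0 - hit_slow
    remaining_fast = 1.0 - hit_fast
    return remaining_fast / remaining_slow - 1.0


if __name__ == "__main__":
    # Rounded hits taken from the text: pixel shader 35%, compute shader 25%.
    gain = speedup_from_hits(0.35, 0.25)
    print(f"implied improvement: {gain:.1%}")
```

Small rounding differences in the hit percentages move the result by several points, which is why working from the raw frame rates is the safer route.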

Our only big question at this point is whether a DX11 compute shader is really necessary here, or if a DX10/10.1 compute shader could do the job. We know there are some significant additional features available in the DX11 compute shader, but it's not at all clear when they're necessary. In this case Battleforge is an AMD-sponsored showcase title, so take an appropriate quantity of salt when it comes to this matter; other titles may not produce similar results.

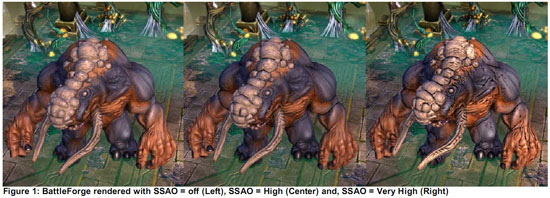

At any rate, even with the lighter performance penalty from using the compute shader, 25% for SSAO is nothing to sneeze at. AMD’s press shot is one of the best case scenarios for the use of SSAO in Battleforge, and in the game it’s very hard to notice. For the 25% drop in performance, it’s hard to justify the slightly improved visuals.

95 Comments

Ryan Smith - Wednesday, September 30, 2009 - link

An excellent question! This isn't something we had a chance to put in the article, but I'm working on something else for later this week to take a look at exactly that. The 5850 gives us more of an ability to test that, since Overdrive isn't capped as low on a percentage basis.

Zool - Wednesday, September 30, 2009 - link

You could make some raw shader tests that don't depend on memory bandwidth to see whether the GPU's internal bandwidth or the external bandwidth is somehow the limit. And maybe try out some older games (quake3 or 3dmark2001). DX11 games will use more shader power for other things which have little impact on bandwidth. Maybe they tested those heavy dx11 scenarios and ended up with the much less costly 256bit interface as a compromise.

Dante80 - Wednesday, September 30, 2009 - link

Up to 80 watts lower consumption in load

120$ less

Quieter

Cooler

Shorter

Performance hit around 10-15% against 5870 (that means far better perf/watt and perf/$)

~12% More performance than GT285

Overclocks to 5870 perf easily

Ok, this is an absolute killer for the lower performance market segment. It's 4870vs4850 all over again. Only this time, they get the performance crown for single cards too.

Another thing to remember is that nvidia does not currently have a countermeasure for this card. The GT380 will be priced for the enthusiast segment, and we can only hope for the architecture to be flexible enough to provide a 360 for the upper performance segment without killing profits due to die size constraints. Things will get even more messy as soon as Juniper lands, the greens have to act now (that's our interest as consumers too)! And I don't think that GT200 respins will cut it.

the zorro - Wednesday, September 30, 2009 - link

maybe if intel heavily overclocks a gma 4500 it can compete with amd?

haplo602 - Wednesday, September 30, 2009 - link

hmm ... my next system should feature a GTS 250. Unless ATI releases a 5670 and finally hits opengl 3.2 and opencl support in their linux drivers.

anyway the 5850 will kill a lot of Nvidia cards.

san1s - Wednesday, September 30, 2009 - link

interesting results, can't wait to see how gt300 will compare

palladium - Thursday, October 1, 2009 - link

"interesting results, can't wait to see how gt300 will compare"

SiliconDoc: WTF?! 5870 < GTX295, top end GT300 >> 295 because it has 384bit GDDR5 (5870 only 256 bit), so naturally GT300 will KICK RV8xx's A**!!!!

That's my prediction anyway (hopefully he decides not to troll here)

Dobs - Wednesday, September 30, 2009 - link

My guess is - GT300 won't compare to 5850 or 5870.

It will compare with the 5870X2 and be in the price bracket. (Too much for most of us.)

When the GT300 eventually gets released that is.... Then a few months later again nvidia will bring out the scaled down versions in the same price brackets as the 5850/5870 that will probably compete pretty well.

Only question is - can you wait?

You could wait for the 6870 as well:P

Vinas - Wednesday, September 30, 2009 - link

No, I don't have to wait because I have a 5870 :-)

The0ne - Wednesday, September 30, 2009 - link

I really think an enthusiast who spends hundreds on the MB alone isn't the regular enthusiast. So price wouldn't be an issue. I love building PCs and testing them but I'm not going to spend $200+ on a MB knowing that I will be building another system in a few months with better performance parts and pricing. Unless I'm really keeping the system for a long time, then I'll pour my hard-earned money into the high end parts. But then if you're doing this I don't think you're really an enthusiast as it's really a one shot deal?