AMD’s Radeon HD 5850: The Other Shoe Drops

by Ryan Smith on September 30, 2009 12:00 AM EST - Posted in GPUs

Battleforge: The First DX11 Game

As we mentioned in our 5870 review, Electronic Arts pushed out the DX11 update for Battleforge the day before the 5870 launched. As we had already left for Intel’s Fall IDF we were unable to take a look at it at the time, so now we finally have the chance.

Being the first DX11 title, Battleforge makes very limited use of DX11’s features, given that the hardware and the software are still brand-new. The only DX11 feature Battleforge uses is Compute Shader 5.0, which replaces pixel shaders for calculating ambient occlusion. Notably, this does not improve the image quality of the game; pixel shaders already produce this effect in Battleforge and other games. EA is using the compute shader as a faster way to calculate ambient occlusion than a pixel shader.

Using DX11 features to improve performance is something we’re going to see in more games than just Battleforge as additional titles pick up DX11, so this isn’t in any way an unusual use of DX11. Effectively anything can be done with existing pixel, vertex, and geometry shaders (we’ll skip the discussion of Turing completeness), just not at an appropriate speed. The fixed-function tessellator is faster than the geometry shader for tessellating objects, and in certain situations like ambient occlusion the compute shader is going to be faster than the pixel shader.

We ran Battleforge both with DX10/10.1 (pixel shader SSAO) and DX11 (compute shader SSAO) and with and without SSAO to look at the performance difference.

Update: We've finally identified the issue with our results. We've re-run the 5850, and now things make much more sense.

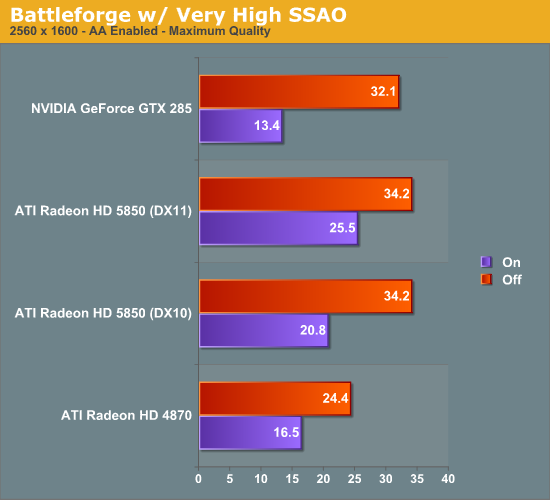

As Battleforge only uses the compute shader for SSAO, there is no difference in performance between DX11 and DX10.1 when we leave SSAO off. The real magic happens when we enable SSAO; in this case we crank it up to Very High, which clobbers all the cards when run as a pixel shader.

The difference in using a compute shader is that the performance hit of SSAO is significantly reduced. As a DX10.1 pixel shader it lops off 35% of the performance of our 5850. Calculated using a compute shader, that hit becomes 25%. Or to put it another way, switching from a DX10.1 pixel shader to a DX11 compute shader improved performance by 23% when using SSAO. This is what the DX11 compute shader will initially be making possible: allowing developers to go ahead and use effects that would be too slow on earlier hardware.
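For readers who want to convert between the two ways of quoting this result (a performance hit relative to SSAO off, versus a gain of one SSAO path over the other), here is a minimal Python sketch. The 100 fps baseline is a hypothetical placeholder rather than a measured number, and the function names are ours. Note that the rounded 35% and 25% figures on their own work out to roughly a 15% gain; the 23% figure above would have to come from the unrounded frame rates in the chart, which this sketch does not use.

```python
def fps_after_hit(baseline_fps, hit):
    """Frame rate left after a fractional performance hit (0.35 means 35%)."""
    return baseline_fps * (1.0 - hit)


def relative_gain(hit_slow, hit_fast):
    """Fractional gain of the cheaper path over the costlier one.

    Both hits are measured against the same SSAO-off baseline,
    so the baseline frame rate cancels out of the ratio.
    """
    return (1.0 - hit_fast) / (1.0 - hit_slow) - 1.0


# Hypothetical SSAO-off frame rate, used only to make the numbers tangible.
baseline = 100.0

pixel_shader_fps = fps_after_hit(baseline, 0.35)    # DX10.1 pixel shader SSAO
compute_shader_fps = fps_after_hit(baseline, 0.25)  # DX11 compute shader SSAO
gain = relative_gain(0.35, 0.25)

print(f"pixel shader SSAO:   {pixel_shader_fps:.1f} fps")
print(f"compute shader SSAO: {compute_shader_fps:.1f} fps")
print(f"gain from compute shader: {gain:.1%}")
```

Because the baseline cancels, the same gain falls out no matter what frame rate the card manages with SSAO off; only the two hit percentages matter.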

Our only big question at this point is whether a DX11 compute shader is really necessary here, or if a DX10/10.1 compute shader could do the job. We know there are some significant additional features available in the DX11 compute shader, but it's not at all clear when they're necessary. In this case Battleforge is an AMD-sponsored showcase title, so take an appropriate quantity of salt when it comes to this matter; other titles may not produce similar results.

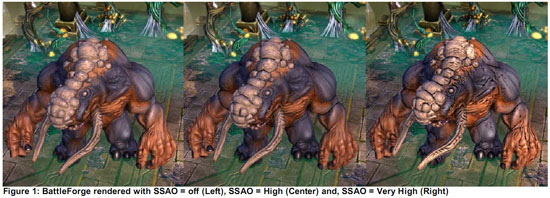

At any rate, even with the lighter performance penalty from using the compute shader, 25% for SSAO is nothing to sneeze at. AMD’s press shot is one of the best case scenarios for the use of SSAO in Battleforge, and in the game it’s very hard to notice. For the 25% drop in performance, it’s hard to justify the slightly improved visuals.

95 Comments

ThePooBurner - Wednesday, October 7, 2009 - link

A good repeatable test would be to have a RAID group in an instance and have them all cast a set of spells at once. The instance server separates from the rest of the server load and allows for a bit better testing. It's true that the game is generally more CPU/RAM limited than GPU limited, especially if you have a lot of add-ons doing post processing on all the information that is shooting around. However, having been in raids with and without add-ons and such, I can tell you that I can get 45-50fps when we are just standing there waiting to attack, and then as soon as the spell effects start going off my frame rate drops like a rock. The spell effects are particle effects that overlap and mix and are all transparent to one degree or another. All those effects going off on a single target creates a lot of overlap that the GPU has to sort out in order to render correctly.

What you might try is to see if you can get Blizz to put a target dummy in an instance to isolate it from the rest of the masses, and allow for sustained testing with spell effects going off in a predictable manner (not having every test go balls to the wall, but simply repeating a set rotation in a timed manner so that you can get an accurate gauge).

Per Hansson - Thursday, October 1, 2009 - link

I second your question

And also just want to say that with a very heavily volt modded and overclocked 8800GTS 512MB the performance in WoW at maximum settings with 2xAA will totally kill my card

For example in heavily populated areas it will use more than 512MB video ram (confirmed using rivatuner)

And in heavily populated areas I get like 20FPS, for example in Dalaran at peak hours (like, when I play :P)

The numbers you provide for WoW are welcome, very few sites do these tests

But more realistic numbers would be nice, representing what a big guild would see in a 20 or 40 man RAID...

Perhaps you could setup a more realistic test with private servers, or if you are unwilling to go that route ask Blizzard if they could setup a testserver for you to use so you can get reproducible tests?

biigfoot - Wednesday, September 30, 2009 - link

I can't wait till we find out if those extra SIMD engines can be unlocked like the good ol' ati2mtag softmod for the 9500 -> 9700 :) Even if not, this looks like the card for my new HTPC :)

The0ne - Wednesday, September 30, 2009 - link

The charts are a bit confusing. My main focus is at the 2560x1600 and the review references 5850 CF and 285 CF but they are not to be found in any of the charts. Same for 285 SLI.

"With and without ambient occlusion, the 5850 comes in right where we expect it. The 5850 Crossfire on the other hand loses once again to the GTX 285 SLI in spite of beating the GTX 285 in a single card matchup."

Ryan Smith - Wednesday, September 30, 2009 - link

Whoops. The full 2560 w/o SSAO chart went AWOL. Fixed.

giantpandaman2 - Wednesday, September 30, 2009 - link

Should be heels, not heals. Unless you're referring to some MMORPG priest. :)

KeithP - Wednesday, September 30, 2009 - link

I understand you want a consistent platform to test all the video cards, but is there any possibility of testing the 5850 on a more realistic platform?

Maybe something like a dual core 2.8GHz machine? I have to think the bulk of the potential buyers for this card won't have a machine anywhere near as powerful as the one you are testing on.

-KeithP

v1001 - Wednesday, September 30, 2009 - link

I wonder if we'll see a single slot card. I think this is the card for me to get. I need a lower watt single slot card to work in my tiny case.

RDaneel - Wednesday, September 30, 2009 - link

Ryan, I know it's not the focus of this article, but it would be great to get a small paragraph (or a blog post or whatever) on what ATI has said in reference to the lower-spec 40nm DX11 parts. I simply don't need 4850 power in a SOHO-box, but the low 40nm idle power consumption and DX11 future-proofing are tempting me away from a 4870/90 card. What kind of prices and performance scaling are we likely to see before the end of 2009? Thanks for any info!

Ryan Smith - Wednesday, September 30, 2009 - link

We covered this as much as we can in our 5870 article. If it's not there, it's either not something we know, or not something we can comment on.

http://www.anandtech.com/video/showdoc.aspx?i=3643...