AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST - Posted in GPUs

Power, Temperature, & Noise

As we have mentioned previously, one of AMD’s big design goals for the 5800 series was to get the idle power load significantly lower than that of the 4800 series. Officially the 4870 idles at 90W, the 4890 at 60W, and the 5870 should idle at 27W.

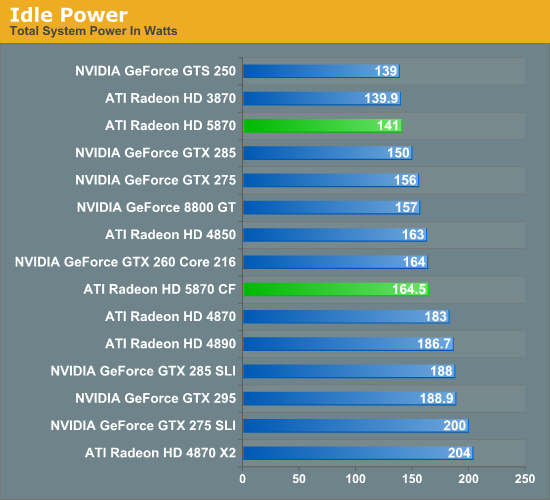

On our test bench, the idle power load of the system comes in at 141W, a good 42W lower than either the 4870 or 4890. The difference is even more pronounced when compared to the multi-GPU cards that the 5870 competes with performance-wise, with the gap opening up to as much as 63W when compared to the 4870X2. In fact the only cards that the 5870 can’t beat are some of the slowest cards we have: the GTS 250 and the Radeon HD 3870.

As for the 5870 CF, we see AMD’s CF-specific power savings in play here. They told us they can get the second card down to 20W, and on our rig the power consumption of adding a second card is 23.5W, which after taking power supply inefficiencies into account is right on the dot.
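The arithmetic behind that comparison can be sketched as follows. The 141W and 23.5W figures come from the measurements above and are taken at the wall, so they include power supply losses. The 85% efficiency is purely an assumption for illustration (the efficiency of the test bench's power supply is not stated here), and the function name is hypothetical.

```python
def dc_power_from_wall(wall_watts: float, psu_efficiency: float) -> float:
    """Estimate the DC-side power behind a wall-side measurement.

    psu_efficiency is the fraction of wall power that reaches the
    components (an assumed value, not a measured one).
    """
    if not 0.0 < psu_efficiency <= 1.0:
        raise ValueError("psu_efficiency must be in the range (0, 1]")
    return wall_watts * psu_efficiency


# Figures reported in the article (total system power at the wall).
IDLE_SYSTEM_W = 141.0             # single 5870, idle
SECOND_CARD_WALL_DELTA_W = 23.5   # extra idle draw from the second card

# ASSUMPTION: an 85% efficient power supply at this load.
ASSUMED_PSU_EFFICIENCY = 0.85

second_card_dc_w = dc_power_from_wall(
    SECOND_CARD_WALL_DELTA_W, ASSUMED_PSU_EFFICIENCY
)
cf_idle_system_w = IDLE_SYSTEM_W + SECOND_CARD_WALL_DELTA_W

print(f"Estimated DC draw of second card: {second_card_dc_w:.1f} W")
print(f"Implied 5870 CF idle system power: {cf_idle_system_w:.1f} W")
```

Under that assumed efficiency the second card's estimated draw lands at roughly 20W, in line with AMD's figure. A different efficiency would shift the estimate accordingly.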

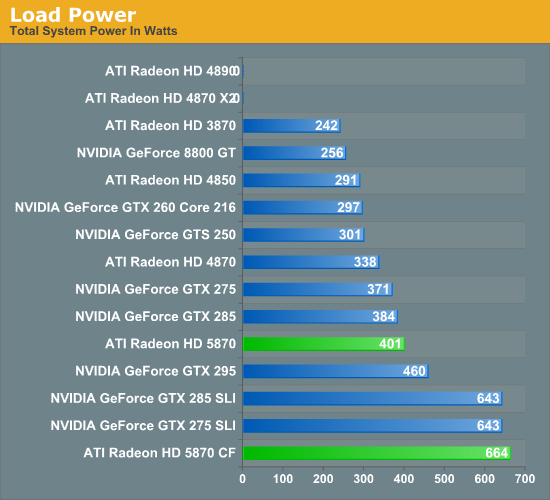

Moving on to load power, we are using the latest version of the OCCT stress testing tool, as we have found that it creates the largest load out of any of the games and programs we have. As we stated in our look at Cypress’ power capabilities, OCCT is being actively throttled by AMD’s drivers on the 4000 and 3000 series hardware. So while this is the largest load we can generate on those cards, it’s not quite the largest load they could ever experience. For the 5000 series, any throttling would be done by the GPU’s own sensors, and only if the VRMs start to overload.

In spite of AMD’s throttling of the 4000 series, right off the bat we have two failures. Our 4870X2 and 4890 both crash the moment OCCT starts. If you ever wanted proof as to why AMD needed to move to hardware based overcurrent protection, you will get no better example of that than here.

For the cards that don’t fail the test, the 5870 ends up being the most power-hungry single-GPU card, at 401W total system power. This puts it slightly ahead of the GTX 285, and well, well behind any of the dual-GPU cards or configurations we are testing. Meanwhile the 5870 CF takes the cake, beating every other configuration for a load power of 664W. If we haven’t mentioned this already we will now: if you want to run multiple 5870s, you’re going to need a good power supply.

Ultimately with the throttling of OCCT it’s difficult to make accurate predictions about all possible cases. But from our tests with it, it looks like it’s fair to say that the 5870 has the capability to be a slightly bigger power hog than any previous single-GPU card.

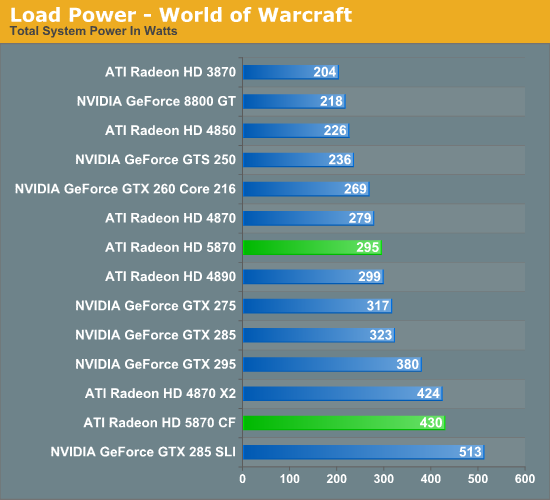

In light of our results with OCCT, we have also taken load power results for our suite of cards when running World of Warcraft. As it’s not a stress-tester it should produce results more in line with what power consumption will look like with a regular game.

Right off the bat, system power consumption is significantly lower. The biggest power hogs are the GTX 285 and GTX 285 SLI for single and dual-GPU configurations respectively. The bulk of the lineup is the same in terms of what cards consume more power, but the 5870 has moved down the ladder, coming in behind the GTX 275 and ahead of the 4870.

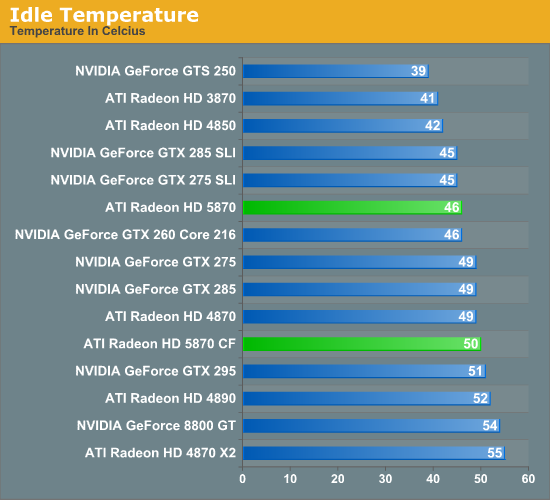

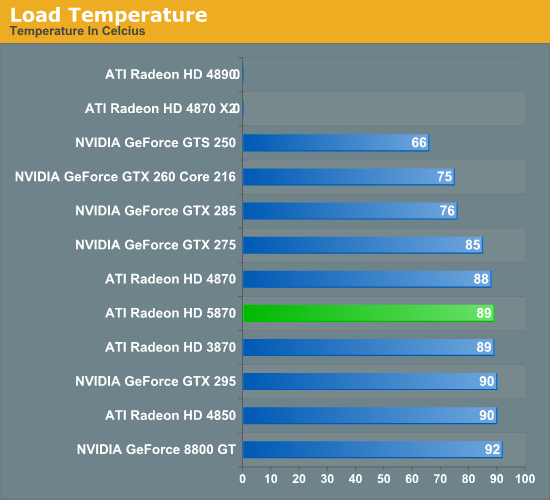

Next up we have card temperatures, measured using the on-board sensors of the card. With a good cooler, lower idle power consumption should lead to lower idle temperatures.

The floor for a good cooler looks to be about 40C, with the GTS 250, 3870, and 4850 all turning in temperatures around here. For the 5870, it comes in at 46C, which is enough to beat the 4870 and the NVIDIA GTX lineup.

Unlike power consumption, load temperatures are all over the place. All of the AMD cards approach 90C, while NVIDIA’s cards are between 92C for an old 8800GT, and a relatively chilly 75C for the GTX 260. As far as the 5870 is concerned, this is solid proof that the half-slot exhaust vent isn’t going to cause any issues with cooling.

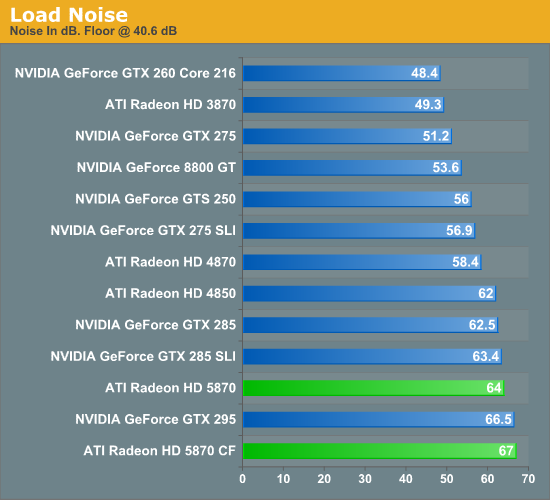

Finally we have fan noise, as measured 6” from the card. The noise floor for our setup is 40.4 dB.

All of the cards, save the GTX 295, generate practically the same amount of noise when idling. Given the lower energy consumption of the 5870 when idling, we had been expecting it to end up a bit quieter, but this was not to be.

At load, the picture changes entirely. The more powerful the card the louder it tends to get, and the 5870 is no exception. At 64 dB it’s louder than everything other than the GTX 295 and a pair of 5870s. Hopefully this is something that the card manufacturers can improve on later on with custom coolers, as while 64 dB at 6" is not egregious it’s still an unwelcome increase in fan noise.
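For a sense of scale, decibels are logarithmic, so the gap between the 40.4 dB noise floor and the 64 dB load reading is larger than the raw numbers suggest. The sketch below converts a decibel difference into sound pressure and sound power ratios using the standard formulas. It assumes both readings are sound pressure levels taken the same way; the function names are hypothetical.

```python
def pressure_ratio(level_db: float, reference_db: float) -> float:
    """Ratio of sound pressures for two sound pressure levels in dB."""
    return 10 ** ((level_db - reference_db) / 20)


def power_ratio(level_db: float, reference_db: float) -> float:
    """Ratio of sound powers (intensities) for two levels in dB."""
    return 10 ** ((level_db - reference_db) / 10)


# Figures reported in the article, measured 6" from the card.
NOISE_FLOOR_DB = 40.4
HD5870_LOAD_DB = 64.0

p_ratio = pressure_ratio(HD5870_LOAD_DB, NOISE_FLOOR_DB)
w_ratio = power_ratio(HD5870_LOAD_DB, NOISE_FLOOR_DB)

print(f"Sound pressure vs. noise floor: about {p_ratio:.0f}x")
print(f"Sound power vs. noise floor: about {w_ratio:.0f}x")
```

These ratios describe physical quantities, not perceived loudness, which does not scale linearly with either.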

327 Comments

RubberJohnny - Thursday, September 24, 2009

Well silicondoc you sure have some hatred for ATI/love for nvidia.

It's almost as if you work for the green team...

You seem to have all this time on your hands to go around the net looking for links to spread FUD...sitting on new egg watching these cards come in and out of stock like you have a vested interest in seeing ATI fail...unlike any sane person it appears you want nvidia to have a monopoly on the industry?

Maybe you are privy to some inside info over at nvidia and know they have nothing to counter the 5870 with?

Maybe the cash they paid you to spin these BS comments would have been better spent on R&D?

SiliconDoc - Thursday, September 24, 2009

That's a nice personal, grating, insulting ripppp, it's almost funny, too.

---

The real problems remain.

I bring up this stuff because of course, no one else will, it is almost forbidden. Telling the truth shouldn't be that hard, and calling it fairly and honestly should not be such a burden.

I will gladly take correction when one of you noticing insulters has any to offer. Of course, that never comes.

Break some new ground, won't you ?

I don't think you will, nor do I think anyone else will - once again, that simply confirms my factual points.

I guess I'll give you a point for complaining about delivery, if that's what you were doing, but frankly, there are a lot of complainers here no different - let's take for instance the ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275 article here.

http://www.anandtech.com/video/showdoc.aspx?i=3539

Boy, the red fans went into rip mode, and Anand came in and changed the articles (Derek's) words and hence "result", from GTX275 wins to ATI4890 wins.

--

No, it's not just me, it's just the bias here consistently leans to ati, and whether it's rooting for the underdog that causes it, or the brooding undercurrent hatred that surfaces for "the bigshot" "greedy" "ripoff artist" "nvidia overchargers" "industry controlling and bribing" "profit demon" Nvidia, who knows...

I'm just not afraid to point it out, since it's so sickening, yes, probably just to me, "I'm sure".

How about this glaring one I have never pointed out even to this day, but will now:

ATI is ALWAYS listed first, or "on top" - and of course, NVIDIA, second, and it is no doubt, in the "reviewer's minds" because of "the alphabet", and "here we go in alphabetical order".

A very, very convenient excuse, that quite easily causes a perception bias, that is quite marked for the readers.

But, that's ok.

---

So, you want to tell me why I shouldn't laugh out loud when ATI uses NVIDIA cards to develop their "PhysX" competition Bullet ?

ROFLMAO

I have heard 100 times here (from guess whom) that the ati has the wanted "new technology", so will that same refrain come when NVIDIA introduces their never before done MIMD capable cores in a few months ? LOL

I can hardly wait to see the "new technology" wannabes proclaiming their switched fealty.

Gee sorry for noticing such things, I guess I should be a mind numbed zombie babbling along with the PC required fanning for ati ?

silverblue - Thursday, September 24, 2009

No; if he did work for nVidia, he'd be far better informed and far less prone to using the phrase "red rooster" every five seconds.

crackshot91 - Wednesday, September 23, 2009

Any possibility of benchmarks with a core 2 duo?

I wanna know if it will be necessary to upgrade to an i5 or i7 (All new mobo) to see big performance gains over my 8800GT. Will a C2D E6750 @ 3.2GHz bottleneck it?

Ryan Smith - Wednesday, September 23, 2009

Our recent Core i7 860 article should do an adequate job of answering that question. Several of the benchmarks were taken right out of this article.

therealnickdanger - Wednesday, September 23, 2009

You dedicated a full page to the flawless performance of its A/V output, but didn't mention it in the "features" part of the conclusion. It's a very powerful feature, IMO. Granted, this card may be a tad too hot and loud to find a home in a lot of HTPCs, but it's still an awesome feature and you should probably append your conclusion... just a suggestion though.

Ultimately, I have to admit to being a little disappointed by the performance of this card. All the Eyefinity hype and playable framerates at massive 7000x3000 resolutions led me to believe that this single card would scale down and simply dominate everything at the 30" level and below. It just seems logical, so I was taken aback when it was beat by, well, anything else. I expected the 5870 and 5870CF to be at the top of every chart. Oh well.

Awesome article though! I'm sure there's a 5850 in my future!

MrMom - Wednesday, September 23, 2009

Does anyone have a good explanation why the massive HD5870 is still slower/@par with the GTX295?

Thanks

SiliconDoc - Thursday, September 24, 2009

Yes, because the ati core "really sucks". It needs DDR5, and much higher MHZ to compete with Nvidia, and their what, over 1 year old core. LOL Even their own 4870x2.

Or the 3 year old G92 vs the ddr3 "4850" the "topcore" before yesterday. (the ati topcore minus the well done 3m mhz+ REBRAND ring around the 4890)

That's the sad, actual truth. That's the truth many cannot bear to bring themselves to realize, and it's going to get WORSE for them very soon, with nvidia's next release, with ddr5, a 512 bit bus, and the NEW TECHNOLOGY BY NVIDIA THAT ATI DOES NOT HAVE MIMD capable cores.

Oh, I can hardly wait, but you bet I'm going to wait, you can count on that 100%.

Spoelie - Thursday, September 24, 2009

because those are 2 480mm² dies, while this is only 1 360mm² die?

Griswold - Wednesday, September 23, 2009

It's one GPU instead of two, maybe?