AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST

Posted in: GPUs

A Quick Refresher on the RV770

Cypress is a direct evolution of the RV770 design, so before we talk about what’s new with Cypress, we are going to quickly recap RV770’s internal workings. Understanding how RV770 was built is necessary to understand what Cypress changes, so if you’re completely unfamiliar with RV770, please take a look at our expanded discussion of RV770 from last year. For the rest of you, let’s get started.

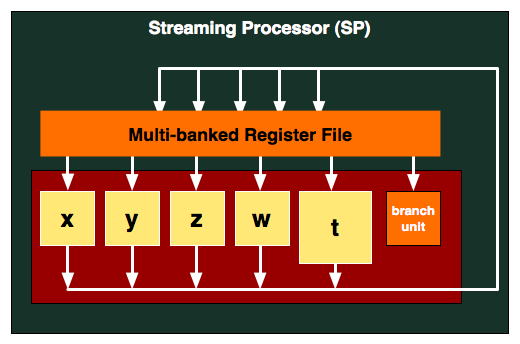

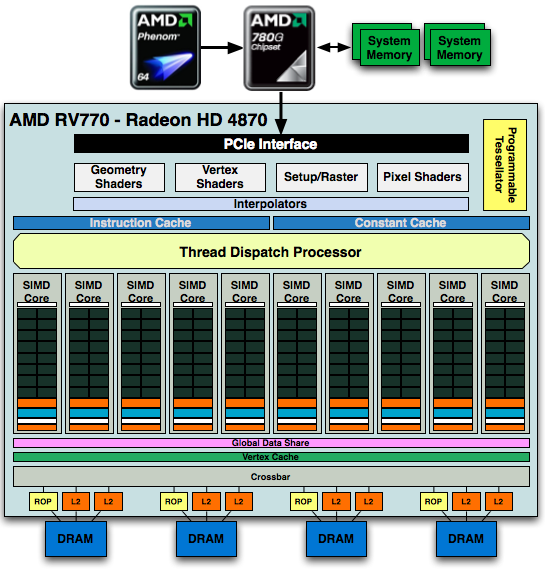

At the center of the RV770 is the Stream Processing Unit (SPU), a single arithmetic logic unit (ALU). The RV770 has 800 of these, packaged together in groups of 5 to form what we call a Streaming Processor (SP). An SP contains a register file, a branch predictor, and the aforementioned 5 SPUs, with the 5th SPU being a more complex unit capable of transcendental functions along with the base functions of an ALU. The SP is the smallest unit that can do individual work; every SPU in an SP must execute the same instruction.

AMD groups every 16 SPs together with texture units, L1 cache, shared memory, and controlling logic. This combined block is what AMD calls a SIMD, and RV770 has 10 of them. These 10 SIMDs form the core computational power of the RV770, and they work with various specialized units such as ROPs, rasterizers, L2 cache, and tessellators to form a complete chip.
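The counts above multiply out in a straightforward way, and a few lines of Python make the hierarchy explicit. This is only an illustrative sketch: the class and field names (`GpuLayout`, `spus_per_sp`, and so on) are our own labels for this example, and the three input numbers are the ones quoted in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuLayout:
    """Toy model of the SPU -> SP -> SIMD hierarchy (names are illustrative)."""
    spus_per_sp: int = 5     # 5 SPUs per SP, per the text
    sps_per_simd: int = 16   # 16 SPs per SIMD, per the text
    simd_count: int = 10     # RV770 has 10 SIMDs, per the text

    @property
    def sp_count(self) -> int:
        # Total SPs on the chip
        return self.sps_per_simd * self.simd_count

    @property
    def spus_per_simd(self) -> int:
        # SPUs inside one SIMD
        return self.spus_per_sp * self.sps_per_simd

    @property
    def spu_count(self) -> int:
        # Total SPUs on the chip
        return self.sp_count * self.spus_per_sp


rv770 = GpuLayout()
print(f"SPs: {rv770.sp_count}, SPUs per SIMD: {rv770.spus_per_simd}, "
      f"total SPUs: {rv770.spu_count}")
```

With the RV770 figures this gives 160 SPs, 80 SPUs per SIMD, and the 800 SPUs mentioned earlier.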

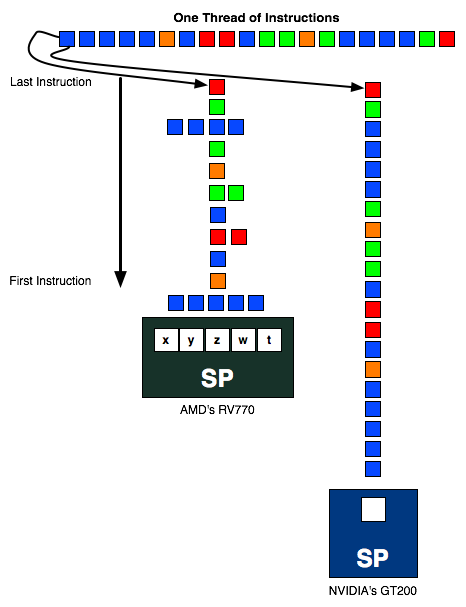

To utilize the computational power of the hardware, instruction threads are issued to the SPs. These threads are grouped into wavefronts of 64 threads each. To maximize the utilization of the GPU, threads need to be organized so that they can feed all 5 SPUs in an SP an instruction every clock cycle. Doing this requires extracting instruction level parallelism (ILP) out of the programs being passed to the GPU, which is the difficult task of AMD’s compiler.

If SPUs go unused, then the performance of the chip suffers due to underutilization. This design gives AMD a great deal of theoretical computational power, but it is always a challenge to fully exploit it.
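To get a feel for why ILP matters so much here, consider a toy packer that fills 5-wide bundles with operations whose inputs are already available. This is a simplified sketch, not AMD's compiler: the greedy strategy, the function names, and the example operation names are all assumptions made for illustration, and it ignores real constraints such as only the 5th SPU handling transcendental functions.

```python
from __future__ import annotations

SLOTS_PER_SP = 5  # 5 SPUs per SP, per the text


def pack_bundles(deps: dict[str, set[str]],
                 width: int = SLOTS_PER_SP) -> list[list[str]]:
    """Greedily pack ops into bundles of at most `width` ops.

    `deps` maps each op name to the set of ops it depends on. An op may only
    enter a bundle once all of its dependencies sit in earlier bundles.
    """
    done: set[str] = set()
    remaining = list(deps)  # keeps program order
    bundles: list[list[str]] = []
    while remaining:
        ready = [op for op in remaining if deps[op] <= done][:width]
        if not ready:
            raise ValueError("cyclic or unsatisfiable dependency")
        bundles.append(ready)
        done.update(ready)
        remaining = [op for op in remaining if op not in ready]
    return bundles


def utilization(bundles: list[list[str]], width: int = SLOTS_PER_SP) -> float:
    """Fraction of SPU slots that actually hold an op."""
    return sum(len(b) for b in bundles) / (len(bundles) * width)


# Five independent ops: everything fits in one bundle.
independent = {name: set() for name in "abcde"}

# Five ops in a strict chain: each one waits on the previous one.
chain = {"a": set(), "b": {"a"}, "c": {"b"}, "d": {"c"}, "e": {"d"}}

for label, program in (("independent", independent), ("chain", chain)):
    bundles = pack_bundles(program)
    print(f"{label}: {len(bundles)} bundle(s), "
          f"utilization {utilization(bundles):.0%}")
```

The independent program fills every slot of a single bundle, while the dependent chain needs five bundles with only one slot used in each. Real shader code falls somewhere between these two extremes, which is why the quality of the compiler's packing has such a direct effect on delivered performance.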

327 Comments


ClownPuncher - Wednesday, September 23, 2009

Absolutely, I can answer that for you.

Those 2 "ports" you see are for aesthetic purposes only; the card has a shroud internally, so those 2 ports neither intake nor exhaust any air, hot or otherwise.

Ryan Smith - Wednesday, September 23, 2009

ClownPuncher gets a cookie. This is exactly correct; the actual fan shroud is sealed so that air only goes out the front of the card to go outside of the case. The holes do serve a cooling purpose though: they allow airflow to help cool the bits of the card that aren't hooked up to the main cooler, various caps and what have you.

SiliconDoc - Wednesday, September 23, 2009

Ok good, now we know.

So the problem now moves to the tiny 1/2 exhaust port on the back, did you stick your hand there and see how much that is blowing? Does it whistle through there? lol

Same amount of air (or a bit less) in half the exit space... that's going to strain the fan and/or reduce flow, no matter what anyone claims to the contrary.

It sure looks like ATI is doing a big favor to aftermarket cooler vendors.

GhandiInstinct - Wednesday, September 23, 2009

Ryan,

Developers aren't pushing graphics anymore. It's not economical, PC game support is slowing down, everything is console now, which is DX9. What purpose does this ATI serve with DX11 and all this other technology that games won't even make use of 2 years from now?

Waste of money..

ClownPuncher - Wednesday, September 23, 2009

Clearly he should stop reviewing computer technology like this because people like you are content with gaming on their Wii and iPhone.

This message has been brought to you by Sarcasm.

Griswold - Wednesday, September 23, 2009

So you're echoing what nvidia recently said, when they claimed dx11/gaming on the PC isn't all that (anymore)? I guess nvidia can close shop (at least the gaming relevant part of it) now and focus on GPGPU. Why wait for GT300 as a gamer?

Oh right, it's gonna be blasting past the 5xxx and suddenly dx11 will be the holy grail again... I see how it is.

SiliconDoc - Wednesday, September 23, 2009

rofl- It's great to see red roosters not crowing and hopping around flapping their wings and screaming nvidia is going down.

Don't take any of this personal except the compliments, you're doing a fine job.

It's nice to see you doing my usual job, albeit from the other side, so allow me to compliment your fine perceptions. Sweltering smart.

But, now, let's not forget how ambient occlusion got poo-pooed here and shading in the game was said to be "an irritant" when Nvidia cards rendered it with just driver changes for the hardware. lol

Then of course we heard endless crowing about "tessellation" for ati.

Now it's what, SSAA (rebirthed), and Eyefinity, and we'll hear how great it is for some time to come. Let's not forget the endless screeching about how terrible and useless PhysX is by Nvidia, but boy when "open standards" finally gets "Havok and Ati" cranking away, wow the sky is the limit for in game destruction and water movement and shooting and bouncing, and on and on....

Of course it was "Nvidia's fault" that "open havok" didn't happen.

I'm wondering if 30" top resolution will now be "all there is!" for the next month or two until Nvidia comes out with their next generation - because that was quite a trick switching from top rez 30" DOWN to 1920x when Nvidia put out their 2560x GTX275 driver and it whomped Ati's card at 30" 2560x, but switched places at 1920x, which was then of course "the winning rez" since Ati was stuck there.

I could go on but you're probably fuming already and will just make an insult back so let the spam posting IZ2000 or whatever its name will be this time handle it.

BTW there's a load of bias in the article and I'll be glad to point it out in another post, but the reason the red rooster rooting is not going beyond any sane notion of "truthful" or even truthiness, is because this 5870 Ati card is already perceived as "EPIC FAIL"!

I cannot imagine this is all Ati has, and if it is they are in deep trouble I believe.

I suspect some further releases with more power soon.

Finally - Wednesday, September 23, 2009

Team Green - full foam ahead!

*hands over towel*

There you go. Keep on foaming, I'm all amused :)

araczynski - Wednesday, September 23, 2009

is DirectX11 going to be as worthless as 10? in terms of being used in any meaningful way in a meaningful amount of games?

my 2 4850's are still keeping me very happy in my 'ancient' E8500.

curious to see how this compares to whatever nvidia rolls out, probably more of the same, better in some, worse in others, bottom line will be the price.... maybe in a year or two i'll build a new system.

of course by that time these'll be worthless too.

SiliconDoc - Wednesday, September 23, 2009

Well it's certainly going to be less useful than PhysX, which is here said to be worthless, but of course DX11 won't get that kind of dissing, at least not for the next two months or so, before NVidia joins in.

Since there's only 1 game "kinda ready" with DX11, I suppose all the hype and heady talk will have to wait until... until... uhh.. the 5870's are actually available and not just listed on the egg and tiger.

Here's something else in the article I found so very heartwarming:

---

" Wrapping things up, one of the last GPGPU projects AMD presented at their press event was a GPU implementation of Bullet Physics, an open source physics simulation library. Although they’ll never admit it, AMD is probably getting tired of being beaten over the head by NVIDIA and PhysX; Bullet Physics is AMD’s proof that they can do physics too. "

---

Unfortunately for this place, one of my friends pointed me to this little exposé that shows ATI uses NVIDIA CARDS to develop "Bullet Physics" - ROFLMAO

-

" We have seen a presentation where Nvidia claims that Mr. Erwin Coumans, the creator of Bullet Physics Engine, said that he developed Bullet physics on Geforce cards. The bad thing for ATI is that they are betting on this open standard physics tech as the one that they want to accelerate on their GPUs.

"ATI’s Bullet GPU acceleration via Open CL will work with any compliant drivers, we use NVIDIA Geforce cards for our development and even use code from their OpenCL SDK, they are a great technology partner. “ said Erwin.

This means that Bullet physics is being developed on Nvidia Geforce cards even though ATI is supposed to get driver and hardware acceleration for Bullet Physics."

---

rofl - hahahahahha now that takes the cake!

http://www.fudzilla.com/content/view/15642/34/

--

Boy do we "hate PhysX" as ati fans, but then again... why not use the nvidia PhysX card to whip up some B Physics, folks I couldn't make this stuff up.