Reflexes and Input Generation

Human Reaction Time

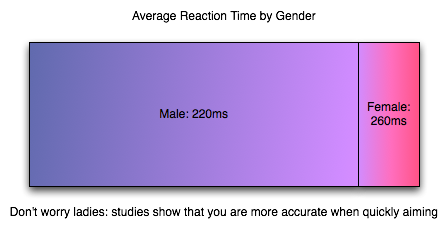

The impact of input lag is compounded by what goes on before we even react. As soon as an image requiring a response hits your eyes, it will take somewhere between 150ms and 300ms to translate that into action. Average human response time to visual stimulus is about 200ms (0.2 seconds) for young adults, which is a long time compared to how quickly games can respond to input. But with this built-in handicap, when fast response to what's happening on screen is required, it is helpful to claim every advantage possible (especially for relative geezers like us).

Human response time is mitigated by the fact that we are also capable of learning, anticipation and extrapolation. In "practicing," a.k.a. playing a game, we can learn to predict future frames from current state for very small time slices to compensate for our response time. Our previous responses to input and the results that followed can also factor in to our future responses. This is part of the learning curve, especially for FPS games. When input lag is below a reasonable threshold, we are able to compensate without issue (and, in fact, do not perceive the input lag at all).

The larger input lag gets, the harder it gets to do something like aim at a moving target. Our expectation of the effect our input should have is different from what we see. This gets into something that combines reaction time and proprioception (reception of self produced stimulus). I'm not a psychologist, but I would love to see some studies done on how much input lag people can compensate for, where it starts to be uncomfortable (where it just "feels" wrong) and when it becomes an obviously visible phenomenon. In digging around the net, I've seen a few game developers conjecture that the threshold is about 100 milliseconds, but I haven't found any actual data on the subject. At the same time, 100 milliseconds (or maybe something like 1/2 reaction time?) seems a pretty reasonable hypothesis to me.

The Input Pipeline

As it is key in most games, we'll examine the case of the mouse when it comes to input. As soon as a mouse is moved, we have a delay: the mouse must first detect the movement. Sorting out how responsive a mouse is these days is incredibly clouded by horrendous terminology. As understanding how a mouse works is important in grokking its impact on input lag, we'll dissect Logitech's specs and try to get some good information on exactly what's going on.

There are four key numbers in the reported specifications of Logitech mice we'll look at: megapixels/second, maximum speed, DPI, and reports/second. For the Logitech G9x high-end gaming mouse, these are 9 MP/s, 150 inches/second, 5000 DPI, and 1000 reports/second. Other gaming and good-quality mice can do 500 to 1000 reports/second and have lower DPI and MP/s stats.

The first stat, megapixels/second, is important in how fast the mouse sensor itself can collect movement data. Optical and laser mice detect movement by taking pictures of the surface they are on and comparing the difference in images many times every second. To really understand how fast the mouse takes pictures (and thus how fast it can detect and calculate movement in units called "counts"), we would need to know how many pixels per frame the image is. Our guess is that it can't be larger than 17x17 based on its maximum speed rating (though it might be more like 12x12 if it needs to generate two frames for every count rather than reusing frames from the previous calculation). It'd be great if they listed this data anywhere, but we are left guessing based on other stats at this point.

Next up is DPI, or dots per inch: 5000 for the G9x. DPI is sort of a misrepresentation, as the real specification should be in CPI (counts per inch). As it is, the number can be considered maximum DPI if each count moves the cursor one pixel (or dot). Under MS Windows, with no ballistics applied at the default pointer speed, DPI = CPI. Decreasing pointer speed means moving one dot for more than one count, and increasing pointer speed means moving more than one dot for every count. Of course with ballistics, talking about DPI as related to the mouse doesn't make any sense: moving the mouse faster or slower changes the number of dots moved per count dynamically. Because of this, we'll talk about CPI for accuracy's sake, and consider that mouse manufacturers intend to use the terms interchangeably (despite the fact that they are not interchangeable).

CPI is the number of steps the mouse can count within one inch; 1 / CPI inches is the smallest distance the mouse is able to measure as a movement. The full benefit of a high definition mouse is realized when one count is less than or equal to one "dot," which is possible in games (with sensitivity sliders) and in Windows if you decrease your mouse speed (though going to something with an odd cadence could cause problems).

Thus, when you tell your 5000 "DPI" mouse to run at 200 "DPI", it would be nice if it still reported 5000 CPI and allowed the driver to handle scaling the data down (or performing ballistics on raw data). For this example, we would only move the cursor one dot (one unit on the screen) every 25 counts. But the easy way out is to maintain a 1:1 ratio of counts to dots and drop the actual counts per inch down to 200. This provides no accuracy advantage (though with a fixed sensor speed it does increase maximum velocity and acceleration tolerance). And again, it would be helpful if mouse makers would actually tell us what they are doing.
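To make the "one dot every 25 counts" case concrete, here is a minimal sketch of how a driver might scale raw counts down to dots while carrying leftover counts from one report to the next. This is our own illustration, not anything Logitech or Microsoft documents: the function name `counts_to_dots` and its parameters are hypothetical, and it handles a single axis with no ballistics.

```python
def counts_to_dots(counts_per_report, sensor_cpi=5000, target_dpi=200):
    """Scale raw counts (one axis) to cursor dots, carrying leftovers forward.

    Hypothetical driver-side scaling: with a 5000 CPI sensor and a 200 "DPI"
    setting, 5000 / 200 = 25 counts are needed to move the cursor one dot.
    """
    counts_per_dot = sensor_cpi // target_dpi
    leftover = 0
    dots = []
    for counts in counts_per_report:
        leftover += counts
        # Truncate toward zero so left and right movement behave the same way.
        moved = int(leftover / counts_per_dot)
        leftover -= moved * counts_per_dot
        dots.append(moved)
    return dots


# Five reports carrying 75 counts in total end up moving the cursor 3 dots.
print(counts_to_dots([10, 10, 10, 30, 15]))
```

Carrying the remainder is the point of the sketch: no counts are thrown away, so slow movements still add up to cursor motion eventually, which is where the accuracy advantage of keeping the sensor at its full CPI would come from.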

Since the Logitech G9x can do 150 inches/second maximum movement speed at 200 CPI, we know how many counts it must generate per second (though Logitech doesn't make it clear that the maximum speed and acceleration can only happen at the lowest CPI, it only makes sense with the math). The reported specifications indicate that the G9x can do about 30000 counts per second (150 inches in one second at 200 counts per inch). This is consistent with a 9 megapixel/second speed in that such a sensor could collect about 30000 17x17 frames every second based on this data.
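The arithmetic behind these guesses is easy to check. The sketch below uses only the published G9x numbers quoted above; the assumption that the sensor needs either one or two fresh frames per count is ours, as is the helper name `max_frame_side`.

```python
import math

MAX_SPEED_IPS = 150                # inches/second, from the G9x spec
MIN_CPI = 200                      # counts/inch at which max speed is assumed to apply
SENSOR_PIXELS_PER_S = 9_000_000    # 9 megapixels/second


def max_frame_side(pixels_per_second, frames_per_second):
    """Largest square frame (pixels per side) the sensor could read at this rate."""
    pixels_per_frame = pixels_per_second // frames_per_second
    return math.isqrt(pixels_per_frame)


counts_per_second = MAX_SPEED_IPS * MIN_CPI

# Assumption: one new frame per count (the previous frame is reused).
side_one_frame = max_frame_side(SENSOR_PIXELS_PER_S, counts_per_second)

# Assumption: two new frames per count (nothing is reused).
side_two_frames = max_frame_side(SENSOR_PIXELS_PER_S, 2 * counts_per_second)

print(counts_per_second, side_one_frame, side_two_frames)
```

With these inputs the sensor has a budget of 300 pixels per count, which is where the 17x17 upper bound comes from, and 150 pixels per frame if two frames are needed, which gives 12x12.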

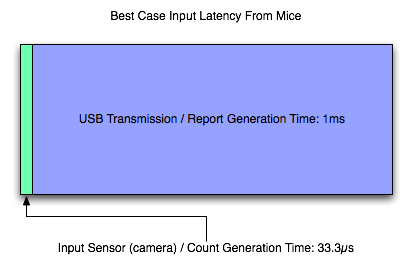

After looking at all that, we can say that our Logitech G9x mouse is capable of detecting movement of between 1/5000th and 1/200th of an inch (depending on the selected CPI) about every 33.3 microseconds (a microsecond is 1/1000th of a millisecond) after the movement happens. That's pretty freaking fast. Other mice can be much slower, but even cutting the speed in half won't hugely affect latency (though it will affect the maximum speed at which the mouse can be moved without problem).

Once the mouse has generated a count (or several) we need to send that data to the computer over USB. Counts are aggregated into groups called reports. USB is limited to 1000 Hz polling, so the 1000 reports/second maximum of the G9x makes sense: USB limits the transmission rate here. For those interested, to actually achieve 150 inches per second at 200 CPI, the mouse would need to be able to send about 30 counts per report at 1000 reports per second. This seems reasonable, but it'd be great if someone with USB engineering experience could give us some feedback and let us know for sure.
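As a quick check on the counts-per-report figure, the sketch below divides the count rate by the report rate. The function name `counts_per_report` is our own, and it assumes the mouse packs every count generated since the last report into the next one.

```python
import math


def counts_per_report(speed_ips, cpi, reports_per_second):
    """Counts each report must carry to keep up with the sensor (rounded up)."""
    counts_per_second = speed_ips * cpi
    return math.ceil(counts_per_second / reports_per_second)


# G9x at full speed (150 inches/second at 200 CPI) with 1000 reports/second.
print(counts_per_report(150, 200, 1000))

# The same movement on a mouse that only sends 500 reports/second.
print(counts_per_report(150, 200, 500))
```

Halving the report rate doubles the number of counts each report has to carry, so a slower report rate costs latency but not necessarily tracking, as long as the report format has room for the larger values.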

So, let's say that we've moved our mouse about a couple dozen microseconds before a report is sent. In this case, we've actually got to wait the whole millisecond for that data to be sent to the PC (because the count can't be generated fast enough to be included in the current report). So despite the very fast sensor in the mouse, we are transmission bound and our first "large" delay is on the order of single digit milliseconds. Other mice (like the Logitech G5 I'm using right now) may generate 500 reports per second, while the slowest speed we can expect is 127 reports/second. This can mean a 1ms - 8ms delay in input getting from the motion of the mouse to the computer.

Most gamers use halfway decent mice these days, so we can expect that latency is more like 2ms to 4ms for most wired USB mouse users and 1ms for gamers with higher end mice. This delay can't be cut down to anything less than 1ms until USB 2.0 is replaced by something faster. We'll ignore any cable (or any other wire) delay, as this will only add something on the order of nanoseconds to transmission time.
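The report-rate delays above follow directly from the reporting interval. Here is a minimal sketch, assuming a count can land at any point within the interval with equal likelihood, so the average wait is half the worst case; that assumption and the helper name `report_wait_ms` are ours.

```python
def report_wait_ms(reports_per_second):
    """Return (worst-case, average) wait in ms before a count is sent to the PC."""
    interval_ms = 1000.0 / reports_per_second
    # Worst case: the count just misses a report and waits a full interval.
    # Average: assumes counts arrive uniformly within the interval.
    return interval_ms, interval_ms / 2


for rate in (1000, 500, 127):
    worst, average = report_wait_ms(rate)
    print(f"{rate:>4} reports/s: worst {worst:.2f} ms, average {average:.2f} ms")
```

The worst case is the number that matters for the "first large delay" described above: 1ms at 1000 reports/second, 2ms at 500, and just under 8ms at 127.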

The input lag from a good mouse, on its own, is not perceptible to humans, but remember that this is all part of a larger picture. And now it's on to the software.

85 Comments

Zolcos - Thursday, July 16, 2009 - link

The article is logically inconsistent. On page 1 it states "input lag is defined as the delay between the when a user does something with an input device and when that action is reflected on the monitor" and on page two it has "Input lag starts from before we even react".

DerekWilson - Thursday, July 16, 2009 - link

i'll fix that..."The impact of input lag is compounded by what goes on before we even react."

yacoub - Thursday, July 16, 2009 - link

The input lag everyone's most concerned with is the amount the display adds, because while all the rest is consistent, displays add a variable amount depending on which one you get. The ones that add more than ~20 ms add a NOTICEABLE amount (for most people) which takes input lag to the point that it becomes frustrating.

DerekWilson - Thursday, July 16, 2009 - link

Part of the point was to explain that there is a lot at the end of the chain that can significantly impact performance and it's all about the display.

If we do consider a 100ms threshold as valid, then based on our numbers from TF2 it is clear that we would end up in the >100ms input lag range with a monitor that adds more than 20ms of lag.

And if we can't expect a twitch shooter to come in under the mark, how is everything else going to do? Not well I would imagine.

I did think about looking at a wide array of monitors, but I feel like that might be better suited to a more focused review of monitor performance rather than an exploration of input lag in general.

yacoub - Thursday, July 16, 2009 - link

Sure but for whatever reason, all of the lag prior to the display's lag is essentially transparent because it doesn't add up to be enough to be perceptible. This would equate to your threshold.

When using a display with little or no noticeable display lag, any FPS game will feel very responsive and without discernible latency (assuming your GPU hardware is up to the task of rendering the frames quickly enough and you're not using one of the early optical mice from a decade ago that had terrible tracking refresh rates, etc etc).

Yet simply switching to a display with higher latency is enough to make input latency noticeable and frustrating for FPS gamers. So the key issue is finding a TN or IPS display since those panel technologies have the least input lag. Of course most panels out there are -VA based panels because they are cheaper to produce than IPS, and TN may be snappy in display response but they have a number of other downsides.

What matters most is getting panel makers focused on IPS-based displays (or new panel technologies that significantly reduce the input lag most non-TN displays presently suffer). And hey, the more they produce and sell, the lower the production cost per unit so the better the pricing can be and the more opportunities for improved technology to be added to the IPS design.

ocyl - Friday, July 17, 2009 - link

@ yacoub

Did you read the article at all?

yacoub - Friday, July 17, 2009 - link

Yes. I must not be explaining myself well, so forget it.

DDuckMan - Saturday, December 18, 2010 - link

While this article was great, I'm still not sure if I am better off disabling SLI to eliminate the synchronization lag or having the higher framerates with SLI enabled in twitch games. It seems to me that with 120Hz monitors, vsync (which I need for 3D) and SLI lag would not be as important as keeping the framerate above the monitor refresh rate. I don't have the equipment to properly test, so I am looking forward to the next article.

http://hardforum.com/showthread.php?t=1569281

burner1980 - Thursday, March 10, 2011 - link

Quote: "Input lag with multiGPU systems is something we will want to explore at a later time."

I'm still waiting patiently and looking forward to a follow-up investigation. The topic of input lag is VERY important to gamers who play FPS. I do notice it in racing games, too.

I suggest using true 120Hz monitors in the follow-up article. They of course won't reduce input lag, but they help to reduce screen tearing, which lets you optimize your settings to reduce input lag while keeping screen tearing at a low enough level.

I'm also curious whether using a 3-screen setup a la Eyefinity or Vision Surround with two GPUs will have an impact.

dmnwlv - Thursday, April 28, 2011 - link

Impressive report.

Regarding mouse polling rate (I may have missed it out):

1) I believe the actual mouse input into the CPU is already calculated and the end result (of that action) already registered before you get to see it on screen. It does not wait for the GPU/monitor to finish processing before determining the end result. Hence the influence of mouse response is even more substantial if we take out the whole chunk of lag times that were included in the total lag calculation here - Derek Wilson, pls correct me if I am wrong.

Coupled with the predictive ability of human (also reported here) to react accordingly in advance from the existing state of game situation, it seems to match and explain why it is hard to imagine a few milliseconds of difference in mouse lag can have an impact to the overall gaming experience. The brain and reaction is (trying its best) interpolating and working in tandem with the CPU than the monitor.

2) And another scenario where the user already intended to do a series of / continuous / extended action (eg, drawing a long curve line), does the response rate of the mouse play a part in drawing the most accurate curve that the person input/intended? - Maybe Derek can help on this as well.

Thanks for the great report.