What are Double Buffering, vsync and Triple Buffering?

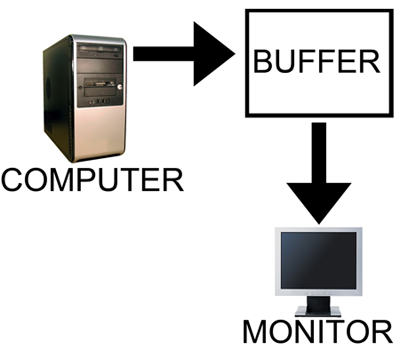

When a computer needs to display something on a monitor, it draws a picture of what the screen is supposed to look like and sends this picture (which we will call a buffer) out to the monitor. In the old days there was only one buffer and it was continually being both drawn to and sent to the monitor. There are some advantages to this approach, but there are also very large drawbacks. Most notably, when objects on the display were updated, they would often flicker.

The computer draws in as the contents are sent out.

All illustrations courtesy Laura Wilson.

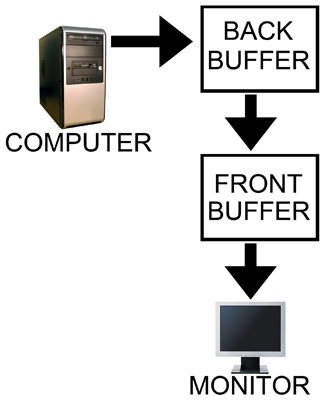

In order to combat the issues with reading from while drawing to the same buffer, double buffering, at a minimum, is employed. The idea behind double buffering is that the computer only draws to one buffer (called the "back" buffer) and sends the other buffer (called the "front" buffer) to the screen. After the computer finishes drawing the back buffer, the program doing the drawing does something called a buffer "swap." This swap doesn't move anything: swap only changes the names of the two buffers: the front buffer becomes the back buffer and the back buffer becomes the front buffer.

Computer draws to the back, monitor is sent the front.

After a buffer swap, the software can start drawing to the new back buffer and the computer sends the new front buffer to the monitor until the next buffer swap happens. And all is well. Well, almost all anyway.

In this form of double buffering, a swap can happen anytime. That means that while the computer is sending data to the monitor, the swap can occur. When this happens, the rest of the screen is drawn according to what the new front buffer contains. If the new front buffer is different enough from the old front buffer, a visual artifact known as "tearing" can be seen. This type of problem can be seen often in high framerate FPS games when whipping around a corner as fast as possible. Because of the quick motion, every frame is very different, when a swap happens during drawing the discrepancy is large and can be distracting.

The most common approach to combat tearing is to wait to swap buffers until the monitor is ready for another image. The monitor is ready after it has fully drawn what was sent to it and the next vertical refresh cycle is about to start. Synchronizing buffer swaps with the Vertical refresh is called vsync.

While enabling vsync does fix tearing, it also sets the internal framerate of the game to, at most, the refresh rate of the monitor (typically 60Hz for most LCD panels). This can hurt performance even if the game doesn't run at 60 frames per second as there will still be artificial delays added to effect synchronization. Performance can be cut nearly in half cases where every frame takes just a little longer than 16.67 ms (1/60th of a second). In such a case, frame rate would drop to 30 FPS despite the fact that the game should run at just under 60 FPS. The elimination of tearing and consistency of framerate, however, do contribute to an added smoothness that double buffering without vsync just can't deliver.

Input lag also becomes more of an issue with vsync enabled. This is because the artificial delay introduced increases the difference between when something actually happened (when the frame was drawn) and when it gets displayed on screen. Input lag always exists (it is impossible to instantaneously draw what is currently happening to the screen), but the trick is to minimize it.

Our options with double buffering are a choice between possible visual problems like tearing without vsync and an artificial delay that can negatively effect both performance and can increase input lag with vsync enabled. But not to worry, there is an option that combines the best of both worlds with no sacrifice in quality or actual performance. That option is triple buffering.

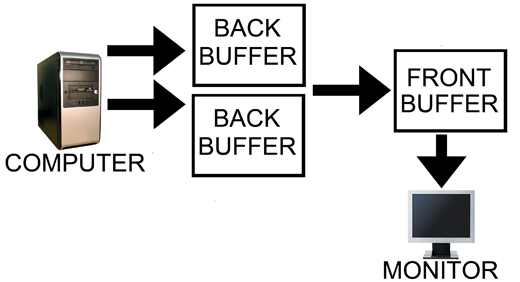

Computer has two back buffers to bounce between while the monitor is sent the front buffer.

The name gives a lot away: triple buffering uses three buffers instead of two. This additional buffer gives the computer enough space to keep a buffer locked while it is being sent to the monitor (to avoid tearing) while also not preventing the software from drawing as fast as it possibly can (even with one locked buffer there are still two that the software can bounce back and forth between). The software draws back and forth between the two back buffers and (at best) once every refresh the front buffer is swapped for the back buffer containing the most recently completed fully rendered frame. This does take up some extra space in memory on the graphics card (about 15 to 25MB), but with modern graphics card dropping at least 512MB on board this extra space is no longer a real issue.

In other words, with triple buffering we get the same high actual performance and similar decreased input lag of a vsync disabled setup while achieving the visual quality and smoothness of leaving vsync enabled.

Now, it is important to note, that when you look at the "frame rate" of a triple buffered game, you will not see the actual "performance." This is because frame counters like FRAPS only count the number of times the front buffer (the one currently being sent to the monitor) is swapped out. In double buffering, this happens with every frame even if the next frames done after the monitor is finished receiving and drawing the current frame (meaning that it might not be displayed at all if another frame is completed before the next refresh). With triple buffering, front buffer swaps only happen at most once per vsync.

The software is still drawing the entire time behind the scenes on the two back buffers when triple buffering. This means that when the front buffer swap happens, unlike with double buffering and vsync, we don't have artificial delay. And unlike with double buffering without vsync, once we start sending a fully rendered frame to the monitor, we don't switch to another frame in the middle.

This last point does bring to bear the one issue with triple buffering. A frame that completes just a tiny bit after the refresh, when double buffering without vsync, will tear near the top and the rest of the frame would carry a bit less lag for most of that refresh than triple buffering which would have to finish drawing the frame it had already started. Even in this case, though, at least part of the frame will be the exact same between the double buffered and triple buffered output and the delay won't be significant, nor will it have any carryover impact on future frames like enabling vsync on double buffering does. And even if you count this as an advantage of double buffering without vsync, the advantage only appears below a potential tear.

Let's help bring the idea home with an example comparison of rendering using each of these three methods.

184 Comments

View All Comments

Scalarscience - Saturday, June 27, 2009 - link

...I'm posting this as a reply but please use the reply button on the previous post unless you're really wanting to reply to this particular post...The next thing that I see COMPLETELY neglected in this article and most of the comments--outside of 1 or 2 mentions of higher framerates increasing your movement rate--is that many games will use the engine's currently processing frame for collision & 'hitscan' decisions. My experience/understanding is that this is in addition to the input latency issues covered above, and in fact is MORE important to me than just worrying about visual tearing.

Hitscan is a term I learned more from the original UT game, I know there was a term CS players used back in the 1.x days too but it slips my mind (and probably more terms now). Basically it applies to projectiles who have 'infinite' velocity, ie, hit their targets immediately when calculated. Hitscan isn't the only type of calculation used for in-game weapons by any means, but the it's the one who (imo) is most affect by latency & discontinuities in the gameplay (caused by bad prediction or perhaps a stalling render thread) so it's the one that is most discussed. My impression is that the hitscan issues apply to other collisions & weapon hits as well, modified by how their weapons work.

As FPS players are not only concerned with 'what they see' but also what their 'shots' and 'movements' are translating into (especially in competitive online fps games) this is one of the main reasons that players used to force vsync off even if they could get their CRT to do 100hz or higher. Older games do have things directly tied to the render thread (physics, movement speed etc) but as time has gone on that's been reduced and methods of 'prediction' have been added, which means that the discussions get complicated over time. Also some games will use client side detection for certain things (instead of correlating things from the server's perspective to make the final 'decision') which can reduce apparent latency for hitscan style shots

Konstantineb - Saturday, June 27, 2009 - link

Would like to know if anandtech is considering an Arma 2 review with some GPUs performance data.The game is realy good looking, and it deserves a review from anandtech....StarRide - Friday, June 26, 2009 - link

Since WoW's triple buffering actually says it might cause input lag, I'm beginning to wonder if WoW's triple buffering implementation is actually just 3 frame render ahead...profoundWHALE - Monday, January 19, 2015 - link

"I'm beginning to wonder if WoW's triple buffering implementation is actually just 3 frame render ahead... "Congradulations, you just accurately described a triple-buffer as a "3 frame render ahead", which it is. It's really not that difficult a concept.

We apply the same concept to a youtube video. We buffer ahead so we get a smooth, steady frame-rate, although in the case of video streaming, we are buffering many, many frames.

This means that if you have terribly low fps, you'll see a bigger hit to latency with triple-buffering than someone with 100 fps.

30 fps = 33.3 ms per frame, meaning that you can end up with latency of about 100 ms with triple buffering

120 fps = 8.33 ms per frame, meaning that you can end up with latency of about 25 ms with triple buffering

Vsync will continue rendering as usual, but will simple make sure that only an exact multiple is displayed, so if your average fps is about 45 fps and you have a 60 Hz refresh rate, you'll get a steady output of 30 fps. The downside is you'll probably get an effect similar to the 3:2 pulldown where some frames will last as short of a time as 25 ms, and as long as 33 ms.

This is why things like Gsync and Freesync are awesome, because we can just throw vsync and buffering out the window and instead of trying to sync the input the the output, we sync the output to the input.

profoundWHALE - Monday, January 19, 2015 - link

I should clarify that the real world lag from triple buffer isn't as bad as the numbers I put out there, but because you have vsync, you will have greater input lag so it still gives a good idea of what latencies you could be looking at.MamiyaOtaru - Friday, June 26, 2009 - link

Triple buffering is obv. better than double, but I'd still not use it.In the final set of comparison shots, two thirds of the screen on the double buff without vsync example is newer than the corresponding screen in the triple buffering example. This means tears, yes, but that's newer info you aren't getting until later with triple buffering.

With double, a mouse movement that starts halfway between screen refreshes can (if the framerate is high enough) show up as soon as a new frame is rendered and swapped to the front to be read by the monitor. Granted, it will only show up on some bottom portion of the screen, with older info above, but in triple buffering that movement will not show up at all until the monitor finishes the current refresh and starts another.

In the horse example posted, the max time between input and reaction on screen is 3 times greater with triple buffering than with double w/o vsync, even if with the latter that reaction is only shown on the bottom 2/3rds or 1/3rd of the screen. It's *there* and it isn't until later with triple buffering.

But it's sure as hell better than double buff with vsync :) And visually better than double buff w/o vsync. A nice compromise. But let's not pretend there are precisely 0 drawbacks. If you'd prefer faster reaction time and can accept tearing to get it, you'll not want triple buffering, as that shuts out any new info until the monitor has drawn a completed, now out of date frame.

It's similar to interlaced vs progressive. Progressive looks nicer, no artifacts. But given the same bits per second, interlaced will have a higher framerate as it isn't drawing the whole frame each time. With double buffering you get bits of several frames rendered onto the screen at once. Artifacts, but you're getting more up to date info *even if that more up to date info isn't on the whole screen*.

justniz - Friday, June 26, 2009 - link

This article makes it sound like triple-buffering is always best, which is just not true.If you play games that require fast reactions and you have powerful enough hardware that you dont get tearing on double-buffering then DONT USE TRIPLE BUFFERING.

Why?

Because triple buffering adds an extra frame's worth of delay before you see the picture. Its only maybe 1/30 to 1/60th of a second extra, but in twitch/frag games removing that delay may be enough to give you the edge.

DerekWilson - Friday, June 26, 2009 - link

triple buffering does not add an extra frame of delay.triple buffering is always the best option, even for twitch shooters where a bad tear can look like something that's actually there and distract the gamer.

having powerful hardware does not reduce tearing. in fact, at higher framerates there is always a higher chance of tearing than at lower frame rates (at 300 FPS tears will happen every frame, whereas at 40 FPS, tears cannot possibly happen every frame -- the lower the frame rate, the less likely or often tearing occurs).

you still get the reduce lag "edge" that is apparent in double buffering without vsync, but you get the most recently fully completed frame of animation rather than a composite of multiple frames starting with the one that triple buffering would have given you in full.

MamiyaOtaru - Friday, June 26, 2009 - link

ou get the most recently fully completed frame of animation rather than a composite of multiple frames starting with the one that triple buffering would have given you in full.starting with, but ending with newer stuff, which is exactly the point. Triple buffering is offering the most up to date feedback on your input only up until the point a tear would happen in double buffering.

DerekWilson - Friday, June 26, 2009 - link

i did note that this is the only potential advantage of double buffering without vsync, but that in order to actually benefit from it, it needs to be a visible artifact (the frames need to be different enough to cause a visible tear) and you only get the benefit in the percentage of the frame that comes after the tear.part of the double buffered frame is the same as the triple buffered frame ... if you need information from this part of the screen (if you're looking there) you still won't get the benefit and the peripheral distraction of tearing can ... well ... distract.

some people may see this as a real advantage, but i certainly think the downside certainly outweighs any potential upside.