What are Double Buffering, vsync and Triple Buffering?

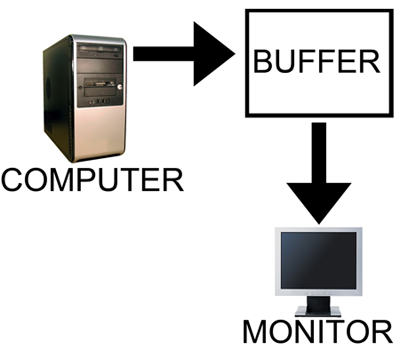

When a computer needs to display something on a monitor, it draws a picture of what the screen is supposed to look like and sends this picture (which we will call a buffer) out to the monitor. In the old days there was only one buffer and it was continually being both drawn to and sent to the monitor. There are some advantages to this approach, but there are also very large drawbacks. Most notably, when objects on the display were updated, they would often flicker.

The computer draws in as the contents are sent out.

All illustrations courtesy Laura Wilson.

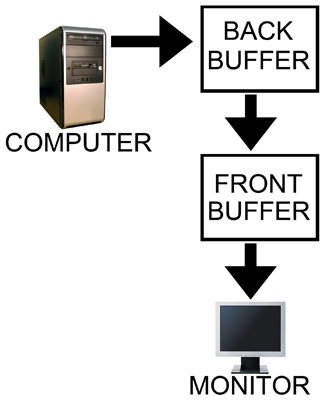

To avoid the problems caused by reading from a buffer while it is being drawn to, double buffering, at a minimum, is employed. The idea behind double buffering is that the computer only draws to one buffer (called the "back" buffer) and sends the other buffer (called the "front" buffer) to the screen. After the computer finishes drawing the back buffer, the program doing the drawing does something called a buffer "swap." This swap doesn't move anything; it only changes the names of the two buffers: the front buffer becomes the back buffer and the back buffer becomes the front buffer.

Computer draws to the back, monitor is sent the front.

After a buffer swap, the software can start drawing to the new back buffer and the computer sends the new front buffer to the monitor until the next buffer swap happens. And all is well. Well, almost all anyway.
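To make the "swap only changes the names" point concrete, here is a minimal sketch in Python. The class name `DoubleBuffer` and its attributes are hypothetical, chosen only for illustration; real graphics APIs handle this inside the driver. The swap flips an index and copies no pixel data.

```python
class DoubleBuffer:
    """Two pixel buffers; a swap exchanges their roles without copying pixels."""

    def __init__(self, width, height):
        # One byte per pixel keeps the illustration small (an assumption).
        self.buffers = [bytearray(width * height), bytearray(width * height)]
        self.front_index = 0  # index of the buffer being sent to the monitor

    @property
    def front(self):
        return self.buffers[self.front_index]

    @property
    def back(self):
        return self.buffers[1 - self.front_index]

    def swap(self):
        # Only the index changes; no pixel data is moved.
        self.front_index = 1 - self.front_index


db = DoubleBuffer(4, 2)
db.back[0] = 255       # draw one pixel into the back buffer
drawn = db.back        # remember which buffer object was drawn to
db.swap()              # the drawn buffer is now the front buffer
```

After the swap, `db.front` is the very same object that was drawn to, and `db.back` is the old front buffer, ready to be overwritten with the next frame.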

In this form of double buffering, a swap can happen anytime. That means that while the computer is sending data to the monitor, the swap can occur. When this happens, the rest of the screen is drawn according to what the new front buffer contains. If the new front buffer is different enough from the old front buffer, a visual artifact known as "tearing" can be seen. This type of problem can be seen often in high framerate FPS games when whipping around a corner as fast as possible. Because of the quick motion, every frame is very different, so when a swap happens during drawing the discrepancy is large and can be distracting.

The most common approach to combat tearing is to wait to swap buffers until the monitor is ready for another image. The monitor is ready after it has fully drawn what was sent to it and the next vertical refresh cycle is about to start. Synchronizing buffer swaps with the vertical refresh is called vsync.

While enabling vsync does fix tearing, it also sets the internal framerate of the game to, at most, the refresh rate of the monitor (typically 60Hz for most LCD panels). This can hurt performance even if the game doesn't run at 60 frames per second as there will still be artificial delays added to effect synchronization. Performance can be cut nearly in half in cases where every frame takes just a little longer than 16.67 ms (1/60th of a second). In such a case, frame rate would drop to 30 FPS despite the fact that the game should run at just under 60 FPS. The elimination of tearing and consistency of framerate, however, do contribute to an added smoothness that double buffering without vsync just can't deliver.
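The drop from just under 60 FPS to 30 FPS is simple arithmetic, sketched below. The function name `vsync_fps` is hypothetical, and the model assumes that every frame takes the same time to render and that the renderer stalls until the swap at the next refresh. Under those assumptions, each frame occupies a whole number of refresh intervals, rounded up.

```python
import math


def vsync_fps(render_ms, refresh_hz=60.0):
    """Displayed frame rate with double buffering and vsync (simplified model)."""
    refresh_ms = 1000.0 / refresh_hz
    # A finished frame waits for the next refresh, so it costs a whole
    # number of refresh intervals, never a fraction of one.
    intervals = max(1, math.ceil(render_ms / refresh_ms))
    return refresh_hz / intervals


for render_ms in (16.0, 17.0, 34.0):
    print(render_ms, "ms per frame ->", vsync_fps(render_ms), "FPS")
```

In this model a 16 ms frame still fits in one refresh, while a 17 ms frame misses it by a fraction of a millisecond and has to wait for the second refresh, which halves the displayed rate.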

Input lag also becomes more of an issue with vsync enabled. This is because the artificial delay introduced increases the difference between when something actually happened (when the frame was drawn) and when it gets displayed on screen. Input lag always exists (it is impossible to instantaneously draw what is currently happening to the screen), but the trick is to minimize it.

Our options with double buffering are a choice between possible visual problems like tearing without vsync and an artificial delay that can hurt performance and increase input lag with vsync enabled. But not to worry, there is an option that combines the best of both worlds with no sacrifice in quality or actual performance. That option is triple buffering.

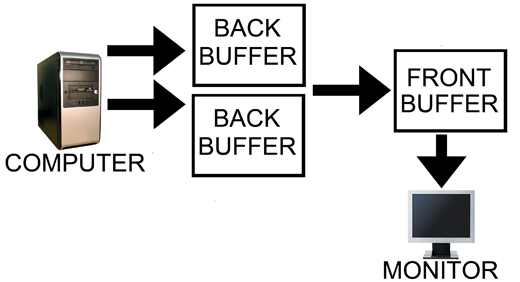

Computer has two back buffers to bounce between while the monitor is sent the front buffer.

The name gives a lot away: triple buffering uses three buffers instead of two. This additional buffer gives the computer enough space to keep a buffer locked while it is being sent to the monitor (to avoid tearing) while also not preventing the software from drawing as fast as it possibly can (even with one locked buffer there are still two that the software can bounce back and forth between). The software draws back and forth between the two back buffers and (at best) once every refresh the front buffer is swapped for the back buffer containing the most recently completed fully rendered frame. This does take up some extra space in memory on the graphics card (about 15 to 25MB), but with modern graphics cards shipping with at least 512MB on board this extra space is no longer a real issue.
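The bookkeeping described above can be sketched as a small state machine. This is an illustrative model only: `TripleBuffer`, `finish_frame` and `vertical_refresh` are hypothetical names, and each buffer simply stores the number of the frame it holds rather than pixels. The front buffer is never a draw target, and each refresh promotes the most recently completed back buffer.

```python
class TripleBuffer:
    """Three buffers: one locked front buffer and two back buffers."""

    def __init__(self):
        self.contents = [None, None, None]  # frame number held by each buffer
        self.front = 0      # index of the buffer being sent to the monitor
        self.newest = None  # back buffer holding the latest complete frame

    def _draw_target(self):
        # Draw into a buffer that is neither on screen nor the newest frame.
        return next(i for i in range(3) if i not in (self.front, self.newest))

    def finish_frame(self, frame_number):
        target = self._draw_target()
        self.contents[target] = frame_number
        self.newest = target  # an older undisplayed frame may be overwritten later

    def vertical_refresh(self):
        # At most one front-buffer swap per refresh, and only to a complete frame.
        if self.newest is not None:
            self.front = self.newest
            self.newest = None
        return self.contents[self.front]


tb = TripleBuffer()
for frame in (1, 2, 3):          # three frames finish before the first refresh
    tb.finish_frame(frame)
shown = tb.vertical_refresh()    # the most recently completed frame is shown
```

In this sketch, frames 1 and 2 are never displayed: the renderer kept bouncing between the two back buffers, and only the latest complete frame was promoted at the refresh.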

In other words, with triple buffering we get the same high actual performance and similar decreased input lag of a vsync disabled setup while achieving the visual quality and smoothness of leaving vsync enabled.

Now, it is important to note that when you look at the "frame rate" of a triple buffered game, you will not see the actual "performance." This is because frame counters like FRAPS only count the number of times the front buffer (the one currently being sent to the monitor) is swapped out. In double buffering, this happens with every frame even if the next frame is done after the monitor is finished receiving and drawing the current frame (meaning that it might not be displayed at all if another frame is completed before the next refresh). With triple buffering, front buffer swaps only happen at most once per vsync.

The software is still drawing the entire time behind the scenes on the two back buffers when triple buffering. This means that when the front buffer swap happens, unlike with double buffering and vsync, we don't have artificial delay. And unlike with double buffering without vsync, once we start sending a fully rendered frame to the monitor, we don't switch to another frame in the middle.
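The difference described above can be illustrated with a small timing simulation. This is a sketch under stated assumptions, not a measurement: every frame takes the same time to render, times are integer microseconds, and the function names are hypothetical. With double buffering and vsync the renderer stalls until the swap; with triple buffering it renders back to back and each refresh shows the latest completed frame.

```python
def frames_shown_double_vsync(render_us, period_us, refreshes):
    """Frame number on screen at each refresh, double buffering with vsync."""
    shown = []
    current = None   # frame currently on screen
    next_frame = 1   # frame being rendered into the back buffer
    start = 0        # time at which that frame started rendering
    for n in range(1, refreshes + 1):
        t = n * period_us
        if start + render_us <= t:
            # Back buffer is complete: swap now, then start the next frame.
            current = next_frame
            next_frame += 1
            start = t  # the renderer was stalled until this swap
        shown.append(current)
    return shown


def frames_shown_triple(render_us, period_us, refreshes):
    """Frame number on screen at each refresh, triple buffering."""
    shown = []
    for n in range(1, refreshes + 1):
        # The renderer never stalls, so frame k completes at k * render_us.
        completed = (n * period_us) // render_us
        shown.append(completed if completed > 0 else None)
    return shown


PERIOD_US = 16_667  # one refresh at roughly 60 Hz
RENDER_US = 17_000  # each frame takes slightly longer than one refresh
double = frames_shown_double_vsync(RENDER_US, PERIOD_US, 60)
triple = frames_shown_triple(RENDER_US, PERIOD_US, 60)
print(len(set(double) - {None}), "distinct frames, double buffering with vsync")
print(len(set(triple) - {None}), "distinct frames, triple buffering")
```

With these numbers, the vsync-limited double-buffered case puts a new frame on screen only every other refresh, while the triple-buffered case shows a new frame on nearly every refresh.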

This last point does bring up the one issue with triple buffering. With double buffering and no vsync, a frame that completes just a tiny bit after the refresh will tear near the top, and the rest of the screen will carry a bit less lag for most of that refresh than it would with triple buffering, which has to finish drawing the frame it had already started. Even in this case, though, at least part of the frame will be exactly the same between the double buffered and triple buffered output and the delay won't be significant, nor will it have any carryover impact on future frames like enabling vsync on double buffering does. And even if you count this as an advantage of double buffering without vsync, the advantage only appears below a potential tear.

Let's help bring the idea home with an example comparison of rendering using each of these three methods.

184 Comments

SirLamer - Friday, June 26, 2009 - link

It's just because nVidia hasn't designed their control panel to be super invasive. The only way to make it work is to have a program sitting there that intercepts calls from DirectX and changes them.

Rather than blaming AMD and nVidia, it's probably better to ask why DirectX doesn't include a mechanism to control this performance parameter like it does for most other driver-configurable settings.

Download Riva Tuner, and from the zip file install D3D Overider. It will sit on your taskbar and do the job. I have used this program in the past, but I forgot to put it back since my last reformat and will now do it tonight. Thanks for the reminder article!

GourdFreeMan - Friday, June 26, 2009 - link

Hmm... it seems you are correct.

How bizarre! I can understand the usefulness in keeping previous frames for post-processing effects, but you would only be reading from the frames and writing to the new frame, never writing to old ones. Why doesn't this "just work" under the control panel for DirectX like it does for OpenGL?

smn198 - Saturday, June 27, 2009 - link

I suppose we have monitor refresh rates as a legacy from CRT technology. Is there any reason (other than comparability) why we can't have an LCD that refreshes ad-hoc, when both it and the next frame are ready? No more just missing a refresh.

Alternatively could LCD lie about its refresh rate and have some sort of buffer internally to achieve the same thing - reducing lag?

GourdFreeMan - Sunday, June 28, 2009 - link

All LCDs (both passive and active matrix) still refresh the screen periodically to prevent individual pixel elements from fading, so there is still a notion of refresh rate for LCDs. You do raise a good question of whether it would be possible to refresh the screen whenever frames are completed (which would have to be in addition to this base refresh rate, or you would get a flickering in brightness).

Having an input buffer to reduce perceived display lag would result in torn frames if you tried to swap in the new frame mid-refresh. You still have to wait until the refresh is completed.

erple2 - Tuesday, June 30, 2009 - link

That doesn't make sense from the perspective of how an LCD works. The charge that twists the polarizing LCD element doesn't fade over time (well, not over the few milliseconds between updates - though the charge probably fades over the years as they wear out).

The pixel elements don't generate any light themselves. How do they fade then?

I think that you're confusing 2 things here. The refresh rate of the LCD is tied to the output signal - they're both set to run at 60 Hz, so the video card outputs a "new frame" (even if the frame hasn't changed, it's a new frame) every 1/60th of a second. The LCD then reads that signal every 1/60th of a second and displays it. Part of the reason they chose 60 Hz is due to the bandwidth limitation of the set standard. To update more frequently than that, you'll clearly need the capability of transmitting more data down the interface. Right now, the DVI interface can transmit up to 3.96 Gigabits of info per second. At 24 bits per pixel, and a 1920x1200 resolution, that's 55,296,000 bits per image. Given the hard cap of 3.96 Gbps, that's 3,960,000,000 / 55,296,000, which is about 71 Hz. That's the fastest a single link DVI interface can refresh at that resolution. I believe it was therefore decided to cap the refresh rate at 60 Hz for any WUXGA resolutions. But, that's out of convenience, not for any reason related to fading pixels (unlike a CRT).

LCD's don't flicker per se, as there's no light that's turning on and off. The backlight is more or less constantly on.

overzealot - Wednesday, July 1, 2009 - link

As the pixels untwist (no power applied, or power applied in reverse) they transition back to blocking light. You could, theoretically, call the process fading, as that's what it would appear to do.

The backlights in most LCD's run off AC, you can't say they're always on. Best you can say is that because the frequency is much higher than 50/60hz you can't see the flickering. It's still there.

There are faster than 60hz panels, it's just that the electronics are more costly - and the majority of people don't care.

I do care, but not enough to pay the extra cost of a 120hz panel.

I'd rather have a larger panel.

DerekWilson - Wednesday, July 1, 2009 - link

it is my understanding that pixel state on an LCD panel is driven by a steady voltage applied across the liquid crystal cell (aside from possible overdrive on the upswing to speed up transitions). because they are digital, until the controller changes the state of that pixel, it can remain at a constant percentage of twist because there is a constant voltage applied. no refresh is "required" and the bandwidth issue is what drives "refresh rate" on LCD panels.

many LCDs do use CCFL for backlight which can have a slight flicker for a very very short time period every time current alternates polarity, but it isn't really ever "off" as they are driven both ways (there are no dedicated anodes and cathodes - they switch with current).

But as we move toward LED backlighting (or away from CCFL and toward other technologies which are DC) then we won't have any flicker at all there either.

GourdFreeMan - Monday, August 31, 2009 - link

This "steady" voltage (only true of active-matrix LCDs) isn't maintained directly by the LCD's power supply. For TFTs there are one or more capacitors gated by a transistor for controlling their voltage per pixel element (R,G,B) that maintains the state. These capacitors slowly discharge and must be refreshed periodically. In this sense all LCDs have a "base refresh rate".

If you do a Google search for LCD controllers integrated into consumer products you will find there is an issue with perceived flickering in brightness as the pixel elements fade if you do not refresh them often enough.

I was asked if it was possible to refresh the screen only when frames are completed, and this was the first issue I discovered when researching the question. Other than increased power usage and added controller complexity I do not know if there would be other issues if you tried to do a second "just in time" refresh and left my reply to the original question at that.

RSmith - Thursday, April 8, 2010 - link

Hey GourdFree Man,

I got here thinking exactly the same thing as you: why do we need a fixed refresh rate on LCD's?

Did you get any answers to that?

I hope that future display technologies will allow this to happen, it would certainly be of huge benefit to gaming if frames were drawn as they were rendered.

homerdog - Sunday, June 28, 2009 - link

I can set my LCD to 75Hz, which AFAIK is a lie.