What are Double Buffering, vsync and Triple Buffering?

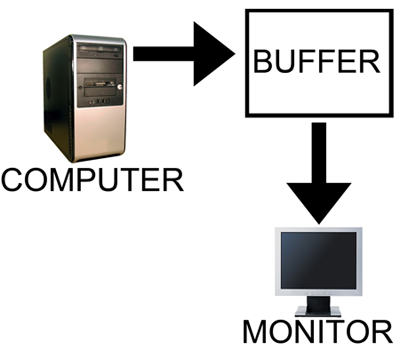

When a computer needs to display something on a monitor, it draws a picture of what the screen is supposed to look like and sends this picture (which we will call a buffer) out to the monitor. In the old days there was only one buffer and it was continually being both drawn to and sent to the monitor. There are some advantages to this approach, but there are also very large drawbacks. Most notably, when objects on the display were updated, they would often flicker.

The computer draws in as the contents are sent out.

All illustrations courtesy Laura Wilson.

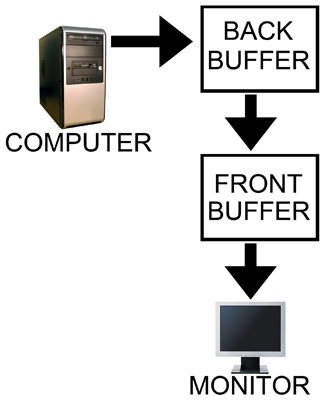

To combat the issues that come from reading from and drawing to the same buffer at once, double buffering, at a minimum, is employed. The idea behind double buffering is that the computer only draws to one buffer (called the "back" buffer) and sends the other buffer (called the "front" buffer) to the screen. After the computer finishes drawing the back buffer, the program doing the drawing does something called a buffer "swap." This swap doesn't move anything; it only changes the names of the two buffers: the front buffer becomes the back buffer and the back buffer becomes the front buffer.

Computer draws to the back, monitor is sent the front.
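The swap described above can be sketched in a few lines of Python. This is only an illustration, not how any particular driver or API implements it: the `DoubleBuffer` class, its flat-list pixel layout, and the method names are all hypothetical. The point it shows is that swapping exchanges roles rather than copying pixels.

```python
class DoubleBuffer:
    """Two fixed buffers; swap() only exchanges which one is front/back."""

    def __init__(self, width, height):
        # Hypothetical layout: each buffer is a flat list of pixel values.
        self._buffers = [[0] * (width * height), [0] * (width * height)]
        self._front = 0  # index of the buffer being sent to the monitor

    @property
    def front(self):
        return self._buffers[self._front]

    @property
    def back(self):
        return self._buffers[1 - self._front]

    def swap(self):
        # No pixel data is copied: only the roles are exchanged.
        self._front = 1 - self._front


db = DoubleBuffer(4, 2)
db.back[:] = [255] * 8       # draw the next frame into the back buffer
old_back = db.back
db.swap()
print(db.front is old_back)  # True: the same memory is now the front buffer
```

Because `swap()` only flips an index, its cost does not depend on the size of the buffers.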

After a buffer swap, the software can start drawing to the new back buffer and the computer sends the new front buffer to the monitor until the next buffer swap happens. And all is well. Well, almost all anyway.

In this form of double buffering, a swap can happen anytime. That means the swap can occur while the computer is sending data to the monitor. When this happens, the rest of the screen is drawn according to what the new front buffer contains. If the new front buffer is different enough from the old front buffer, a visual artifact known as "tearing" can be seen. This type of problem can often be seen in high framerate FPS games when whipping around a corner as fast as possible. Because of the quick motion, every frame is very different, so when a swap happens during drawing the discrepancy is large and can be distracting.
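A tear can be modeled as a mid-scan switch between two frames. The sketch below is a simplification under stated assumptions: a frame is a list of rows, the monitor receives rows top to bottom, and the hypothetical `scan_out` function and its `swap_row` parameter stand in for the moment the swap lands.

```python
def scan_out(old_frame, new_frame, swap_row):
    """Return the rows the monitor receives if the swap lands at swap_row.

    Rows above swap_row were already sent from the old front buffer; the
    remaining rows come from the new front buffer.
    """
    if len(old_frame) != len(new_frame):
        raise ValueError("frames must have the same number of rows")
    return old_frame[:swap_row] + new_frame[swap_row:]


old = ["A"] * 6   # six rows of the previous frame
new = ["B"] * 6   # six rows of the newly completed frame
displayed = scan_out(old, new, swap_row=2)
print(displayed)  # ['A', 'A', 'B', 'B', 'B', 'B']
```

The boundary between the `A` rows and the `B` rows is the tear line. The more the two frames differ, the more visible that line is.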

The most common approach to combat tearing is to wait to swap buffers until the monitor is ready for another image. The monitor is ready after it has fully drawn what was sent to it and the next vertical refresh cycle is about to start. Synchronizing buffer swaps with the vertical refresh is called vsync.

While enabling vsync does fix tearing, it also sets the internal framerate of the game to, at most, the refresh rate of the monitor (typically 60Hz for most LCD panels). This can hurt performance even if the game doesn't run at 60 frames per second, as there will still be artificial delays added to effect synchronization. Performance can be cut nearly in half in cases where every frame takes just a little longer than 16.67 ms (1/60th of a second). In such a case, frame rate would drop to 30 FPS despite the fact that the game should run at just under 60 FPS. The elimination of tearing and consistency of framerate, however, do contribute to an added smoothness that double buffering without vsync just can't deliver.
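The drop from just under 60 FPS to 30 FPS follows from simple arithmetic. The sketch below assumes an idealized case: every frame takes the same time to render, and the game cannot start the next frame until the swap at a refresh. The function name `vsync_double_buffered_fps` is made up for this illustration.

```python
import math


def vsync_double_buffered_fps(frame_time_ms, refresh_hz=60.0):
    """Displayed frame rate with double buffering and vsync.

    Assumes a constant render time and that the next frame cannot start
    until the swap, so each frame occupies a whole number of refreshes.
    """
    refresh_interval_ms = 1000.0 / refresh_hz
    refreshes_per_frame = max(1, math.ceil(frame_time_ms / refresh_interval_ms))
    return refresh_hz / refreshes_per_frame


for frame_time in (10.0, 17.0, 34.0):
    print(frame_time, vsync_double_buffered_fps(frame_time))
# 10.0 60.0
# 17.0 30.0
# 34.0 20.0
```

A 17 ms frame misses the 16.67 ms deadline by a fraction of a millisecond, so it waits for the second refresh and the game runs at 30 FPS rather than roughly 59 FPS.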

Input lag also becomes more of an issue with vsync enabled. This is because the artificial delay introduced increases the difference between when something actually happened (when the frame was drawn) and when it gets displayed on screen. Input lag always exists (it is impossible to instantaneously draw what is currently happening to the screen), but the trick is to minimize it.

Our options with double buffering are a choice between possible visual problems like tearing without vsync, and an artificial delay that can negatively affect performance and increase input lag with vsync enabled. But not to worry, there is an option that combines the best of both worlds with no sacrifice in quality or actual performance. That option is triple buffering.

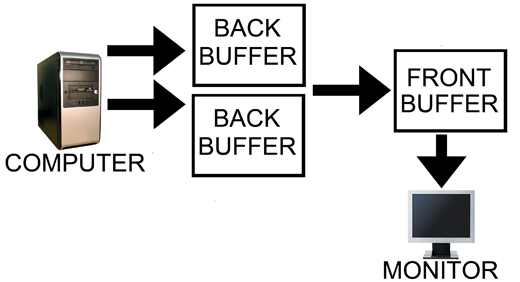

Computer has two back buffers to bounce between while the monitor is sent the front buffer.

The name gives a lot away: triple buffering uses three buffers instead of two. This additional buffer gives the computer enough space to keep a buffer locked while it is being sent to the monitor (to avoid tearing) while also not preventing the software from drawing as fast as it possibly can (even with one locked buffer there are still two that the software can bounce back and forth between). The software draws back and forth between the two back buffers and (at best) once every refresh the front buffer is swapped for the back buffer containing the most recently completed fully rendered frame. This does take up some extra space in memory on the graphics card (about 15 to 25MB), but with modern graphics cards carrying at least 512MB on board this extra space is no longer a real issue.
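The bookkeeping described above can be sketched as a small state machine. This is a minimal model, not any real graphics API: the `TripleBuffer` class, its fields, and the use of frame numbers in place of pixel data are all assumptions made for illustration.

```python
class TripleBuffer:
    """One locked front buffer plus two back buffers the renderer alternates."""

    def __init__(self):
        self.frames = [None, None, None]  # frame number stored in each buffer
        self.front = 0      # buffer being sent to the monitor (locked)
        self.drawing = 1    # back buffer currently being rendered into
        self.ready = None   # back buffer holding the newest finished frame

    def finish_frame(self, frame_id):
        """Renderer finished a frame; continue at once in the other back buffer."""
        self.frames[self.drawing] = frame_id
        spare = 3 - self.front - self.drawing  # index of the third buffer
        self.ready, self.drawing = self.drawing, spare

    def vertical_refresh(self):
        """At a refresh, show the newest completed frame if there is one."""
        if self.ready is not None:
            self.front, self.ready = self.ready, None
        return self.frames[self.front]


tb = TripleBuffer()
tb.finish_frame(1)
tb.finish_frame(2)            # frame 1 is overwritten later and never shown
print(tb.vertical_refresh())  # 2
tb.finish_frame(3)
print(tb.vertical_refresh())  # 3
print(tb.vertical_refresh())  # 3 (no new frame, so the front buffer repeats)
```

Note that `finish_frame` never waits: the renderer always has a free back buffer. The front buffer changes only inside `vertical_refresh`, so a frame is never replaced partway through being sent.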

In other words, with triple buffering we get the same high actual performance and similar decreased input lag of a vsync disabled setup while achieving the visual quality and smoothness of leaving vsync enabled.

Now, it is important to note that when you look at the "frame rate" of a triple buffered game, you will not see the actual "performance." This is because frame counters like FRAPS only count the number of times the front buffer (the one currently being sent to the monitor) is swapped out. In double buffering, this happens with every frame, even if the next frame is done after the monitor has finished receiving and drawing the current frame (meaning that it might not be displayed at all if another frame is completed before the next refresh). With triple buffering, front buffer swaps happen at most once per vsync.

The software is still drawing the entire time behind the scenes on the two back buffers when triple buffering. This means that when the front buffer swap happens, unlike with double buffering and vsync, we don't have artificial delay. And unlike with double buffering without vsync, once we start sending a fully rendered frame to the monitor, we don't switch to another frame in the middle.

This last point does bring up the one issue with triple buffering. When double buffering without vsync, a frame that completes just a tiny bit after the refresh will tear near the top, and the rest of the screen will carry a bit less lag for most of that refresh than with triple buffering, which has to finish drawing the frame it had already started. Even in this case, though, at least part of the frame will be exactly the same between the double buffered and triple buffered output, and the delay won't be significant, nor will it have any carryover impact on future frames like enabling vsync on double buffering does. And even if you count this as an advantage of double buffering without vsync, the advantage only appears below a potential tear.

Let's help bring the idea home with an example comparison of rendering using each of these three methods.

184 Comments


DerekWilson - Friday, June 26, 2009 - link

Really, the argument against including the option is more complex ... In the past, that extra memory required might not have been worth it -- using that memory on a 128MB card could actually degrade performance because of the additional resource usage. we've only recently gotten beyond this as a reasonable limitation.

Also, double buffering is often seen as "good enough" and triple buffering doesn't add any additional eye candy. triple buffering is at best only as performant as double buffering. enabling vsync already eliminates tearing. neither of these options requires any extra work and triple buffering (at least under directx) does.

Developers typically try to spend time on the things that they determine will be most desired by their customers or that will add the largest impact. Some developers have taken the time to start implementing triple buffering.

but the "drawback" is development time... part of the goal here is to convince developers that it's worth the development time investment.

Frumious1 - Friday, June 26, 2009 - link

...this sounds fine for games running at over 60FPS - and in fact I don't think there's really that much difference between double and triple buffering in that case; 60FPS is smooth and would be great. The thing is, what happens if the frame rate is just under 60 FPS? It seems to me that you'll still get some benefit - i.e. you'd see the 58 FPS - but there's a delay of one frame between when the scene is rendered and when it appears on the display. You neglected to spell out that the maximum "input latency" is entirely dependent on frame rate... though it will never be more than 17ms I don't think.

I'm not one to state that input lag is a huge issue, provided it stays below around 20ms. I've used some LCDs that have definite lag (Samsung 245T - around 40ms I've read), and it is absolutely distracting even in normal windows use. Add another 17ms for triple buffering... well, I suppose the difference between 40ms and 57ms isn't all that huge, but neither is desirable.

GourdFreeMan - Friday, June 26, 2009 - link

Derek has already mentioned that the additional delay is at most one screen refresh, not exactly one refresh, but let me add two more points. First, the additional delay will be dependent on the refresh rate of your monitor. If you have a 60 Hz LCD then, yes, it will be ~16.6ms. If you have a 120 Hz LCD the additional delay would be at most ~8.3ms. Second, if you are running without vsync, the screen you see will be split into two regions -- one region is the newly rendered frame, the other will be the previous frame that will be the same age as the entire frame you would be getting with triple buffering. Running without vsync only reduces your latency if what you are focusing on is in the former. Also, we should probably call this display lag, not input lag, as the rate at which input is polled isn't necessarily related to screen refresh (it is for some games like Oblivion and Hitman: Blood Money, however).

DerekWilson - Friday, June 26, 2009 - link

you are right that maximum input latency is very dependent on framerate, but I believe I mentioned that maximum input latency with triple buffering is never more than 16.67ms, while with double buffering and vsync it could potentially climb to an additional 16.67ms due to the fact that the game has to wait to start rendering the next frame. If a frame completes just after a refresh, the game must artificially wait until after the next refresh to start drawing again giving something like an upper limit of input lag as (frametime + 33.3ms). With triple buffering, input lag is no longer than double buffering without vsync /for at least part of the frame/ ... This is never going to be longer than (frametime + 16.7ms) in either case.

triple buffering done correctly does not add more input lag than double buffering in the general case (even when frametime > 17ms) unless/until you have a tear in the double buffered case. and there again, if the frames are similar enough that you don't see a tear, then there was little need for an update half way through a frame anyway.

i tried to keep the article as simple as i could, and getting into every situation of where frames finish rendering, how long frames take, and all that can get very messy ... but in the general case, triple buffering still has the advantages.

DerekWilson - Friday, June 26, 2009 - link

sorry, i meant input lag /due/ to triple buffering is never more than 16.67ms ... but the average case is shorter than this. total input lag can be longer than this because frame data is based on input when the frame began rendering so when framerate is less than 60FPS, frametime is already more than 16.67ms ... at 30 FPS, frametime is 33.3ms.

Edirol - Friday, June 26, 2009 - link

The wiki article on the subject mentions that it depends on the implementation of triple buffering. Can frames be dropped or not? Also there may be limitations to using triple buffering in SLI setups.

DerekWilson - Friday, June 26, 2009 - link

i'm not a fan of wikipedia's article on the subject ... they refer to the DX 3 frame render ahead as a form of "triple buffering" ... I disagree with the application of the term in this case. sure, it's got three things that are buffers, but the implication in the term triple buffering (just like in the term double buffering) when applied to displaying graphics on a monitor is more specific than that.

just because something has two buffers to do something doesn't mean it uses "double buffering" in the sense that it is meant when talking about drawing to back buffers and swapping to front buffers for display.

In fact, any game has a lot more than two or three buffers that it uses in its rendering process.

The DX 3 frame render ahead can actually be combined with double and triple buffering techniques when things are actually being displayed.

I get that the wikipedia article is trying to be more "generally" correct in that something that uses three buffers to do anything is "triple buffered" in a sense ... but I submit that the term has a more specific meaning in graphics that has specifically to do with page flipping and how it is handled.

StarRide - Friday, June 26, 2009 - link

Very Informative. WoW is one of those games with inbuilt triple buffering, and the ingame tooltip to the triple buffering option says "may cause slight input lag", which is the reason why I haven't used triple buffering so far. But by this article, this is clearly false, so I will be turning triple buffering on from now on, thanks.

Bull Dog - Friday, June 26, 2009 - link

Now, how do we enable it? And when we enable it, how do we make sure we are getting triple buffering and not double buffering? ATI has an option in the CCC to enable triple buffering for OpenGL. What about D3D?

gwolfman - Friday, June 26, 2009 - link

What about nVidia? Do we have to go to the game profile to change this?