Real-world virtualization benchmarking: the best server CPUs compared

by Johan De Gelas on May 21, 2009 3:00 AM EST. Posted in IT Computing

Heavy Virtualization Benchmarking

All tests were run on ESX 3.5 Update 4 (Build 153875), which supports AMD's RVI. It also supports the Intel Xeon X55xx (Nehalem) but does not yet support EPT.

Getting one score out of a virtualized machine is not straightforward: you cannot add up URL/s, transactions per second, and queries per second. If virtualized system A turns out twice as many web responses but fails to deliver half of the transactions machine B delivers, which one is faster? Luckily for us, Intel (vConsolidate) and VMware (VMmark) have already solved this problem, and we use a very similar approach. First, we test each application on its native operating system with four physical cores. Those four physical cores belong to one Opteron Shanghai 8389 2.9GHz. This becomes our reference score.

Opteron Shanghai 8389 2.9GHz Reference System

| Test | Reference score |
|---|---|
| OLAP - Nieuws.be | 175.3 queries/s |
| Web portal - MCS | 45.8 URL/s |
| OLTP - Calling Circle | 155.3 transactions/s |

We then divide the score of each VM by the corresponding "native" score. For the first VM, for example, we divide the number of queries per second in the OLAP VM by the number of queries per second that one Opteron 8389 2.9GHz achieves when it is running the Nieuws.be OLAP database.

Performance Relative to Reference System

| Server System Processors | OLAP VM | Web portal VM 2 | Web portal VM 3 | OLTP VM |
|---|---|---|---|---|
| Dual Xeon X5570 2.93 | 94% | 50% | 51% | 59% |
| Dual Xeon X5570 2.93 HT off | 92% | 43% | 43% | 43% |
| Dual Xeon E5450 3.0 | 82% | 36% | 36% | 45% |
| Dual Xeon X5365 3.0 | 79% | 35% | 35% | 32% |
| Dual Xeon L5350 1.86 | 54% | 24% | 24% | 20% |
| Dual Xeon 5080 3.73 | 47% | 12% | 12% | 7% |
| Dual Opteron 8389 2.9 | 85% | 39% | 39% | 51% |
| Dual Opteron 2222 3.0 | 50% | 17% | 17% | 12% |

For example, the OLAP VM on the dual Opteron 8389 scored 85% of what the same application achieves when running on one Opteron 8389. As you can see, each web portal VM reaches only 39% of the performance of a native machine. This does not mean that the hypervisor is inefficient, however. Don't forget that we gave each of the four VMs four virtual CPUs and that we have only eight physical cores. If the cores were perfectly isolated and there were no hypervisor, we would expect each VM to get two physical cores, or about 50% of our reference system. What you see is that the OLAP VM and the OLTP VM "steal" a bit of performance away from the web portal VMs.
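The 50% expectation can be reproduced with a little arithmetic. The Python sketch below is illustrative only: the function name `fair_share` and the assumption that performance scales linearly with the number of cores a VM receives are ours and are not part of the benchmark methodology. The observed percentages are the dual Opteron 8389 row of the table.

```python
def fair_share(physical_cores: int, num_vms: int, reference_cores: int) -> float:
    """Ideal fraction of the reference score per VM, assuming the physical
    cores are split evenly and performance scales linearly with core count
    (a simplifying assumption, not a claim from the benchmark itself)."""
    cores_per_vm = physical_cores / num_vms
    return cores_per_vm / reference_cores


# Dual Opteron 8389: 8 physical cores, 4 VMs, reference measured on 4 cores.
expected = fair_share(physical_cores=8, num_vms=4, reference_cores=4)

# Relative scores of the dual Opteron 8389, copied from the table.
observed = {
    "OLAP VM": 0.85,
    "Web portal VM 2": 0.39,
    "Web portal VM 3": 0.39,
    "OLTP VM": 0.51,
}

for vm, score in observed.items():
    delta = score - expected
    print(f"{vm}: {score:.0%} observed vs {expected:.0%} expected ({delta:+.0%})")
```

A positive difference marks a VM that gets more than its even share of the machine, and a negative one marks a VM that gets less.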

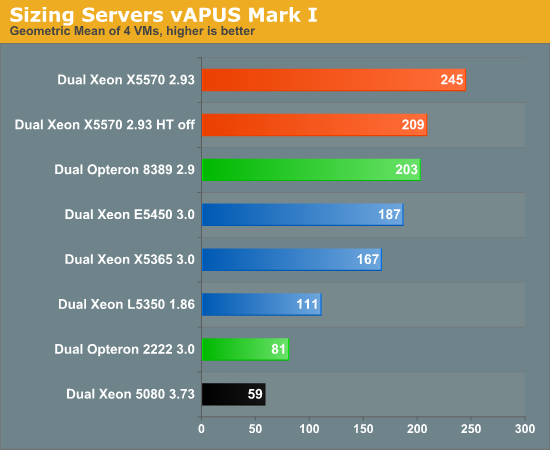

Of course, the above table is not very user-friendly. To calculate one vApus Mark I score per physical server we take the geometric mean of all those percentages, and as we want to understand how much work the machine has done, we multiply it by 4. There is a reason why we take the geometric mean and not the arithmetic mean. The geometric mean penalizes systems that score well on one VM and very badly on another VM. Peaks and lows are not as desirable as a good steady increase in performance over all virtual machines, and the geometric mean expresses this. Let's look at the results.
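As a minimal sketch of that calculation, the Python snippet below takes the geometric mean of the four relative scores and multiplies it by 4, as described. The function name `vapus_mark` is our own, the per-VM percentages come from the table, and the "balanced" and "lopsided" systems are hypothetical inputs chosen only to show how the geometric mean penalizes uneven results.

```python
import math


def vapus_mark(relative_scores, multiplier=4):
    """Geometric mean of the per-VM relative scores, times the multiplier."""
    product = math.prod(relative_scores)
    return multiplier * product ** (1 / len(relative_scores))


# Per-VM scores relative to the reference system, copied from the table.
systems = {
    "Dual Xeon X5570 2.93": [0.94, 0.50, 0.51, 0.59],
    "Dual Xeon E5450 3.0": [0.82, 0.36, 0.36, 0.45],
    "Dual Opteron 8389 2.9": [0.85, 0.39, 0.39, 0.51],
}

for name, scores in systems.items():
    print(f"{name}: {vapus_mark(scores):.2f}")

# Hypothetical systems with the same arithmetic mean (0.5) but a very
# different spread across the four VMs.
balanced = [0.5, 0.5, 0.5, 0.5]
lopsided = [0.9, 0.1, 0.9, 0.1]
print(f"balanced: {vapus_mark(balanced):.2f}, lopsided: {vapus_mark(lopsided):.2f}")
```

Both hypothetical systems would look identical under an arithmetic mean, but the lopsided one scores clearly lower under the geometric mean. Dividing the computed score of one server by that of another gives the relative speedups, which should land close to the percentages quoted in the VMmark vs. vApus Mark I summary.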

After seeing so many VMmark scores, the result of vApus Mark I really surprised us. The Nehalem-based Xeons are still the fastest servers, but they do not crush the competition as we have witnessed in VMmark and vConsolidate. Just to refresh your memory, here's a quick comparison:

VMmark vs. vApus Mark I Summary

| Comparison | VMmark | vApus Mark I |
|---|---|---|
| Xeon X5570 2.93 vs. Xeon 5450 3.0 | 133-184% faster (*) | 31% faster |
| Xeon X5570 2.93 vs. Opteron 8389 2.9 | +/- 100% faster (*)(**) | 21% faster |
| Opteron 8389 2.9 vs. Xeon 5450 3.0 | +/- 42% | 9% faster |

(*) Xeon X5570 results are measured on ESX 4.0; the others are on ESX 3.5.

(**) Xeon X5570 best score is 108% faster than Opteron at 2.7GHz. We have extrapolated the 2.7GHz scores to get the 2.9GHz ones.

Our first virtualization benchmark disagrees strongly with the perception that the large OEMs and recent press releases have created with the VMmark scores: "Xeon 54xx and anything older are hopelessly outdated virtualization platforms, and the Xeon X55xx makes any other virtualization platform, including the latest Opteron 'Shanghai', look silly." That is the impression you get when you quickly glance over the VMmark scores.

However, vApus Mark I tells you that you should not pull your older Xeons and newer Opterons out of your rack just yet if you are planning to continue to run your VMs on ESX 3.5. This does not mean that either vApus Mark I or VMmark is wrong: they are very different benchmarks, and vApus Mark I was run exclusively on ESX 3.5 Update 4 while some of the VMmark scores were obtained on vSphere 4.0. What it does show us is how important it is to have a second data point and a second independent "opinion". That said, the results are still weird. In vApus Mark I, Nehalem is no longer the ultimate, far superior virtualization platform; at the same time, the Shanghai Opteron does not run any circles around the Xeon 54xx. There is so much to discuss that a few lines will not do the job. Let's break things up a bit more.

66 Comments


Bandoleer - Thursday, May 21, 2009

I have been running VMware Virtual Infrastructure for 2 years now. While this article can be useful for someone looking for hardware upgrades or scaling of a virtual system, CPU and memory are hardly the bottlenecks in the real world. I'm sure there are some organizations that want to run 100+ VMs on "one" physical machine with 2 physical processors, but what are they really running?

The fact is, if you want VM flexibility, you need central storage of all your VMDKs that is accessible by all hosts. That is where you find your bottlenecks, in the storage arena. FC or iSCSI, where are those benchmarks? Where's the TOE vs QLogic HBA? Considering 2 years ago, there was no QLogic HBA for blade servers, nor does VMware support TOE.

However, it does appear I'll be able to do my own baseline/benching once vSphere, i.e. VI4, materializes to see if it's even worth sticking with VMware or making the move to HyperV which already supports Jumbo, TOE iSCSI with 600% increased iSCSI performance on the exact same hardware.

But it would really be nice to see central storage benchmarks, considering that is the single most expensive investment of a virtual system.

duploxxx - Friday, May 22, 2009

perhaps before you would even consider to move from Vmware to HyperV check first in reality what huge functionality you will loose in stead of some small gains in HyperV.

ESX 3.5 does support Jumbo and iSCSI offload adapters, and I have no idea how you are going to gain 600% if iSCSI is only about 15% slower than FC when you have a decent network and a dedicated iSCSI box?

Bandoleer - Friday, May 22, 2009

"perhaps before you would even consider to move from Vmware to HyperV check first in reality what huge functionality you will loose in stead of some small gains in HyperV."

What you are calling functionality here are the same features that will not work in ESX 4.0 in order to gain direct hardware access for performance.

Bandoleer - Friday, May 22, 2009

The reality is I lost around 500MBps storage throughput when I moved from Direct Attached Storage. Not because of our new central storage, but because of the limitations of the driver-less Linux iSCSI capability or the lack thereof. Yes!! In ESX 3.5 VMware added Jumbo frame support as well as flow control support for iSCSI!! It was GREAT, except for the part that you can't run JUMBO frames + flow control, you have to pick one, flow control or JUMBO.

I said 2 years ago there was no such thing as iSCSI HBAs for blade servers. And that ESX does not support the TOE feature of Multifunction adapters (because that "functionality" requires a driver).

Functionality you lose by moving to HyperV? In my case, I call them useless features, which are second to performance and functionality.

JohanAnandtech - Friday, May 22, 2009

I fully agree that in many cases the bottleneck is your shared storage. However, the article's title indicated "Server CPU", so it was clear from the start that this article would discuss CPU performance.

"move to HyperV which already supports Jumbo, TOE iSCSI with 600% increased iSCSI performance on the exact same hardware."

Can you back that up with a link to somewhere? Because the 600% sounds like an MS Advertisement :-).

Bandoleer - Friday, May 22, 2009

My statement is based on my own experience and findings. I can send you my benchmark comparisons if you wish.

I wasn't ranting at the article, its great for what it is, which is what the title represents. I was responding to this part of the article that accidentally came out as a rant because i'm so passionate about virtualization.

"What about ESX 4.0? What about the hypervisors of Xen/Citrix and Microsoft? What will happen once we test with 8 or 12 VMs? The tests are running while I am writing this. We'll be back with more. Until then, we look forward to reading your constructive criticism and feedback."

Sorry, i meant to be more constructive haha...

JohanAnandtech - Sunday, May 24, 2009

"My statement is based on my own experience and findings. I can send you my benchmark comparisons if you wish."

Yes, please do. Very interested in reading what you found.

"I wasn't ranting at the article, its great for what it is, which is what the title represents."

Thx, no problem... Just understand that these things take time and the cooperation of the large vendors. And getting the right $5000 storage hardware in the lab is much harder than getting a $250 videocard. About 20 times harder :-).

Bandoleer - Sunday, May 24, 2009

I haven't looked recently, but high performance tiered storage was anywhere from $40k - $80k each, just for the iSCSI versions, the FC versions are clearly absurd.

solori - Monday, May 25, 2009

Look at ZFS-based storage solutions. ZFS enables hybrid storage pools and an elegant use of SSDs with commodity hardware. You can get it from Sun, Nexenta or by rolling-your-own with OpenSolaris: http://solori.wordpress.com/2009/05/06/add-ssd-to-...

pmonti80 - Friday, May 22, 2009

Still it would be interesting to see those central storage benchmarks or at least knowing if you will/won't be doing them for whatever reason.