ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST. Posted in GPUs

The Widespread Support Fallacy

NVIDIA acquired Ageia, the company that wanted to sell you another card to put in your system to accelerate game physics - the PPU. That idea didn’t go over too well. For starters, no one wanted another *PU in their machine. Secondly, there were no compelling titles that required it. At best we saw mediocre games with mildly interesting physics support, or decent games with uninteresting physics enhancements.

Ageia’s true strength wasn’t in its PPU chip design; many companies could do that. What Ageia did that was quite smart was acquire an up-and-coming game physics API, polish it up, and give it away for free to developers. The physics engine was called PhysX.

Developers can use PhysX, for free, in their games. There are no strings attached, no licensing fees, nothing. If the developer wants support there are fees, of course, but it’s a great way of cutting down development costs. The physics engine in a game is responsible for all modeling of Newtonian forces within the game; the engine determines how objects collide, how gravity works, etc.
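To make "how objects collide, how gravity works" a little more concrete, here is a minimal sketch of the kind of update a physics engine runs every frame. This is not PhysX code and does not use the PhysX API; the names `Body`, `step` and `simulate`, the one-dimensional world, the bounce factor and the 60 Hz timestep are all assumptions made up for illustration.

```python
from dataclasses import dataclass

GRAVITY = -9.81  # m/s^2 along the vertical axis (assumed units)


@dataclass
class Body:
    """A point mass tracked only by its height above the ground (hypothetical type)."""
    y: float                  # height in meters
    vy: float = 0.0           # vertical velocity in m/s
    restitution: float = 0.5  # fraction of speed kept after a bounce (assumed value)


def step(body: Body, dt: float) -> None:
    """Advance one body by dt seconds using semi-implicit Euler integration."""
    # Apply gravity to the velocity first, then move using the updated velocity.
    body.vy += GRAVITY * dt
    body.y += body.vy * dt

    # Crude collision response against a ground plane at y = 0.
    if body.y < 0.0:
        body.y = 0.0
        body.vy = -body.vy * body.restitution


def simulate(body: Body, seconds: float, dt: float = 1.0 / 60.0) -> Body:
    """Run a fixed-timestep loop, roughly what a game does once per frame."""
    for _ in range(round(seconds / dt)):
        step(body, dt)
    return body
```

Calling `simulate(Body(y=10.0), 0.5)` drops a ball from 10 meters for half a second of game time. A real engine does this in three dimensions with rotation, friction and object-to-object collisions; the pitch for dedicated hardware is, roughly, running this sort of update across a very large number of objects in parallel.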

If developers wanted to, they could enable PPU accelerated physics in their games and do some cool effects. Very few developers wanted to because there was no real install base of Ageia cards and Ageia wasn’t large enough to convince the major players to do anything.

PhysX, being free, was of course widely adopted. When NVIDIA purchased Ageia what they really bought was the PhysX business.

NVIDIA continued offering PhysX for free, but it killed off the PPU business. Instead, NVIDIA worked to port PhysX to CUDA so that it could run on its GPUs. The same catch-22 from before exists: developers don’t have to include GPU accelerated physics and most don’t because they don’t like alienating their non-NVIDIA users. It’s all about hitting the largest audience and not everyone can run GPU accelerated PhysX, so most developers don’t use that aspect of the engine.

Then we have NVIDIA publishing slides like this:

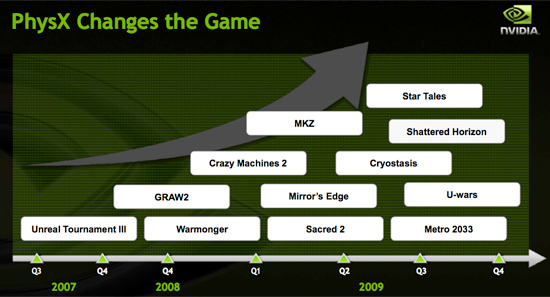

Indeed, PhysX is one of the world’s most popular physics APIs - but that does not mean that developers choose to accelerate PhysX on the GPU. Most don’t. The next slide paints a clearer picture:

These are the biggest titles NVIDIA has with GPU accelerated PhysX support today. That’s 12 titles, three of which are big ones; most of the rest, well, I won’t go there.

A free physics API is great, and all indicators point to PhysX being liked by developers.

The next several slides in NVIDIA’s presentation go into detail about how GPU accelerated PhysX is used in these titles and how poorly ATI performs when GPU accelerated PhysX is enabled (because ATI can’t run CUDA code on its GPUs, the GPU-friendly code must run on the CPU instead).

We normally hold manufacturers accountable for their performance claims. Well, it was about time we did something about these other claims - shall we?

Our goal was simple: we wanted to know if the GPU accelerated PhysX effects in these titles were useful. And if they were, would they be enough to make us pick an NVIDIA GPU over an ATI one if the ATI GPU was faster?

To accomplish this I had to bring in an outsider. Someone who hadn’t been subjected to the same NVIDIA marketing that Derek and I had. I wanted someone impartial.

Meet Ben:

I met Ben in middle school and we’ve been friends ever since. He’s a gamer of the truest form. He generally just wants to come over to my office and game while I work. The relationship is rarely harmful; I have access to lots of hardware (both PC and console) and games, and he likes to play them. He plays while I work and isn't very distracting (except when he's hungry).

These past few weeks I’ve been far too busy for even Ben’s quiet gaming in the office. First there were SSDs, then GDC and then this article. But when I needed someone to play a bunch of games and tell me if he noticed GPU accelerated PhysX, Ben was the right guy for the job.

I grabbed a Dell Studio XPS I’d been working on for a while. It’s a good little system, the first sub-$1000 Core i7 machine in fact ($799 gets you a Core i7-920 and 3GB of memory). It performs similarly to my Core i7 testbeds so if you’re looking to jump on the i7 bandwagon but don’t feel like building a machine, the Dell is an alternative.

I also set up its bigger brother, the Studio XPS 435. Personally I prefer this machine; it’s larger than the regular Studio XPS, albeit more expensive. The larger chassis makes working inside the case and upgrading the graphics card a bit more pleasant.

My machine of choice; I couldn't let Ben have the faster computer.

Both of these systems shipped with ATI graphics; obviously that wasn’t going to work. I decided to pick midrange cards to work with: a GeForce GTS 250 and a GeForce GTX 260.

294 Comments

SiliconDoc - Monday, April 6, 2009

So he just told you why he's getting NVidia, and the little red fanboy in you couldn't stand it. You recommend taking steps that void the warranty, but why should you care - then you blabber about a 4850, but he already noted the 260 - and finally you let the last word in you could barely bring your red rooster self to say GTS250 - as in you might as well get it.... if you have to - 'cept he was already looking above that. You red ragers just have to spew about your crap card to people who already seemingly decided they don't want it. Why is that ?

You gonna offer him red cuda, red physx, red vreveal, red badaboom, red forced game profiles, red forced dual gpu ? ANY OF THAT ?

NO -

you tell him to HACK a red piece of crap to make it reasonable. LOL

What a shame.

Hey, maybe he can hack the buzzing fan on it , too ?

helldrell666 - Monday, April 6, 2009

What the hell are you talking about? I'm not a fan boy of any of those big companies that don't give a shit about me.

I have used both nvidia and ATI, and both produce great graphics cards.

I had a 8800gtx before my current 4870, and i had an athlon x2 6400+ before my current core i7 920. I go with whoever has the product with the best price.

SiliconDoc - Monday, April 6, 2009

No, you are a fanboy and you are exposed. Deal with it.

helldrell666 - Monday, April 6, 2009

It's you, who the fanboy is. You're trying hard to show the advantages of nvidia cards over the ATI cards, and guess what, you fail. And i don't give a rat ass about CUDA. Im a gamer, and what matters to me is the card's gaming performance and the features that enhance my gaming experience "which is what 99.9% of who buy those cards care for", Like physx, which is a true con for nvidia cards. But physx isn't supported on most games, and the physx effects that nvidia is trying to promote can be easily processed on a decent sub 300$ cpu by enhancing the on cpu physx performance via a driver/software that could utilize all cpu cores and utilize the cpu processing power in a better way.

Ohh wait. ATI has DX10.1 and tessellation which nvidia doesn't have, and thanks to your nvidia, we didn't get much games that support DX10.1, and we didn't get any game that supports tessellation which is a geometry accelerating technique that can accelerate geometry processing by up to 4 times using the same amount of floating points aka. processing power. If tessellation, which is included in ATI cards APIs since the Hd2000 series days, was used in those demanding games like crysis and stalker, we would've been able to play them using sub 300$ graphics solutions. Put aside the DX10.1 features like the aa enhancement.....and the GRS color detector that allows the gpu to use more accurate color degree for a texel using a more advanced texturing algorithm compared to the tri/bi-linear buffering technique used in nvidia's illegal uncompleted DX10 API.

SiliconDoc - Monday, April 6, 2009

rofl - the long list of your imaginary hatreds against nvidia - you FREAK fanboy. The problem being, that just like some lying retard, you blame ati's epic failure with tessellation on who ? ROFL NVIDIA.

Sorry bub, you're another one who is INSANE.

You won't face what IS - you want something that isn't - wasn't - or won't be - so keep on WHINING forever, looney tuner.

In the meantime, nvidia users outnumber you, enjoy the large amount of added benefits, and don't have a 2 billion dollar loss hanging over their heads - with a company that might collapse in bankruptcy - and lose support of the already problematic drivers.

You bought the wrong thing, now you have a hundred could be would be if and an's and garbage can complaints that have nothing to do with reality and how it actually is.

Fantasy red rooster fanboy.

LOL - it's amazing.

helldrell666 - Tuesday, April 7, 2009

Freak fanboy......? Hatred....? Are you serious...or ....? I don't hate any company because i have no reason to. I blame nvidia for not allowing those few developed games companies to include those great features in these very few modern demanding games.

Accelerating physx processing using the gpu is a great idea, but is it worth it?

Is the cpu really unable to keep up with the gpu in games due to its slow physx processing ability?

Are those primitive physx effects really that heavy on a modern quad core cpu?

These are questions that you should ask yourself before trolling for the idea.

And i have to remind you that ATI has coded Havok physx effects in OPEN-CL programming language, which in case you don't know, is standard language compared to nvidia CUDA, which is based on some kind of c programming codes.

Talking about the drivers, i haven't had problems with my 4870 on MY Vista 64bit OS, compared to my old 8800gtx that almost brought me a heart attack.

As for your beloved nvidia, we need nvidia as much as we need AMD and INTEL to keep the competition alive, which in its turn will keep innovations going and adjust the prices.

Ohh... and thanx to the red camp, we can get a decent graphics card for less than 300$. So have some respect for them.

as for you, i wonder how old you are, cuz you don't seem to have a mature logic.

tamalero - Monday, April 20, 2009

dont worry, this guy clearly has mental issues, a nvidia paid troll.

SiliconDoc - Tuesday, April 7, 2009

Yeah, sure. Just like any red rooster, you never had a problem with ati drivers, but nvidia drove you nearly to a heart attack, but I have problems with logic or detecting a red rooster fanboy blabberer ! ROFL

Dude, you keep digging your own hole deeper.

Then you try the immature assault - another losing proposition.

YOU'RE WHINING about nvidia and yeah, you do blame them, and after going on like a lunatic about wanting offload to the cpu, you admit it might not work - yes I read your asinine quadcore offload blabberings, too, and your bloody ragging about nvidia "not letting" your insane fantasy occur - purportedly to advantage ati (not like you're banking on intel graphics).

So red rooster, keep crowing, and never face the reality that is, and carry that chip for all your imaginary grievances of what should be or, what you say could have been.

In the meantime, know you are marked, and I know who and what you are, and I'm sure you'll have further whining and wailing about what nvidia did or didn't do for ati. LOL

roflmao

Logic ? ROFLMAO

" I, almost had a heart attack " said the sissy. lol " But I'm an objective person with logic ". roflmao

Jamahl - Monday, April 6, 2009

Wow what a stunning pile of crap i've just read. ATI's can fold, guess what it's downloaded via CCC.

ATI has open source cloth physx, stream and avivo which pisses all over that trash nvidia call 'purevideo' or whatever.

But best of all, you can ACTUALLY BUY A 4890 whereas the 275 only exists in the Nvidia fanbois tiny little green with envy minds.

The0ne - Tuesday, April 7, 2009

If you haven't noticed, SiliconDoc is basically ignored in all his responses. Be wise and do that same. He'll eventually kill himself :)