ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST - Posted in GPUs

The Widespread Support Fallacy

NVIDIA acquired Ageia, the guys who wanted to sell you another card to put in your system to accelerate game physics: the PPU. That idea didn’t go over too well. For starters, no one wanted another *PU in their machine. And secondly, there were no compelling titles that required it. At best we saw mediocre games with mildly interesting physics support, or decent games with uninteresting physics enhancements.

Ageia’s true strength wasn’t in its PPU chip design; many companies could do that. What Ageia did that was quite smart was acquire an up-and-coming game physics API, polish it up, and give it away for free to developers. The physics engine was called PhysX.

Developers can use PhysX, for free, in their games. There are no strings attached, no licensing fees, nothing. If the developer wants support, there are fees of course, but it’s a great way of cutting down development costs. The physics engine in a game is responsible for all modeling of Newtonian forces within the game; the engine determines how objects collide, how gravity works, etc.
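To make "how objects collide, how gravity works" concrete, here is a minimal sketch of the kind of update loop a physics engine runs every frame. This is not the PhysX API; the `Body` class, the `step` and `simulate` functions, and the restitution value are hypothetical names and numbers chosen purely for illustration, and a real engine handles three dimensions, rotation, and object-to-object collisions.

```python
from dataclasses import dataclass

GRAVITY = -9.81  # m/s^2 along the y axis (assumed Earth-like gravity)


@dataclass
class Body:
    y: float                  # height above the ground plane, in metres
    vy: float                 # vertical velocity, in m/s
    restitution: float = 0.5  # fraction of speed kept after a bounce (illustrative)


def step(body: Body, dt: float) -> None:
    """Advance one body by dt seconds using semi-implicit Euler integration."""
    body.vy += GRAVITY * dt   # gravity changes the velocity first
    body.y += body.vy * dt    # then the updated velocity moves the body
    if body.y < 0.0:          # collision with the ground plane at y = 0
        body.y = 0.0          # push the body back onto the surface
        body.vy = -body.vy * body.restitution  # bounce, losing some speed


def simulate(body: Body, steps: int, dt: float = 1.0 / 60.0) -> Body:
    """Run a fixed number of fixed-size time steps (60 per second by default)."""
    for _ in range(steps):
        step(body, dt)
    return body
```

Calling `simulate(Body(y=5.0, vy=0.0), steps=600)` drops a body from five metres and lets it bounce for ten simulated seconds. The per-object work is small but has to be repeated for every object in the scene every frame, which is why it is a candidate for offloading to a PPU or GPU.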

If developers wanted to, they could enable PPU accelerated physics in their games and do some cool effects. Very few developers wanted to because there was no real install base of Ageia cards and Ageia wasn’t large enough to convince the major players to do anything.

PhysX, being free, was of course widely adopted. When NVIDIA purchased Ageia what they really bought was the PhysX business.

NVIDIA continued offering PhysX for free, but it killed off the PPU business. Instead, NVIDIA worked to port PhysX to CUDA so that it could run on its GPUs. The same catch-22 from before still exists: developers don’t have to include GPU accelerated physics, and most don’t because they don’t like alienating their non-NVIDIA users. It’s all about hitting the largest audience, and not everyone can run GPU accelerated PhysX, so most developers don’t use that aspect of the engine.

Then we have NVIDIA publishing slides like this:

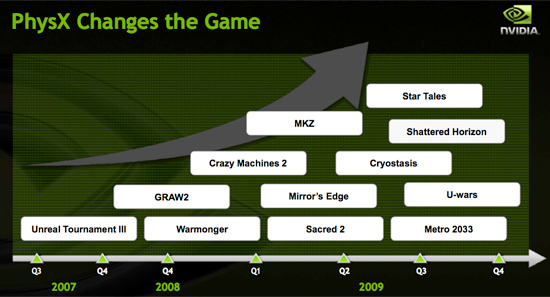

Indeed, PhysX is one of the world’s most popular physics APIs - but that does not mean that developers choose to accelerate PhysX on the GPU. Most don’t. The next slide paints a clearer picture:

These are the biggest titles NVIDIA has with GPU accelerated PhysX support today. That’s 12 titles, three of which are big ones; most of the rest, well, I won’t go there.

A free physics API is great, and all indicators point to PhysX being liked by developers.

The next several slides in NVIDIA’s presentation go into detail about how GPU accelerated PhysX is used in these titles and how poorly ATI performs when GPU accelerated PhysX is enabled (because ATI can’t run CUDA code on its GPUs, the GPU-friendly code must run on the CPU instead).

We normally hold manufacturers accountable for their performance claims; it was about time we did something about these other claims. Shall we?

Our goal was simple: we wanted to know if the GPU accelerated PhysX effects in these titles were useful. And if they were, would they be enough to make us pick an NVIDIA GPU over an ATI one if the ATI GPU was faster?

To accomplish this I had to bring in an outsider. Someone who hadn’t been subjected to the same NVIDIA marketing that Derek and I had. I wanted someone impartial.

Meet Ben:

I met Ben in middle school and we’ve been friends ever since. He’s a gamer of the truest form. He generally just wants to come over to my office and game while I work. The relationship is rarely harmful; I have access to lots of hardware (both PC and console) and games, and he likes to play them. He plays while I work and isn't very distracting (except when he's hungry).

These past few weeks I’ve been far too busy for even Ben’s quiet gaming in the office. First there were SSDs, then GDC and then this article. But when I needed someone to play a bunch of games and tell me if he noticed GPU accelerated PhysX, Ben was the right guy for the job.

I grabbed a Dell Studio XPS I’d been working on for a while. It’s a good little system, the first sub-$1000 Core i7 machine in fact ($799 gets you a Core i7-920 and 3GB of memory). It performs similarly to my Core i7 testbeds so if you’re looking to jump on the i7 bandwagon but don’t feel like building a machine, the Dell is an alternative.

I also set up its bigger brother, the Studio XPS 435. Personally I prefer this machine; it’s larger than the regular Studio XPS, albeit more expensive. The larger chassis makes working inside the case and upgrading the graphics card a bit more pleasant.

My machine of choice; I couldn't let Ben have the faster computer.

Both of these systems shipped with ATI graphics; obviously that wasn’t going to work. I decided to pick midrange cards to work with: a GeForce GTS 250 and a GeForce GTX 260.

294 Comments

SiliconDoc - Monday, April 6, 2009

LOL - another hidden red rooster bias uncovered...

Umm... look, when there's a new ati card, there's no talking about crunching down on former ati cards - OK ? That just is NOT allowed.

" No mention of the death of the HD 4850X2 as the HD4890 trashes the power consumption, price, availability, speed and OC-ability "

Dude, not allowed !

PS- Don't mention how this card is going to smash the "4870" "profit" "flagship" - gee now just don't talk about it - don't mention it - look, there's no rooster crying in fps gaming, ok ?

Torquer350 - Friday, April 3, 2009

Props to ATi for delivering a very compelling product. I admit I've always been an Nvidia fan, and I'll generally forgive them a single generational performance loss to ATi, but I've recommended ATi products recently to friends due to their resurgent desirability.

That being said, am I the only one who detects a subtle but distinct underlying disdain for Nvidia? So they tried to market the hell out of you - so what? They are trying to sell cards here. Why the surprise that sales and marketing people are trying to do exactly what they're paid to do? Congrats for being smart enough to see it for what it is, but jeers for making an issue of it as if it's some kind of new tactic. Has AMD/ATi never done the same?

CUDA and PhysX are compelling, but I agree not a good reason to overcome a significant gap between Nvidia and ATi at a comparable price point. You clearly agree, but it seems like what little praise you offer is begrudging in the extreme.

Nvidia has definitely acted in bad form in a number of ways throughout this very lengthy generation of hardware. However, you guys are journalists and in my opinion should make a more concerted effort to leave the vitriol and sensationalism at the door, regardless of who it is that is being reviewed. That kind of emotional reaction, personal opinion, irritation, etc is better served for your blog posts than a review article.

Love the site, keep up the good work. Nobody's perfect.

SiliconDoc - Monday, April 6, 2009

Yeah thanks for noticing, too. It's been going on a long time. Notice how now, suddenly when ati doesn't have 2560 sewn up - it doesn't matter anymore ... LOL

Of course the "brilliantly unbiased" reviewers will claim they did a poll on monitor resolution usage, and therefore suddenly came to their conclusion about $2,000.00 monitor users, when they tiddled and taddled for years about 10 bucks between framerates and nvidia ati - and chose ati for the 10 bucks difference.

Yep, 10 bucks matters, but $1,700.00 difference for a monitor doesn't matter until they take a poll. Now they didn't say it, but they will - wait it's coming...

Just like I kept pointing out when they raved about ati taking the 30" resolution and not much if anything else, that declaring it the winner wasn't right. Now of course, when ati isn't winning the 30 rez - yes, well, they finally caught on. No bias here ! Nothing to notice, pure professionalism, and hatred of cuda and physx for its lack of ability to run on ati cards is fully justified, and should offer NO advantage to nvidia when making a purchase decision ! LOL

OMG ! they're like GONERZ man.

Dried - Friday, April 3, 2009

Best review so far. And nice cards BTW, they are both worth it, but I like the 4890 better.

Funny thing is that GTX 275 > GTX 280.

But my guess is that GTX 280 benefits more from overclocking.

Arbie - Friday, April 3, 2009

Because of my PC's location I am concerned with idle power, and purchase based on that if other specs and price are even comparable. Peak power doesn't matter as long as it's within the capability of my 800W PSU.

I bought an ATI HD4850 last year because it idled significantly lower than the 4870, and it would run everything in sight. A great card. The Nvidia GTX 260 and 280 had even better performance vs idle power ratios but were way too expensive at the time.

So I think Nvidia takes the laurels now with the GTX 275. 30W less (!) than the HD 4890 at idle, with essentially the same performance. If I were shopping now it would be a VERY easy choice.

I really hope ATI can get their idle power down too. They need to pay more attention to throttling back or downpowering circuits that aren't needed in 2D modes.

helldrell666 - Friday, April 3, 2009

Use the Radeon BIOS Editor to edit the 2D profile and then downclock your GPU frequencies.

OCedHrt - Friday, April 3, 2009

The power consumption on the 4890 really interests me. While it uses more than the GTX 275 at idle, it uses less under load. Also, it is a significant drop from the 4870, which is a slower card.

bobvodka - Friday, April 3, 2009

So, on the charge of drivers; I've gone from recently having a GT8800GTX 512Meg to a HD4870X2 2gig and if anything I've seen stability improvements between the two. Or to put it another way, NV drivers were bluescreening my Vista install when I was doing nothing more than using my TV card and it was crashing in a DirectDraw DLL. Nice.

Not to say AMD hasn't had issues; trying to use hardware acceleration with any Blu-ray playback resulted in a bluescreen due to the GPU going into an infinite loop. Nice. Fortunately, unlike the DDraw error above, I could at least turn off hardware acceleration (and honestly, with an i7 it's not like I needed it).

So, stability wise it's a wash.

As for the memory usage complaints about CCC;

Unless it is running it is NOT taking up physical memory. Like many things in the Windows world it might load something into the background, but this is quickly paged out and doesn't live in RAM. Even if it does live in RAM for a short period of time while inactive, it will be paged out as soon as memory pressure requires it. The simple fact is unused RAM is wasted RAM; this is why I'm glad Vista uses 10gig of my 12 for cache when it isn't needed for anything else, it speeds up the system.

Cuda.. well, the idea is nice and I like the idea, but as mentioned in the article, unless you have cross vendor support it isn't as useful as it could be. OpenCL and, for games, DX11's compute shaders are going to make life interesting for both Cuda and AMD's option. I will say this much; I suspect you'll get better performance from NV, AMD and indeed Larrabee when it appears by going 'to the metal' with them, but as with many things in the software world you have to trade something for speed.

Now, PhysX.. well, this one is even more fun (and I guess it affects Cuda as well to a degree). Right now, with Vista, you can't run more than one vendor's gfx card in your system at once due to how WDDM1.0 works; so it's AMD or NV and that's your choice. With Win7 however the rules change slightly and you'll be able to run, with WDDM1.1 drivers, cards from both vendors at once. Right away this paints an interesting landscape for those interested; if you want an AMD card but also want some PhysX hardware power then you'll be able to slide in a 'cheap' NV series card to use for that reason (or indeed if you have an old series 8 laying about, use that if the driver supports it).

Of course, with Havok going OpenCL and being free for games which retail for <$10 (iirc) this is probably going to be much of a muchness in the end, but it's an interesting idea at least.

SiliconDoc - Monday, April 6, 2009

Except you can run 2 nvidia cards, one for gaming, the other for physx.... so red fanboys are sol.

"Right now, with Vista, you can't run more than one vendor's gfx card in your system at once due to how WDDM1.0 works; so it's AMD or NV and that's your choice. "

WRONG, it's TWO nvidia or just ONE ati. Hello - you knew it - but you didn't say it that way - makes ati look bad, and we just cannot have that here....

Rhino2 - Monday, April 13, 2009

The hell are you talking about? Crossfire works in vista just fine.