NVIDIA GeForce GTS 250: A Rebadged 9800 GTX+

by Derek Wilson on March 3, 2009 3:00 AM EST - Posted in GPUs

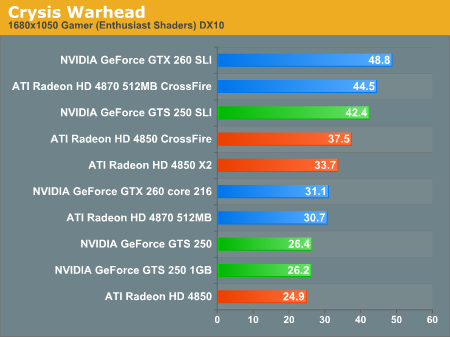

Crysis Warhead Performance

Again NVIDIA leads, though our settings were a little aggressive for these parts, which are just under playable at 1680x1050. 1280x1024 would have been a better target resolution for this game at these settings, and dropping the enthusiast shaders might also have helped. But however you slice it, the GTS 250 leads in both single and dual configurations (the latter actually remain playable through 1920x1200 as well).

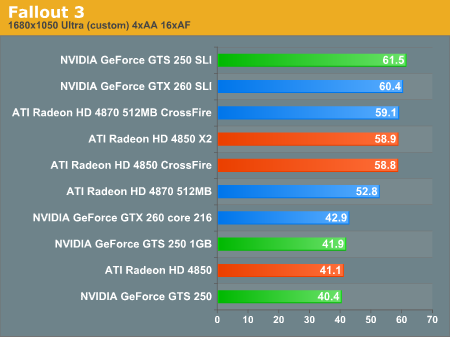

Fallout 3 Performance

This one is pretty interesting, even though our multi-GPU options are system/LOD limited by the game at 1680x1050 and 1920x1200. It's clear that memory really helps in this test as resolution increases: the 1GB GTS 250 improves on the 512MB version by about 50%. At lower resolutions, the GTS 250 1GB comes in just ahead of the 512MB 4850, which comes in just ahead of the 512MB GTS 250. At 1680x1050 and lower, memory appears to stop making a difference and all three of these cards effectively tie.

103 Comments

VooDooAddict - Tuesday, March 3, 2009 - link

If trying to decide for purchasing, I would cut that list down to the following:

8800GTS 512MB - Good bang for the $ but hotter, power hungry GPU

9800GTX+ 512MB - Die shrink gave more speed and lower temps

GTS250 1GB - New board design gives better power usage

SiliconDoc - Wednesday, March 18, 2009 - link

VooDoo where the heck can you get an 8800gts anymore ? ebay ?

The 9800GT ultimate by Asus ? Those are literally gone as well ...

Which brings me to something... I hadn't thought of yet...

A LOT of the core g80/g92/g92b cards are GONERS - they're sold out !

So - nvidia makes a new "flavor".

Golly Wally, I never thought of that before.

" That's why you're the Beave. "

____________________

Oh, THEY SOLD OUT - HOW ABOUT THAT.

erple2 - Wednesday, March 4, 2009 - link

No, I disagree - the OP has a good point. Compare all of the G92 parts together to see just how much real difference there is. Throwing in the G80 part (8800GTX, I suppose) is an interesting twist as well, to show how the G80 evolved over time.

nVidia has a crazy number of cards that are all "the same". The evaluation proposed sure would help explain away what was going on.

I'd definitely be interested in seeing what the results of that were!

SiliconDoc - Wednesday, March 18, 2009 - link

Go to techpowerup and see their reviews, for instance on the 4830 - it has LOTS of games and lots of g80/g92/g92b flavors - including the gtx768 (G80) which YES, pulls out some wins even against the 4870x2....

Check it out at techpowerup.

emboss - Tuesday, March 3, 2009 - link

I'd also say for the purpose of comparison to throw a G80 in there (ie: a 8800 GTX or Ultra). It'd be interesting if the extra bandwidth and ROPs of the G80s make a difference in any cases.

Casper42 - Tuesday, March 3, 2009 - link

1) You should have included results for a 9800GTX+ so we could truly see if the results were identical to the "new" card.

2) If you can, please stick a 9800GTX+ and a GTS 250 512MB into the same machine and see if you can still enable SLI.

I own a 9800 GTX+ and item #2 is especially interesting to me as it means when I want to go SLI, I may have an easier time finding a GTS 250 rather than hunting on eBay for a 9800 GTX+

Thanks,

Casper42

SiliconDoc - Wednesday, March 18, 2009 - link

Casper as DEREK said in the article > " Anyway, we haven't previously tested a 1GB 9800 GTX+," (until now)

THAT'S WHAT THEY USED.

lol

Yes, well you still don't have an answer to your question though...

How about the lower power consumption and better memory and core creation translating into higher overclocks ?

LOL

No checking that, either...

"A 9800gtx+" will do - "bahhhumbug ! I hate nvidia and it's the same ding dang thing ! Forget that I derek said it's better memory, a better made core iteration, and therefore lower power, a smaller pcb make, SCREW all that I can't overclock I don't have the DAM*! CARD I HATE NVIDIA ANYWAY SO WHO CARES! "

____________________________________

Sorry for the psychological profile but it's all too obvious - and it's obvious nvidia knows it as well.

Hope the endless red fan ragers save the multiple billion dollar charge off losers, ati. I really do. I really appreciate the constant slant for ati, I think it helps lower the prices on the cards I like to buy.

It's great.

Mr Perfect - Tuesday, March 3, 2009 - link

Now that's a good question (number 2 that is). Maybe a 9800GTX+ can be BIOS flashed into a 250 to enable SLI?

DerekWilson - Tuesday, March 3, 2009 - link

GTS 250 can be SLI'd with 9800 GTX+ -- NVIDIA usually disables SLI with different device IDs, but this is an exception.

If you've got a 9800 GTX+ 512MB you can SLI it with a GTS 250. If you have a 9800 GTX+ 1GB you can SLI it with a GTS 250 1GB. You can't mix memory sizes though.

Also, the 9800 GTX+ and the GTS 250 are completely identical and there is no reason to put two in a system and test them because they are the same card with a different name. At least until NVIDIA's partners release GTS 250s based on the updated board, but even then we don't expect any performance difference whatsoever.

These numbers were run with a 9800 GTX+ and named GTS 250 to help show the current line up.

dgingeri - Tuesday, March 3, 2009 - link

I noticed that the 512MB version of the 4870 beats the GTS250 1GB in everything, and yet costs the same. Even when the video memory makes a big difference, the 512MB 4870 wins out. Even better is that the 4870 512MB board costs the same, or at least will soon, as the GTS250 1GB board.

Doesn't this make the 4870 512MB board a better deal?