MultiGPU Update: Two-GPU Options in Depth

by Derek Wilson on February 23, 2009 7:30 AM EST - Posted in GPUs

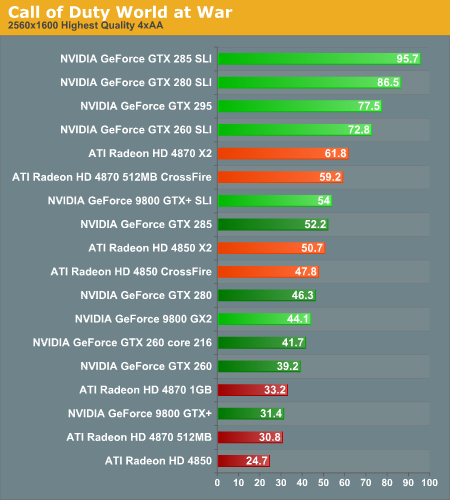

Call of Duty World at War Analysis

This game, as with previous CoD installments, tends to favor NVIDIA hardware. The updated graphics engine in World at War looks good while still delivering strong performance and scaling well.


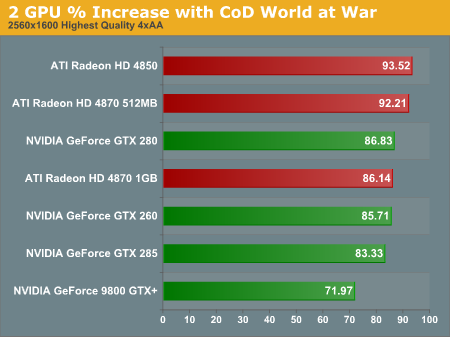

In this test, even though we disabled the frame rate limit and vsync, single GPU solutions seem limited to around 60 frames per second. This is part of why we see beyond linear scaling with more than one GPU in some cases: it's not magic, it's that single card performance isn't as high as it should be. We don't stop seeing artificial limits on single GPU performance until 2560x1600.
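To make that arithmetic concrete, here is a minimal sketch of how a capped single-GPU baseline inflates apparent scaling. The frame rates below are hypothetical placeholders, not values read from the charts, and the `scaling_percent` helper is an illustrative name rather than part of any benchmarking tool.

```python
def scaling_percent(single_fps: float, dual_fps: float) -> float:
    """Percent gain of two GPUs over one (100 means perfectly linear scaling)."""
    if single_fps <= 0:
        raise ValueError("single_fps must be positive")
    return (dual_fps / single_fps - 1.0) * 100.0


# Hypothetical numbers for illustration only.
uncapped_single = 80.0  # what one card could render with no artificial limit
cap = 60.0              # assumed ceiling on single-GPU results
dual = 130.0            # measured two-card result (not subject to the cap)

# The benchmark only ever reports the capped single-card figure.
observed_single = min(uncapped_single, cap)

true_scaling = scaling_percent(uncapped_single, dual)
apparent_scaling = scaling_percent(observed_single, dual)

print(f"true scaling:     {true_scaling:.1f}%")
print(f"apparent scaling: {apparent_scaling:.1f}%")
```

With these made-up inputs the apparent figure lands above 100% even though the underlying gain is well below linear, which is the effect described above.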


SLI rules this benchmark, with GT200-based parts coming out on top across the board. This game scales very well with multiple GPUs, coming in over 80% most of the time (the exception is the 9800 GTX+ at 2560x1600). At higher resolutions, the AMD multiGPU options do scale better than their SLI counterparts, but the baseline NVIDIA performance is so much higher that it doesn't make a big practical difference.


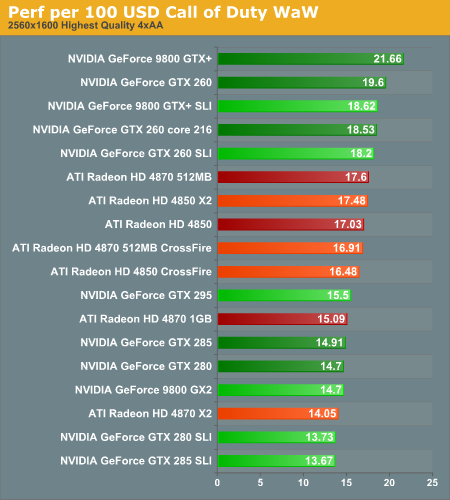

In terms of value, the 9800 GTX+ (at today's prices) leads the way in CoD. Although it offers the most frames per second per dollar, it is a good example of the need to account for both absolute performance and value: it only barely squeaks by as playable at 2560x1600.
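As a rough sketch of weighing value against absolute performance, the snippet below ranks cards by frames per second per dollar and optionally drops anything under a playability floor. The card names, frame rates, prices, and the 30 fps threshold are all hypothetical assumptions for illustration, not data from this article.

```python
from typing import List, NamedTuple


class Card(NamedTuple):
    name: str
    avg_fps: float
    price_usd: float


def fps_per_dollar(card: Card) -> float:
    """Value metric: average frames per second divided by price."""
    return card.avg_fps / card.price_usd


def rank_by_value(cards: List[Card], min_playable_fps: float = 0.0) -> List[Card]:
    """Sort cards by value, best first, keeping only those at or above the floor."""
    playable = [c for c in cards if c.avg_fps >= min_playable_fps]
    return sorted(playable, key=fps_per_dollar, reverse=True)


# Hypothetical cards; none of these figures come from the charts.
cards = [
    Card("Card A", avg_fps=40.0, price_usd=150.0),
    Card("Card B", avg_fps=70.0, price_usd=350.0),
    Card("Card C", avg_fps=25.0, price_usd=100.0),
]

for card in rank_by_value(cards, min_playable_fps=30.0):
    print(f"{card.name}: {fps_per_dollar(card):.3f} fps per dollar")
```

Ranking on value alone would place the hypothetical Card C second, but applying the playability floor removes it, which mirrors the caution above about a high-value card that is only borderline playable.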

Because we see very good performance across the board, multiple GPUs are not required even for the highest settings at the highest resolution. The only card that isn't quite up to the task at 2560x1600 is the Radeon HD 4850 (though the 4870 512MB and 9800 GTX+ are both borderline).

95 Comments


DerekWilson - Tuesday, February 24, 2009 - link

Actually, you can't get CRTs that go that high afaik; the highest-res CRTs I've seen go to 2048x1536. 30" LCD monitors, such as the Dell I use, support this resolution. Apple, among others, also makes 30" LCDs with 2560x1600 resolution.

The barrier to entry with 30" LCD monitors that do 2560x1600 is about $1000 ...

Finally - Tuesday, February 24, 2009 - link

As usual, this isn't an article meant for the proles, but for the aforementioned 1%. I wouldn't read it if they used 8 GPUs and quadrupled that resolution...

Really, what do you wanna prove, Anandtech?

That you can test things that don't matter to anyone?

That you can test things, because you can test things?

Come on!

Get something with a BIT more information value for the general crowd out here.

For example:

A lot of us still have old GPUs in use. But if any piece of hardware is older than 2 months, you erase it from the benchmarking process, effectively annihilating any comparisons that could have been made.

SiliconDoc - Wednesday, March 18, 2009 - link

So 66% are 1650 and below, and we know people like to exaggerate on the internet, so the numbers are actually HIGHER in the lower end. So let's round up to 75% at 1650, 1280 and 1024.

They didn't offer the 1440x900 monitor rez all over wal mart - not to mention 1280 800 laptops (who await $ tran$fer to a game rig) - .

Yes, perhaps not 1% but 3% isn't much different - this site would COLLAPSE INSTANTLY without the 75%- not true the other way around.

Frankly, I'd like to see the list of cards from both companies that do SLI or xfire. I want to see just how big that list is, and I want everyone else to see it.

DerekWilson - Tuesday, February 24, 2009 - link

Actually, from a recent poll we did, 2560x1600 usage is around 3% among AnandTech readers: http://anandtech.com/weblog/showpost.aspx?i=547

1920x1200 and 1680x1050 are definitely in more use, but this article is also useful for those users.

This article demonstrates the lack of necessity and value in multiGPU solutions at resolutions below 2560x1600 in most cases. This is important information for gamers to consider.

Jamer - Monday, February 23, 2009 - link

What a great article! Absolutely brilliant. This helps so much, bringing simplicity to all those possible GPU choices. Thank you!

SirKronan - Monday, February 23, 2009 - link

Any chance we could get some figures on average performance per dollar from the whole suite you threw at it? And performance per power consumption figures would be awesome, too.

Just some suggestions that would benefit your readers.

I have a hard time not seeing the value in the 295. It's much closer performance-wise to 2x280/285 than one 280/285, yet costs much less, doesn't require an SLI motherboard, and consumes much less power at load and at idle.

It seems that you get a considerably larger performance boost for your money with the 295 than is traditional with the fastest graphics card available. Remember the 8800 Ultra? How much faster was it than the GTX, and how much price difference was there? The 9800GX2 was much worse. $600 for the same performance as two $200 8800GTS, and not much better power consumption numbers either, a very bad buy.

And just because most games out now run fine on a 260 at 1920x1200 and don't need any more power, some of the value in buying the higher end is longevity. One of my friends actually bought an Ultra nearly two years ago. He's still using it and hasn't needed an upgrade nearly as often as I have, as I usually go the midrange route. I'm always more tempted to upgrade as new things come out because of how much better they usually are than my older midrange hardware.

croc - Monday, February 23, 2009 - link

Overall, an excellent article. But I found it a bit 'cluttered' with all of the bar graphs in three different formats. Perhaps a line graph with all formats might be just as cluttered... Hmm. Maybe one button to change the default resolution for the article instead of the one selection / graph might help? And possibly another button to look at bar or line graphs? Food for thought... My thought. Could I get a CSV file of the raw data?

mhouck - Monday, February 23, 2009 - link

Great article, Derek. I've been waiting for an update on the state of multi-GPU tech. Thank you for taking the time to include the 3 different resolutions and range of cards. Can't wait to see tri- and quad-GPU setups. Please keep the 1920x1200 resolution in your upcoming article!

sabrewolfy - Monday, February 23, 2009 - link

Great read. The single GPU/multiple GPU option is always a tough decision.

On paper the 4850x2 2GB is awesome. Amazon was selling these for $260 AR a while back. Although I was in the market for a new GPU, I didn't buy one. If you read the newegg reviews, 20% of buyers give it 1 or 2 stars. Issues include heat, noise, and poor driver support. The card is also 11.5" long. I'd have to mangle my hard drive cage to make it fit. At the end of the day, I'd rather spend another $50 (GTX 280) and get a card that runs quiet, cool, and just works without headaches.

dubyadubya - Monday, February 23, 2009 - link

Nvidia cards do not perform AA correctly or at all. This has been a problem since the 1xx.xx version drivers were released, right up through the latest 182.06 drivers. 9x.xx drivers and prior do not have this problem. This can easily be reproduced by using a 6, 7 or early 8 series card and swapping between a 9x.xx driver and any 1xx.xx driver. This test can't be done on newer cards because 9x.xx drivers do not support the hardware.

Best case, AA is only acting on objects close to your in-game viewpoint. Anything farther away gets no AA at all. Worst case, AA does not function at all. This happens using the AA settings in game or through the driver itself. I find this problem most noticeable in racing games, as there are lots of straight objects at a distance. ATI cards do not have this problem in my testing.

The Nvidia forums have had several threads over the years about this problem. Here is a 40-plus-page thread about it. This thread was closed because someone said a bad word: "ATI".

http://forums.nvidia.com/index.php?showtopic=58863...

Nvidia has known about this problem near forever. I would guess it's by design. Doing full screen AA takes horsepower, so if they limit or eliminate AA their cards will bench faster.

What really sucks is that review sites seem not to care about image quality, only FPS. While I'm on the subject, what about 2D image quality and performance? Some of the newer cards just plain suck as far as 2D performance goes.

Now you may think I'm anti-Nvidia. Well, I'm not; I'm running an 8800 GT in the box I'm typing this from. I tend to buy whatever gives me the most bang for the buck, though the next card I buy will have working AA, if you get the idea.

So, AnandTech, please start comparing 3D image quality in all reviews. While you're at it, test basic 2D image quality and 2D performance. Performance Test would be a good measure of 2D performance, BTW.