Jasper Is Here: A Look at the New Xbox 360

by Anand Lal Shimpi on December 10, 2008 12:00 AM EST

And I thought the reason people bought consoles was to avoid dealing with the hardware nitty-gritty.

| Xbox 360 Revision | CPU | GPU | eDRAM |
| --- | --- | --- | --- |
| Xenon/Zephyr | 90nm | 90nm | 90nm |
| Falcon/Opus | 65nm | 80nm | 80nm |
| Jasper | 65nm | 65nm | 80nm |

First let's get the codenames right. The first Xbox 360 was released in 2005 and used a motherboard codenamed Xenon. The Xenon platform featured a 90nm Xenon CPU (clever naming there), a 90nm Xenos GPU and a 90nm eDRAM. Microsoft added HDMI support to Xenon and called it Zephyr; the big three chips were still all 90nm designs.

From top to bottom: Jasper (Arcade so no chrome on the DVD drive), Xenon, Falcon, Xenon. Can you tell them apart? I'll show you how.

In 2007 the 2nd generation Xbox 360 came out, codenamed Falcon. Falcon featured a 65nm CPU, 80nm GPU and 80nm eDRAM. Falcon came with HDMI by default, but Microsoft eventually made a revision without HDMI called Opus (opus we built a console that fails a lot! sorry, couldn't resist).

Finally, after much speculation, the 3rd generation Xbox 360 started popping up in stores right around the holiday buying season and it's called Jasper. Jasper keeps the same 65nm CPU from Falcon/Opus, but shrinks the GPU down to 65nm as well. The eDRAM remains at 80nm.

When Xenon came out, we bought one and took it apart. The same for Falcon, and naturally, the same for Jasper. The stakes are a lot higher with Jasper, however; this may very well be the Xbox 360 to get. Not only does it run a lot cooler and cost Microsoft less to manufacture, but it may finally solve the 360's biggest issue to date.

A Cure for the Red Ring of Death?

The infamous Red Ring of Death (RRoD) has plagued Microsoft since the launch of the Xbox 360. The symptoms are pretty simple: you go to turn on your console and three of the four lights in a circle on your Xbox 360 turn red. I've personally had it happen to two consoles and every single one of my friends who has owned a 360 for longer than a year has had to send it in at least once. By no means is this the largest sample size, but it's a problem that impacts enough Xbox 360 owners for it to be a real issue.

While Microsoft has yet to publicly state the root cause of the problem, we finally have a Microsoft that's willing to admit that its consoles had an unacceptably high rate of failure in the field. The Microsoft solution was to extend all Xbox 360 warranties for the RRoD to 3 years, a solution that managed to help most users but not all.

No one ever got to the bottom of what caused the RRoD. Many suspected that it was the lead-free solder balls between the CPU and/or GPU and the motherboard losing contact. The clamps that Microsoft used to attach the heatsinks to the CPU and GPU put a lot of pressure on the chips; it's possible that the combination of the lead-free solder, a lot of heat from the GPU, inadequate cooling and the heatsink clamps resulted in the RRoD. The CPU and/or GPU would get very hot and the solder would either begin to melt or otherwise dislodge, resulting in a bad connection and an irrecoverable failure. That's where the infamous "towel trick" came into play: wrap your console in a towel so its internals heat up a lot and potentially reseat the misbehaving solder balls.

The glue between the GPU and the motherboard started appearing with the Falcon revision

With the Falcon revision Microsoft seemed to admit to this as being a problem by putting glue between the CPU/GPU and the motherboard itself, presumably to keep the chips in place should the solder weaken. We all suspected that Falcon might reduce the likelihood of the RRoD because shrinking the CPU down to 65nm and the GPU down to 80nm would reduce power consumption, thermal output and hopefully put less stress on the solder balls - if that was indeed the problem. Unfortunately with Falcon Microsoft didn't appear to eliminate RRoD, although anecdotally it seemed to have gotten better.

How About Some Wild Speculation?

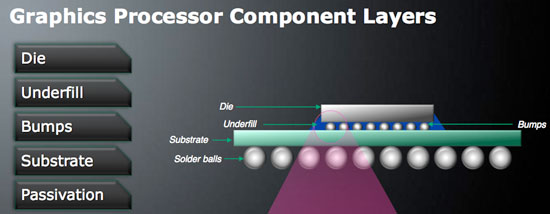

This year NVIDIA fell victim to its own set of GPU failures resulting in a smaller-scale replacement strategy than what Microsoft had to implement with the Xbox 360. The NVIDIA GPU problem was well documented by Charlie over at The Inquirer, but in short the issue here was the solder bumps between the GPU die and the GPU package substrate (whereas the problem I just finished explaining is between the GPU package substrate and the motherboard itself).

The anatomy of a GPU. The Falcon Xbox 360 addressed a failure in the solder balls, but perhaps the problem resides in the bumps between the die and substrate?

Traditionally GPUs had used high-lead bumps between the GPU die and the chip package. These bumps can carry a lot of current but are quite rigid, and rigid materials tend to break in a high stress environment. Unlike the solder balls between the GPU package and a motherboard (or video card PCB), the solder bumps between a GPU die and the GPU package are connecting two different materials, each with its own rate of thermal expansion. The GPU die itself gets hotter much quicker than the GPU package, which puts additional stress on the bumps themselves. The type of stress also mattered: while simply maintaining high temperatures for a period of time provided one sort of stress, power cycling the GPUs provided a different one entirely, one that eventually resulted in these bumps, and the GPU as a whole, failing.
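To put a rough number on the thermal expansion mismatch described above, here is a minimal Python sketch. It is not taken from any NVIDIA, ATI or Microsoft data: the expansion coefficients are ballpark textbook-style values that I am assuming for illustration (silicon at roughly 2.6 ppm/°C, an organic package substrate at roughly 17 ppm/°C), the function names are made up, and it treats the die and package as if they saw the same temperature swing, which ignores the fact that the die heats up faster.

```python
# Illustrative values only; none of these numbers come from the article.
CTE_SILICON_PPM = 2.6     # approximate expansion coefficient of silicon, ppm per deg C
CTE_SUBSTRATE_PPM = 17.0  # assumed value for an organic package substrate, ppm per deg C


def mismatch_strain(delta_t_c, cte_a_ppm=CTE_SILICON_PPM, cte_b_ppm=CTE_SUBSTRATE_PPM):
    """Return the expansion mismatch (dimensionless) between two materials
    that both go through the same temperature swing of delta_t_c degrees C."""
    return abs(cte_b_ppm - cte_a_ppm) * 1e-6 * delta_t_c


def bump_displacement_um(delta_t_c, distance_from_center_mm):
    """Return the relative displacement, in micrometers, that a bump located
    distance_from_center_mm from the die center would have to absorb."""
    return mismatch_strain(delta_t_c) * distance_from_center_mm * 1000.0


if __name__ == "__main__":
    # Hypothetical case: a 60 deg C swing and a bump 8 mm from the die center.
    print(f"mismatch strain: {mismatch_strain(60):.2e}")
    print(f"displacement:    {bump_displacement_um(60, 8):.2f} um")
```

The point of the sketch is only that the displacement grows with both the temperature swing and the distance from the die center, so bumps near the corners of a large, hot die take the most abuse every time the chip heats up and cools down.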

The GPU failures ended up being most pronounced in notebooks because of the usage model. With notebooks the number of times you turn them on and off in a day is much greater than with a desktop, which puts a unique type of thermal stress on the aforementioned solder bumps, causing the sorts of failures that plagued NVIDIA GPUs.

In 2005, ATI switched from high-lead bumps (90% lead, 10% tin) to eutectic bumps (37% lead, 63% tin). These eutectic bumps can't carry as much current as high-lead bumps and they have a lower melting point, but most importantly, they are not as rigid as high-lead bumps. So in those high stress situations caused by many power cycles, they don't crack, and thus you don't get the same GPU failure rates in notebooks as you do with NVIDIA hardware.

What does all of this have to do with the Xbox 360 and its RRoD problems? Although ATI made the switch to eutectic bumps with its GPUs in 2005, Microsoft was in charge of manufacturing the Xenos GPU and it was still built with high-lead bumps, just like the failed NVIDIA GPUs. Granted NVIDIA's GPUs back in 2005 and 2006 didn't have these problems, but the Microsoft Xenos design was a bit ahead of its time. It is possible, although difficult to prove given the lack of publicly available documentation, that a similar problem to what plagued NVIDIA's GPUs also plagued the Xbox 360's GPU.

If this is indeed true, then it would mean that the RRoD failures would be caused by the number of power cycles (number of times you turn the box on and off) and not just heat alone. It's a temperature and materials problem, one that (if true) would eventually affect all consoles. It would also mean that in order to solve the problem Microsoft would have to switch to eutectic bumps, similar to what ATI did back in 2005, which would require fairly major changes to the GPU in order to fix. ATI's eutectic designs actually required an additional metal layer, meaning a new spin of the silicon, something that would have to be reserved for a fairly major GPU change.
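If power cycles really are the driver, a common first-order way to think about it is a Coffin-Manson style fatigue relation, where the number of cycles a joint survives falls off as a power of the strain it sees per cycle. The Python sketch below is purely illustrative: the coefficient and exponent are placeholders I picked so the arithmetic is easy to follow, not measured values for any Xbox 360 or NVIDIA part, and the function names are hypothetical.

```python
def cycles_to_failure(strain_range, coefficient=1.0, exponent=2.0):
    """Coffin-Manson style estimate: N_f = coefficient * strain_range ** (-exponent).

    coefficient and exponent are placeholder values, not fitted to real solder data.
    """
    if strain_range <= 0:
        raise ValueError("strain_range must be positive")
    return coefficient * strain_range ** (-exponent)


def years_until_failure(cycles_available, power_cycles_per_day):
    """Convert a cycle budget into calendar years for a given usage pattern."""
    if power_cycles_per_day <= 0:
        raise ValueError("power_cycles_per_day must be positive")
    return cycles_available / (power_cycles_per_day * 365.0)


if __name__ == "__main__":
    budget = cycles_to_failure(0.01)  # hypothetical per-cycle strain
    for per_day in (1, 2, 6):
        print(f"{per_day} power cycles/day -> {years_until_failure(budget, per_day):.1f} years")
```

Two things fall out of a model like this, whatever the real constants are: a box that gets switched on and off more often burns through its cycle budget sooner, and a modest reduction in per-cycle strain (a cooler chip or a less rigid bump) buys a disproportionately large increase in life.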

With Falcon, the GPU definitely got smaller: the new die was around 85% the size of the old die. I surmised that the slight reduction in die size corresponded to either a further optimized GPU design (it's possible to get more area-efficient at the same process node) or a half-node shrink to 80nm; the latter seemed most likely. If Falcon truly only brought a move to 80nm, chances are that Microsoft didn't have enough time to truly re-work the design to include a move to eutectic bumps. They would most likely save that for the transition to 65nm.
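As a sanity check on the 80nm guess, here is a quick Python sketch of what an ideal shrink would do to die area. It assumes area scales with the square of the feature size, which is the textbook best case; real chips usually shrink less than that because parts of the die (I/O and analog blocks, for example) don't scale as well. The function name is just for illustration.

```python
def ideal_area_ratio(old_node_nm, new_node_nm):
    """Return new die area as a fraction of old die area, assuming every
    dimension scales linearly with the process node (an idealization)."""
    if old_node_nm <= 0 or new_node_nm <= 0:
        raise ValueError("node sizes must be positive")
    return (new_node_nm / old_node_nm) ** 2


if __name__ == "__main__":
    print(f"90nm -> 80nm: {ideal_area_ratio(90, 80):.0%} of the original area")
    print(f"90nm -> 65nm: {ideal_area_ratio(90, 65):.0%} of the original area")
```

An ideal 90nm to 80nm shrink lands at roughly 79% of the original area, so an observed die at around 85% is in the right neighborhood for a half-node shrink, and a long way from the roughly 52% you would expect from a full move to 65nm.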

Which brings us to Jasper today, a noticeably smaller GPU die thanks to the move to 65nm and a potentially complete fix to the dreaded RRoD. There are a lot of assumptions being made here and it's just as likely that none of this is correct, but given that Falcon and its glue-supported substrates didn't solve RRoD I'm wondering if part of the problem was actually not correctable without a significant redesign of the GPU, something I'm guessing had to happen with the move to 65nm anyways.

It took about a year for RRoD to really hit a critical mass with Xenon and it's only now been about a year for Falcon, so only time will tell if Jasper owners suffer the same fate. One thing is for sure, if it's a GPU design flaw, then Jasper was Microsoft's chance to correct it. And if it's a heat issue, Jasper should reduce the likelihood as well.

Who knows, after three years of production you may finally be able to buy an Xbox 360 that won't die on you.

84 Comments

strikeback03 - Wednesday, December 10, 2008 - link

In the US we would say the number 12.1 as "Twelve point one". In places where comma and period usage are switched, how would 12,1 be spoken?

Spoelie - Thursday, December 11, 2008 - link

12,1: "twaalf komma een"

just as you say "point", we say "comma"

strikeback03 - Thursday, December 11, 2008 - link

Interesting, thanks!

Spivonious - Wednesday, December 10, 2008 - link

Not European, but I believe it's "12 mark 1" or still "12 point 1" (i.e. in German it's "12 punkt 1", which translates to "12 point 1").

geogaddi - Wednesday, December 10, 2008 - link

No - Krauts write "12,1" and say "zwoelf komma eins".

Now comes the time to dance. Dieter, touch my monkey...

dasyentist - Wednesday, December 10, 2008 - link

"The Xbox 360 had a bit more graphics power than a Radeon X800 XT"

Maybe I'm wrong, but from the specs I've read it looks more like the core of an X1800/X1900...

JarredWalton - Wednesday, December 10, 2008 - link

The Xenos GPU is somewhere in between X800 and X1800. It has more in the way of X1800 hardware, but performance is a lot more like X800 I believe. Neither the Xbox 360 nor the PS3 launched with graphics performance that could match - let alone surpass - that of the then-current top PC GPUs (i.e. X1800 and 7800 GTX). But there is something to be said for having a static hardware target when making games.

bill3 - Thursday, December 11, 2008 - link

I was going to comment on this glaring inaccuracy in the article as well! See, I'm not the only one who spotted it.

Xenos doesn't match well to any specific ATI PC hardware of the time, being custom, but I feel fully confident in declaring it a good deal more powerful, in fact a generation ahead, of X800XT!

The simplest way to deduce this is to compare to PS3. The RSX in PS3 is simply a 500 MHz 7800GTX with a 128-bit memory bus and a few other minor modifications (such as larger texture caches so it can handle the larger latency from PS3's XDR memory pool). The 128-bit bus is not a huge handicap for many reasons (low 720p rendering resolution, the fact RSX can texture from XDR as well as GDDR for more bandwidth, etc.).

Now the 360 outputs roughly the same level of graphics as PS3 overall (while a few PS3 exclusives seem to look slightly better, OTOH most multiplatform games look/run better on the 360). So it's a necessity to assume Xenos is at least roughly as powerful as 7800GTX. Does X800XT fit that bill? No. In fact the 7800GTX and its same base specced but higher clocked brother, the 7900GTX, traded blows against the ATI X1800 and X1900 cards of the time. So it's much more reasonable to place the Xenos with the X1800/1900 class ATI cards. I believe tape out times would also support that. I believe Xenos taped out in the same time frame (late '04) as G70/R520.

You can also derive that Xenos > X800XT from more complex maneuvers such as execution resources (and probably die size as well, though I don't have that info). Xenos has 48 shader ALUs. That's comparable to the 7800GTX, which has 24 pipes with 2 ALUs each, for 48 (though it would also contain 6-8 vertex shader ALUs). And X800XT would have 16 pipes x 2 ALUs = 32 (plus 8? vertex shader ALUs). Now there are all sorts of caveats in comparing ALUs across different parts (if anything Xenos is probably a lot more efficient in utilizing its more plentiful ALUs than X800XT, due to its unified shading abilities, and its ALUs can crunch an extra component as well I believe, making the difference even greater), but nonetheless it probably tells us something.

Heck, Xenos even has more raw shader resources than X1800XT. X1800 had only 32 shader ALU's as well, albeit higher clocks. Xenos is probably somewhere between X1800 and X1900 if you ask me, right in that class.

More evidence of this is the games. I'm not familiar with Gears of War PC benchmarks, but I bet even at 720p an X800XT would be crushed by the game, let alone Gears 2 (even allowing for the greater optimization in consoles doesn't go far enough to negate this point imo). I also don't believe games that look as great as Rage, Resident Evil 5, etc. would run on an X800XT.

Anand Lal Shimpi - Thursday, December 11, 2008 - link

The Xenos GPU should have fallen in between the R420 and R520 in terms of performance. Remember that Xenos was ATI's first unified shader architecture GPU, so direct comparisons between it and the non-unified ATI architectures of the time aren't exactly the easiest to make.

We originally proposed that the Xenos GPU would perform similar to a 24-pipe R420, but you're correct in that it should be closer to the X1800 in performance. I will update the article to reflect that its performance falls in between both R420/520 but is closer to the 520.

Remember that one major advantage Xenos has is its 10MB eDRAM, which definitely helps in the effective bandwidth department - making rendering with AA at 720p much more possible than other high end PC architectures available at the time.

Even if you make the 7800 GTX comparison, we're still around 4x the speed of that with high end PC graphics today. G80 was 2x G70, and GT200 is 2x G80. By the end of next year we'll hopefully have something that is 2x GT200.

-A

john-ZX10R - Tuesday, April 20, 2010 - link

So my Elite got the one red light?? 16v. GPU failure... I went on a search to the game spots and got myself an Elite 12.1A unit. It's much quieter and the power brick is smaller. Seems good; I noticed by touching the top that it was a lot cooler after 10 hrs of games and Netflix. The disc tray seems a little sloppy when it ejects and goes back in, but I can live with it; it's the least of my worries. I just don't want to get the red ring again. I have had it for 2 days and it seems to be running well so far.

I bought a glass pedestal that keeps it about 4 feet off the ground and allows the needed airflow. I used the Intercooler (Nyko 3-fan system) on my old one and never had any issues, it was just loud. I didn't put it on my new unit, as people seem to think it robs power and makes it run hotter due to power usage?? I used to use the USB ports for charging my wireless mic and cell, but not with my new one. I'm going to try to keep the power usage to just what the Xbox needs, nothing more. Well, that's my 2 cents. Sarasota, Florida.