Jasper Is Here: A Look at the New Xbox 360

by Anand Lal Shimpi on December 10, 2008 12:00 AM EST

And I thought the reason people bought consoles was to avoid dealing with the hardware nitty-gritty.

| Xbox 360 Revision | CPU | GPU | eDRAM |
| --- | --- | --- | --- |
| Xenon/Zephyr | 90nm | 90nm | 90nm |
| Falcon/Opus | 65nm | 80nm | 80nm |
| Jasper | 65nm | 65nm | 80nm |

First, let's get the codenames right. The first Xbox 360 was released in 2005 and used a motherboard codenamed Xenon. The Xenon platform featured a 90nm Xenon CPU (clever naming there), a 90nm Xenos GPU and a 90nm eDRAM. Microsoft later added HDMI support to Xenon and called it Zephyr; the big three chips were still all 90nm designs.

From top to bottom: Jasper (Arcade so no chrome on the DVD drive), Xenon, Falcon, Xenon. Can you tell them apart? I'll show you how.

In 2007 the 2nd generation Xbox 360 came out, codenamed Falcon. Falcon featured a 65nm CPU, 80nm GPU and 80nm eDRAM. Falcon came with HDMI by default, but Microsoft eventually made a revision without HDMI called Opus (opus we built a console that fails a lot! sorry, couldn't resist).

Finally, after much speculation, the 3rd generation Xbox 360 started popping up in stores right around the holiday buying season and it's called Jasper. Jasper keeps the same 65nm CPU from Falcon/Opus, but shrinks the GPU down to 65nm as well. The eDRAM remains at 80nm.

When Xenon came out, we bought one and took it apart. We did the same for Falcon and, naturally, for Jasper. The stakes are a lot higher with Jasper, however. This may very well be the Xbox 360 to get: not only is it a lot cooler and cheaper for Microsoft to manufacture, but it may finally solve the 360's biggest issue to date.

A Cure for the Red Ring of Death?

The infamous Red Ring of Death (RRoD) has plagued Microsoft since the launch of the Xbox 360. The symptoms are pretty simple: you go to turn on your console and three of the four lights in a circle on your Xbox 360 turn red. I've personally had it happen to two consoles and every single one of my friends who has owned a 360 for longer than a year has had to send it in at least once. By no means is this the largest sample size, but it's a problem that impacts enough Xbox 360 owners for it to be a real issue.

While Microsoft has yet to publicly state the root cause of the problem, we finally have a Microsoft that's willing to admit that its consoles had an unacceptably high rate of failure in the field. The Microsoft solution was to extend all Xbox 360 warranties for the RRoD to 3 years, a solution that managed to help most users but not all.

No one ever got to the bottom of what caused the RRoD. Many suspected that it was the lead-free solder balls between the CPU and/or GPU and the motherboard losing contact. The clamps that Microsoft used to attach the heatsinks to the CPU and GPU put a lot of pressure on the chips; it's possible that the combination of the lead-free solder, a lot of heat from the GPU, inadequate cooling and the heatsink clamps resulted in the RRoD. The CPU and/or GPU would get very hot and the solder would either begin to melt or otherwise dislodge, resulting in a bad connection and an irrecoverable failure. That's where the infamous "towel trick" came into play: wrap your console in a towel so its internals heat up a lot and potentially reseat the misbehaving solder balls.

The glue between the GPU and the motherboard started appearing with the Falcon revision

With the Falcon revision Microsoft seemed to admit to this as being a problem by putting glue between the CPU/GPU and the motherboard itself, presumably to keep the chips in place should the solder weaken. We all suspected that Falcon might reduce the likelihood of the RRoD because shrinking the CPU down to 65nm and the GPU down to 80nm would reduce power consumption, thermal output and hopefully put less stress on the solder balls - if that was indeed the problem. Unfortunately with Falcon Microsoft didn't appear to eliminate RRoD, although anecdotally it seemed to have gotten better.

How About Some Wild Speculation?

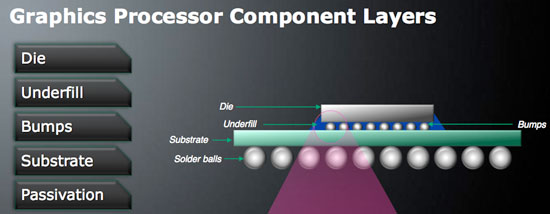

This year NVIDIA fell victim to its own set of GPU failures resulting in a smaller-scale replacement strategy than what Microsoft had to implement with the Xbox 360. The NVIDIA GPU problem was well documented by Charlie over at The Inquirer, but in short the issue here was the solder bumps between the GPU die and the GPU package substrate (whereas the problem I just finished explaining is between the GPU package substrate and the motherboard itself).

The anatomy of a GPU. The Falcon Xbox 360 addressed a failure in the solder balls, but perhaps the problem resides in the bumps between the die and substrate?

Traditionally GPUs used high-lead bumps between the GPU die and the chip package. These bumps can carry a lot of current but are quite rigid, and rigid materials tend to break in a high stress environment. Unlike the solder balls between the GPU package and a motherboard (or video card PCB), the solder bumps between a GPU die and the GPU package connect two different materials, each with its own rate of thermal expansion. The GPU die itself gets hotter much quicker than the GPU package, which puts additional stress on the bumps. The type of stress also matters: while simply maintaining high temperatures for a period of time provides one sort of stress, power cycling the GPU provides a different one entirely, one that eventually resulted in these bumps, and the GPU as a whole, failing.
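To put a rough number on that mismatch, a common first-order approximation says the shear strain on a bump grows with the difference in expansion coefficients, the temperature swing, and the bump's distance from the center of the die, divided by the bump height. The sketch below assumes the die and substrate sit at the same temperature and ignores underfill, so treat it as an upper-bound illustration only. The expansion coefficients are typical textbook figures for silicon and an organic substrate; the die size, bump height and temperature swing are made-up inputs, not measurements from any Xbox 360 or NVIDIA part, and the function name is hypothetical.

```python
def bump_shear_strain(cte_die_ppm, cte_substrate_ppm, delta_t_c,
                      dist_from_center_mm, bump_height_mm):
    """First-order shear strain on a solder bump from thermal expansion mismatch.

    Assumes uniform temperature across die and substrate and no underfill.
    CTE values are in ppm per degree C; lengths are in millimeters.
    """
    delta_alpha = (cte_substrate_ppm - cte_die_ppm) * 1e-6
    return delta_alpha * delta_t_c * dist_from_center_mm / bump_height_mm


# Illustrative inputs only (all assumptions, see the note above):
strain = bump_shear_strain(
    cte_die_ppm=2.6,           # silicon, typical textbook value
    cte_substrate_ppm=17.0,    # organic package substrate, typical value
    delta_t_c=60.0,            # assumed swing between "off" and "under load"
    dist_from_center_mm=7.0,   # assumed corner bump on a hypothetical die
    bump_height_mm=0.1,        # assumed bump standoff height
)
print(f"Shear strain per power cycle: {strain:.1%}")
```

Every power cycle imposes that strain once in each direction, which is why the number of on/off cycles matters and not just the peak temperature.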

The GPU failures ended up being most pronounced in notebooks because of the usage model. You turn a notebook on and off far more times a day than you do a desktop, which puts a unique type of thermal stress on the aforementioned solder bumps, causing the sorts of failures that plagued NVIDIA GPUs.

In 2005, ATI switched from high-lead bumps (90% lead, 10% tin) to eutectic bumps (37% lead, 63% tin). These eutectic bumps can't carry as much current as high-lead bumps and they have a lower melting point but, most importantly, they are not as rigid as high-lead bumps. So in those high stress situations caused by many power cycles, they don't crack, and thus you don't get the same GPU failure rates in notebooks as you do with NVIDIA hardware.

What does all of this have to do with the Xbox 360 and its RRoD problems? Although ATI made the switch to eutectic bumps with its GPUs in 2005, Microsoft was in charge of manufacturing the Xenos GPU and it was still built with high-lead bumps, just like the failed NVIDIA GPUs. Granted NVIDIA's GPUs back in 2005 and 2006 didn't have these problems, but the Microsoft Xenos design was a bit ahead of its time. It is possible, although difficult to prove given the lack of publicly available documentation, that a similar problem to what plagued NVIDIA's GPUs also plagued the Xbox 360's GPU.

If this is indeed true, then it would mean that the RRoD failures would be caused by the number of power cycles (number of times you turn the box on and off) and not just heat alone. It's a temperature and materials problem, one that (if true) would eventually affect all consoles. It would also mean that in order to solve the problem Microsoft would have to switch to eutectic bumps, similar to what ATI did back in 2005, which would require fairly major changes to the GPU. ATI's eutectic designs actually required an additional metal layer, meaning a new spin of the silicon, something that would have to be reserved for a fairly major GPU change.

With Falcon, the GPU definitely got smaller: the new die was around 85% the size of the old die. I surmised that the slight reduction in die size corresponded to either a further optimized GPU design (it's possible to get more area-efficient at the same process node) or a half-node shrink to 80nm; the latter seemed most likely. If Falcon truly only brought a move to 80nm, chances are that Microsoft didn't have enough time to truly re-work the design to include a move to eutectic bumps. They would most likely save that for the transition to 65nm.
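As a rough sanity check on the half-node guess, ideal die area scales with the square of the feature size. The sketch below assumes perfect scaling, which real chips never quite hit (pads, I/O and analog blocks don't shrink cleanly), and the function name is purely illustrative.

```python
def ideal_area_ratio(old_nm: float, new_nm: float) -> float:
    """Ideal die-area ratio after a shrink, assuming every dimension scales linearly."""
    return (new_nm / old_nm) ** 2


# The three process transitions discussed for the Xbox 360 GPU.
for old_nm, new_nm in [(90, 80), (90, 65), (80, 65)]:
    ratio = ideal_area_ratio(old_nm, new_nm)
    print(f"{old_nm}nm -> {new_nm}nm: ideal area is {ratio:.0%} of the original")
```

A 90nm to 80nm shrink works out to roughly 79% in the ideal case, which is in the same neighborhood as the roughly 85% observed for Falcon's GPU once you allow for the parts of a chip that don't scale.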

Which brings us to Jasper today, a noticeably smaller GPU die thanks to the move to 65nm and a potentially complete fix to the dreaded RRoD. There are a lot of assumptions being made here and it's just as likely that none of this is correct, but given that Falcon and its glue-supported substrates didn't solve RRoD I'm wondering if part of the problem was actually not correctable without a significant redesign of the GPU, something I'm guessing had to happen with the move to 65nm anyways.

It took about a year for RRoD to really hit a critical mass with Xenon and it's only now been about a year for Falcon, so only time will tell if Jasper owners suffer the same fate. One thing is for sure, if it's a GPU design flaw, then Jasper was Microsoft's chance to correct it. And if it's a heat issue, Jasper should reduce the likelihood as well.

Who knows, after three years of production you may finally be able to buy an Xbox 360 that won't die on you.

84 Comments

kilkennycat - Wednesday, December 10, 2008

Yep, and for the latest classic example, consider the PC port of GTA4. This port holds the all-time (so far) rotten-banana-prize for the worst console to PC port of a major video-game. Besides the DRM and gross game-play/graphics bugs, the game REQUIRES at least 3 CPU-cores for optimum performance. Code obviously ported over from the 3-core Xbox360 version, with zero optimization for a fewer number of far more capable PC CPU cores. The cartoon-type graphics puts little stress on the GPU. Hopefully, Anandtech in one of the occasional PC game-related articles will lacerate Rockstar and Take Two for this lazily-awful port to the PC.

seriouscat - Wednesday, December 10, 2008

Comon AT! Where are the temperature benchmarks? This was the single most interesting test I was looking forward to after all these months and what do I read? Nothing!

Pirks - Wednesday, December 10, 2008

Otherwise he wouldn't write "I'm actually a bit surprised that we haven't seen more focus on delivering incredible visuals on PC games given the existing performance gap" because the answer to that has been printed in media many times, and here it is posted on DailyTech this morning: http://www.dailytech.com/article.aspx?newsid=13648

See Anand, it's really easy to make you stop feeling surprised. You won't ever now, will ya? Just remember this P-word, always remember it.

Gunbuster - Wednesday, December 10, 2008

"Most lead-free replacements for conventional Sn60/Pb40 and Sn63/Pb37 solder have melting points from 5–20 °C higher"

You need to back up your facts in this one boys.

Staples - Wednesday, December 10, 2008

I have yet to see someone hook up a Jasper to a current meter to test out how much power the darn thing draws.

I hope this does cure the RROD because my launch system (Xenon) and the Falcon I bought a year ago both have gone bad. The Falcon used much less power but if the GPU was the real cause of the RROD like many speculate, then hopefully this die shrink takes care of it.

And for all of those who do not know which version you have, do what I do. I have never once looked into the console with a flashlight. I have a Kill A Watt meter, and comparing Anand's numbers to your own will narrow down the generation of Xbox you have.

ss284 - Wednesday, December 10, 2008

Power(W) = current(A) * voltage(V).

I'm assuming you can do the math. The killawatt is in essence a volt/current meter.

sprockkets - Wednesday, December 10, 2008

Close. Watts is energy. Watts over time is power, or kWh.

ahmshaegar - Wednesday, December 10, 2008

Wow. Can't believe I just saw someone post that.

The watt is definitely a unit of power. Power is the rate at which you use energy. 1 W = 1 J/s. So the kWh is actually a unit of energy, since you multiply the watt with the hour (a unit of time), which is very odd* if you think about it (1 W = 1 J/s, so 1 kWh is 1000 Wh, or 1000 (J/s)h).

Because 1 hour contains 3600 seconds, 1 kWh is 1000 joules per second multiplied by 1 hour multiplied by 3600 seconds per hour (this last term converts the 1 hour to seconds, so I can cancel out the seconds.)

You then get 1 kWh = 3600000 J, proving that the kWh is indeed a unit of energy.

*It's one of those odd units if you just think about it, but very useful for the utilities.

adhoc - Wednesday, December 10, 2008

I don't understand the comment about lead-free solder melting at high temperatures...

Lead-free solder actually has a HIGHER melting point than leaded solder. Instead of worrying about solder melting at "high temperatures" from chip heat dissipation, I'd be more worried about PCB and component reliabilities due to the initial soldering process. PCB laminates that aren't suited for lead-free/RoHS elevated temperatures can warp and/or delaminate, creating immediate or possibly latent failures.

Aside from the PCB materials, components need to be characterized for the higher temperatures during the reflow/wave processes. Ceramic capacitors come to mind as a specific issue; the ceramic can crack under high temperatures (especially temperature gradients during hand-soldering), which can eventually create an open circuit, or worse even a short between power planes.

In the end, I'm just dubious of the explanation of lead-free solder as the failure mode. On the other hand, it may very well be related to the required higher temperatures during assembly (and thus bad PCBs and/or component failures).

The0ne - Wednesday, December 10, 2008

Some designers don't account for the higher temperatures when they design PCBs. Actually there's quite a lot of them around. That and mixing leaded and lead-free parts, where SMT has a much harder time processing them. In such cases, they end up separating the process into lead, lead-free and sometimes even hand solder because of the particular design. Then you have designs that don't take into consideration the distances between components or, more specifically, between vias. With very fine pitches this can become a nightmare for SMT.