ATI Radeon HD 4350 and 4550: Great HTPC Solutions

by Derek Wilson on September 30, 2008 12:45 AM EST - Posted in GPUs

The Benefits Over Integrated Graphics

For our comparison to integrated graphics, we looked at two games: Crysis and Oblivion. These games tend to cover the spectrum fairly well from DX9 to DX10, and they tell the same story: integrated graphics suck.

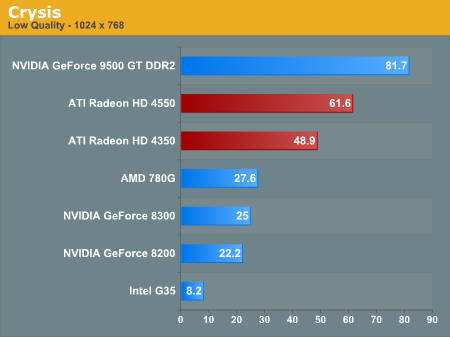

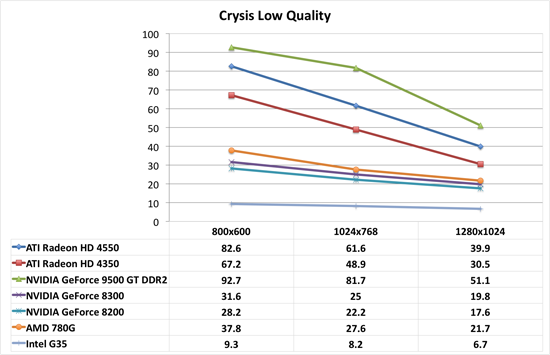

First up is Crysis. For integrated graphics, we needed to test everything at the absolute lowest setting, and even that was painful. It is too bad we couldn't test 640x480, as that might have given some of this hardware a chance at playability. But with the tests we did run, none of our integrated solutions were really playable at 1024x768, and only the AMD 780G did anything useful at 800x600. By contrast, the 4350 and the 4550 were both very playable at this very low quality setting. Pushing up to 1280x1024 was less successful, but the 4550 still hung on to playable framerates at that resolution.

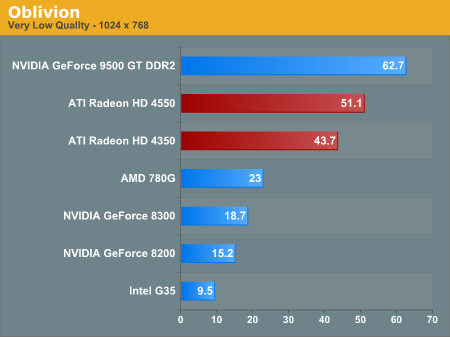

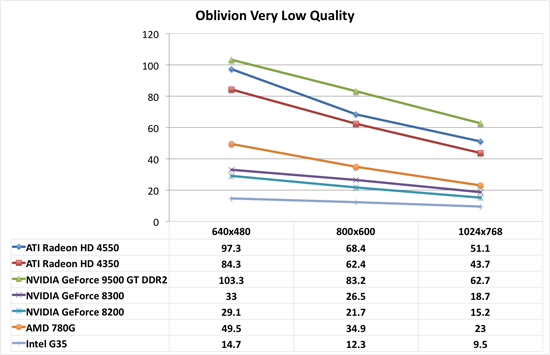

As for Oblivion, we see nearly the same behavior as with Crysis. There is a huge performance gap between integrated graphics and even the lowest end of the add-in cards we are testing today. And Oblivion looks insanely ugly at these settings. We would never recommend playing at such low settings; it's a horrendous experience.

As usual, Intel's integrated graphics are the biggest joke of the bunch. But that's not any sort of feather in AMD's or NVIDIA's cap here: Intel's G35 is just really horrible hardware for 3D.

So, with the benefit over integrated graphics well established, how do these parts compare to the slightly higher price bracket right next door? Let's take a look at how they compare to NVIDIA's 9500 GT DDR2 and AMD's 4670.

55 Comments


Basilisk - Tuesday, September 30, 2008

"The Radeon HD 4350 is an even cheaper alternative to adding 8-channel LPCM output and ...". Please enlighten me: how is 8-channel possible on a card without HDMI? Are they using magic? Or is there a way to extract it without HDMI? Or is the card they showed in the photo an example of a 4350 that's too cheap to offer 8-channel? Or... quite possibly I missed the obvious, but I didn't find any 4350s on the ATI site to double-check this. Or perhaps this review had a bit too much sales blurb and too little testing? I agree with others who feel that if you're going to hype 8-channel and HTPC, you ought to be performing quantitative/qualitative tests.

Veerappan - Tuesday, September 30, 2008

As Natfly mentioned, they use an adapter to transform one of the DVI ports into HDMI (with some of the DVI pins carrying audio data). It's probably the same adapter that came in the box of my 4850.

Basilisk - Tuesday, September 30, 2008

Oh! Then... it's not a DVI-D dual-port card, despite the use of that connector?! Or do they diddle a non-data pin (like +5V for monitor standby) to permit both DVI-D/dp and audio? 'Spose that's too much out of an inexpensive card... Thanks for the info!

Zoomer - Tuesday, September 30, 2008

If they are DVI-D, the DVI-A pins are available for use. If not, there are always unused pins, extra ground pins, etc.

Natfly - Tuesday, September 30, 2008

They send the audio over DVI; an adapter from ATI turns the DVI input into an HDMI output with video + audio. I assume the retail packaging would ship with the adapter, e.g.:

http://www.newegg.com/Product/Product.aspx?Item=N8...

toyota - Tuesday, September 30, 2008

As usual, you have some wrong numbers in the charts: the 4650/4670 have 32 texture units, not 16. What's strange is that you actually corrected it in the 4670 review, only to make the mistake again in these charts.

vlado08 - Tuesday, September 30, 2008

I would also like a comparison of video quality between NVIDIA, ATI, and Intel, plus more explanation of the video processing: what do these specs mean, and is it possible to turn them off?

- Color space conversion
- Chroma subsampling format conversion
- Advanced vector adaptive per-pixel de-interlacing
- De-blocking and noise reduction filtering
- Detail enhancement
- Inverse telecine (2:2 and 3:2 pull-down correction)
- Bad edit correction
- Automatic dynamic contrast adjustment
- Full 30-bit display processing
- Programmable piecewise linear gamma correction, color correction, and color space conversion
- Spatial/temporal dithering provides 30-bit color quality on 24-bit and 18-bit displays
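The last item on that list, dithering, is easy to misread. As a rough illustration only (the article does not describe AMD's actual algorithm), temporal dithering can approximate a 10-bit level on an 8-bit panel by alternating between two adjacent 8-bit levels over several frames so that the average matches. The function name `temporal_dither` and the four-frame cycle are assumptions made for this sketch.

```python
def temporal_dither(value_10bit: int, frames: int = 4) -> list[int]:
    """Approximate one 10-bit level on an 8-bit output by varying it over frames.

    Hypothetical illustration: each 8-bit step spans four 10-bit steps, so the
    remainder decides in how many of every four frames the level is bumped up.
    """
    if not 0 <= value_10bit <= 1023:
        raise ValueError("expected a 10-bit value in 0..1023")
    base, remainder = divmod(value_10bit, 4)
    levels = []
    for frame in range(frames):
        bump = 1 if frame % 4 < remainder else 0
        # Clamp so the top 10-bit codes never exceed the 8-bit maximum.
        levels.append(min(base + bump, 255))
    return levels


print(temporal_dither(514))  # [129, 129, 128, 128]
```

Averaged over the four frames the output is 128.5, which is 514/4. Spatial dithering applies the same idea across neighboring pixels instead of across frames.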

Is it possible to select the video output range 16-235 vs 0-255 manually?
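For reference, the 16-235 ("limited" or video) and 0-255 ("full" or PC) ranges differ only by a linear scale on 8-bit luma. A minimal sketch of the mapping, assuming 8-bit luma only (chroma uses 16-240 and is not handled) and hypothetical function names; it says nothing about what the ATI, NVIDIA, or Intel drivers actually expose:

```python
def limited_to_full(y: int) -> int:
    """Expand 8-bit limited-range luma (16-235) to full range (0-255)."""
    scaled = round((y - 16) * 255 / 219)
    # Codes below 16 or above 235 fall outside the nominal range and are clipped.
    return max(0, min(255, scaled))


def full_to_limited(y: int) -> int:
    """Compress 8-bit full-range luma (0-255) into limited range (16-235)."""
    if not 0 <= y <= 255:
        raise ValueError("expected an 8-bit value in 0..255")
    return round(16 + y * 219 / 255)


print(limited_to_full(16), limited_to_full(235))  # 0 255
print(full_to_limited(0), full_to_limited(255))   # 16 235
```

If the player expands to full range while the display expects limited range (or vice versa), blacks end up crushed or washed out, which is why a manual override matters.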

I expect there will be a more in-depth article on HTPCs, and maybe there you will explain what we should pay attention to.

vlado08 - Tuesday, September 30, 2008

Just to add: give us a screenshot comparison of the driver settings pages for ATI, NVIDIA, and Intel.

I want to know what settings are possible with Clear Video vs Avivo HD vs Purevideo HD.

Also, how do we select between BT.601 and BT.709 colors?
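BT.601 and BT.709 use different luma coefficients, so decoding a video with the wrong matrix shifts its colors. A minimal sketch with normalized R'G'B' in [0, 1] and Cb/Cr in [-0.5, 0.5], ignoring range scaling and quantization; the function names are hypothetical:

```python
# (Kr, Kb) luma coefficients from ITU-R BT.601 and BT.709; Kg = 1 - Kr - Kb.
COEFFS = {"BT.601": (0.299, 0.114), "BT.709": (0.2126, 0.0722)}


def rgb_to_ycbcr(r: float, g: float, b: float, standard: str):
    """Encode normalized R'G'B' to Y'CbCr with the given matrix."""
    kr, kb = COEFFS[standard]
    kg = 1.0 - kr - kb
    y = kr * r + kg * g + kb * b
    cb = (b - y) / (2.0 * (1.0 - kb))
    cr = (r - y) / (2.0 * (1.0 - kr))
    return y, cb, cr


def ycbcr_to_rgb(y: float, cb: float, cr: float, standard: str):
    """Decode Y'CbCr back to normalized R'G'B' with the given matrix."""
    kr, kb = COEFFS[standard]
    kg = 1.0 - kr - kb
    r = y + 2.0 * (1.0 - kr) * cr
    b = y + 2.0 * (1.0 - kb) * cb
    g = (y - kr * r - kb * b) / kg
    return r, g, b


ycc = rgb_to_ycbcr(1.0, 0.0, 0.0, "BT.709")  # pure red, HD-style encoding
print(ycbcr_to_rgb(*ycc, "BT.709"))  # matching matrix: round-trips to pure red
print(ycbcr_to_rgb(*ycc, "BT.601"))  # wrong matrix: red drops, green goes negative
```

Out-of-range components are clipped on output, so the mismatch shows up as a visible color shift rather than an error.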

pfroo40 - Tuesday, September 30, 2008

I would have appreciated it if they had included a video quality comparison for this new crop of HTPC cards. I made the mistake of buying a cheap 3450 for Blu-ray, which accelerates fine but has low image quality. It'd be useful for my next purchase if I had more to base a comparison on. Otherwise, so far it looks like the passively cooled 4550 would be a solid upgrade.

Dribble - Tuesday, September 30, 2008

I agree - it's not a good HTPC solution if it doesn't give you the same playback quality as a high end card. You didn't test that so you can't really make a judgement, and hence have no basis for saying it is.