Intel's Larrabee Architecture Disclosure: A Calculated First Move

by Anand Lal Shimpi & Derek Wilson on August 4, 2008 12:00 AM EST - Posted in GPUs

The Future of Larrabee: The Many Core Era

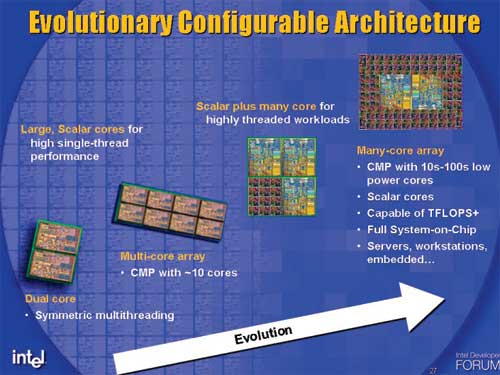

I keep going back to this slide because it really tells us where Intel sees its architectures going:

Today we're in the era of the multi-core array. Next year, Nehalem will bring us 8 cores on a single chip, and it's conceivable that we'll see 10- and 12-core versions in the two years following it. Larrabee isn't actually on this chart; it remains separate until we hit the heterogeneous multi-threaded cores (the last two items on the evolutionary path).

It looks like future Intel desktop chips will be a mixture of these large Nehalem-like cores surrounded by tons of little Larrabee-like cores. Your future CPUs will be capable of handling whatever is thrown at them, whether that is traditional single-threaded desktop applications, 3D games, physics or other highly parallelizable workloads. It also paints an interesting picture of the future: with proper OS support, all you'd need for a gaming system would be a single Larrabee; you wouldn't need a traditional x86 CPU.

This future is a long way off, but just as Pentium M eventually evolved into the basis of Intel's desktop microprocessors today, keep an eye on Larrabee, because in 5 years it could be the basis of what you run everything on.

Changing the Way GPUs Are Launched?

Here's an interesting thought. By the time Larrabee rolls out in 2009/2010, Intel's 45nm process will have reached maturity. It's very possible that Intel could launch Larrabee much like it does its CPUs, with many SKUs covering a broad range of market segments. Intel could decide to launch Larrabee parts priced from $199 all the way up to $999, instead of the more traditional launch of a single GPU (perhaps with two SKUs) followed by months of waiting before the technology trickles down to the mainstream.

Intel could take the GPU industry by storm and get Larrabee out into the wild quicker if it launched top to bottom, akin to how its CPU introductions work.

101 Comments

Shinei - Monday, August 4, 2008 - link

Some competition might do nVidia good--if Larrabee manages to outperform nVidia, you know nVidia will go berserk and release another hammer like the NV40 after R3x0 spanked them for a year. Maybe we'll start seeing those price/performance gains we've been spoiled with until ATI/AMD decided to stop being competitive.

Overall, this can only mean good things, even if Larrabee itself ultimately fails.

Griswold - Monday, August 4, 2008 - link

Wake-up call, dumbo. AMD just started to mop the floor with nVidia's products as far as price/performance goes.

watersb - Monday, August 4, 2008 - link

Great article!

You compare the Larrabee to a Core 2 Duo. For SIMD instructions, you multiplied by a (hypothetical) 10 cores to show Larrabee at 160 SIMD instructions per clock (IPC). But you show non-vector IPC as 2.

For a 10-core Larrabee, shouldn't that be x10 as well, for 20 scalar IPC?

Adamv1 - Monday, August 4, 2008 - link

I know Intel has been working on ray tracing and I'm really curious how this is going to fit into the picture. From what I remember, ray tracing is highly parallel and scales quite well with more cores, and they were talking about introducing it on 8-core processors. It seems to me this would be a great platform to try it on.

SuperGee - Thursday, August 7, 2008 - link

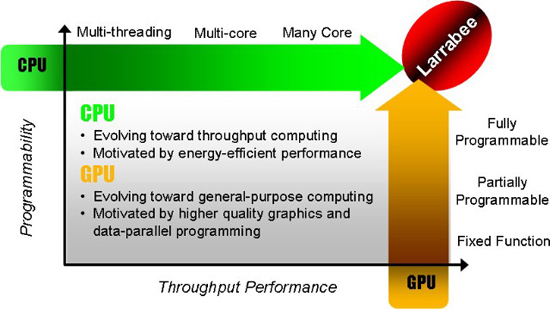

How it fits: GPUs from ATI and nV are called hardware renderers. They still have a lot of fixed function: ROPs, TMUs, blenders, rasterizers, etc. Unified shaders are evolving to become more general purpose, but they aren't fully GP.

Larrabee is an exotic, massively multi-core x86 chip. It will act just like a multi-core CPU, but optimised for GPU tasks and deployed as a GPU.

So Intel uses a software renderer and will first emulate DirectX/OpenGL on it with its drivers.

nV and ATI are more HAL, with HEL as a backup, whereas Larrabee is pure HEL. But its parallel power will boost the software method, as it is just like a large bunch of x86 cores.

With Larrabee, HEL will run fast, as if it were 'HAL', because the software computing power for such tasks is available with it.

What this means is that as a GFX engine developer you get full freedom if you are going to use Larrabee directly.

As they say, first with a DirectX/OpenGL driver. Later also with a CPU driver, where it can easily be targeted directly, like a GPGPU task. But Larrabee could also pop up as extra cores in Windows.

Because whatever you do is a software solution, you can make a software renderer based on ray tracing, and a voxel engine could be done too. This software renderer will be accelerated by the Larrabee massively multi-core CPU, which can also do GPU stuff very well, and it will boost any software renderer. Of course, it must be fully optimised for Larrabee to get the most out of it, using those vector units and the x86 Larrabee extensions.

Novalogic could use this too, for their voxel game engine from back in the days of the PIII.

It could accelerate any software renderer which depends heavily on parallel computing.

icrf - Monday, August 4, 2008 - link

Since I don't play many games anymore, that aspect of Larrabee doesn't interest me any more than making economies of scale so I can buy one cheap. I'm very interested in seeing how well something like POV-Ray or an H.264 encoder can be implemented, and what kind of speed increase it'd see. Sure, these things could be implemented on current GPUs through CUDA/CTM, but that's such a different kind of task, it's not at all quick or easy. If it's significantly simpler, we'd actually see software that supports it sooner.

cyberserf - Monday, August 4, 2008 - link

one word: MATROX

Guuts - Monday, August 4, 2008 - link

You're going to have to use more than one word, sorry... I have no idea what in this article has anything to do with Matrox.

phaxmohdem - Monday, August 4, 2008 - link

What, you mean you DON'T have a Parhelia card in your PC? WTF is wrong with you?

TonyB - Monday, August 4, 2008 - link

but can it play crysis?!