The Radeon HD 4850 & 4870: AMD Wins at $199 and $299

by Anand Lal Shimpi & Derek Wilson on June 25, 2008 12:00 AM EST. Posted in GPUs

Wrapping Up the Architecture and Efficiency Discussion

Engineering is all about tradeoffs and balance. The choice to increase capability in one area may decrease capability in another. The addition of a feature may not be worth the cost of including it. In the worst case, as Intel found with NetBurst, an architecture may be inherently flawed, and starting over down an entirely different path might be the best solution.

We are at a point where there are quite a number of similarities between NVIDIA and AMD hardware. Both require keeping a huge number of threads in flight to hide memory and instruction latency. Both manage threads in large blocks that share context. Caching, coalescing of memory reads and writes, and resource allocation all need to be carefully managed in order to keep the execution units fed. Both GT200 and RV770 execute branches by dynamically predicating the direction a thread does not take (meaning that if a thread in a warp or wavefront branches differently from the others, all threads in that group must execute both code paths). Both share instruction and constant caches across hardware that is SIMD in nature, servicing multiple threads in one context in order to effect hardware that fits the SPMD (single program multiple data) programming model.
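The cost of that predication can be illustrated with a toy model. This is only a sketch and does not come from either vendor's documentation: the function name `group_cycles` and the cycle costs are hypothetical, and it assumes a group simply pays for every code path that at least one of its threads takes.

```python
def group_cycles(takes_branch, then_cost, else_cost):
    """Cycles one warp/wavefront spends on an if/else under predication.

    takes_branch: one boolean per thread in the group.
    then_cost / else_cost: hypothetical cycle cost of each code path.
    """
    cycles = 0
    if any(takes_branch):        # at least one thread needs the "then" path
        cycles += then_cost
    if not all(takes_branch):    # at least one thread needs the "else" path
        cycles += else_cost
    return cycles


# Illustrative 32-thread group; the costs are made-up numbers.
uniform = [True] * 32
divergent = [True] * 31 + [False]

print(group_cycles(uniform, then_cost=10, else_cost=40))    # only "then" runs
print(group_cycles(divergent, then_cost=10, else_cost=40))  # both paths run
```

In this model a single stray thread makes the whole group pay for the expensive path, which is why branch coherence within a warp or wavefront matters on both architectures.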

But the hearts of GT200 and RV770, the SPA (Streaming Processor Array) and the DPP (Data Parallel Processing) Array, respectively, are quite different. The explicitly scalar, one-operation-per-thread-at-a-time approach that NVIDIA has taken is quite different from the 5-wide VLIW approach AMD has packed into its architecture. Both of them are SIMD in nature, but NVIDIA is more like S(operation)MD and AMD is S(VLIW)MD.

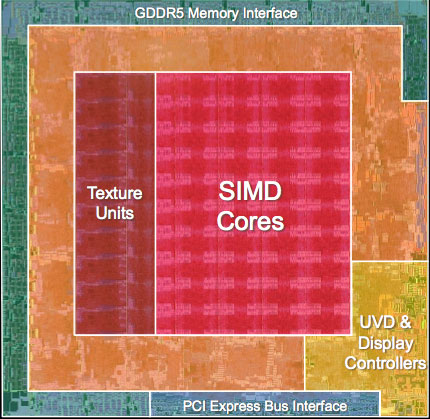

AMD's RV770, all built up and pretty

Filling the execution units of each to capacity is a challenge, but it looks to be more consistent on NVIDIA hardware, while in the cases where AMD hardware is used effectively (like Bioshock) we see that RV770 surpasses GTX 280 not only in performance but in power efficiency as well. Area efficiency is completely owned by AMD, which means that its cost for performance delivered is lower than NVIDIA's (in terms of manufacturing -- R&D is a whole other story), since smaller ICs mean parts that are cheaper to produce.

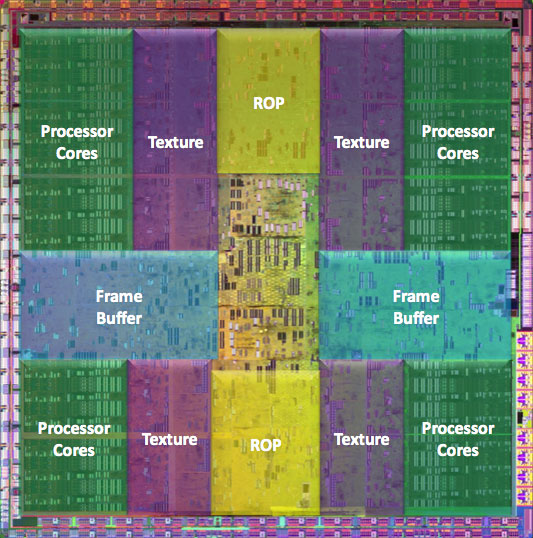

NVIDIA's GT200, in all its daunting glory

While shader/kernel length isn't as important on GT200 (except that the ratio of FP and especially multiply-add operations to other code needs to be high to extract high levels of performance), longer programs are easier for AMD's compiler to extract ILP from. Both RV770 and GT200 must balance thread issue with resource usage, but RV770 can deliver higher performance in situations where ILP can be extracted from shader/kernel code, which could also help in situations where the GT200 would not be able to hide latency well.
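To make the ILP point concrete, here is a minimal sketch of greedy bundle packing for a 5-wide VLIW unit. It is not AMD's compiler algorithm; `pack_bundles` and the operation names are hypothetical, and the model ignores any real-world limits on which operations each slot can execute.

```python
def pack_bundles(deps, width=5):
    """Greedily pack operations into VLIW bundles.

    deps maps each op name to the set of ops whose results it needs.
    An op may issue only after all of its inputs were issued in an
    earlier bundle, and at most `width` ops fit in one bundle.
    """
    done = set()
    remaining = list(deps)  # preserves program order
    bundles = []
    while remaining:
        bundle = [op for op in remaining if deps[op] <= done][:width]
        if not bundle:
            raise ValueError("cyclic dependency between operations")
        bundles.append(bundle)
        done.update(bundle)
        remaining = [op for op in remaining if op not in bundle]
    return bundles


# Five independent ops fill a single bundle.
independent = {f"mul{i}": set() for i in range(5)}
# Five ops that each depend on the previous one need five bundles.
chain = {"a": set(), "b": {"a"}, "c": {"b"}, "d": {"c"}, "e": {"d"}}

print(len(pack_bundles(independent)))
print(len(pack_bundles(chain)))
```

The independent case keeps all five slots busy, while the dependent chain leaves four of the five slots idle in every bundle. Code with more independent work gives a packer like this more candidates to choose from.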

We believe, based on information found on the CUDA forums and from some of our readers, that G80's SPs have about a 22-stage pipeline and that GT200 is also likely deeply pipelined. AMD has told us that their pipeline is significantly shorter than this, but they wouldn't tell us how long it actually is. Regardless, a shorter pipeline and the ability to execute one wavefront over multiple scheduling cycles mean that massive amounts of TLP aren't needed just to cover instruction latency. Yes, massive amounts of TLP are needed to cover memory latency, but shader programs with lots of internal compute can also help to do this on RV770.
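A back-of-the-envelope sketch shows why pipeline depth and issue time matter here. Everything in it is an assumption for illustration: the helper name `groups_to_hide_latency`, the 4 cycles a group is assumed to occupy the issue slot, the 8-cycle "shorter" pipeline, and the 400-cycle memory latency. Only the roughly 22-stage figure comes from the estimate above.

```python
import math


def groups_to_hide_latency(latency_cycles, issue_cycles_per_group):
    """Independent thread groups needed so the issue slot never idles.

    While one group waits `latency_cycles` for its result, other groups
    must fill the gap, each occupying the issue slot for
    `issue_cycles_per_group` cycles.
    """
    return math.ceil(latency_cycles / issue_cycles_per_group)


# Rumoured ~22-stage pipeline, assumed 4 issue cycles per group.
print(groups_to_hide_latency(22, 4))
# Hypothetical shorter pipeline of 8 stages, same issue time.
print(groups_to_hide_latency(8, 4))
# Hypothetical 400-cycle memory access, same issue time.
print(groups_to_hide_latency(400, 4))
```

Under these assumed numbers, covering instruction latency takes only a handful of groups, and fewer still with a short pipeline, while covering memory latency takes far more unless each group has enough compute of its own to do between fetches.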

All of this adds up to the fact that, despite the advent of DX10 and the fact that both of these architectures are very good at executing large numbers of independent threads very quickly, getting the most out of GT200 and RV770 requires vastly different approaches in some cases. Long shaders can benefit RV770 due to the increased ILP that can be extracted, while the increased resource use of long shaders may mean fewer threads can be issued on GT200, lowering performance. Of course, going the other direction would have the opposite effect. Caches and resource availability/management are different, meaning that tradeoffs and choices must be made in when and how data is fetched and used. Fixed function resources are different as well, and optimizing the usage of things like texture filters, along with the impact of the different setup engines, can have a large (and architecture-dependent) impact on performance.
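The resource-use tradeoff can be sketched with simple arithmetic. The function `threads_in_flight` and every number below are illustrative assumptions rather than measured figures, and real hardware allocates registers at a coarser granularity than this model does.

```python
def threads_in_flight(register_file_entries, registers_per_thread, thread_limit):
    """Threads that fit at once, limited by registers or by the scheduler."""
    by_registers = register_file_entries // registers_per_thread
    return min(thread_limit, by_registers)


# Illustrative figures: 16384 registers and a 1024-thread limit per core.
short_shader = threads_in_flight(16384, registers_per_thread=10, thread_limit=1024)
long_shader = threads_in_flight(16384, registers_per_thread=40, thread_limit=1024)

print(short_shader)  # limited by the thread limit
print(long_shader)   # limited by register pressure
```

In this sketch the register-hungry shader leaves well under half as many threads available to hide latency, which is the effect described above for long shaders on a design that leans on thread-level parallelism.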

We still haven't gotten to the point where we can write simple shader code that just does what we want it to do and expect it to perform perfectly everywhere. Right now it seems like typical usage models favor GT200, while relative performance can vary wildly on RV770 depending on how well the code fits the hardware. G80 (and thus NVIDIA's architecture) did have a lead in the industry for months before R600 hit the scene, and it wasn't until RV670 that AMD had a real competitor in the marketplace. This could be part of the reason we are seeing fewer titles benefiting from the massive amount of compute available on AMD hardware. But with this launch, AMD has solidified their place in the market (as we will see, the 4800 series offers a lot of value), and it will be very interesting to see what happens going forward.

215 Comments

Final Destination II - Wednesday, June 25, 2008 - link

Dear girls and guys, does anyone know of a manufacturer who offers an HD4850 with a better cooler? I'm desperately searching for one...

Please reply!

Graven Image - Wednesday, June 25, 2008 - link

Asus recently announced a 4850 with a non-stock cooler, though their version still doesn't expel the air out the back like a dual slot design (http://www.asus.com/news_show.aspx?id=11871). It's not available yet, though. My guess is mid-July we'll probably start seeing a couple different fan and heatsink designs.

strikeback03 - Thursday, June 26, 2008 - link

Only dual-slot card I've ever used was an EVGA 8800GTS 640, it sucked air in the back and blew it into the case.

Final Destination II - Wednesday, June 25, 2008 - link

Nice! 7°C cooler, that's a start! I guess I'll wait a bit more, then.

Spacecomber - Wednesday, June 25, 2008 - link

Although I'm somewhat dubious about dual card solutions, I keep looking at the benchmarks and then at the prices for a couple of 8800 GTs.

Perhaps, if the 4870 forces Nvidia to reduce their prices for the GTX 260 and the GTX 280, they will likewise bring down the price for the 9800 GX2. This is already the fastest single card solution, and it sells for less than the GTX 280. If this card starts selling for under $400 (maybe around $350), will this become Nvidia's best answer to the 4870?

Given the performance and the prices for the 4870 and the 9800 GX2, will Nvidia be able to price the GTX 280 competitively, or will it simply be a vanity product - ridiculously priced and produced only in very small numbers?

It should be interesting to see where the prices for video cards end up over the course of the next few weeks.

kelmerp - Wednesday, June 25, 2008 - link

Better HD knickknacks? Better offloading/upscaling?

chizow - Wednesday, June 25, 2008 - link

The HD4000 series have better HDMI sound support with 8ch LPCM over HDMI, but still can't pass uncompressed bitstreams. Image quality hasn't changed as there isn't really any room to improve.

kelmerp - Wednesday, June 25, 2008 - link

It would be nice to have a video card, where it doesn't matter how weak the current-gen processor is (say the lowliest celeron available), the card can still output 1080p HDTV without dropping any frames.

Chaser - Wednesday, June 25, 2008 - link

Good to have back at the FRONT of the finish line.

JPForums - Wednesday, June 25, 2008 - link

Regarding the SLI scaling in Witcher:

The GTX 280 SLI setup may be running into a bottleneck or driver issues, rather than seeing inherent scaling issues. Consider, the 9800 GTX+ SLI setup scales from 22.9 to 44.5. So the scaling isn't an inherent SLI scaling problem. Though it may point to scaling issues specific to the GTX 280, it is more likely that the problem lies elsewhere. I do, however, agree with your general statement that when CF is working properly, it tends to scale better. In my systems, it seems to require less CPU overhead.